Sasha Gusev

21.8K posts

@SashaGusevPosts

Statistical geneticist | Associate Prof at @DanaFarber / @harvardmed / @DFCIPopSci | Blogging at https://t.co/4D7UObBNdd

@DamienMorris So we now have three discordant estimates depending on the model -- the many CTD studies, your NTFD analysis, and this Twin Family result -- which one do you think is more reflective of the true population parameter and/or how should we reconcile them?

A journalist who does not dare mention genetics, thus misunderstands the findings: a dead father contributes as much to your future status as a live one. Why inherited wealth rarely survives the grandchildren thetimes.com/article/b737c0…

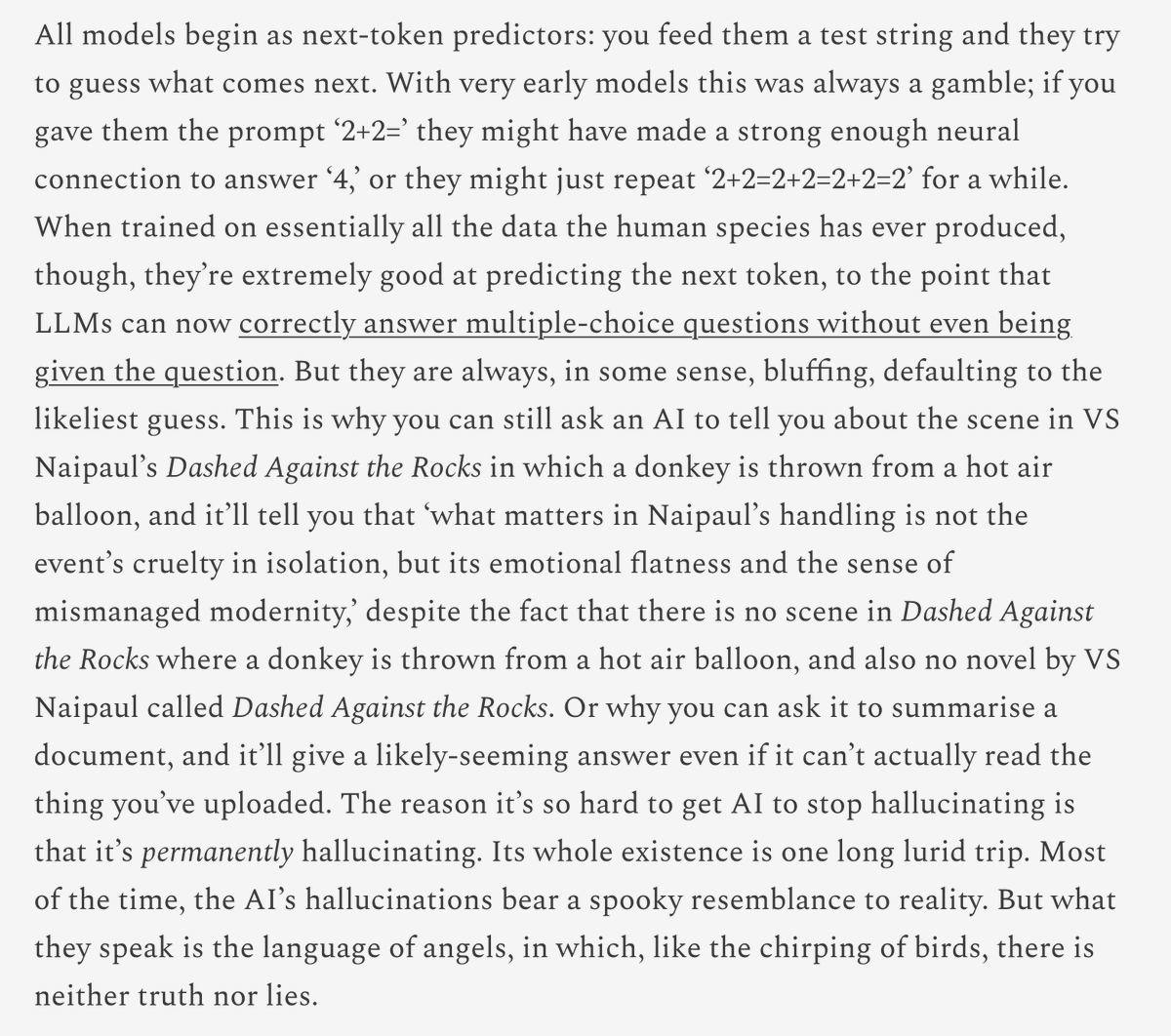

For now I think recent successes of AI for mathematics should be understood as a complement to, rather than a substitute for, human mathematical labor. This is because AI, at present, is most productive working horizontally, whereas humans work vertically. By this I mean that the highest quality AI mathematics thus far has been obtained by feeding entire problem lists into a model or scaffold and picking out the few high-quality successes. It is very hard to predict in advance where these successes occur. On the other hand, humans typically pick a few questions and try to understand them deeply--and historically, when they do so, they make progress! I think this points to increasing value of problem lists, and also suggests that "solved an open problem" is an increasingly useless proxy for what we care about in mathematics. There are a lot of problems that have sat open for a long time because the right person didn't happen to look at them, and many others that are open because they benchmark our failure to fundamentally understand some basic object. I've solved old open problems that I think had the former flavor rather than the latter. I think my best work, however, is not about solving long-open problems, but rather inventing new ones that help to understand something we care about, and making progress on that.

@Benthamsbulldog The gist of Boonin's view: imagine a pollutant released 100 years ago still having new effects -- these harms would be after the births of the harmed, even though the *source* of the harm was before those births, so there's no non-identity problem. Plausibly, slavery is the same.

Such a silly argument for so many reasons. One, wouldn’t out-of-training data be *less* likely to trigger the detector? Two, aren’t the LLMs writing the prose similarly biased? It’s such a shame that a certain kind of institutional liberal instinctively reaches for identity as a shield in situations like these. It’s really damaging for when people need to actually talk about discrimination.

There seems to be an accusation here that @pangramlabs only used work that comes from the “dominant culture” and therefore it’s unreliable at measuring text used in the context of this prize, which aims to reward underserved communities.

This paper is a goldmine on scientific self-experimentation.

- 14 Nobel Prizes have gone to self-experimenters.
- Of 465 scientific self-experiments documented over a 203-year period, there have been 8 deaths.
- The most recent recorded death from a self-experiment was in 1928, when Alexander Bogdanov injected himself with an incompatible blood type.
- Many universities say that self-experimentation would require IRB approval because it violates "ethical norms for medical research," which is not true; the Nuremberg Principles make an explicit exception for people experimenting on themselves, and the Declaration of Helsinki just says the subject must consent. Also, "there is no law nor regulation identified that requires investigators experimenting on themselves to consult an ethics committee."
- There are lots of recent self-experiments; "In 2014, Philip Kennedy had electrodes implanted into his speech center to further his research on direct brain interfaces. In 2016, Alex Zhavoronkov self tested drugs which his software algorithms identified as likely candidates."

Elon Musk says work-from-home is "morally wrong" — here's why. cnb.cx/3WfsSWX