StuartFloridian 🌅


@StuartFloridian

Positively correlated to AI's verifiable reward signals: Code, math, stocks, & beach shorelines! ⛵⚓🌅

Introducing Gemini 3.1 Flash TTS 🗣️, our latest text-to-speech model with scene direction, speaker-level specificity, audio tags, more natural and expressive voices, and support for 70 languages. Available via our new audio playground in AI Studio and in the Gemini API!

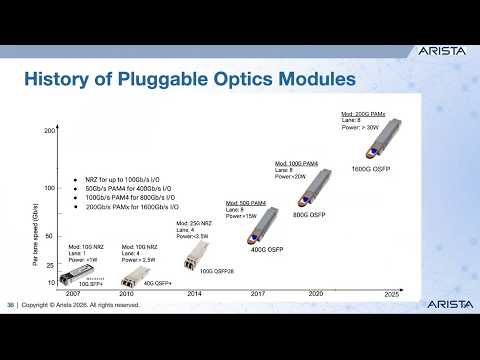

You want scale? 960,000 NVIDIA Rubin GPUs in a multisite cluster.

“At Google Cloud Next, Google announced A5X powered by NVIDIA Vera Rubin NVL72 rack-scale systems, which — through extreme codesign across chips, systems and software — deliver up to 10x lower inference cost per token and 10x higher token throughput per megawatt than the prior generation. A5X will use NVIDIA ConnectX-9 SuperNICs, combined with next-generation Google Virgo networking, scaling to up to 80,000 NVIDIA Rubin GPUs within a single site cluster and up to 960,000 NVIDIA Rubin GPUs in a multisite cluster, enabling customers to run their largest AI workloads on NVIDIA-optimized infrastructure”

blogs.nvidia.com/blog/google-cl…

Google is on its way to building the best personal intelligence. Most of us already use Gmail, Drive, Google Photos, YouTube, and Search. Gemini Personal Intelligence is still in its early stages, yet it’s already performing extremely well. In a year or two, this could get so good that I don’t see myself using anything else for personal intelligence. I ran a few more tests: it can pull your YouTube history, recommend things based on your interests, show photos from Google Photos, check your email, and so on.