💀Sygo



@Sygo__

AI-assisted visionary, filmmaker, producer. 2043 IP. CPP: Capcut, ImagineArt, Astra, Dreamina. #Undead2043 Discord: https://t.co/3b9TC17wJO

So, what's THE BEST tool for cloning a voice and getting decent text-to-speech? Asking for a friend :)

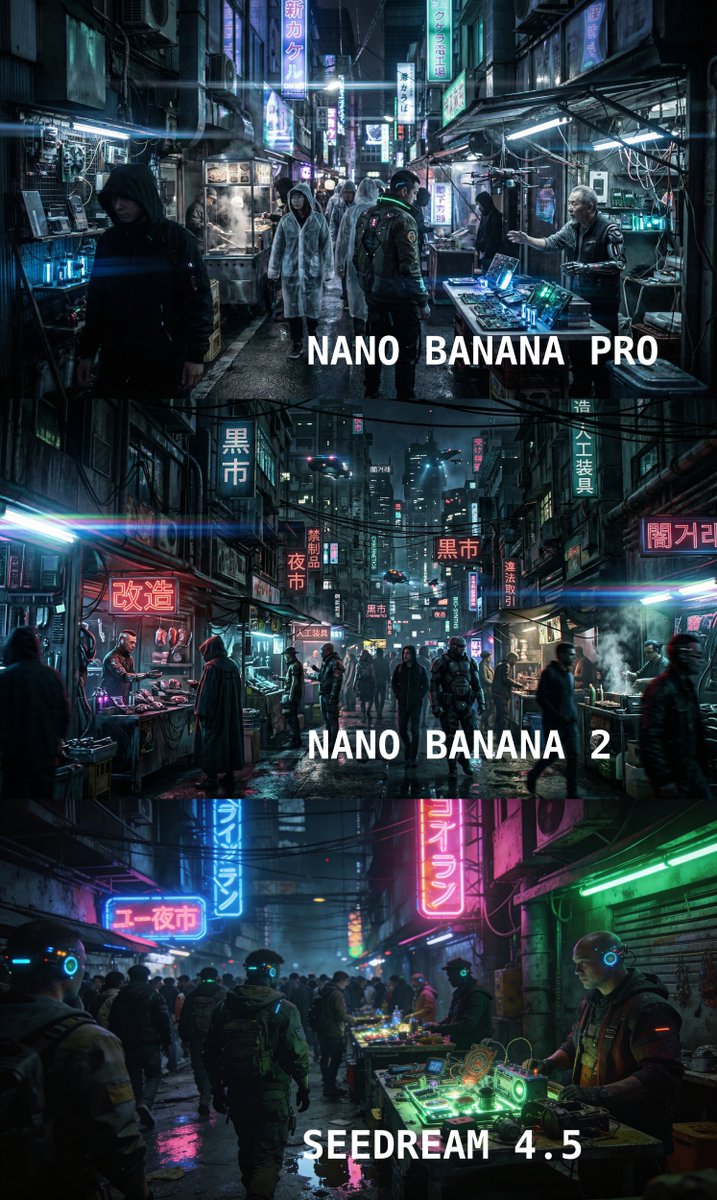

This time, instead of testing a futuristic outdoor scene, I ran the same prompt across Nanobanana 2, Nanobanana Pro, and Seedream 4.5 to generate a full-body character in a neutral studio setup. The goal: a clean photographic reference suitable for video production, with a detailed clothing description and strong prompt adherence.

Here's what I observed:
- Seedream 4.5, which performed strongly in environmental composition, tends to exaggerate proportions (those boots lol) and stylize facial features in this scenario, leaning slightly toward caricature.
- Nanobanana Pro delivers solid realism and good clothing interpretation. It gives the most "fashionable" result.
- Nanobanana 2 shows the strongest prompt adherence, better balance in body proportions, and a more cinematic photographic feel overall.

When generating reference-ready characters for video workflows, precision and consistency matter more than spectacle.

The takeaway? Model performance shifts dramatically depending on context. Strength in environments does not automatically translate to character fidelity.

Thanks to @ImagineArt_X for enabling these comparative tests through the Creative Partner Program. Prompt in ALT.

⚡ WE ARE SO BACK 🔥 Magnific Upscaler for Video 🔥 (BETA TESTING) Seedance at 720p wasn't cool. You know what's cool? Seedance at 4K! FINALLY. The most anticipated Magnific feature of all time 🧵👇