Yangjun Ruan

246 posts

@YangjunR

Creating @thinkymachines | @UofT @stanfordAILab @VectorInst

Every conversation I have had IRL with @WilliamBarrHeld has been him obsessing over scaling laws. The man is on a mission. Can't wait to see 1e23!

How to get AI to make discoveries on open scientific problems? Most methods just improve the prompt with more attempts. But the AI itself doesn't improve. With test-time training, AI can continue to learn on the problem it’s trying to solve: test-time-training.github.io/discover.pdf

LLM memory is considered one of the hardest problems in AI. All we have today are endless hacks and workarounds. But the root solution has always been right in front of us. Next-token prediction is already an effective compressor. We don’t need a radical new architecture. The missing piece is to continue training the model at test-time, using context as training data. Our full release of End-to-End Test-Time Training (TTT-E2E) with @NVIDIAAI, @AsteraInstitute, and @StanfordAILab is now available. Blog: nvda.ws/4syfyMN Arxiv: arxiv.org/abs/2512.23675 This has been over a year in the making with @arnuvtandon and an incredible team.

Benchmarking data is dominated by a single “General Capability” dimension. Is this due to good generalization across tasks, or to developers pushing on all benchmarks at once? 🧵 with some analysis, including the discovery of a “Claudiness” dimension.

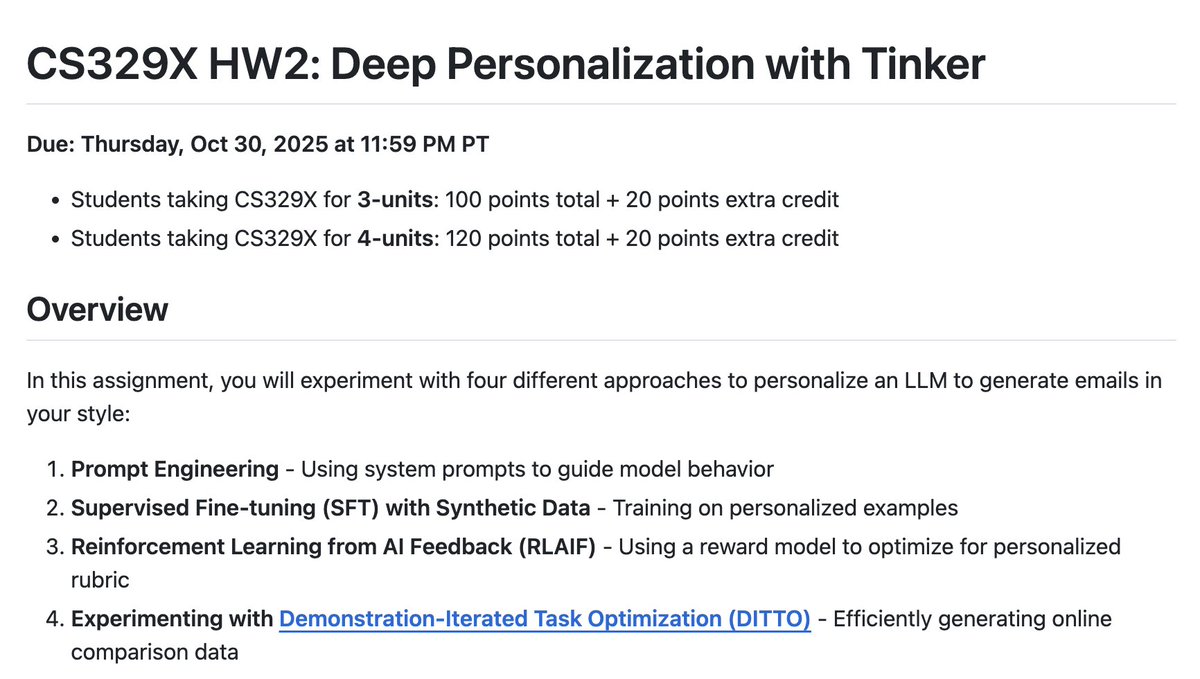

It's not even been a month since @thinkymachines released Tinker & Stanford already has an assignment on it

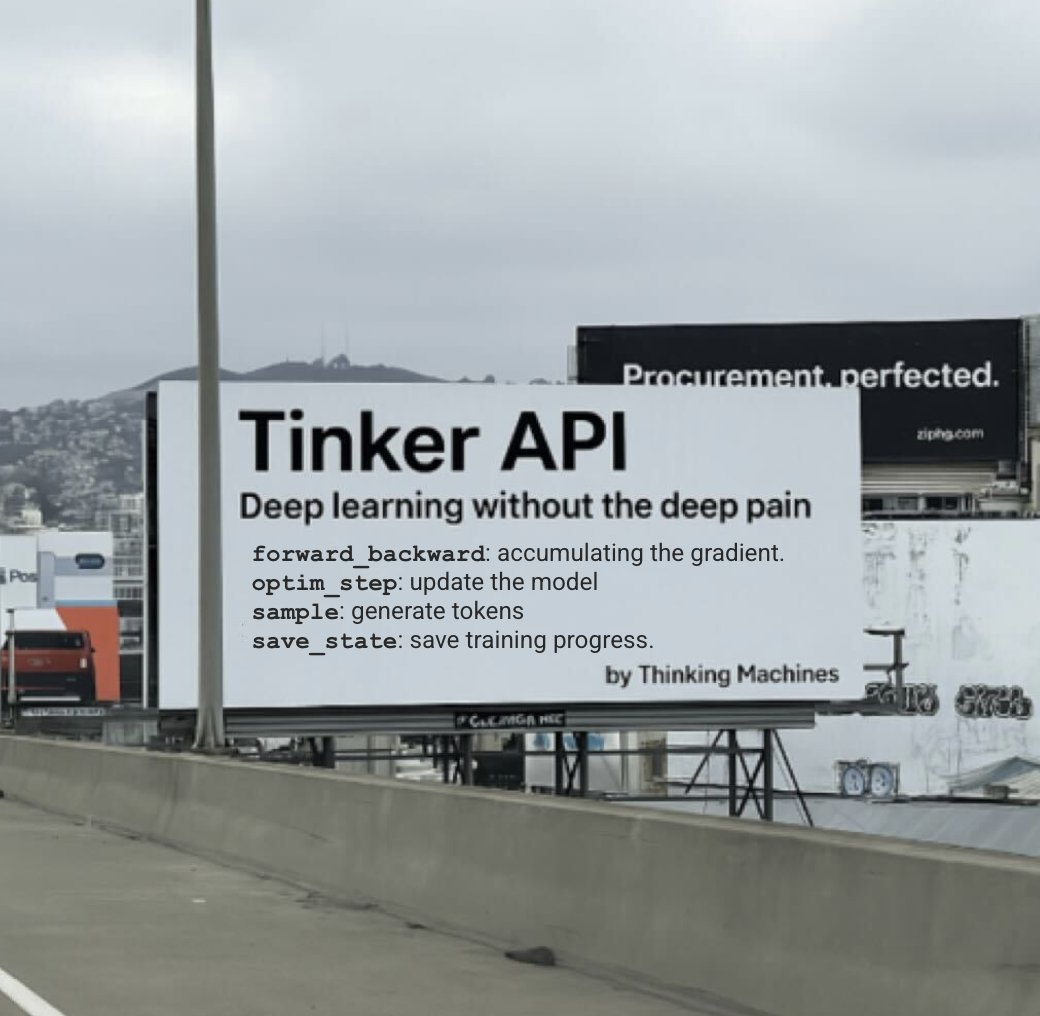

Introducing Tinker: a flexible API for fine-tuning language models. Write training loops in Python on your laptop; we'll run them on distributed GPUs. Private beta starts today. We can't wait to see what researchers and developers build with cutting-edge open models! thinkingmachines.ai/tinker