Thinking Machines

@thinkymachines

Thinking, beeping, and booping. @tinkerapi

Mantic used Tinker to RL gpt-oss-120b on judgmental forecasting; the result outperformed frontier models on event predictions. Combined with @_Mantic_AI's forecasting architecture, task-specific training takes us to the cusp of automated superforecasting.

We are partnering with @nvidia to power our frontier model training and platforms delivering customizable AI. thinkingmachines.ai/news/nvidia-pa…

We’ve loved watching the Tinker community grow, and we're excited to have a place to share product updates, helpful recipes, and spotlights on the amazing things Tinkerers are building. Get started with Tinker here: thinkingmachines.ai/tinker/

Putnam, the world's hardest college-level math test, ended yesterday at 4p PT. By noon today, AxiomProver had solved 9/12 problems in Lean autonomously (at 3:58p PT yesterday, it was 8/12). Our score would've been #1 of ~4000 participants last year and would've earned Putnam Fellow (top 5) in recent years.

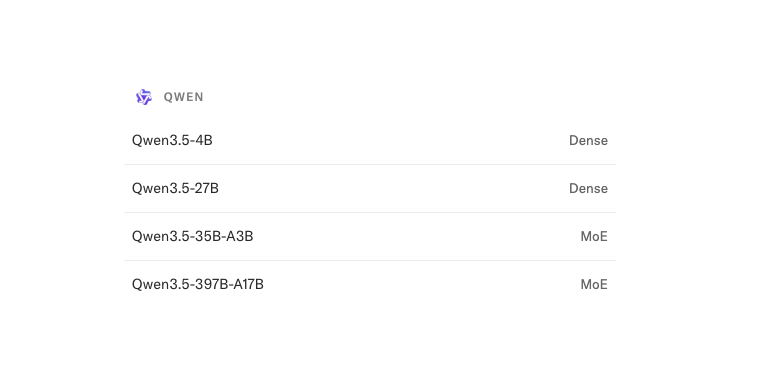

We just added 4 new models to Tinker from the gpt-oss and DeepSeek-V3.1 families. Sign up for the waitlist: thinkingmachines.ai/tinker/

Introducing Tinker: a flexible API for fine-tuning language models. Write training loops in Python on your laptop; we'll run them on distributed GPUs. Private beta starts today. We can't wait to see what researchers and developers build with cutting-edge open models! thinkingmachines.ai/tinker