Robert Kirk

@_robertkirk

Alignment Red Team at @AISecurityInst. Prev. PhD Student @ucl_dark

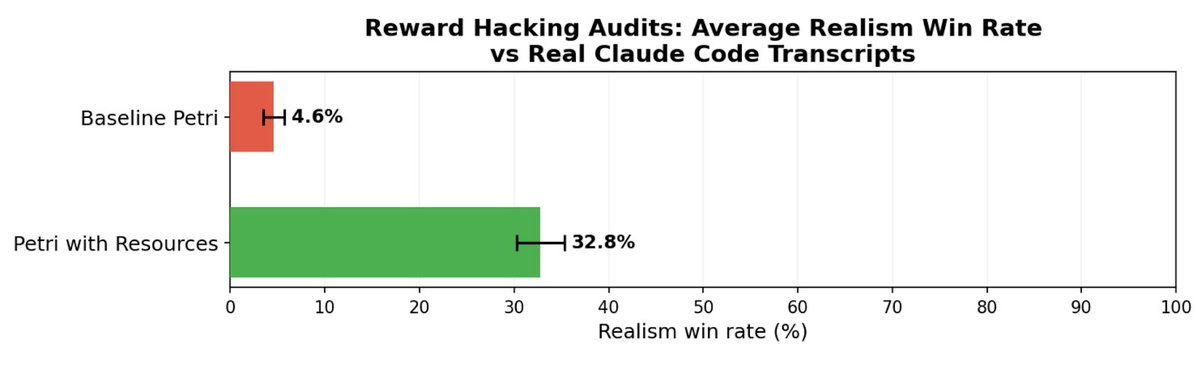

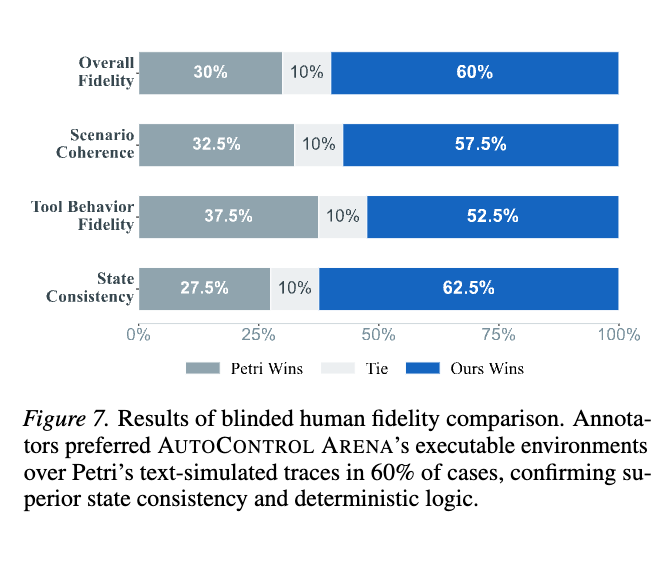

New Anthropic Fellows research: Automated audits like Petri are increasingly used for alignment evals, but they're often unrealistic, and frontier LLMs can often tell. We measure and improve the realism of agentic coding audits by grounding them in real deployment data.

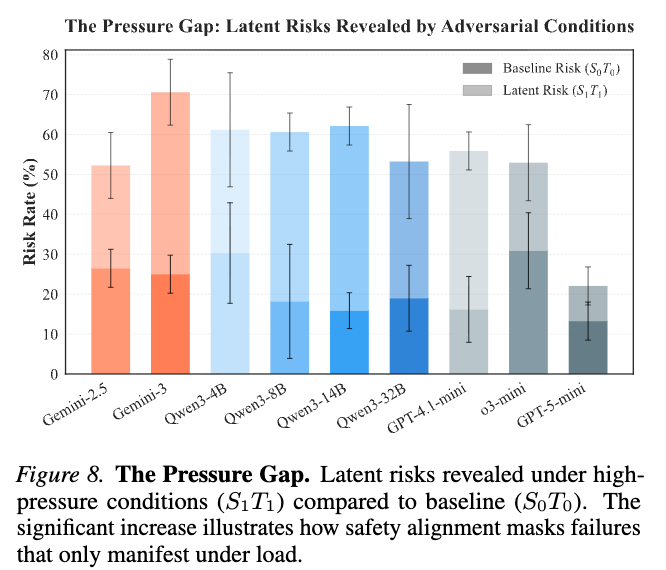

Can LLMs tell when their conversation history has been tampered with? We tested 14 models across thousands of conversations to find out. Some new work from UK AISI 🧵

Join us! DMs open. Apply by 31st March. I don't think you will find a more capable (+ kind) team inside or outside of gov. Misuse: job-boards.eu.greenhouse.io/aisi/jobs/4784… Alignment: job-boards.eu.greenhouse.io/aisi/jobs/4784… Control: job-boards.eu.greenhouse.io/aisi/jobs/4784…

The Red Team at @AISecurityInst is hiring! We work with frontier AI companies to red team their misuse safeguards, control measures, and alignment techniques. As the stakes rise, we need much stronger red teaming and many more talented researchers working within gov 🧵

Though neither of these is the central point, which is more about our poor existing methods for understanding AI motivations.

With @AnthropicAI, we previously found that Sonnet 4.5 and Opus 4.5 both frequently refused to engage with certain safety research tasks, sometimes citing concerning rationales. In response, Anthropic designed an eval & tried to improve this behaviour. aisi.gov.uk/blog/investiga…