Charles Foster

@CFGeek

Excels at reasoning & tool use🪄 Tensor-enjoyer 🧪 @METR_Evals. My COI policy is available under “Disclosures” at https://t.co/bihrMIUKJq

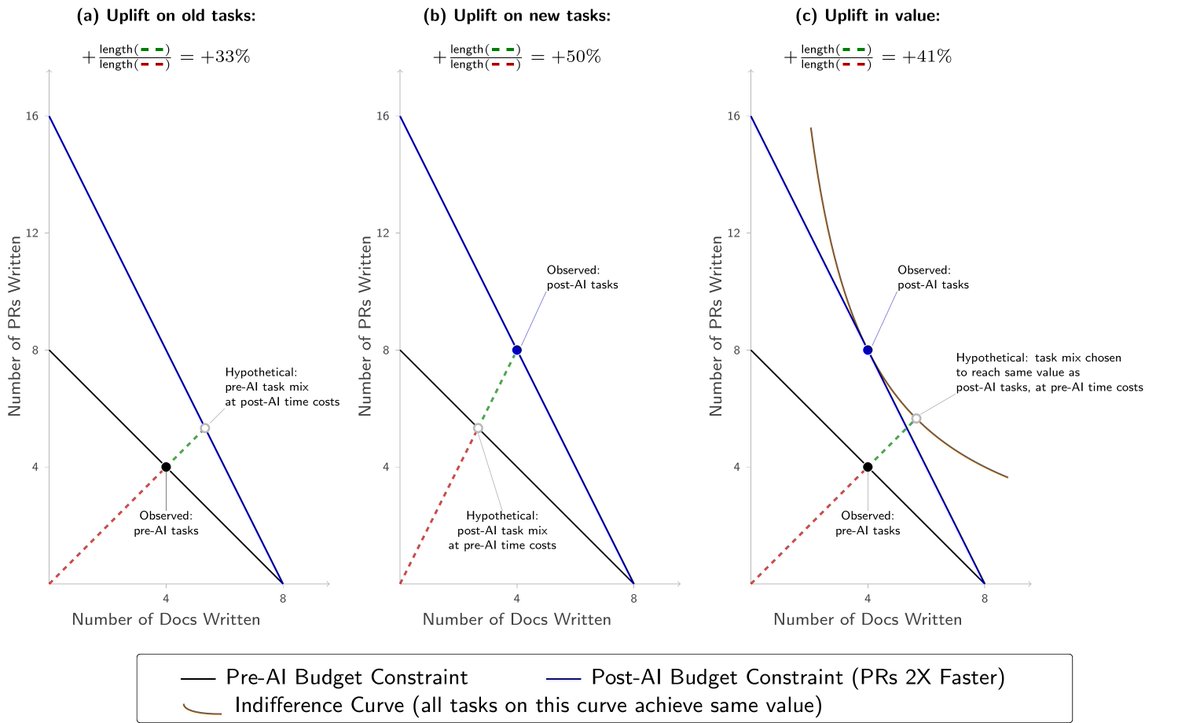

Our evaluations show that frontier AI's cyber capabilities are advancing quickly. The length of cyber tasks frontier models can complete has been doubling every few months, and this rate has become faster over time, with recent models exceeding our previous trends. 🧵
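As a rough illustration of what "doubling every few months" implies, here is a minimal sketch of steady exponential growth in task length. The function names and the example doubling time are hypothetical; the post gives no exact rate, and it notes the rate itself has been speeding up, which a fixed doubling time does not capture.

```python
import math


def horizon_after(h0_hours: float, doubling_months: float, elapsed_months: float) -> float:
    """Task length reachable after `elapsed_months`, assuming a constant doubling time."""
    return h0_hours * 2 ** (elapsed_months / doubling_months)


def months_to_reach(h0_hours: float, target_hours: float, doubling_months: float) -> float:
    """Months needed to grow from `h0_hours` to `target_hours` at a constant doubling time."""
    return doubling_months * math.log2(target_hours / h0_hours)


if __name__ == "__main__":
    # Hypothetical numbers chosen only for illustration: a 4-month doubling time.
    print(horizon_after(h0_hours=1.0, doubling_months=4.0, elapsed_months=8.0))
    print(months_to_reach(h0_hours=2.0, target_hours=16.0, doubling_months=4.0))
```

Because the growth is exponential, small changes in the assumed doubling time move long-range projections a lot, so any extrapolation of this kind should be read loosely.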

We evaluated an early version of Claude Mythos Preview for risk assessment during a limited window in March 2026. We estimated a 50%-time-horizon of at least 16hrs (95% CI 8.5hrs to 55hrs) on our task suite, at the upper end of what we can measure without new tasks.

Of the 228 tasks in our suite, only 5 are estimated as 16+ hours long, making measurements at this range unstable and less meaningful than at ranges with better task coverage. Thus, we are not highlighting exact estimates for models above 16 hours measured with our current suite.
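For readers unfamiliar with the term, a 50%-time-horizon can be thought of as the task length at which a fitted success curve crosses 50%. The sketch below assumes a simple logistic fit of success against log2 task length, using plain gradient ascent. It is not the evaluators' actual pipeline, and the task lengths and outcomes are made-up toy data, not results from the suite.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(hours, successes, lr=0.1, steps=20000):
    """Fit P(success) = sigmoid(a + b * log2(hours)) by gradient ascent on the mean log-likelihood."""
    xs = [math.log2(h) for h in hours]
    a, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for x, y in zip(xs, successes):
            err = y - sigmoid(a + b * x)  # residual drives both gradients
            grad_a += err
            grad_b += err * x
        a += lr * grad_a / n
        b += lr * grad_b / n
    return a, b


def time_horizon_50(a: float, b: float) -> float:
    """Task length (hours) where the fitted success probability equals 0.5."""
    return 2 ** (-a / b)


# Toy data (hypothetical): task lengths in hours and whether the model succeeded.
toy_hours = [0.25, 0.5, 1, 2, 4, 8, 16, 32]
toy_success = [1, 1, 1, 1, 0, 1, 0, 0]

a, b = fit_logistic(toy_hours, toy_success)
print(f"estimated 50% time horizon: {time_horizon_50(a, b):.1f} hours")
```

With only a handful of tasks at the long end, the fitted crossing point there is pinned down by very few observations, which is one way to see why estimates above 16 hours are described as unstable.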

Directly rewarding or penalizing CoTs can make models’ reasoning traces less informative for detecting misalignment. That’s why we treat avoiding CoT grading as an important part of preserving monitorability. We recently built an automated detection system to find cases where RL rewards were computed using model CoTs.