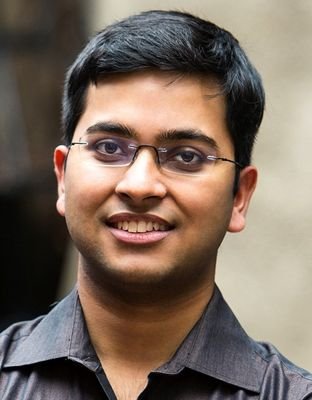

Our paper has been accepted for Oral presentation at #CVPR 🎉🎉 Kudos to the team: @karan_uppal3, @abhinav_java. See you in Denver! On a side note, I am finishing my fellowship by June and looking for full-time research roles. DMs open.

Abhinav Java

69 posts

@abhinav_java

navigating the sea of entropy | RF @Microsoft Research | Prev @Adobe, @dtu_delhi | Hala Madrid!

New paper: Understanding Task Transfer in Vision–Language Models. How does finetuning a model on one task affect its performance on other tasks? @karan_uppal3 and @abhinav_java are presenting this work at Unireps, NeurIPS!! 📍 Ballroom 20D ⏰ 3:45 PM – 5:00 PM. Come and say Hi! 🧵

After 24 hours of complete silence except for that single social media statement, ICLR has now decided to disregard all author and reviewer discussions during the two week rebuttal period 🤣 Quite a surprising way to wrap up this year’s review process

Shout out to @DSPyOSS GEPA (From 20:15). cc @LakshyAAAgrawal

Launch Day 3 of 6: Layout Model Updates 🚀 If your doc parser gets layout wrong, everything downstream breaks. ⚠️ Reading order scrambled → unusable output ⚠️ Blocks missed → footnotes, financial figures, legal text lost We just rolled out major layout upgrades at Datalab to fix this at the root.

🔍 What is deep research & how can AI master it? Join us for a fireside chat on a new benchmark LiveDRBench w/: 🎙 @abhinav_java of @Microsoft Research India 🎙 @Ceciletamura of @ploutosai world.ploutos.dev/stream/crystal…

TL;DR 🧠 🔹 Optimized ReAct Prompting + 🔹 SFT to exploit test-time compute + 🔹 RL finetuning to learn when to stop ➡️ Only 1k training examples ⚡ Lower inference latency 📄 arxiv.org/abs/2507.07634…