Nick

2.2K posts

@roboflock

techno-optimist 🚀 | INTJ | all-in TSLA/X.AI/Neuralink | future shepherd of robots 🐑 | Get your Tesla with 500€ off: https://t.co/MFw7eZblE0

Humans saw stones and sticks and decided to make this

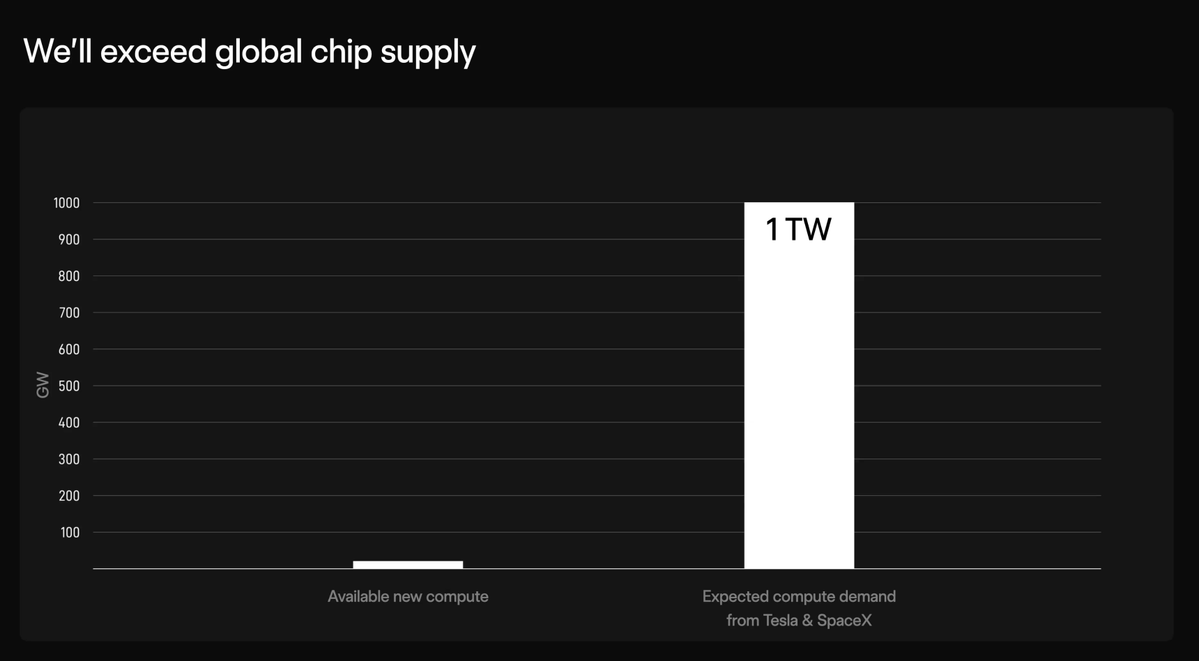

SpaceX Compute in Space

What exactly does a grid of 1 million compute satellites buy you?

Each “AI Sat Mini” is expected to deliver 100 kW of compute, roughly equivalent to 400 AI5 processors running at 250 W each*. Scale that to 1 million satellites, and you get 100 GW of total compute power.

For context, today the xAI Colossus cluster is on the order of 2 GW, and it’s already massive. This system would be ~50× larger.

Importantly, this would primarily be inference compute, not a tightly coupled training cluster. In other words, this capacity is dedicated to serving real-world requests, not model training. Today, those requests are mostly:

- AI assistant queries
- Image generation
- Video generation

Let’s focus on LLM inference (text queries). Each satellite uses 100 kW × 24 hours = 2,400 kWh per day. That’s roughly equivalent to ~30 fully charged Tesla vehicles (assuming ~80 kWh per vehicle).

If we assume 0.5 Wh per query (reasonable for detailed or technical prompts), each satellite can handle ~4.8 million queries per day. Across a 1-million-satellite constellation, that is ~4.8 trillion queries per day, or ~600 such queries per day for every person on Earth (assuming ~8 billion people).

Of course, inference cost varies widely. Video generation or complex multi-agent workloads, like those seen in frontier systems such as Grok 4.20, consume significantly more compute. But even with that variability, the scale here is the point.

*SpaceX will use a new chip from the Tesla/SpaceX Terafab collaboration, called the “D2,” for space-based compute. There are no specs available yet. AI5 is for Robotaxi (FSD) and Optimus.
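For anyone who wants to check the math, here is a rough Python sketch of the back-of-envelope numbers above. Every input is taken from the post except the world population (~8 billion), which is an assumption, and the function and variable names are made up for illustration. It also treats all of a satellite's 100 kW as going to text queries around the clock, which is the same simplification the post makes.

```python
# Back-of-envelope check of the constellation figures quoted in the post.
# All inputs come from the post, except WORLD_POPULATION (an assumption).

SATELLITES = 1_000_000
POWER_PER_SAT_KW = 100            # "AI Sat Mini" compute power
WH_PER_QUERY = 0.5                # the post's per-query energy assumption
COLOSSUS_GW = 2                   # rough size of xAI Colossus per the post
TESLA_PACK_KWH = 80               # per-vehicle battery assumed in the post
WORLD_POPULATION = 8_000_000_000  # assumption, not stated in the post


def constellation_estimate():
    """Return the derived figures as a dict (names are illustrative)."""
    total_gw = SATELLITES * POWER_PER_SAT_KW / 1_000_000   # kW -> GW
    kwh_per_sat_per_day = POWER_PER_SAT_KW * 24
    queries_per_sat = kwh_per_sat_per_day * 1_000 / WH_PER_QUERY
    queries_total = queries_per_sat * SATELLITES
    return {
        "total_gw": total_gw,
        "times_colossus": total_gw / COLOSSUS_GW,
        "kwh_per_sat_per_day": kwh_per_sat_per_day,
        "teslas_per_sat_per_day": kwh_per_sat_per_day / TESLA_PACK_KWH,
        "queries_per_sat_per_day": queries_per_sat,
        "queries_total_per_day": queries_total,
        "queries_per_person_per_day": queries_total / WORLD_POPULATION,
    }


if __name__ == "__main__":
    for name, value in constellation_estimate().items():
        print(f"{name}: {value:,.0f}")
```

Changing `WH_PER_QUERY` is the quickest way to see how sensitive the result is: the query counts scale inversely with it, so a workload that costs 100× more energy per request cuts the per-person figure by 100×.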

@MeekMill Brother just ask Claude to help you with Claude.