Rupesh Srivastava

@rupspace

Fully open LLM frontiers @MBZUAI IFM Silicon Valley. Previously (co)developed Highway Networks, Upside-Down RL, Bayesian Flow Networks, EvoTorch.

The main reason I don't like MoEs is just philosophical: I'm a big Occam's razor believer, and no one computed the actual brain/money cost of all in moe...

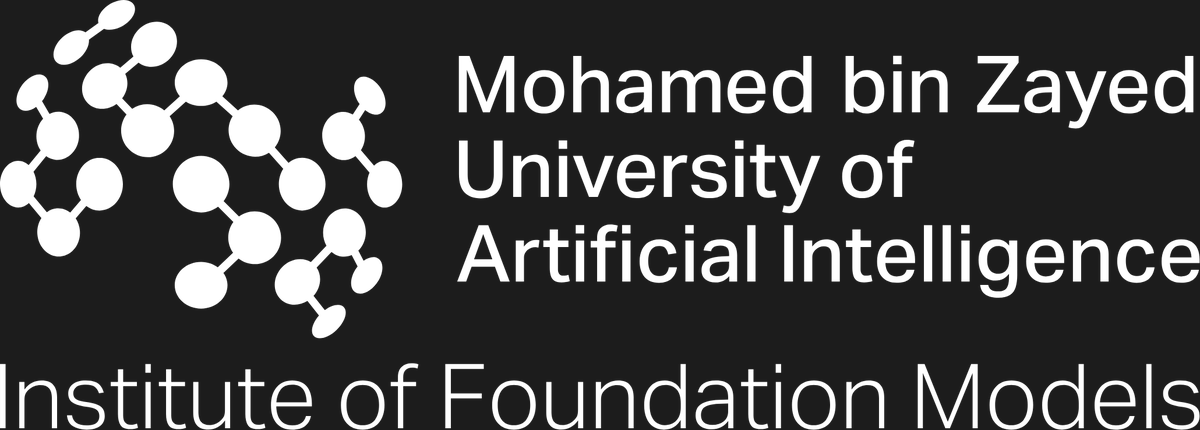

We introduce MoUE, a new MoE paradigm that boosts base-model performance by up to 1.3 points from scratch and up to 4.2 points on average, without increasing either activated parameters or total parameters. The main idea is simple: a sufficiently wide MoE layer with recursive reuse can be treated as a strict generalization of standard MoE. arxiv.org/abs/2603.04971 huggingface.co/papers/2603.04… #MoE #LLM #MixtureOfExperts #SparseModels #ScalingLaws #Modularity #UniversalTransformers #RecursiveComputation #ContinualPretraining

Terminal-Bench is a leading benchmark for agents. Unfortunately it’s hard: most small coding agents get very low scores on TB2, so training/system ablations look flat - you can't tell what's working. Announcing OpenThoughts-TBLite - 100 curated TB2-style tasks, difficulty-calibrated so even 8B models can make progress. It's designed to give researchers measurable signal during development, providing faster feedback for experimental iteration while closely tracking true TB2 performance🧵

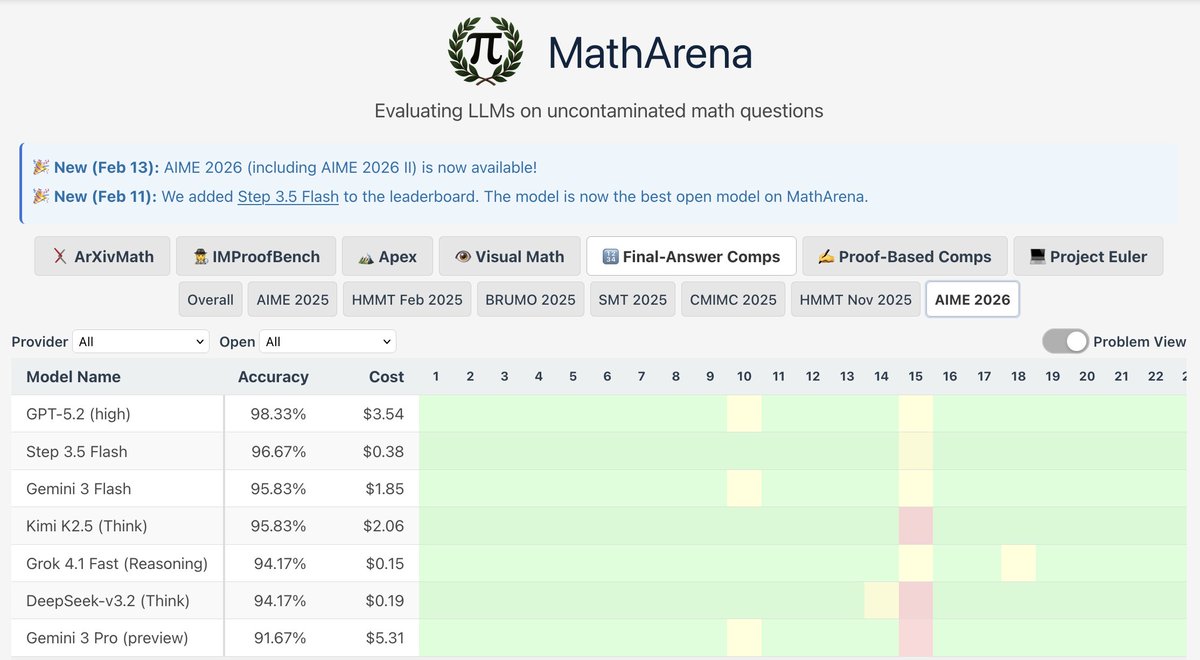

Link to MathArena: matharena.ai/?view=problem Link to HF: huggingface.co/datasets/MathA…

i think we don't realize the impact that deepseek had on the open ecosystem, there is so much from them that you can find in almost every frontier open llm today
> most of the open frontier models follow the "fine-grained + sparse + shared expert" deepseek moe recipe
> a lot of them use MLA
> first (with minicpm) to use sparse attention in prod (DSA)
> first to do reasoning in the open with R1
> GRPO, which is the foundation for most of the newer RL algorithms
> they also innovated on the training recipe at scale: first to do fp8? MTP? load balancing schemes that other labs are now using
> advanced training/inference infra with oss releases like DeepEP that pretraining libs like megatron use
i'm so grateful deepseek exists

ChatGPT 5.2 with thinking. The mutated strawberry problem. 🍓

Was surprised to learn that only 20% of the compute was spent on pre-training for a frontier model. The rest is post-training.