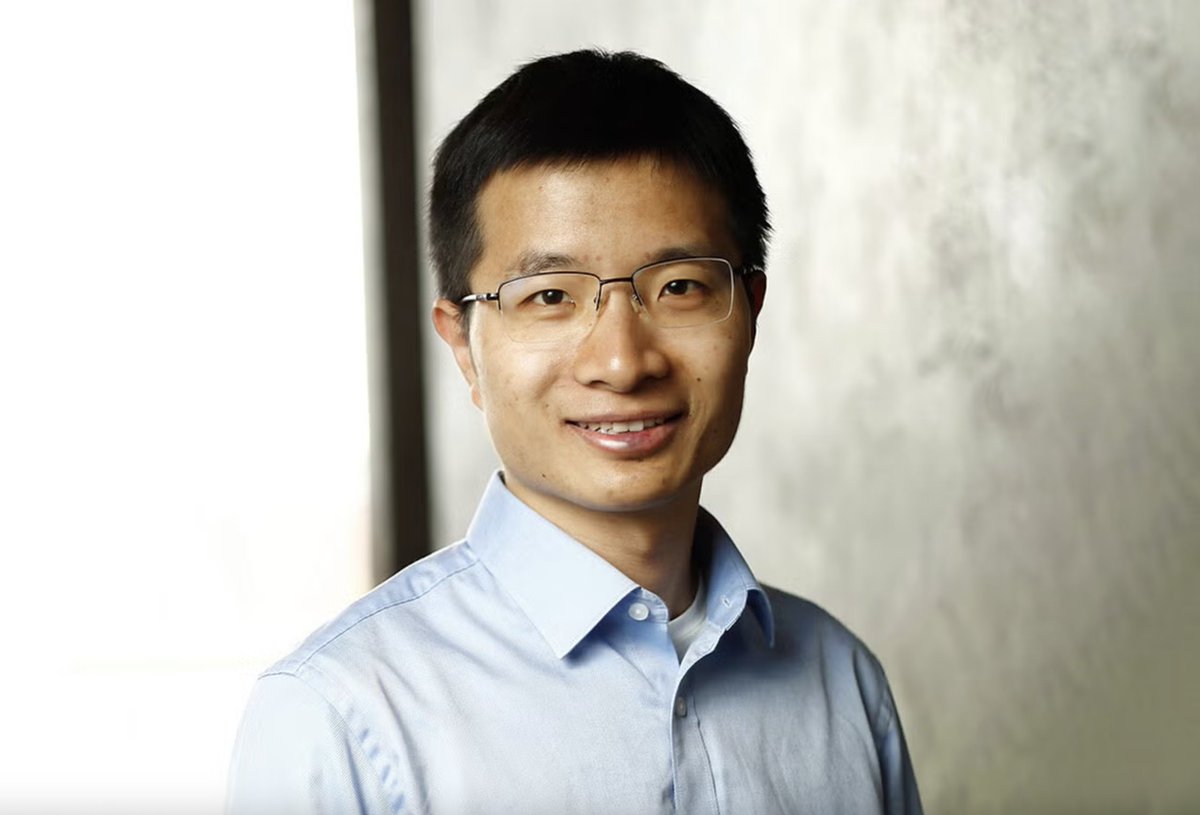

Jingfeng Wu

@uuujingfeng

Postdoc @SimonsInstitute @UCBerkeley; alumnus of @JohnsHopkins @PKU1898; DL theory, opt, and stat learning.

Congratulations to Prof. Weijie Su (@weijie444) from our Statistics and Data Science Department on being named the recipient of this year's Committee of Presidents of Statistical Societies (@COPSSNews) Presidents' Award: whr.tn/3ZToJu9

The honor is given annually to a young member of the statistical community in recognition of outstanding contributions to the profession of statistics. It's jointly sponsored by five statistical societies: @AmstatNews, @ENAR_ibs, @InstMathStat, @SSC_stat, and @WNAR_ibs.

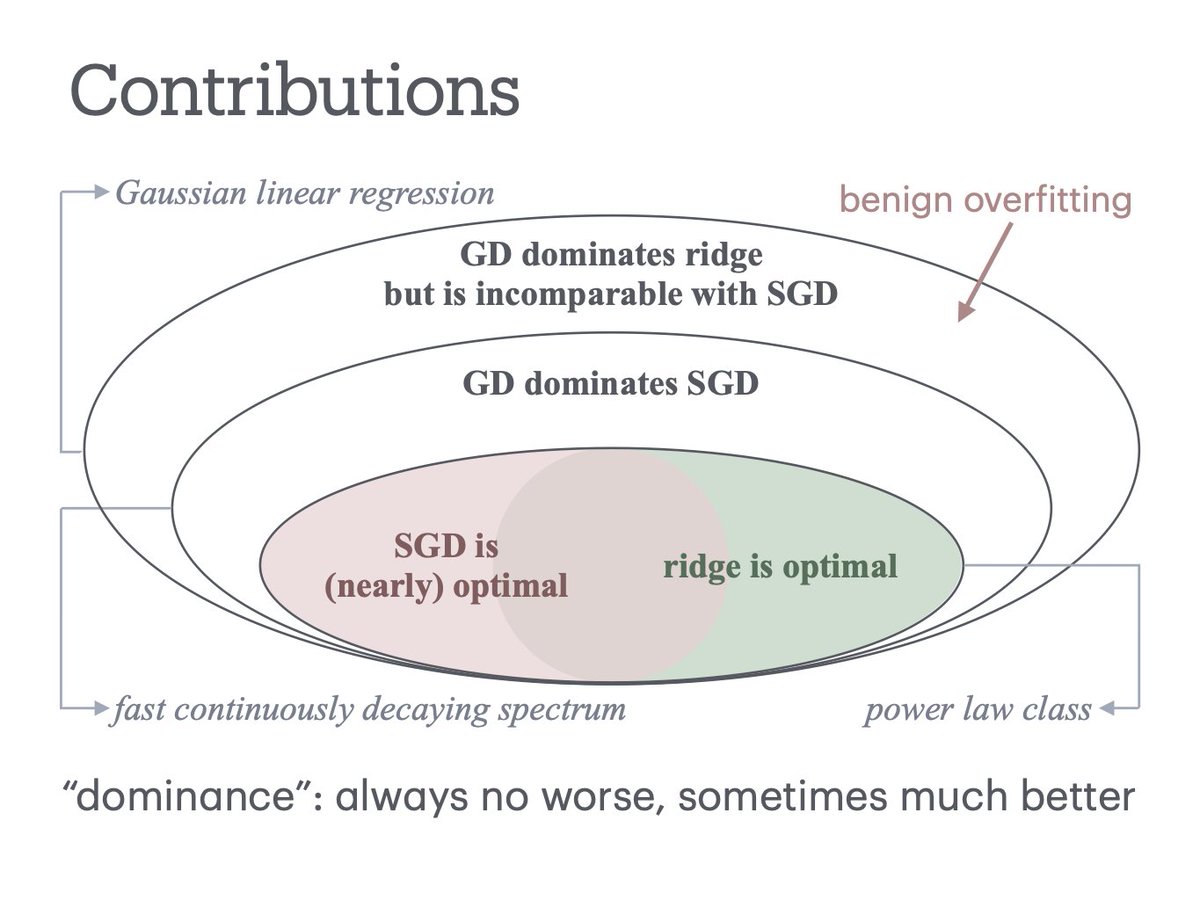

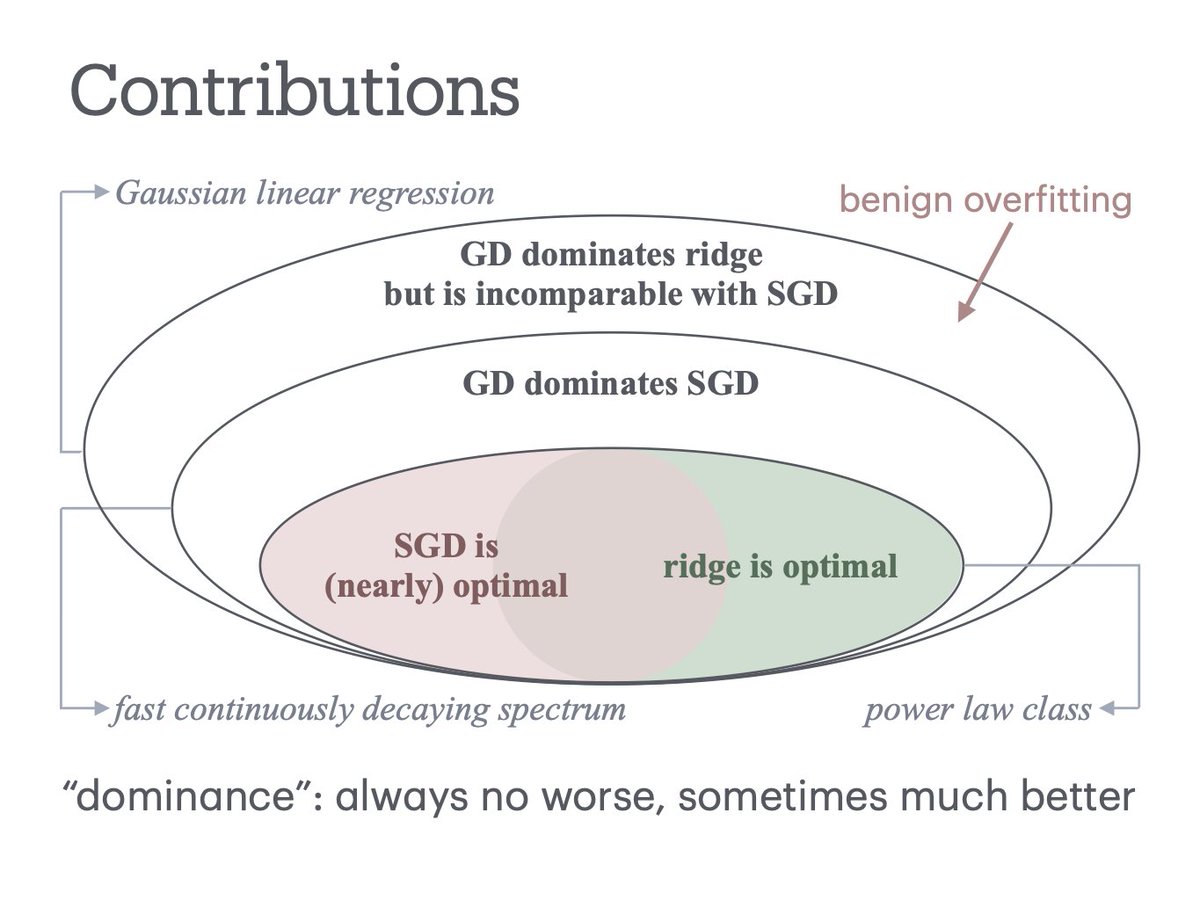

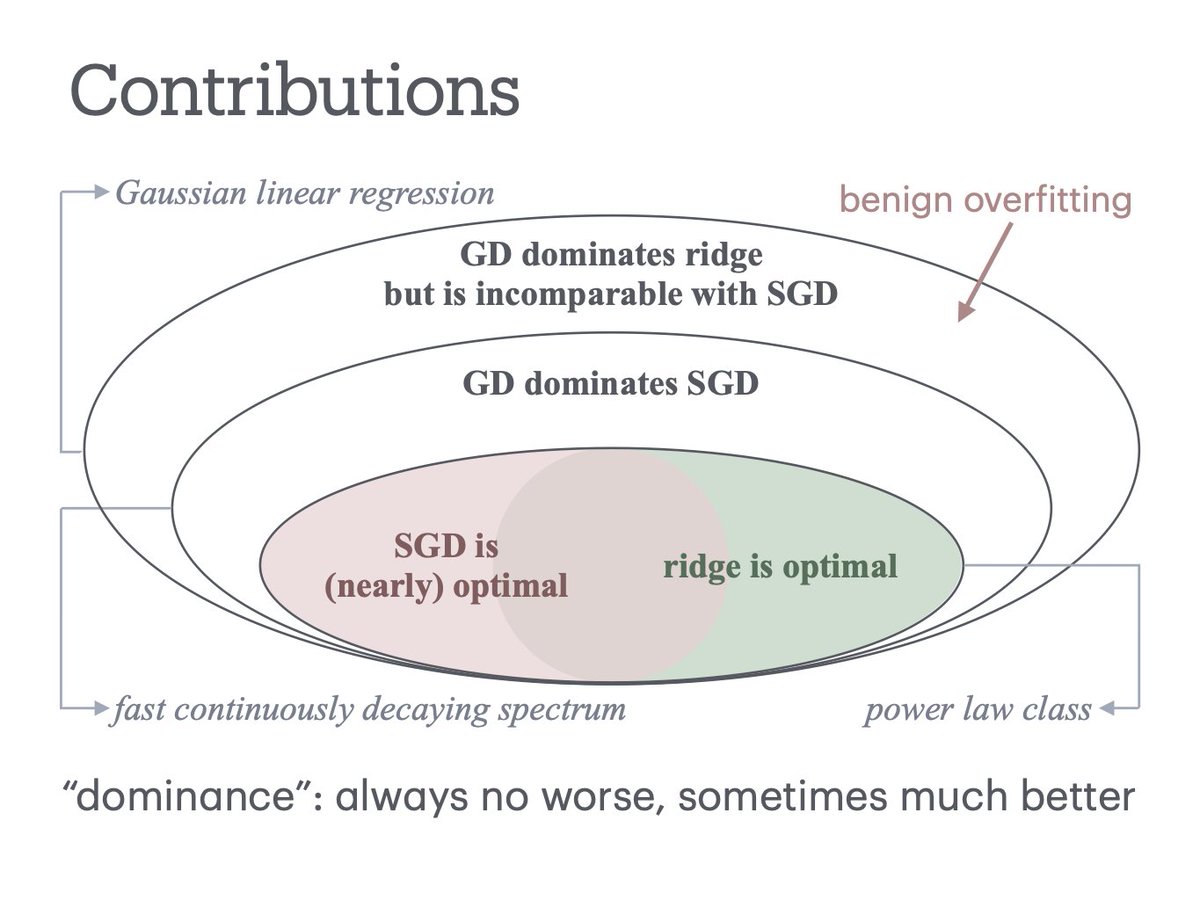

Sharing a new paper w/ Peter Bartlett, @jasondeanlee, @ShamKakade6, and Bin Yu. Ppl are talking about implicit regularization, but how good is it? We show it's surprisingly effective: GD dominates ridge for all linear regression, w/ more cool stuff on GD vs SGD. arxiv.org/abs/2509.17251