Shitty power trader


@DomResidAgg

who tf let a zoomer trade virtuals? up 4m ytd on dom:dom

Data center electricity demand is on pace to surpass today's entire grid by 2030, and communities across the country are already pushing back on the construction that would get us there. Scott's answer to that resistance is a concept called BYOE, Bring Your Own Electricity. "The data center boom represents the largest private capital investment in U.S. infrastructure in modern history. Instead of bringing only what's needed to cover their own consumption, what if data centers brought 110% and put the excess back on the grid, at or below cost? If a data center consumes 1 gigawatt of power, they should bring 1.1 gigawatts online, whether behind the meter or via a traditional grid deployment. The extra 0.1 gigawatts, or 100 MW, would be enough to power over 50,000 homes, and if put back on the grid for low cost, would reduce consumer electricity prices and strengthen our grid at no cost to the taxpayer. This approach is good for Americans and good for the companies building and operating data centers. It means lower electric bills for consumers, less resistance for data center developers, and a faster path to AI dominance for America." (Net Positive, @generalmatter)
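
The BYOE numbers can be checked in a few lines. This is a minimal sketch, assuming a flat 110% overbuild ratio; the helper name `byoe_surplus` is hypothetical, and the per-home figure is simply the surplus divided by the 50,000 homes cited in the quote, not a measured household load.

```python
def byoe_surplus(consumption_gw: float, overbuild_ratio: float = 1.10,
                 homes: int = 50_000) -> tuple[float, float, float]:
    """Hypothetical helper: size a BYOE build and the surplus it returns to the grid.

    Returns (capacity brought online in GW, surplus in MW, average kW per home
    that the surplus could supply if split across `homes` households).
    """
    capacity_gw = consumption_gw * overbuild_ratio
    surplus_mw = (capacity_gw - consumption_gw) * 1_000  # 1 GW = 1,000 MW
    kw_per_home = surplus_mw * 1_000 / homes             # 1 MW = 1,000 kW
    return capacity_gw, surplus_mw, kw_per_home


capacity, surplus, per_home = byoe_surplus(1.0)
print(f"bring online: {capacity:.1f} GW")
print(f"surplus:      {surplus:.0f} MW")
print(f"per home:     {per_home:.1f} kW average")
```

For a 1 GW data center this gives 1.1 GW online and a 100 MW surplus, or 2 kW per home across 50,000 homes. So "over 50,000 homes" holds as long as the average household draws less than about 2 kW.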

If you paid even a penny in federal income tax last year, you paid more than: Tesla, Southwest, Disney, Live Nation, HP, United, PayPal, CVS Health, Palantir, Citigroup, PG&E, 3M. That's right. They paid $0 in federal income tax. It's time for big corporations to pay their fair share.

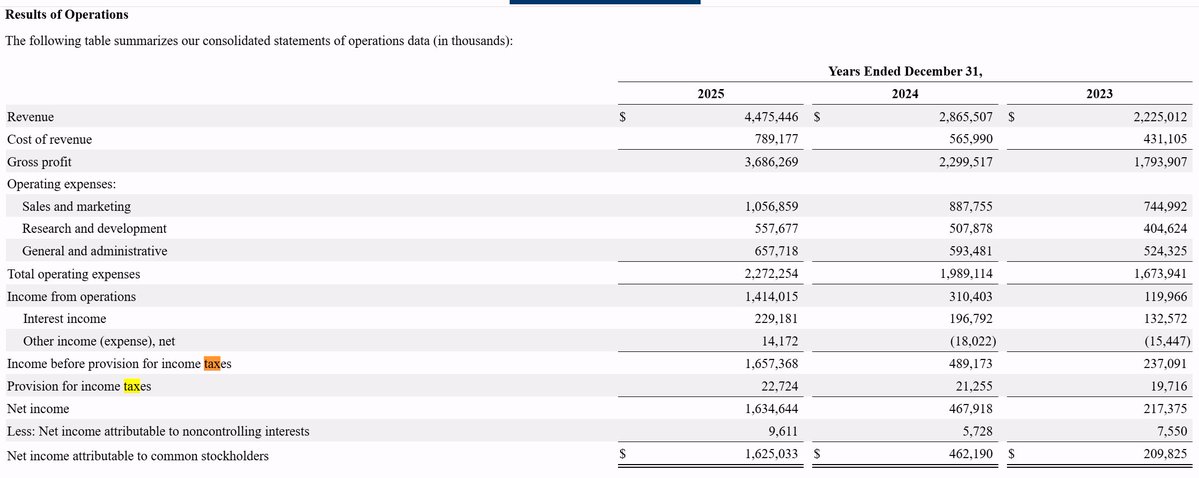

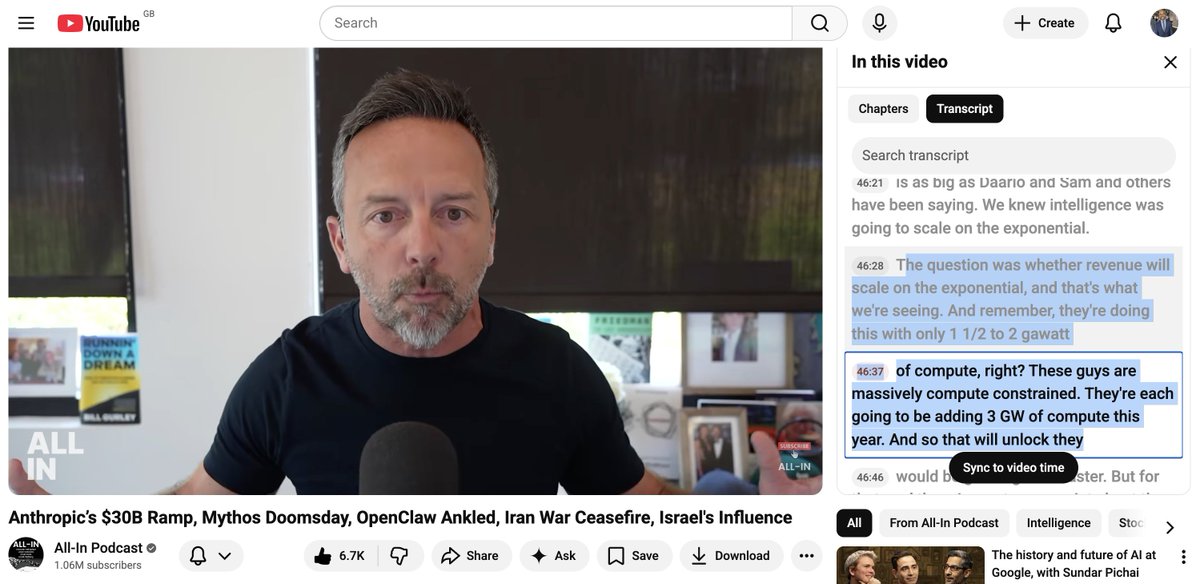

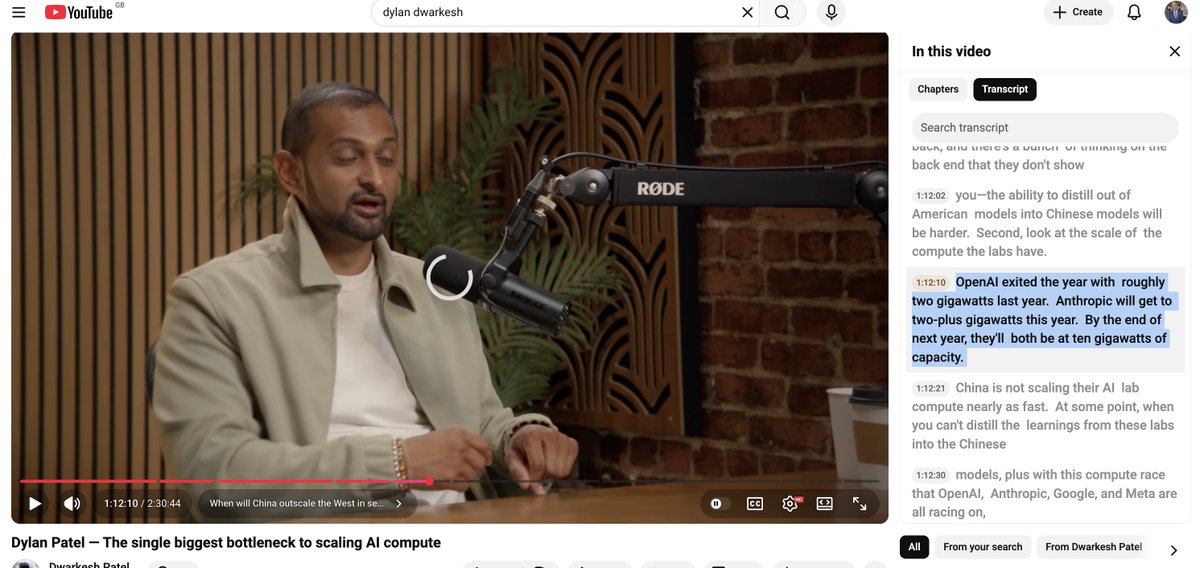

.@altcap had some insightful takes and some arguably new disclosures on OAI/Anthropic (he's an investor in both) worth tracking:

Anthropic's Revenue Ramp: Brad called this the fastest revenue explosion in technology history: a $1B run rate at the end of 2024, $4B by mid-2025, $9B by the end of 2025, then $30B by the end of March 2026. He noted they hit their year-end target by Q1. To contextualize the monthly adds, he said Anthropic added the equivalent of Databricks plus Palantir combined in a single month. He wouldn't be shocked if Anthropic exits the year at $80-100B in revenue.

"TAM for Intelligence" Thesis: Brad's central argument is that intelligence has a near-infinite TAM, fundamentally different from any prior technology market. He stressed this isn't zero-sum between Anthropic and OpenAI. Millions of self-interested actors (consumers, enterprises, and 1,000+ customers paying $1M+ annually) are all demanding the product simultaneously. It's the same Jevons paradox argument: the unit cost of intelligence is plummeting because model capability is surging, which drives more consumption.

Gross Margins and "Accidental Profitability": Brad pushed back hard on the narrative that these companies are bleeding cash. His logic: the biggest cost input is compute, and Anthropic only has ~1.5-2 GW of capacity. That compute cost is relatively fixed whether revenue is $1B or $80B, so gross margins are expanding "explosively." He suggested the companies may hit "accidental profitability" because they literally can't spend revenue fast enough on compute buildout. He also noted Anthropic has only 2,500 people, whereas Google had 120,000 when it crossed similar revenue thresholds. Inference costs are down 90% year over year.

Anthropic's Strategic Focus as Competitive Advantage: Brad credited Anthropic's discipline in saying no: no multimodal, no video, no hardware, no chips, no building data centers. They concentrated entirely on coding and co-work as the path to AGI/ASI, executed with 2,500 people all pulling in the same direction. That focus, combined with the coding lead, is what let them go from being "counted out" a year ago to dominating that market.

OpenAI Feeling Short-Term Pain but Still Optimistic: Brad said he's a buyer of OpenAI shares today despite the negative vibes (employees leaving, strategy questions, secondary-market trading below the last valuation). He called it "peak OpenAI FUD." His case starts with great researchers and models. The upcoming Spud model (the first Blackwell-trained model) is being previewed, and people are telling him it's on par with Mythos.

Gross vs. Net Revenue Distraction: Brad dismissed the gross vs. net revenue debate (Anthropic reportedly presents gross, OpenAI net). He said the hyperscaler distribution commissions are single-digit percentages of total revenue. Whether you haircut Anthropic by 5-10% or gross up OpenAI, the comparison is roughly apples-to-apples, and it's a distraction from the real story.
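
The revenue ramp and the gross-vs-net point are easy to sanity-check with the numbers quoted above. A minimal sketch, assuming a constant compounding rate between milestones (real growth is lumpier); the names `MILESTONES`, `implied_monthly_growth`, and `net_of_commission` are hypothetical, and the only inputs are the quoted run rates, the $80-100B year-end range, and the 5-10% haircut.

```python
# Run-rate milestones as quoted in the post (USD billions).
MILESTONES = [
    ("end 2024", 1.0),
    ("mid 2025", 4.0),
    ("end 2025", 9.0),
    ("end Mar 2026", 30.0),
]


def implied_monthly_growth(start: float, end: float, months: int) -> float:
    """Constant monthly growth rate that compounds `start` into `end` over `months`."""
    return (end / start) ** (1 / months) - 1


def net_of_commission(gross: float, haircut: float) -> float:
    """Gross revenue minus a distribution commission given as a fraction."""
    return gross * (1 - haircut)


# Multiple between consecutive milestones.
for (label_a, rev_a), (label_b, rev_b) in zip(MILESTONES, MILESTONES[1:]):
    print(f"{label_a} -> {label_b}: {rev_b / rev_a:.2f}x")

# End of 2025 ($9B) to end of March 2026 ($30B) is three months.
print(f"Q1 2026 monthly growth: {implied_monthly_growth(9, 30, 3):.0%}")

# Reaching $80-100B by year-end from $30B at end of March leaves nine months.
for target in (80, 100):
    rate = implied_monthly_growth(30, target, 9)
    print(f"to ${target}B by year-end: {rate:.1%} per month")

# Haircutting the $30B gross run rate by the 5-10% range mentioned.
for cut in (0.05, 0.10):
    print(f"{cut:.0%} haircut: ${net_of_commission(30, cut):.1f}B")
```

Run as written, this reports about 49% compounded monthly growth in Q1 2026, roughly 11.5-14.3% per month needed to reach $80-100B by year-end, and $27-28.5B net after a 5-10% haircut on $30B. A single-digit haircut barely moves the comparison, which is Brad's point.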

Vineyard Wind 1 completes turbine installation after court ruling capecodtimes.com/story/news/env…