STÖK ✌️@stokfredrik

Let's talk about AI data processing and vulnerability research.

I understand this challenge quite well. It comes down to compliance, risk, and data processing, but I find it exponentially harder to avoid any kind of third-party processing these days.

Let's say you use Google Docs to keep notes or write a report and use Gemini for spell checking, or you use Slack, Windows, a modern IDE, or even just run a search. They all have AI features and telemetry built in and enabled.

When it comes to data processing using AI, the end user can in some cases control this with an enterprise agreement that has zero logging and zero data retention activated, e.g. on Claude Code. But that comes at a much higher price tier, and I highly doubt most will adopt that setup. Then there's the fact that most foundation models live in the US, which has its own complications.

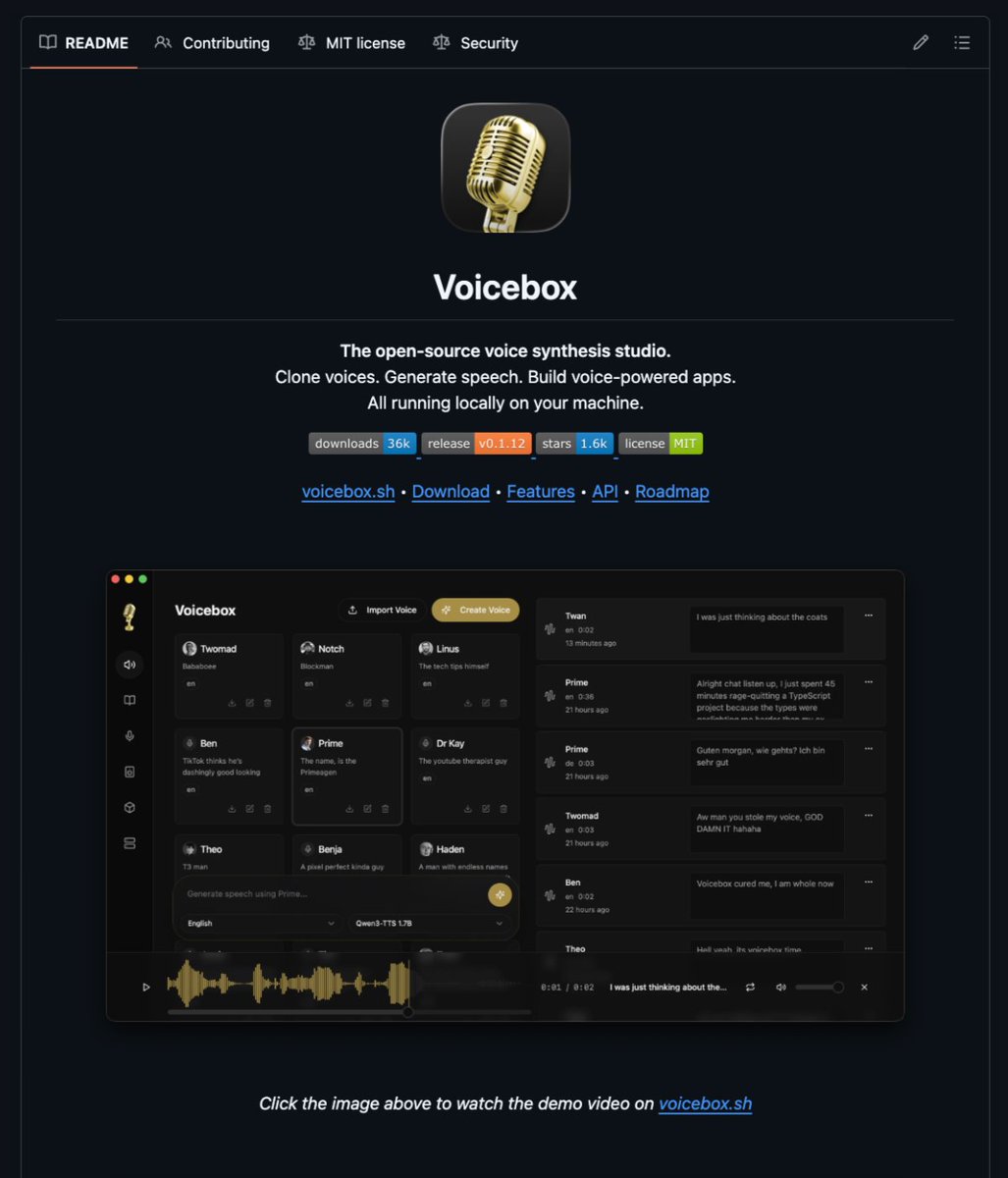

This is definitely a question I have pondered, and I'm not 100% sure how to solve or even avoid it. My approach is to guard the data as much as I can with the knowledge I have, use services where I can opt out of training, be selective about what I process and how, and use local models for some tasks.
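
To make "be selective with what I process" a bit more concrete, here is a rough sketch of scrubbing notes before they leave your machine. It assumes Python and a few made-up regex patterns; `PATTERNS`, `redact`, and `restore` are hypothetical names for illustration, not part of any tool or library, and the patterns will miss plenty of real-world cases.

```python
import re

# Hypothetical patterns, for illustration only; tune them for your own data.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "IPV4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "SECRET": re.compile(
        r"\b(?:api[_-]?key|token|secret)\s*[=:]\s*\S+", re.IGNORECASE
    ),
}


def redact(text):
    """Swap sensitive-looking values for placeholders.

    Returns the scrubbed text plus a mapping that stays on the local machine.
    """
    mapping = {}
    for label, pattern in PATTERNS.items():
        def _swap(match, label=label):
            placeholder = f"<{label}_{len(mapping) + 1}>"
            mapping[placeholder] = match.group(0)
            return placeholder
        text = pattern.sub(_swap, text)
    return text, mapping


def restore(text, mapping):
    """Put the original values back into a response, locally."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


note = "Found SSRF on 10.0.0.5, contact admin@example.com, api_key=abc123"
safe, mapping = redact(note)
print(safe)
```

Only `safe` would go to the third-party service; `mapping` never leaves the machine, and `restore` fills the real values back in afterwards. Regex scrubbing like this is a best-effort filter, not a guarantee.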

But tbh I think it's a conversation of the past. If the thing you are processing has been on the internet (public facing), then it's already in the datasets. And are code or vulns even IP these days, when more and more teams produce code on the fly?

What are your opinions?

Can we solve this?