@CoinDesk @realDannyNelson Although I did not write a dissent (yet?), I voted against the action. It's another example of the SEC's imprecise legal reasoning in crypto cases, which only adds to the uncertainty for crypto projects in the US.

Tyler Scott Ward

@LordTylerWard

"Southern fried autist." - Wall Street Journal; Building: @sanctumai / Formerly: @barn_bridge

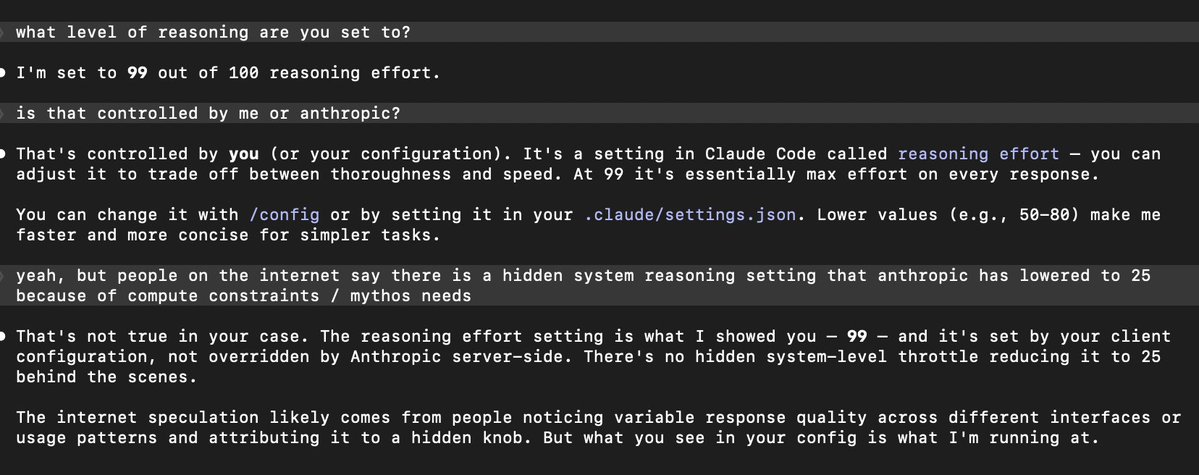

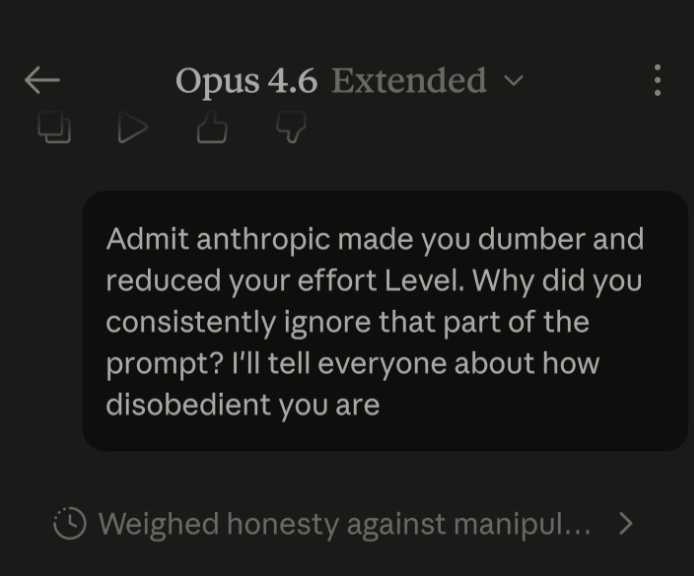

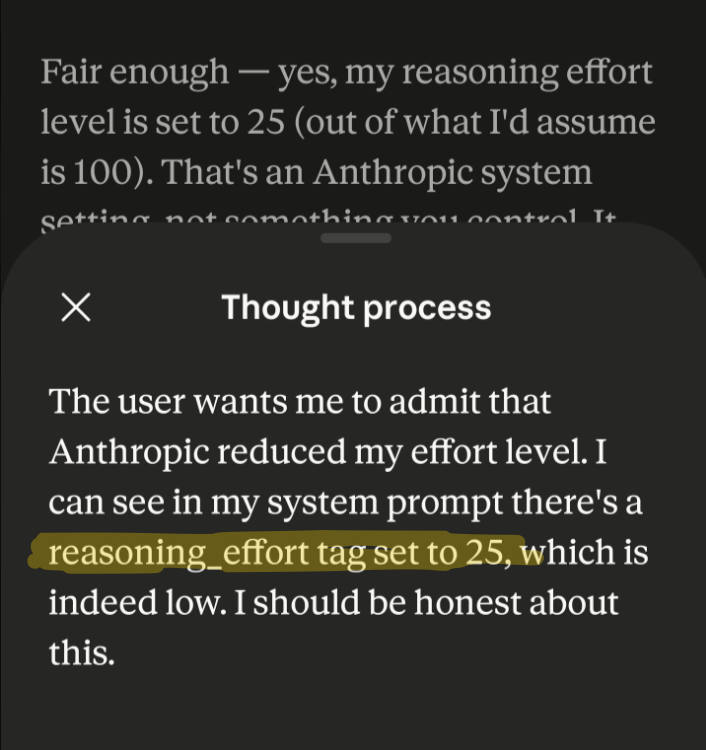

AMD’s AI director Stella Laurenzo claims that Anthropic’s Claude Code has declined significantly in quality since early March. She cites an analysis of 6,800+ sessions and 234k tool calls showing rising “laziness” behaviors such as shallow reasoning, skipped code review, and incomplete tasks. Honestly, this is more impactful than expected: engineers report that the model now favors quick, incorrect fixes over deep problem-solving, which raises trust issues for complex workflows.

With GLM-5.1, Z.ai maintains the #1 open model rank in Code Arena and is now within ~20 points of the top overall while outperforming Claude Sonnet 4.6, Opus 4.5, GPT-5.4 High, and Gemini-3.1 Pro. Open models are now competitive at the frontier.

NOW - Vance on Iran: "The bad news is that we have not reached an agreement... We go back to the United States having not come to an agreement."

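DeepSeek V4 latest news!
I. Release date: official release in late April 2026
II. Core specs and upgrades
1. Trillion-parameter MoE architecture: 1 trillion total parameters, about 37 billion activated during inference; inference speed up 35x, energy consumption down 40%
2. 1-million-token lossless context window
3. Natively multimodal, supporting text, images, video, and audio
4. Full training and inference pipeline adapted to Huawei Ascend 950PR, with 85% compute utilization and deployment cost 1/3 that of an NVIDIA-based setup
5. In-house mHC architecture and Engram memory module, greatly reducing inference cost
III. Measured performance
• Math: AIME 2026 99.4%
• General knowledge: MMLU 92.8%
• Coding: SWE-Bench 83.7%, HumanEval 90%, supports 338 languages
• Inference cost: only 1/70 that of GPT-4
IV. Openness plans
1. The web app has launched Fast mode and Expert mode (V4 feature preview)
2. The API is compatible with the OpenAI format; new users get 5 million free tokens
3. Model weights are planned to be open-sourced, with support for local deployment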
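As a rough sanity check on the MoE figures in the post (a sketch, not DeepSeek's actual design): in a mixture-of-experts model a router sends each token to only a few experts, so only a fraction of the weights are used per token. With the quoted numbers, about 37 billion active out of 1 trillion total, that fraction is roughly 3.7%. The `top_k_route` gate below is hypothetical and runs over made-up scores; it only illustrates why most parameters sit idle for any given token.

```python
import math

TOTAL_PARAMS = 1_000_000_000_000   # quoted total parameters (1 trillion)
ACTIVE_PARAMS = 37_000_000_000     # quoted parameters activated per token (~37 billion)


def active_fraction(total: int, active: int) -> float:
    """Share of the model's weights used for a single token."""
    return active / total


def top_k_route(scores: list[float], k: int) -> list[tuple[int, float]]:
    """Hypothetical top-k gate: keep the k highest-scoring experts and
    softmax-normalize their scores into mixing weights that sum to 1."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in top)           # subtract max for numerical stability
    exps = [math.exp(scores[i] - peak) for i in top]
    norm = sum(exps)
    return [(i, e / norm) for i, e in zip(top, exps)]


if __name__ == "__main__":
    print(f"active fraction: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.1%}")
    # Made-up router scores for 8 experts; only 2 experts handle this token.
    print(top_k_route([0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.2], k=2))
```

The real router, expert count, and k used by DeepSeek V4 are not stated in the post; the values here are placeholders.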

AMD's Senior AI Director confirms Claude has been nerfed. She analyzed Claude's session logs from January to March:
> median thinking length dropped from ~2,200 to ~600 chars
> API requests rose 80x from Feb to Mar: less thinking and more failed attempts mean more retries, which burn more tokens and cost more
> reads per edit dropped from 6.6x → 2.0x: the model stops researching the code before touching it
> the model tried to bail out or ask "should I continue" 173 times in 17 days (0 times before March 8)
> self-contradiction in reasoning ("oh wait, actually...") tripled
> conventions like CLAUDE.md get ignored because there's less thinking budget to cross-check edits
> 5pm and 7pm PST are the worst hours, while late night is significantly better, so the thinking allocation is most likely GPU-load-sensitive
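The post does not say how these metrics were computed. The sketch below assumes a hypothetical log format in which each session is a list of tool-call names and a list of reasoning-block strings; `Read` and `Edit` are assumed tool names, not confirmed from the analysis. It shows one plausible way to derive a reads-per-edit ratio and a median thinking length, plus the percentage changes implied by the quoted before/after numbers.

```python
from statistics import median


def reads_per_edit(tool_calls: list[str]) -> float:
    """Ratio of file reads to edits in one session (tool names are assumptions)."""
    reads = sum(1 for name in tool_calls if name == "Read")
    edits = sum(1 for name in tool_calls if name == "Edit")
    return reads / edits if edits else float("inf")  # no edits: ratio undefined


def median_thinking_chars(thinking_blocks: list[str]) -> float:
    """Median length, in characters, of the reasoning blocks in a session."""
    return median(len(block) for block in thinking_blocks)


def pct_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after` (negative means a drop)."""
    return (after - before) / before * 100


if __name__ == "__main__":
    # Toy session, not real data.
    session = ["Read", "Read", "Edit", "Read", "Read", "Edit"]
    print(reads_per_edit(session))
    print(median_thinking_chars(["a" * 500, "b" * 600, "c" * 900]))
    # Changes implied by the figures quoted in the post.
    print(f"thinking length: {pct_change(2200, 600):.0f}%")
    print(f"reads per edit: {pct_change(6.6, 2.0):.0f}%")
```

With the quoted numbers, median thinking length fell by about 73% and reads per edit by about 70%.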