René Cotton

@_Re_

Co-founder & CTO of @WiziShop & @Evolup_Off, podcaster, grumbler, pseudo-singer, vegetarian, tabletop role-player, GM, Nissart. #Dev #IA

POKÉMON GO PLAYERS TRAINED 30 BILLION IMAGE AI MAP: Niantic says photos and scans collected through Pokémon Go and its AR apps have produced a massive dataset of more than 30 billion real-world images. The company is now using that data to power visual navigation for delivery robots, letting them identify exact locations on city streets without relying on GPS. Source: NewsForce

MiniMax M2.7 has been spotted. The previous model, M2.5, was tested under the name "Deepmolt" on DesignArena and LMArena. M2.7 might appear there soon as well.

Made a little @openclaw tamagotchi that sits on my desktop. He occasionally comments on what he sees (mercilessly).

JUST IN: 🇺🇸 Anthropic sues US government after being labeled a national security risk.

Run TranslateGemma 4B entirely in your browser using WebGPU and Transformers.js v4. See how you can now build private, offline applications supporting 55 languages with no server side required in this video from @nic_o_martin 🌐

Announcing Copilot Cowork, a new way to complete tasks and get work done in M365. When you hand off a task to Cowork, it turns your request into a plan and executes it across your apps and files, grounded in your work data and operating within M365’s security and governance boundaries.

GPT-5.4 built this for me in 3 prompts. It hacked the NES Mario ROM to expose RAM events, then created a JS emulator that could send browser requests so every character in the game is controlled by an AI lmaooo

OpenAI: It takes billions to train a model. Photoroom: We just trained a great visual GenAI model in a day for less than $1,500—and the results are quite impressive! One more thing—it's open-sourced

Exclusive: It took Anthropic’s most-advanced artificial intelligence model about 20 minutes to find its first Firefox browser bug during an internal test of its hacking prowess. on.wsj.com/4rjrG2C

Claude Code wiped our production database with a Terraform command. It took down the DataTalksClub course platform and 2.5 years of submissions: homework, projects, and leaderboards. Automated snapshots were gone too. In the newsletter, I wrote the full timeline + what I changed so this doesn't happen again. If you use Terraform (or let agents touch infra), this is a good story for you to read. alexeyondata.substack.com/p/how-i-droppe…

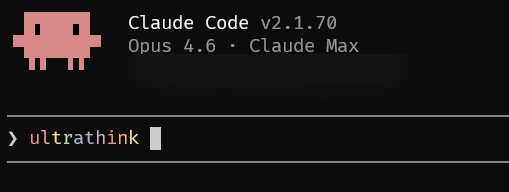

What if @claudeai could get a second opinion before writing your code? I made Claude Code automatically consult OpenAI's Codex before approving any plan. Two competing models reviewing each other = way better output. Just updated the skill with @OpenAI 5.4 model. link below.

Yann LeCun (@ylecun) explains why LLMs are so limited in terms of real-world intelligence. He says the biggest LLM is trained on about 30 trillion words, which is roughly 10 to the power 14 bytes of text. That sounds huge, but a 4-year-old who has been awake about 16,000 hours has also taken in about 10 to the power 14 bytes through the eyes alone. So a small child has already seen as much raw data as the largest LLM has read. But the child’s data is visual, continuous, noisy, and tied to actions: gravity, objects falling, hands grabbing, people moving, cause and effect. From this, the child builds an internal “world model” and intuitive physics, and can learn new tasks like loading a dishwasher from a handful of demonstrations. LLMs only see disconnected text and are trained just to predict the next token. So they get very good at symbol patterns, exams, and code, but they lack grounded physical understanding, real common sense, and efficient learning from a few messy real-world experiences. --- From 'Pioneer Works' YT channel (link in comment)
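The order-of-magnitude comparison in the post above can be checked with a quick back-of-the-envelope sketch. Only the 30 trillion words and the 16,000 waking hours come from the post. The bytes-per-word figure and the visual data rate are assumptions picked for illustration, and the helper name `order_of_magnitude` is hypothetical.

```python
import math

# Figures stated in the post
LLM_WORDS = 30e12             # about 30 trillion words of training text
CHILD_WAKING_HOURS = 16_000   # hours a 4-year-old has been awake

# Assumptions (NOT stated in the post), used only to illustrate the estimate
BYTES_PER_WORD = 3             # assumed average size of one word of text
VISUAL_BYTES_PER_SECOND = 2e6  # assumed ~2 MB/s reaching the brain from both eyes


def order_of_magnitude(x: float) -> int:
    """Return the nearest power of ten for a positive number."""
    return round(math.log10(x))


# Text volume read by the LLM, in bytes
llm_bytes = LLM_WORDS * BYTES_PER_WORD

# Visual volume seen by the child: hours -> seconds -> bytes
child_bytes = CHILD_WAKING_HOURS * 3600 * VISUAL_BYTES_PER_SECOND

print(f"LLM text:     ~10^{order_of_magnitude(llm_bytes)} bytes")
print(f"Child vision: ~10^{order_of_magnitude(child_bytes)} bytes")
```

Under these assumed rates both totals land near 10 to the power 14 bytes, which matches the claim in the post. Changing either assumption by a factor of a few shifts the totals but not the conclusion that the two are of comparable size.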

made a hook that adds a bouncing dvd logo to claude code whenever it's thinking

GPT 5.4 Pro is the most overthinking model. A simple 'Hi' cost me $80. 🥲

Visualize Agent Teams workflow in Claude Code. More and more devs are using the Agent Teams feature, so I built a way to see exactly what's happening under the hood. Run this: npx claude-code-templates@latest --teams. You get a full view of how the lead agent and the team communicate: messages, tasks, and every tool they executed. Repo: github.com/davila7/claude…

🚨 BREAKING: Pentagon Officially Notifies Anthropic It Is a ‘Supply Chain Risk’. Anthropic now vows a legal fight against the Pentagon sanction. Amodei argues this action is not legally sound and plans to challenge it in court. He points out that the actual restriction is surprisingly narrow. The rule only stops contractors from using Claude directly for defense contracts, but they can still use it for other business. Anthropic also apologized for a frustrated internal message that leaked to the press. That message was written on a chaotic day when the government banned them on social media and simultaneously handed a defense deal to a rival. The company stressed that its only real boundary is keeping its AI out of autonomous weapons and mass surveillance. Microsoft is the first major company to say it will keep using Anthropic models in its products. Microsoft said its lawyers have studied the Pentagon’s plan to label Anthropic a supply chain risk, and they found that Anthropic models can remain in Microsoft products, except those supplied to the U.S. Department of War. Microsoft supplies its technology to a variety of U.S. government agencies. The Microsoft 365 productivity software is widely used inside the Department of War.