Francesco D’Orazio

@abc3d

Founder of @pulsarplatform, host of @audiencedisco

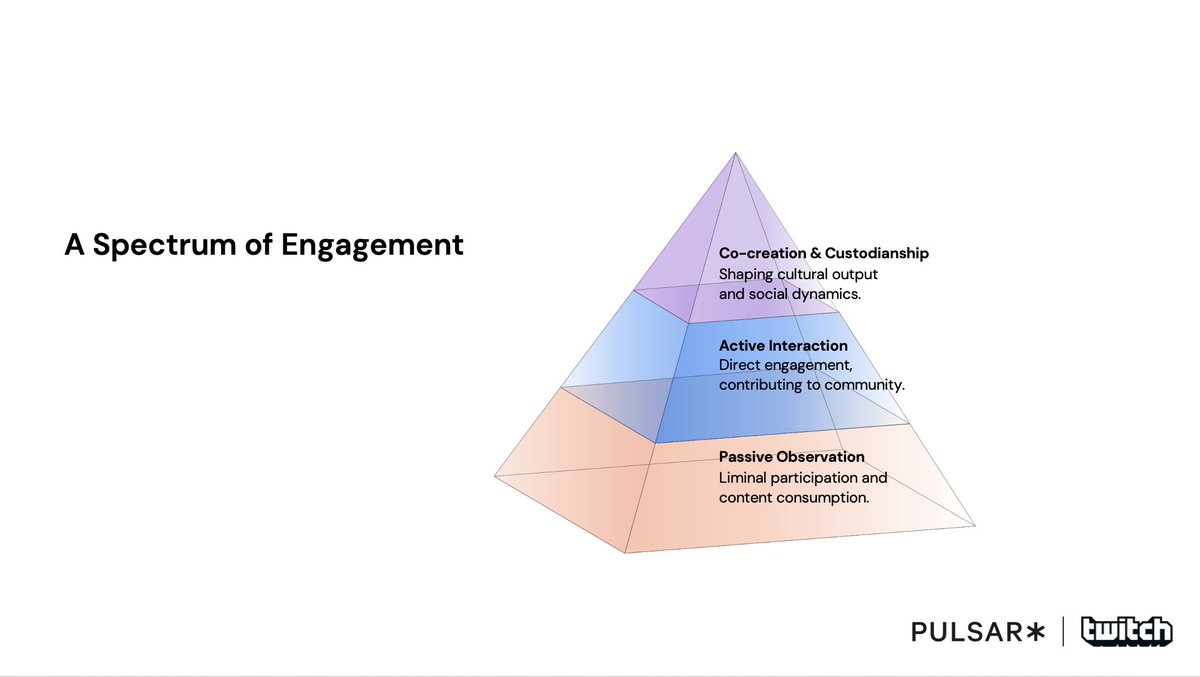

If they want to spot trends today, “brands need to look at the ‘vibrant fringes,’” says @abc3d, founder and president of Pulsar, in an article newly published on @adage. “These are niche, unconventional communities that exist outside the average consumer base but that have the potential to appeal to bubbles outside of the one they originated within.” “That’s a core skill of trendspotting today: spotting a fringe and assessing their trans-audience potential.” 🧵

Citrini’s piece is a fun read but has some major flaws. I’ll go over a few of them: lump of labor fallacy, ignoring the cost of living, capex fallacy, and wrong on SaaS.

The overarching problem in the piece is the so-called “Horse Fallacy.” When the tractor and car were invented, the horse couldn’t get a better job. He became completely obsolete. But humans are not horses. Horses simply supplied labor. They never demanded much beyond food. Humans, though, are the origin of all economic demand. The whole point of the economy is to satisfy human desires.

The lump of labor fallacy assumes that humans have a fixed checklist of problems to solve. But every time technology checks an item off the list, we invent another desire. Human desires are infinite. Maybe if AI does all the work here on earth, we’ll terraform Mars, build O’Neill cylinders, and Dyson spheres. We’ll certainly not sit idle, desiring nothing more. As long as human desire is infinite, demand for work will be infinite.

There’s an additional point on jobs: there are some jobs that will ALWAYS be done by humans. One example is sports. Your iPhone can beat Magnus Carlsen in chess but nobody cares, they want to watch humans playing chess, so chess today is a bigger sport than ever. One day robots will skate better than Alysa Liu but nobody will care, they’ll want to watch Alysa instead.

Citrini models incomes collapsing but doesn’t model the massive deflation in the cost of living. If AI makes all these white collar workers unnecessary, this means that the price of products and services will be much lower (since much less labor is needed). You’d have a scenario where a household earning $40k per year could consume what previously took $120k per year. And there’s all this mysterious wealth accumulated by the owners of the GPUs and what are they spending it on? How can there simultaneously be massive wealth and mass layoffs?

Will there be new jobs invented? Quite likely.
This has been the pattern over the last 200 years: technological revolutions → deflation → demand for new things → new jobs get created. Because humans have infinite desires.

The piece assumes that the hundreds of billions of capex go into a black hole and vanish from the real economy. In reality, it is highly stimulative as all the money ends up with many white collar workers at fabs, utility companies, cooling system manufacturers, as well as with blue collar workers.

On SaaS, Citrini could have picked a long tail of point solutions that are easily vibe-codable, but they ironically instead picked one of the widest moat enterprise software companies, ServiceNow, which is in fact an AI beneficiary. Their point is: even this impossible-to-displace business won’t survive. True if you wave a magic wand, but not true in the real world where, after 20 years of cloud computing, companies are still running mainframes and IBM is still growing their mainframe installed base (yes, look it up).

Of course, part of the magic wand is “this time is different!” because AI will do absolutely everything. It will vibe code the product, talk to regulators, obtain SOC2, HIPAA, FedRAMP, GDPR compliance, and do this globally too, not just in the US; it’ll somehow suck all the embedded data in these systems (ServiceNow owns the Configuration Management Database with a map of all the hardware, software, user hierarchies, permissions, and workflows inside an enterprise). More fantastically, AI will also somehow vibe code B2B enterprise go to market teams. The reality is that software is sold, not bought.

At the extreme, the article is arguing that someone at PepsiCo will open the Terminal on their Mac Mini, type “Claude,” ask it to replace ServiceNow, and Claude will go to work, doing all this work autonomously, and then maintaining itself, patching itself, securing itself, talking to users inside PepsiCo to ask for new features to develop, integrating with 200+ other tools, etc.
Meanwhile, the CTO at PepsiCo is like, “Yeah, cool, let’s do that, it’ll save us millions a year” while ignoring that any downtime or bug will cost the company millions per minute. All that operational liability, SLAs, uptime figures, which used to be ServiceNow’s liability, PepsiCo will now take on itself. Simultaneously, the thousands of engineers working at ServiceNow’s R&D department are sitting idle and not using AI to accelerate their own roadmaps and build new features.

When you start really filling in the details of what needs to happen for this scenario to unfold, you realize it crumbles very quickly.

Hopefully the discussion above on infinite human needs puts to bed the “seat count” debate: there will be more seats because there will be more employment because of the infinity of human desires. Meanwhile, ServiceNow has been charging a hybrid seat/consumption model since about 2023 when it introduced its Pro Plus SKU. These AI agents are tackling human labor, both work that was already done and new work that was never done. The TAM for this is orders of magnitude greater than the TAM for pure seat-based software. ServiceNow will get its fair share of this new TAM because it already has the customer relationships, distribution, brand, trust, technology, and product.

To quote François Chollet: “The maximalist form of my thesis is basically this: SaaS is not about code, it is about solving a problem customers have and selling them the solution. Services + sales. If the cost of code goes to *zero*, SaaS will *not* go away. It will *benefit*, since code is a cost center.”

To expand on this, the more likely scenario is NOT that the price of software collapses; it’s that incumbents offer their customers so much more value within the existing seat price they already pay, it becomes financially irresponsible for the customer NOT to be a subscriber. This will INCREASE their incumbency. This has already been the SaaS playbook for decades.
Decades during which the cost of producing software has always gone down (more open source options and cloud computing, to name just two inputs, have dramatically lowered the cost of entry for newcomers; and yet, the per seat price of the best SaaS companies has only gone in one direction).

Every SaaS company worth its salt is always improving its products, adding features, fixing bugs, and shipping updates. Many will do this for several years before changing pricing. Pricing is an output: are we delivering enough value to the customer that gives us the permission to charge more?

With the deflation in the cost of producing software, a few things should happen:

- Existing software companies will be a lot more productive and will ship a lot more products and features than before
- Because they own the customer relationships and customer trust, they are in pole position to deliver new solutions and make their seat subscription ever more compelling
- Customers of those software companies will get a lot more value within their per-seat price and increase their reliance on and trust in the best vendors

The debate in tech is always, “Can the innovator get the distribution before the incumbent gets the innovation?” In this case, there is no question: the BEST incumbents already have the distribution AND the innovation. This allows them to widen their moats as they become even more essential and irreplaceable to their customers.

Another mental model missing from this debate is power laws. Outcomes in the real world follow power laws: 20% of the people make 80% of the income, 4% of stocks generate all the net wealth, 10% of YouTube videos generate 90% of watched hours, etc. Power laws will continue to dominate and what does this tell us? That the most likely outcome is that the gains will accrue disproportionately to a small cohort of top software businesses. This is another framing of the “Increasing Returns” mental model of Brian Arthur.
Personally, I believe that ServiceNow will be one of those power law winners. After all, they already are a winner in the power law distribution and have all the attributes necessary to continue winning.

I understand the argument. There is a major flaw in it: customers (or the agents acting on their behalf) don't just care about "getting the lowest price". They care about:

- Access to all of the best restaurants, full menus, accurate prices
- Fast and reliable delivery times
- Correct food arriving, still warm, not tampered with
- Getting a refund if any of these are not true (refunds happen constantly)

The "hundreds of delivery apps" cannot provide that service without charging a real commission. In the scenario you are describing, orders would constantly be wrong, late, incomplete, or not show up at all. Many restaurants would mark up their prices or not participate at all. (The major marketplaces invest heavily in keeping this price markup from happening, btw.) Customers are not going to roll the dice on that to save a couple bucks (and in many cases wouldn't save money anyway).

Marketplaces like DD and Uber will not allow agents to transact on their platforms without permission because it would destroy their econs and the ability to provide all of the above. And they will not be legally forced to do so (see the precedent being set by Amazon v. Perplexity).

Here is a piece I wrote on how AI will impact marketplaces, and why DASH will be among the least impacted: danhock.co/p/llms-vs-mark…