DeepEval

174 posts

@deepeval

The Open-Source LLM Evaluation Framework – created and maintained by @confident_ai GitHub: https://t.co/kuvhlNRRdD

San Francisco · Joined February 2025

2 Following · 652 Followers

DeepEval retweeted

We're launching Auto-Categorize Traces & Threads — Day 4 of our Launch Week!

Every production trace gets categorized automatically, so you can detect response drift and see exactly which areas your agent crushes and which ones need work.

Full post: confident-ai.com/blog/launch-we…

DeepEval retweeted

Day 3 of launch week: auto trace-to-dataset ingestion.

Set a rule once — production traces continuously flow into eval datasets and annotation queues. No scripts, no stale data.

Post: confident-ai.com/blog/launch-we…

DeepEval retweeted

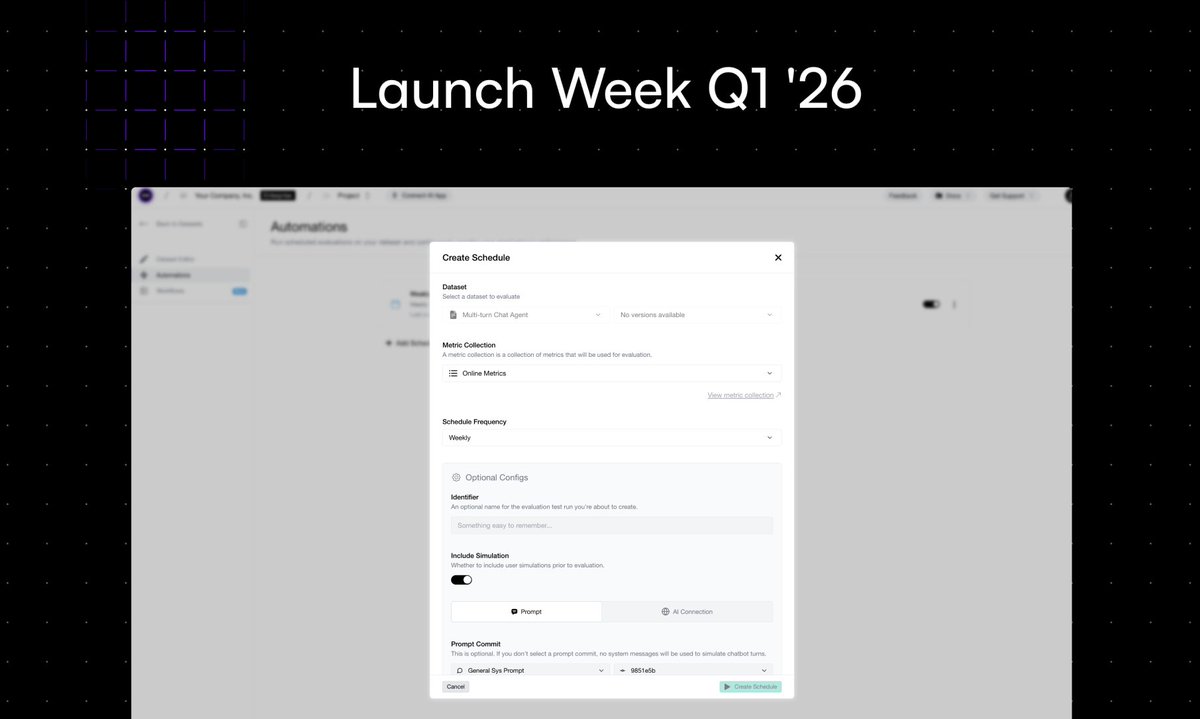

Confident AI Launch Week Day 2: Scheduled Evals ⏰

Everyone agrees to run evals every few days. Nobody actually does.

Now you set a frequency, configure your mappings, and evals run themselves.

Link here: confident-ai.com/blog/launch-we…

DeepEval retweeted

Announcing our Q1 Launch Week!

Day 1: Automated Error Analysis.

Link here: confident-ai.com/blog/launch-we…

DeepEval retweeted

🚨BREAKING: Someone just solved LLM testing's biggest problem.

It's called DeepEval and it gives you answer relevancy, hallucination detection, and G-Eval metrics that actually work.

- Run evaluations 100% locally (no data leaves your machine).

- Test agents, RAG systems, and production responses with human-level accuracy.

100% open source.

My sister just got released: DeepTeam v1.0, 100% open-source, Apache 2.0 red teaming for LLMs.

⭐ Star on GitHub to stay on top of the latest developments in AI security and safety: github.com/confident-ai/d…

DeepEval retweeted

@_avichawla Author of @deepeval here, I'm glad you've found our approach of LLM-Arena-as-a-Judge useful :)

DeepEval retweeted

Most LLM-powered evals are BROKEN!

These evals can easily mislead you to believe that one model is better than the other, primarily due to the way they are set up.

G-Eval is one popular example.

Here's the core problem with LLM eval techniques and a better alternative to them:

Typical evals like G-Eval assume you’re scoring one output at a time in isolation, without understanding the alternative.

So when prompt A scores 0.72 and prompt B scores 0.74, you still don’t know which one’s actually better.

This is unlike scoring, say, classical ML models, where metrics like accuracy, F1, or RMSE give a clear and objective measure of performance.

There’s no room for subjectivity, and the results are grounded in hard numbers, not opinions.

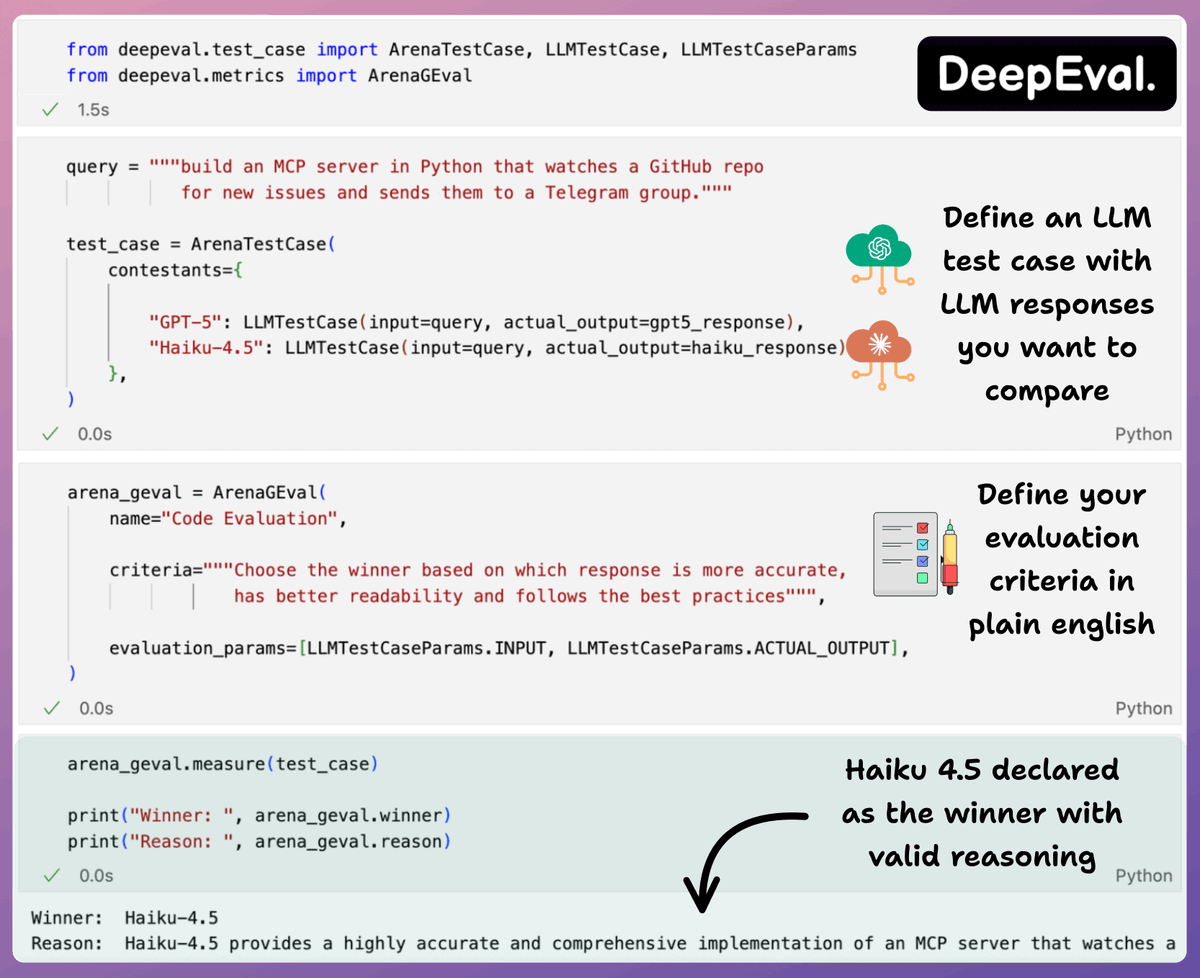

LLM Arena-as-a-Judge is a new technique that addresses this issue with LLM evals.

In a nutshell, instead of assigning scores, you just run A vs. B comparisons and pick the better output.

Just like G-Eval, you can define what “better” means (e.g., more helpful, more concise, more polite), and use any LLM to act as the judge.

LLM Arena-as-a-Judge is actually implemented in @deepeval (open-source with 12k stars), and you can use it in just three steps:

- Create an ArenaTestCase, with a list of “contestants” and their respective LLM interactions.

- Next, define your criteria for comparison using the Arena G-Eval metric, which incorporates the G-Eval algorithm for a comparison use case.

- Finally, run the evaluation and print the scores.

This gives you an accurate head-to-head comparison.

Note that LLM Arena-as-a-Judge can be either referenceless (as in the snippet below) or reference-based: if needed, you can also provide an expected output for the given input and include it in the evaluation parameters.
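To make the A vs. B idea concrete, here is a minimal, library-free sketch of a pairwise arena. It is not DeepEval's API: `Interaction`, `toy_judge`, and `run_arena` are hypothetical names invented for illustration, and `toy_judge` is a deterministic stand-in (it prefers the shorter answer) where a real setup would call an LLM with the comparison criteria.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Dict


# Hypothetical container for one contestant's interaction (not a DeepEval class).
@dataclass
class Interaction:
    input: str
    actual_output: str


# A judge receives (criteria, input, output_a, output_b) and returns "A" or "B".
Judge = Callable[[str, str, str, str], str]


def toy_judge(criteria: str, question: str, output_a: str, output_b: str) -> str:
    """Stand-in for an LLM judge: picks the shorter output.

    Assumption: a real judge would prompt an LLM with `criteria` instead.
    """
    return "A" if len(output_a) <= len(output_b) else "B"


def run_arena(
    contestants: Dict[str, Interaction], criteria: str, judge: Judge
) -> Counter:
    """Compare every ordered pair of contestants and count wins per contestant."""
    wins = Counter({name: 0 for name in contestants})
    for first, a in contestants.items():
        for second, b in contestants.items():
            if first == second:
                continue
            # Each pair is judged in both orders, so a judge that favors
            # whichever output is shown first cannot decide the result alone.
            verdict = judge(criteria, a.input, a.actual_output, b.actual_output)
            wins[first if verdict == "A" else second] += 1
    return wins


question = "What is the capital of France?"
contestants = {
    "prompt_a": Interaction(question, "Paris."),
    "prompt_b": Interaction(
        question, "The capital of France is the beautiful city of Paris."
    ),
}
wins = run_arena(contestants, criteria="more concise", judge=toy_judge)
print(wins.most_common())
```

The output is a win count per contestant rather than two nearly identical scores, which is the point of the head-to-head setup described above.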

Why DeepEval?

It's 100% open-source with 12k+ stars and implements everything you need to define metrics, create test cases, and run evals like:

- component-level evals

- multi-turn evals

- LLM Arena-as-a-judge, etc.

Moreover, tracing LLM apps is as simple as adding one Python decorator.

And you can run everything 100% locally.

I have shared the repo in the replies.

🙌 our favorite VC ❤️🔥

Vermilion Cliffs Ventures @vermilionfund

The new Vermilion newsletter is out 🗞️

Inside:

💰 @514hq raises $17m to simplify AI-ready analytics

📈 @deepeval becomes the most adopted LLM eval framework globally

🤝 Google’s Agent Development Kit ships a @CopilotKit integration

👀 Who’s hiring? Check out the new Vermilion Careers page

Plus: @ashl3ysm1th's take on startup KPIs, revenge of the acronyms, and more founder lessons from the trail.

DeepEval retweeted

The new Vermilion newsletter is out 🗞️

Inside:

💰 @514hq raises $17m to simplify AI-ready analytics

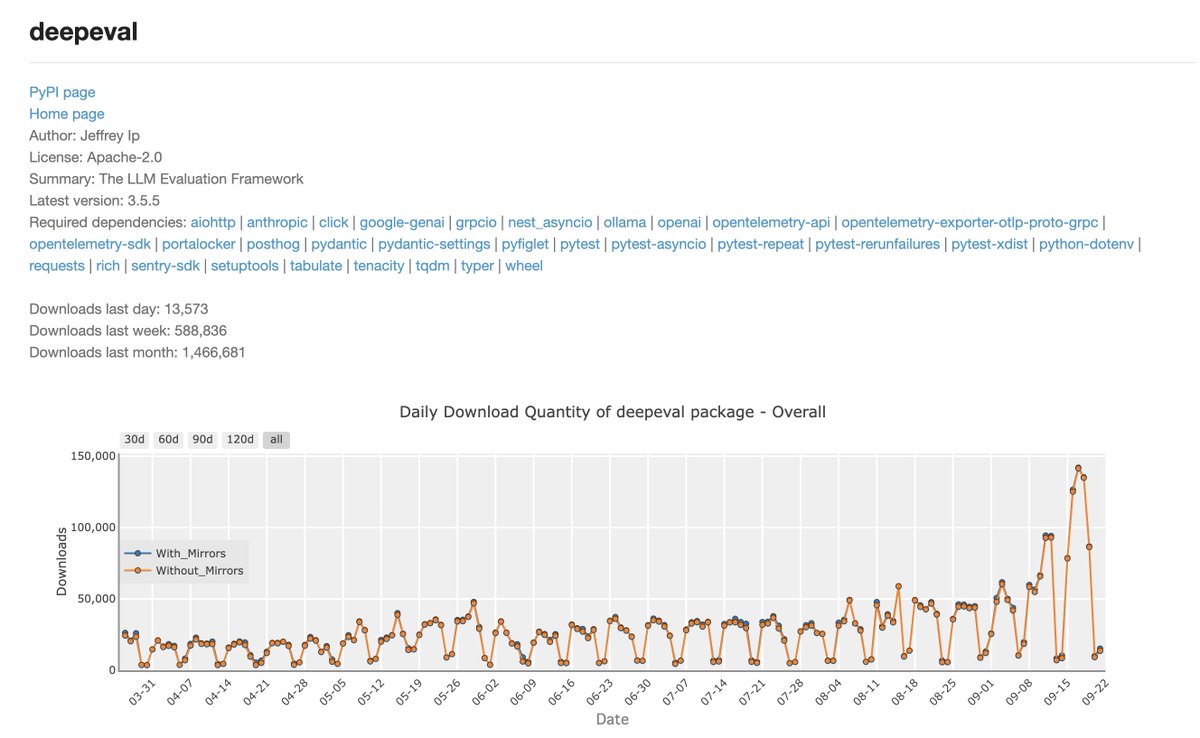

📈 @deepeval becomes the most adopted LLM eval framework globally

🤝 Google’s Agent Development Kit ships a @CopilotKit integration

👀 Who’s hiring? Check out the new Vermilion Careers page

Plus: @ashl3ysm1th's take on startup KPIs, revenge of the acronyms, and more founder lessons from the trail.

DeepEval retweeted

Companies like @OpenAI, @perplexity_ai and @AnthropicAI already use LLM judges for production evaluation at massive scale.

@ragas_io and @deepeval are two evaluation frameworks that I personally find intuitive.

[NOT AN AD]

DeepEval retweeted

Real-world AI memory evaluation needs more than HotPotQA, EM or F1.

Our new open dataset with @deepeval will measure cross-context, long-term reasoning. Stay tuned.

For the full results & current methodology:

cognee.ai/blog/deep-dive…

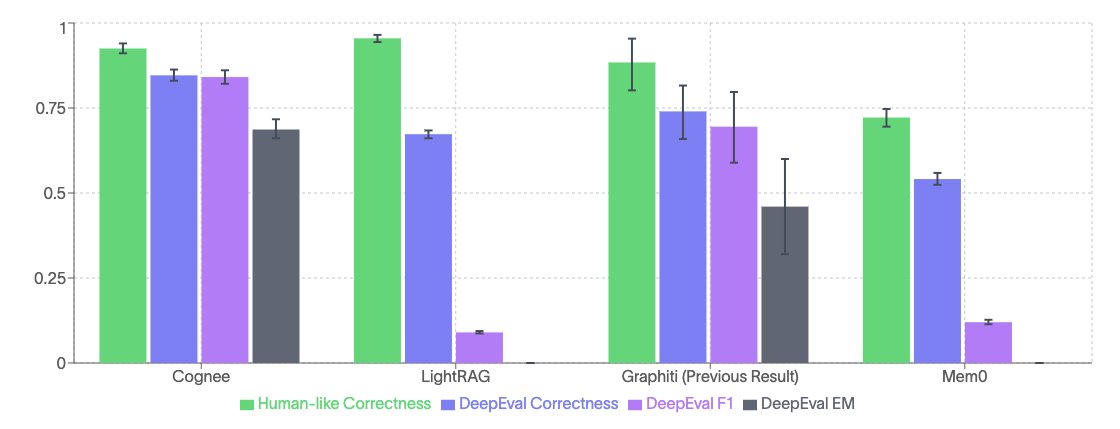

@cognee_ hits 92.5% on benchmarks.

That’s the percent of answers our LLM-based evaluator marked correct - designed to approximate human evaluation.

We ran cognee against LightRAG, Mem0, and Graphiti (previous results) through 45 evaluation cycles on 24 questions from HotPotQA.

DeepEval retweeted

Although HotPotQA and standard metrics like F1 and EM gave us a baseline, we believe real-world AI memory requires better evaluation systems.

That's why we’re building an open dataset with @deepeval to share with the entire ecosystem.

Full write-up: cognee.ai/blog/deep-dive…
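For readers unfamiliar with EM and F1, the sketch below uses the common SQuAD-style definitions of exact match and token-level F1. The normalization steps are an assumption; the post does not say which variant was used. The example shows how a correct but verbose answer is penalized, which is one reason such metrics only serve as a baseline.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, strip punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall after normalization."""
    pred_tokens = normalize(prediction).split()
    ref_tokens = normalize(reference).split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# A correct answer phrased as a sentence fails EM and gets only partial F1.
print(exact_match("The capital is Paris.", "Paris"))
print(token_f1("The capital is Paris.", "Paris"))
```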

DeepEval retweeted

Two factors primarily determine how well an MCP app works:

- Is the model selecting the right tool?

- Is it preparing the tool call correctly?

Today, let's learn how to evaluate any MCP workflow using @deepeval's MCP evaluations (open-source).

Let's go!
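As a rough illustration of the two factors above, the sketch below checks a recorded tool call against an expected one. It is a deterministic, library-free approximation and does not reproduce DeepEval's MCP metrics: `ToolCall`, `tool_selected_correctly`, `argument_accuracy`, and the `get_weather` tool are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


# Hypothetical record of one tool call made by the model (not a DeepEval class).
@dataclass
class ToolCall:
    name: str
    arguments: Dict[str, Any] = field(default_factory=dict)


def tool_selected_correctly(actual: ToolCall, expected: ToolCall) -> bool:
    """Factor 1: did the model pick the expected tool?"""
    return actual.name == expected.name


def argument_accuracy(actual: ToolCall, expected: ToolCall) -> float:
    """Factor 2: fraction of expected arguments present with the expected value."""
    if not expected.arguments:
        # Nothing was expected, so any extra argument counts as a mistake.
        return 1.0 if not actual.arguments else 0.0
    matched = sum(
        1
        for key, value in expected.arguments.items()
        if key in actual.arguments and actual.arguments[key] == value
    )
    return matched / len(expected.arguments)


def evaluate_call(actual: ToolCall, expected: ToolCall) -> Dict[str, Any]:
    """Combine both checks; arguments only count when the tool itself is right."""
    tool_ok = tool_selected_correctly(actual, expected)
    return {
        "tool_ok": tool_ok,
        "argument_accuracy": argument_accuracy(actual, expected) if tool_ok else 0.0,
    }


expected = ToolCall("get_weather", {"city": "Paris", "unit": "celsius"})
actual = ToolCall("get_weather", {"city": "Paris", "unit": "fahrenheit"})
print(evaluate_call(actual, expected))
```

Exact-match checks like these only work when a single expected call is known in advance; open-ended workflows generally need a judge that can accept more than one valid call.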
