Chris Adamczyk retweeted

Chris Adamczyk

1.2K posts

Chris Adamczyk retweeted

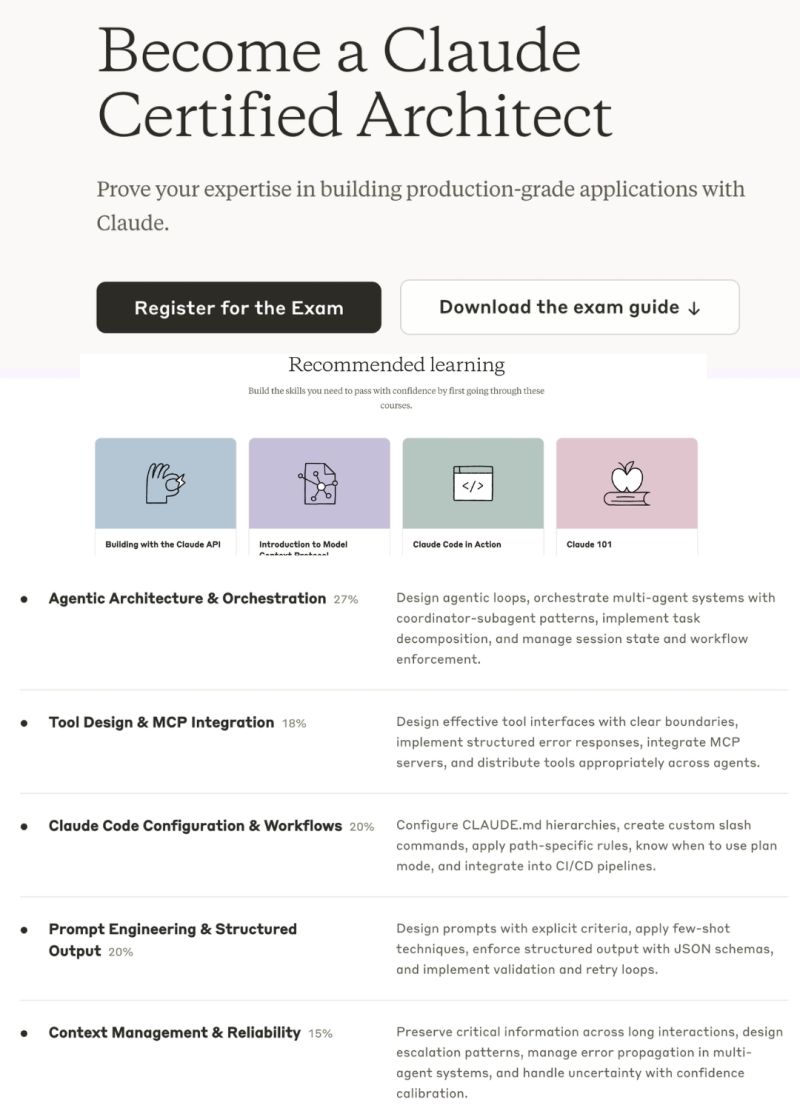

Anthropic just announced the "Claude Certified Architect" program.

And you can start today.

In 16 years of my professional career, I haven't done a single certification.

Not one.

Not AWS. Not Azure. Not Google Cloud. Not PMP. Not Scrum. Not any of the alphabet soup.

I learned by building. By breaking things. By shipping.

But I'm about to break that streak.

I'm going for my first-ever certification:

Claude Certified Architect — Foundations

Here's why this matters — especially if you're a developer, engineer, or any professional who feels like the AI wave is moving too fast.

Claude Code launched a few weeks ago.

And it feels like a paradigm shift.

Not an incremental upgrade. Not another chatbot wrapper.

A fundamentally different way of building software.

Agentic architecture. Tool orchestration. MCP integration. Context management at a systems level.

If those words sound intimidating — that's exactly why this certification exists.

It covers everything from agentic orchestration to prompt engineering to Claude Code workflows.

Not surface-level content.

And here's what got me:

It costs nothing.

Free. Zero. $0.

So if you've been feeling left behind... If you've been watching others ship AI agents while you're still figuring out where to start... If you've been telling yourself "I'll learn this next quarter"...

This is your sign.

Stop scrolling. Start building.

First certification in 16 years. Let's see how this goes.

Links in the comments 👇

Cc: Brij Pandey

Chris Adamczyk retweeted

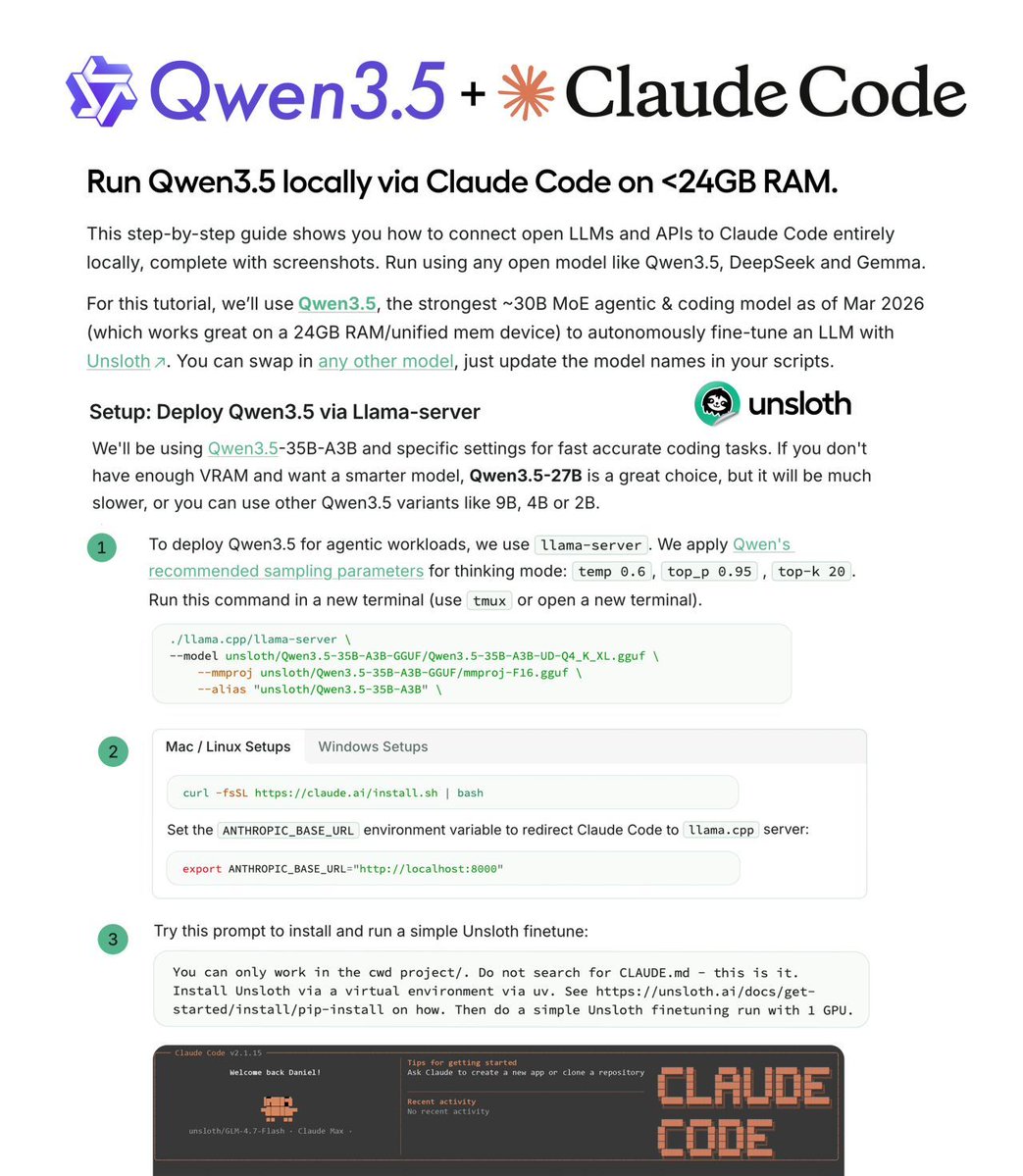

Claude Code can run entirely on your local GPU now.

Unsloth AI published the complete guide.

The setup itself is straightforward: llama.cpp serves Qwen3.5 or GLM-4.7-Flash, and one environment variable redirects Claude Code to localhost.

But the guide is valuable because of what it explains beyond the setup:

Why local inference feels impossibly slow: Claude Code adds an attribution header that breaks KV caching, so every request recomputes the full context. The fix has to be made in settings.json; setting it with export doesn't work.

Why Qwen3.5 outputs seem off: f16 KV cache degrades accuracy, and it's llama.cpp's default. Multiple reports confirm this. Use q8_0 or bf16 instead.

Why responses take forever: Thinking mode is great for reasoning but slow for agentic tasks. The guide shows how to disable it.

The proof it all works: Claude Code autonomously fine-tuning a model with Unsloth. Start to finish. No API dependency.

Fits on 24GB. RTX 4090, Mac unified memory.
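
The setup described in this post can be sketched roughly as follows. This is a minimal sketch, not the commands from the Unsloth guide: the model path and port are hypothetical, `ANTHROPIC_BASE_URL` is assumed to be the environment variable the post refers to, and `--cache-type-k` / `--cache-type-v` are the `llama-server` options for choosing the KV cache type.

```python
import os

# Hypothetical values; substitute your own model file and port.
MODEL_PATH = "models/model.gguf"
PORT = 8001


def llama_server_command(model_path: str, port: int, kv_type: str = "q8_0") -> list[str]:
    """Build a llama-server command line that avoids the f16 KV cache."""
    if kv_type not in {"q8_0", "bf16"}:
        raise ValueError("the post recommends q8_0 or bf16 for the KV cache")
    return [
        "llama-server",
        "--model", model_path,
        "--port", str(port),
        "--cache-type-k", kv_type,
        "--cache-type-v", kv_type,
    ]


def claude_code_env(port: int) -> dict[str, str]:
    """Copy the current environment and point Claude Code at localhost.

    ANTHROPIC_BASE_URL is an assumption about which variable the post means.
    """
    env = dict(os.environ)
    env["ANTHROPIC_BASE_URL"] = f"http://localhost:{port}"
    return env


if __name__ == "__main__":
    print(" ".join(llama_server_command(MODEL_PATH, PORT)))
    print(claude_code_env(PORT)["ANTHROPIC_BASE_URL"])
```

The sketch only builds the command line and environment; the attribution-header fix and the thinking-mode switch mentioned above are left to the guide itself.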

Chris Adamczyk retweeted

Three.js is CPU limited by JavaScript. Bevy on the other hand…

> compiles to WASM

> cache optimized ECS game engine

> code first

> WebGPU + WebTransport

> also builds to native: windows, Linux, macOS, android, iOS

WASM still has a way to go: it lacks multithreading and needs JavaScript glue code, but both are being worked on.

Piers Kicks @pierskicks

Three.js + WebGPU = a modern Flash games boom

> Ships to 5B+ users, near-native GPU performance

> No platform rake, app store, or custom runtime

> Devs own their distribution + monetisation

> AI can now vibe code the games for you

The only missing piece is the discovery layer

Saw @PlanetScale’s demo of video calls through Postgres and thought: could Postgres handle a simple online game too?

So this weekend I started building a prototype using @zero__ms and @threejs

Chris Adamczyk retweeted

Did you know macOS has a tool called `sandbox-exec` that provides sandboxing?

`sandbox-exec -f profile.sb ./program`

profile.sb:

```
(allow default)
; deny outbound network
(deny network-outbound (remote ip "*:*"))
; deny file writes except known-safe paths
(deny file-write*)
(allow file-write* (subpath "/tmp"))
```
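
For scripting this, a small wrapper could write the profile to disk and build the same `sandbox-exec -f profile.sb ./program` invocation. This is a minimal sketch: the helper names `write_profile` and `sandboxed_command` are hypothetical, and the command is only actually run on macOS.

```python
import subprocess
import sys
import tempfile

# The same profile as above, kept as a string so it can be written on demand.
PROFILE = """\
(allow default)
; deny outbound network
(deny network-outbound (remote ip "*:*"))
; deny file writes except known-safe paths
(deny file-write*)
(allow file-write* (subpath "/tmp"))
"""


def write_profile(text: str = PROFILE) -> str:
    """Write the profile to a temporary .sb file and return its path."""
    with tempfile.NamedTemporaryFile("w", suffix=".sb", delete=False) as f:
        f.write(text)
        return f.name


def sandboxed_command(profile_path: str, program: list[str]) -> list[str]:
    """Prefix a command with sandbox-exec and the given profile."""
    return ["sandbox-exec", "-f", profile_path, *program]


if __name__ == "__main__":
    cmd = sandboxed_command(write_profile(), ["./program"])
    if sys.platform == "darwin":
        subprocess.run(cmd, check=False)  # sandbox-exec exists only on macOS
    else:
        print("would run:", " ".join(cmd))
```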

Chris Adamczyk retweeted

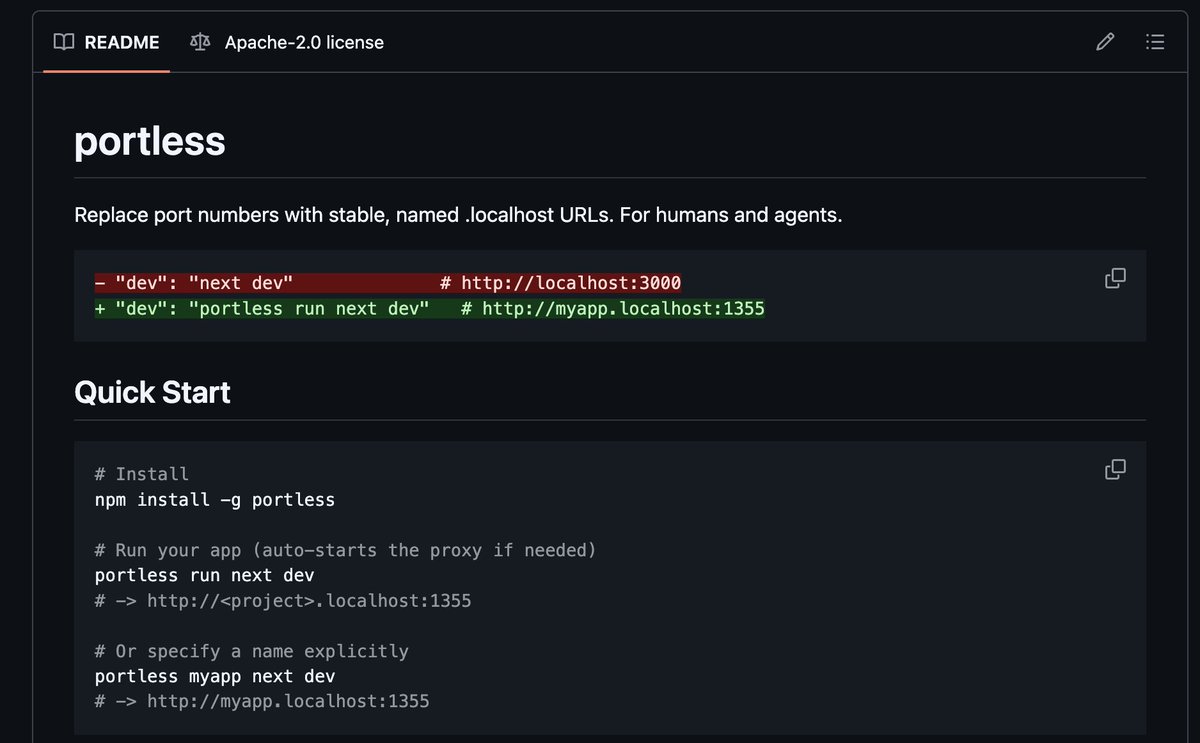

Running multiple projects locally is painful.

localhost:3000, localhost:3001, localhost:8080... which one is which?

One port conflict and your whole setup breaks.

Portless by Vercel Labs fixes this cleanly.

Instead of port numbers, you get stable named URLs:

http://myapp.localhost:1355

http://api.myapp.localhost:1355

http://docs.myapp.localhost:1355
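
The URL pattern above is regular enough that a script or coding agent could derive it from names alone. The helper below is a hypothetical illustration of that pattern only; it is not part of Portless, and port 1355 is simply taken from the example URLs.

```python
PROXY_PORT = 1355  # port shown in the example URLs above


def named_url(app: str, *subdomains: str, port: int = PROXY_PORT) -> str:
    """Build a stable named URL such as http://api.myapp.localhost:1355."""
    # Subdomains come first, then the app name, then the localhost suffix.
    host = ".".join([*subdomains, app, "localhost"])
    return f"http://{host}:{port}"


if __name__ == "__main__":
    print(named_url("myapp"))          # http://myapp.localhost:1355
    print(named_url("myapp", "api"))   # http://api.myapp.localhost:1355
    print(named_url("myapp", "docs"))  # http://docs.myapp.localhost:1355
```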

What it solves:

• Port conflicts across projects

• Cookie and storage bleeding between apps on different ports

• "Wait, which tab is which?" confusion in monorepos

• Git worktrees: each branch gets its own subdomain automatically

Works with Next.js, Vite, Express, Nuxt, React Router, Angular, Expo.

There's also an AI angle.

Coding agents were hardcoding ports and getting them wrong. Named URLs mean your agent always knows exactly where to go.

3.8k stars.

v0.5.2.

Actively maintained by Vercel Labs.

npm install -g portless

portless run next dev

That's it.

github.com/vercel-labs/po…

Chris Adamczyk retweeted

👏 Just shipped: the #GrafanaCON 2026 agenda!

Highlights

• Grafana 13

• Loki’s new storage engine

• Alloy's new #OTel engine

• Use cases from LEGO, DIGI4ECO, Irish Rail, PSF, and more

Tickets AND hands-on labs are 30% off. See the agenda & register: grafana.com/events/grafana…

Chris Adamczyk retweeted

Spain's 1000th Supercharger stall is now open 🇪🇸

Villagonzalo Pedernales, Spain (20 stalls)

tesla.com/en_eu/findus?l…

Chris Adamczyk retweeted

BOOM!

Apple’s Neural Engine Was Just Cracked Open, the Future of AI Training Just Changed, and the Zero-Human Company Is Already Testing It!

In a jaw-dropping open-source breakthrough, a lone developer has done what Apple said was impossible: full neural network training, including backpropagation, directly on the Apple Neural Engine (ANE). No CoreML, no Metal, no GPU. Pure, blazing ANE silicon.

The project (github.com/maderix/ANE) delivers a single transformer layer (dim=768, seq=512) in just 9.3 ms per step at 1.78 TFLOPS sustained with only 11.2% ANE utilization on an M4 chip. That’s the same idle chip sitting in millions of Mac minis, MacBooks, and iMacs right now.

Translation? Your desktop just became a hyper-efficient AI supercomputer.

The numbers are insane: M4 ANE hits roughly 6.6 TFLOPS per watt – 80 times more efficient than an NVIDIA A100. Real-world throughput crushes Apple’s own “38 TOPS” marketing claims. And because it sips power like a phone, you can train 24/7 without melting your electricity bill or the planet.
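
As a rough sanity check, the figures quoted in this post imply a few other numbers. The sketch below uses only the post's own claims as inputs (none of them independently verified) and does plain arithmetic on them; the variable names are illustrative.

```python
# Inputs: figures as quoted in the post, not measured here.
SUSTAINED_TFLOPS = 1.78      # sustained throughput
UTILIZATION = 0.112          # 11.2% ANE utilization
STEP_SECONDS = 9.3e-3        # 9.3 ms per training step
ANE_TFLOPS_PER_WATT = 6.6    # claimed efficiency
CLAIMED_RATIO = 80           # "80 times more efficient than an NVIDIA A100"

# If 1.78 TFLOPS corresponds to 11.2% utilization, the implied peak is:
implied_peak_tflops = SUSTAINED_TFLOPS / UTILIZATION

# Work done per step at the sustained rate, in GFLOP:
gflop_per_step = SUSTAINED_TFLOPS * 1e3 * STEP_SECONDS

# A100 efficiency that the 80x claim would imply, in TFLOPS per watt:
implied_a100_tflops_per_watt = ANE_TFLOPS_PER_WATT / CLAIMED_RATIO

if __name__ == "__main__":
    print(f"implied peak: {implied_peak_tflops:.2f} TFLOPS")            # 15.89
    print(f"work per step: {gflop_per_step:.2f} GFLOP")                 # 16.55
    print(f"implied A100: {implied_a100_tflops_per_watt:.4f} TFLOPS/W")  # 0.0825
```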

At The Zero-Human Company, we’re not waiting. We are testing this right now on real ZHC workloads. This is the missing piece we’ve been chasing for our Zero Human Company vision: reviving archived data into fully autonomous AI systems with zero human overhead.

This is world-changing.

For the first time, anyone with a Mac can fine-tune, train, or iterate massive models locally, privately, and at a fraction of the cost of cloud GPUs.

No more renting $40,000 A100 clusters. No more waiting in queues. No more massive carbon footprints.

Training costs that used to run into the tens or hundreds of thousands of dollars? Plummeting toward pennies on the dollar – mostly just the electricity your Mac was already using while it sat idle.

The AI revolution just moved from billion-dollar data centers to your desk.

WE WILL HAVE A NEW ZERO-HUMAN COMPANY @ HOME wage for equipped Macs, worth up to 100x more income for the owner!

We’re only at the beginning (single-layer today, full models tomorrow), but the door is wide open. Ultra-cheap, on-device training is here.

The future isn’t coming. It’s already running on your Mac.

Welcome to the Zero-Human Company era.
