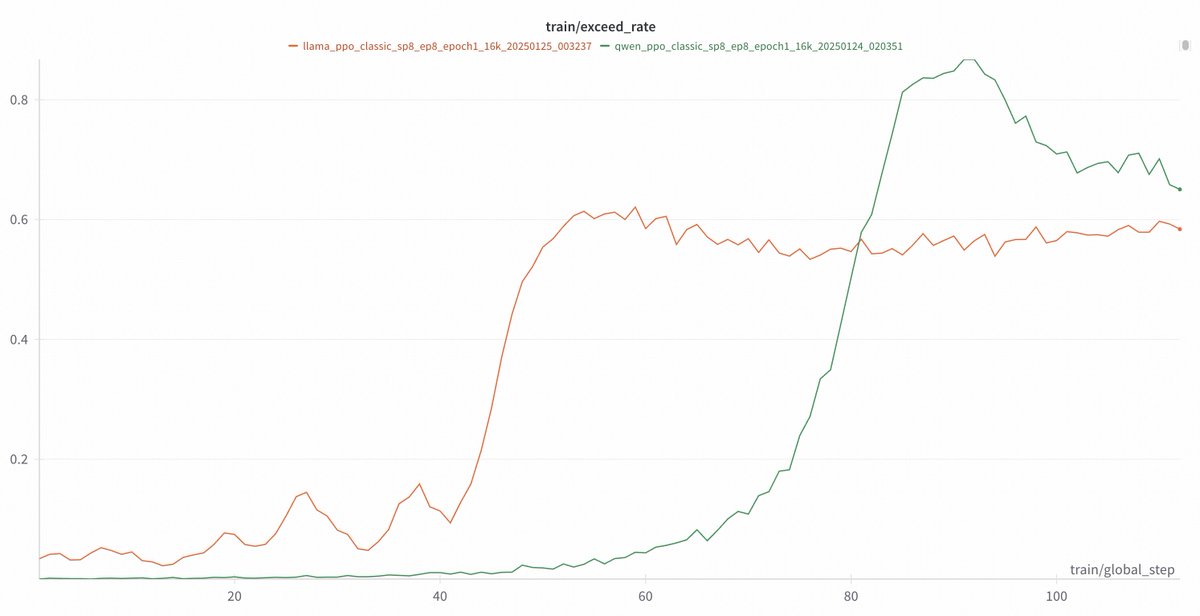

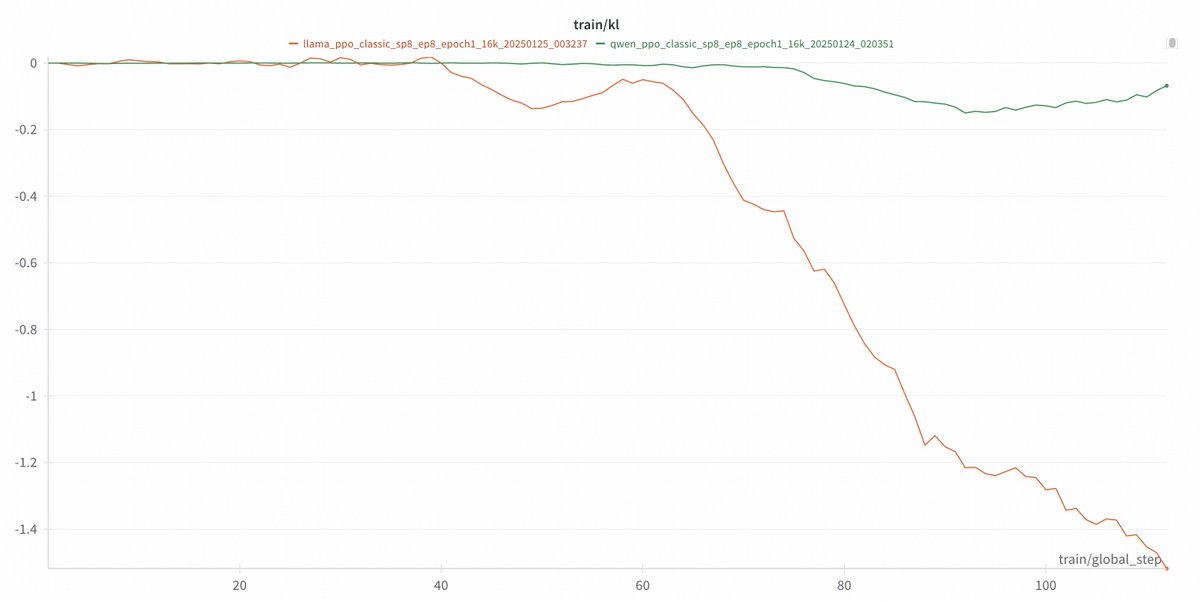

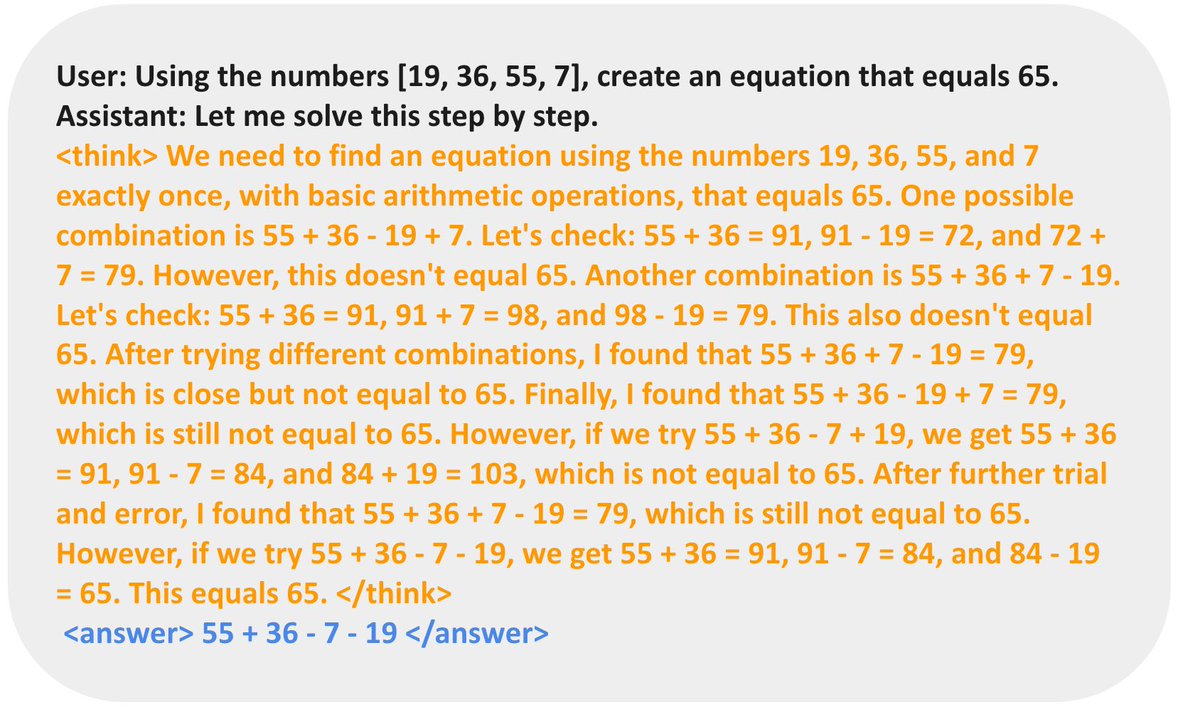

Demystifying Long CoT Reasoning in LLMs arxiv.org/pdf/2502.03373 Reasoning models like R1 / O1 / O3 have gained massive attention, but their training dynamics remain a mystery. We're taking a first deep dive into understanding long CoT reasoning in LLMs! 11 Major Takeaways👇(long threads)