Prompt


@engineerrprompt

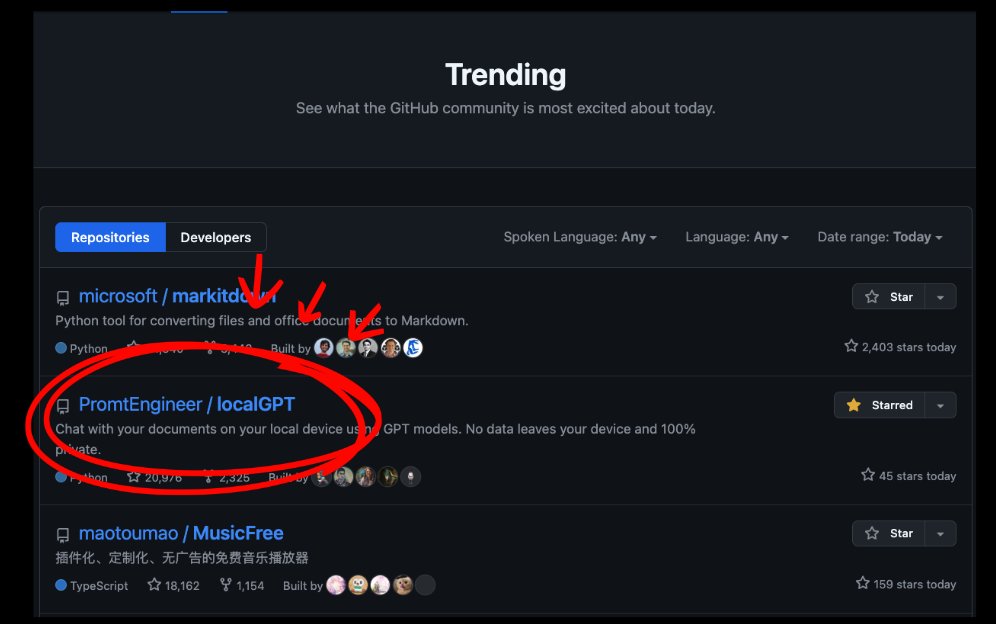

Building https://t.co/w2mi5UKxJv, Creator of localGPT | AI Educator

1 million context window: Now generally available for Claude Opus 4.6 and Claude Sonnet 4.6.

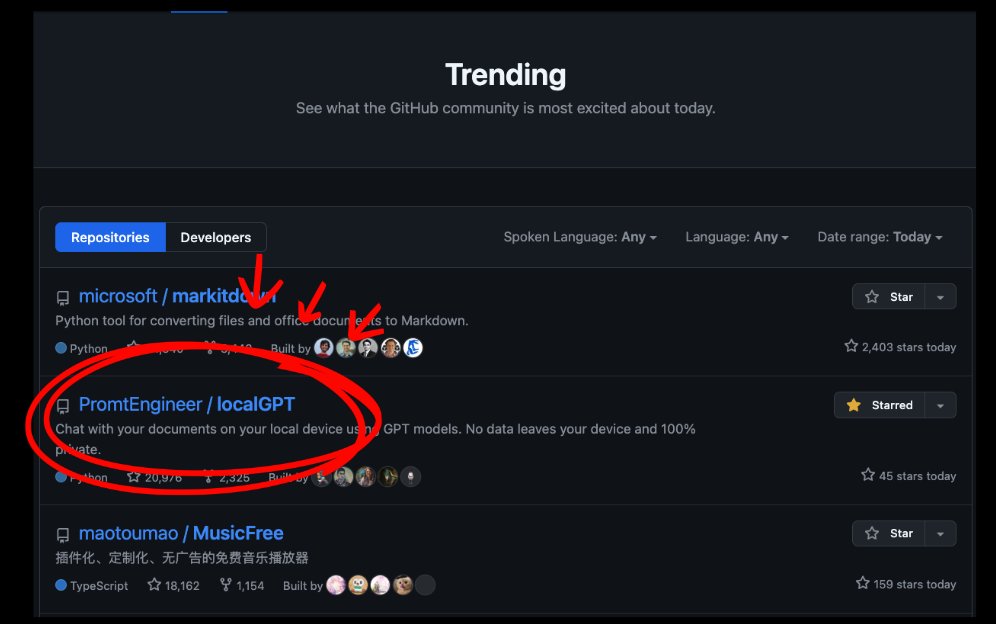

Announcing NVIDIA Nemotron 3 Super! 💚120B-12A Hybrid SSM Latent MoE, designed for Blackwell 💚36 on AAIndex v4 💚up to 2.2X faster than GPT-OSS-120B in FP4 💚Open data, open recipe, open weights Models, Tech report, etc. here: research.nvidia.com/labs/nemotron/… And yes, Ultra is coming!

We offered 5 people a Porsche 911 GT3 RS if they could get @WisprFlow to make a mistake. It's the fastest and most accurate AI voice dictation app, 3x more accurate than ChatGPT, Claude, or Siri. Today, we’re finally launching on Android. Download now: play.google.com/store/apps/det… As part of the launch, we’re giving away 6 months of Wispr Flow Pro for free. Like, retweet, and comment ‘Wispr Flow’ to get it. Enjoy. — Written with Wispr Flow

Introducing LM Link ✨ Connect to remote instances of LM Studio, securely. 🔐 End-to-end encrypted 📡 Load models locally, use them on the go 🖥️ Use local devices, LLM rigs, or cloud VMs Launching in partnership with @Tailscale Try it now: link.lmstudio.ai

Mercury 2 is live 🚀🚀 The world’s first reasoning diffusion LLM, delivering 5x faster performance than leading speed-optimized LLMs. Watching the team turn years of research into a real product never gets old, and I’m incredibly proud of what we’ve built. We’re just getting started on what diffusion can do for language.

We estimate that Claude Opus 4.6 has a 50%-time-horizon of around 14.5 hours (95% CI of 6 hrs to 98 hrs) on software tasks. While this is the highest point estimate we’ve reported, this measurement is extremely noisy because our current task suite is nearly saturated.

Gemini 3.1 Pro is here. Hitting 77.1% on ARC-AGI-2 (more than 2x the score of 3 Pro), it’s a step forward in core reasoning. With a more capable baseline, it’s great for super complex tasks like visualizing difficult concepts, synthesizing data into a single view, or bringing creative projects to life. We’re shipping 3.1 Pro across our consumer and developer products to bring this underlying leap in intelligence to your everyday applications right away. Rolling out now to:

- Developers in preview via the Gemini API in @GoogleAIStudio
- Enterprises in Vertex AI and Gemini Enterprise
- Everyone through the @Geminiapp and @NotebookLM