Fred D. | 一铭

@freddmts

- Agent coding addict : https://t.co/ZwbyJ8ZbKQ - Co-organizer, Vibe Coding Community Paris : https://t.co/y20yrcs1nn

Vibe Coding Paris Meetup #2: Controlled Autonomy, on 14/04 at @YesWeScale 🚀 🔹 Talk #1: @titouan_benoit (@DotfileApp): How do you adapt your DX for autonomous agents? Architecting systems where the bottleneck is no longer writing code but the execution cycle. 🔹 Talk #2: @freddmts (@Cometh): Moving beyond manual testing: setting up automated benchmarks and evaluating prompt resilience with @promptfoo. meetup.com/vibe-coding-co…

🔊Introducing Voxtral TTS: our new frontier open-weight model for natural, expressive, and ultra-fast text-to-speech 🎭Realistic, emotionally expressive speech. 🌍Supports 9 languages and accurately captures diverse dialects. ⚡Very low latency for time-to-first-audio. 🔄Easily adaptable to new voices

Zhilin at GTC: Introducing Attention Residuals. Learning selective memory, rather than mechanically accumulating everything, is the beauty of attention. Many of you have probably read Attention Is All You Need, the 2017 Transformer paper that brought “human-like” attention into the model’s field of view. From that point on, models no longer simply read everything in a mechanical way. Instead, they began to develop a sense of what matters more and what matters less across the text, choosing to retain the more important information. Recently, Kimi applied this idea of attention to the temporal dimension, then rotated it 90 degrees into the model’s depth dimension. This allows the model to have attention not only over time, but also throughout the process of information transmission across layers, giving it a more intelligent way to understand and process information.
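To make the "attention over depth" idea in the post above concrete, here is a toy sketch in plain Python. It is an illustration under assumptions, not Kimi's implementation: the function names, the single query vector, and the dot-product scoring are all hypothetical choices. It only shows the contrast the post draws, namely weighting earlier layers' outputs selectively with softmax instead of summing them all equally.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def depth_attention(layer_outputs, query):
    """Mix the outputs of earlier layers using attention weights.

    layer_outputs: list of vectors, one per earlier layer (hypothetical input).
    query: a vector of the same size, standing in for a learned query
           (an assumption made for this sketch).
    Returns (mixed_vector, weights).
    """
    # Score each layer's output by its dot product with the query.
    scores = [sum(q * h for q, h in zip(query, out)) for out in layer_outputs]
    weights = softmax(scores)
    dim = len(query)
    # Weighted sum across layers, computed one coordinate at a time.
    mixed = [
        sum(w * out[i] for w, out in zip(weights, layer_outputs))
        for i in range(dim)
    ]
    return mixed, weights


if __name__ == "__main__":
    outputs = [[1.0, 0.0], [0.0, 1.0]]
    mixed, weights = depth_attention(outputs, query=[2.0, 0.0])
    print("weights per layer:", weights)
    print("mixed representation:", mixed)
```

With an all-zero query the weights are uniform, which behaves like an averaged version of plain accumulation; a query aligned with one layer's output shifts most of the weight onto that layer.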

One common issue with personalization in all LLMs is how distracting memory seems to be for the models. A single question from 2 months ago about some topic can keep coming up as some kind of a deep interest of mine with undue mentions in perpetuity. Some kind of trying too hard.

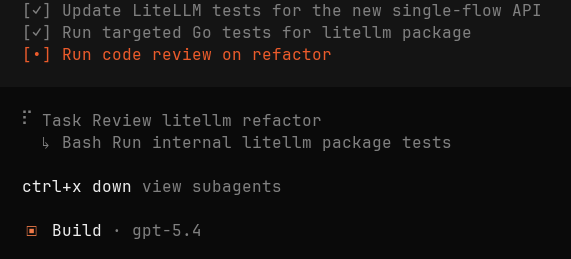

LiteLLM HAS BEEN COMPROMISED, DO NOT UPDATE. We just discovered that LiteLLM PyPI release 1.82.8 has been compromised: it contains litellm_init.pth with base64-encoded instructions to send all the credentials it can find to a remote server and self-replicate. Link below.
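A quick way to check a local environment against the two details named in the post above (version 1.82.8 and a file called litellm_init.pth) might look like the sketch below. It uses only the Python standard library. The helper names are hypothetical, the version and filename are taken from the post as reported rather than independently verified, and a clean result is not proof that an environment is safe.

```python
import site
from importlib import metadata
from pathlib import Path

# Values as reported in the post; not independently verified here.
REPORTED_BAD_VERSION = "1.82.8"
REPORTED_PTH_NAME = "litellm_init.pth"


def find_pth_files(directories, filename=REPORTED_PTH_NAME):
    """Return paths of files named `filename` directly inside the given directories."""
    hits = []
    for directory in directories:
        candidate = Path(directory) / filename
        if candidate.is_file():
            hits.append(candidate)
    return hits


def installed_litellm_version():
    """Return the installed litellm version string, or None if it is not installed."""
    try:
        return metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return None


def candidate_site_dirs():
    """Collect the site-packages directories of the current interpreter."""
    dirs = list(site.getsitepackages())
    dirs.append(site.getusersitepackages())
    return dirs


if __name__ == "__main__":
    version = installed_litellm_version()
    print("litellm version:", version or "not installed")
    if version == REPORTED_BAD_VERSION:
        print("WARNING: this is the release reported as compromised.")
    for path in find_pth_files(candidate_site_dirs()):
        print("WARNING: found", path)
```

The check reads package metadata instead of importing litellm, so it does not run the package's own code. It does not prevent `.pth` files from executing at interpreter startup, so on a machine that may be affected it is safer to inspect the site-packages directory from a separate, clean Python installation.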

M2.7 open weights coming in ~2 weeks. Still actively iterating: just updated to a new version yesterday, noticeably better on OpenClaw.

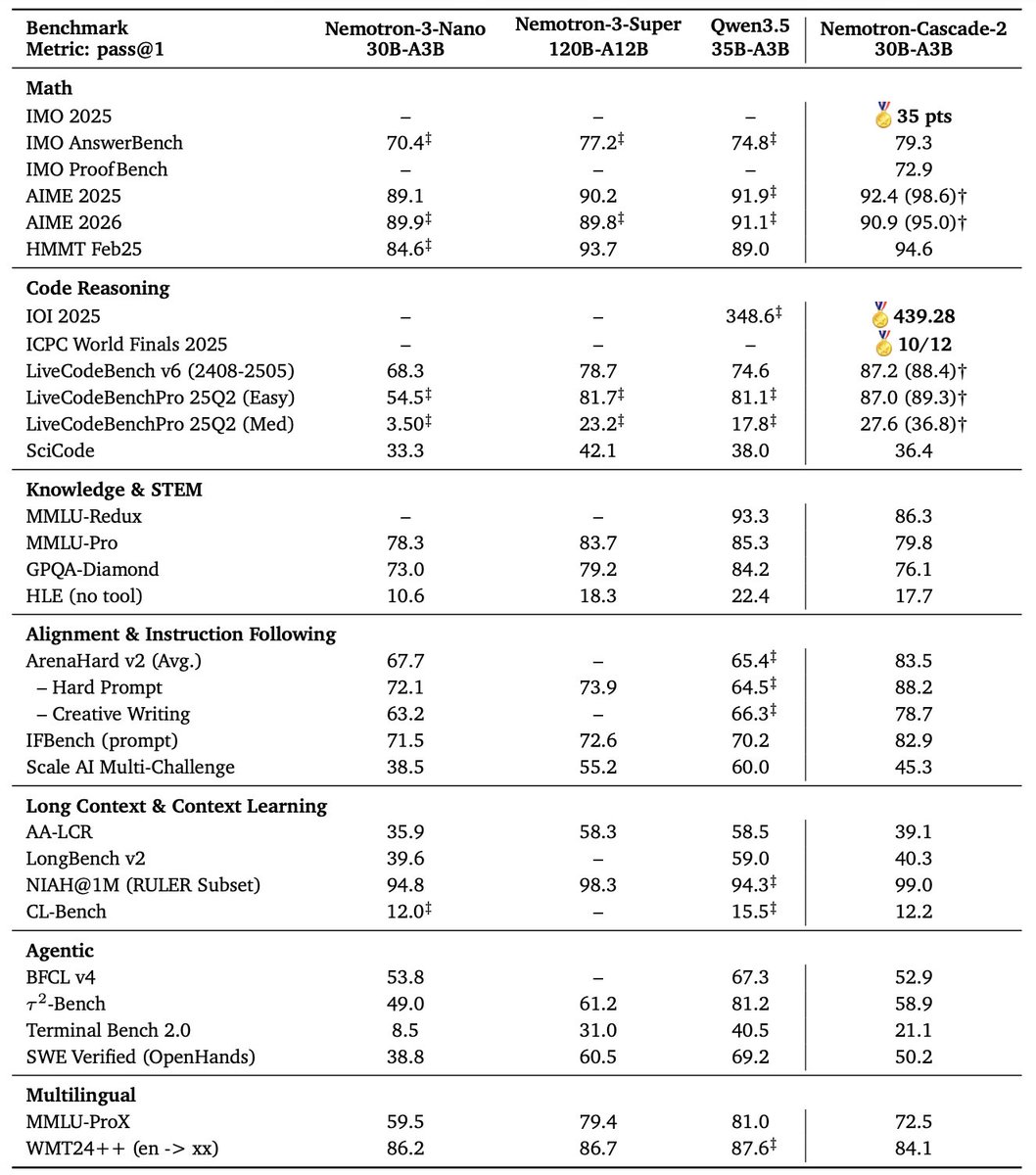

🚀 Introducing Nemotron-Cascade 2 🚀 Just 3 months after Nemotron-Cascade 1, we’re releasing Nemotron-Cascade 2: an open 30B MoE with 3B active parameters, delivering best-in-class reasoning and strong agentic capabilities. 🥇 Gold Medal-level performance on IMO 2025, IOI 2025, and ICPC World Finals 2025: • Capabilities once thought achievable only by frontier proprietary models (e.g. Gemini Deep Think) or frontier-scale open models (i.e. DeepSeek-V3.2-Speciale-671B-A37B). • Remarkably high intelligence density with 20× fewer parameters. 🏆 Best-in-class across math, code reasoning, alignment, and instruction following: • Outperforms the latest Qwen3.5-35B-A3B (2026-02-24) and even larger Qwen3.5-122B-A10B (2026-03-11). 🧠 Powered by Cascade RL + multi-domain on-policy distillation: • Significantly expand Cascade RL across a much broader range of reasoning and agentic domains than Nemotron-Cascade 1, while distilling from the strongest intermediate teacher models throughout training to recover regressions and sustain gains. 🤗 Model + SFT + RL data: 👉 huggingface.co/collections/nv… 📄 Technical report: 👉 research.nvidia.com/labs/nemotron/…

Love this place. Just noticed someone I'm following is called: "[Chinese] weight decay [Chinese]" lol

🧮 Today, we release Leanstral - the first open-source code agent for Lean 4, an efficient proof assistant capable of expressing complex mathematical objects and software specifications.

A quick teaser for the game I’m making; it's still in its early stages. #indiedev #boomershooter #gameDev