guitarstring

12 posts

Are you a researcher at OAI/Anthropic/etc. who is tired of the overhiring, the org-chart chaos, and the lowered talent bar, wants to move to NYC, or just wants to do something different? Email me, DM me, mail a postcard. We've got a new datacenter full of B200s and a tight team, and we're very successful.

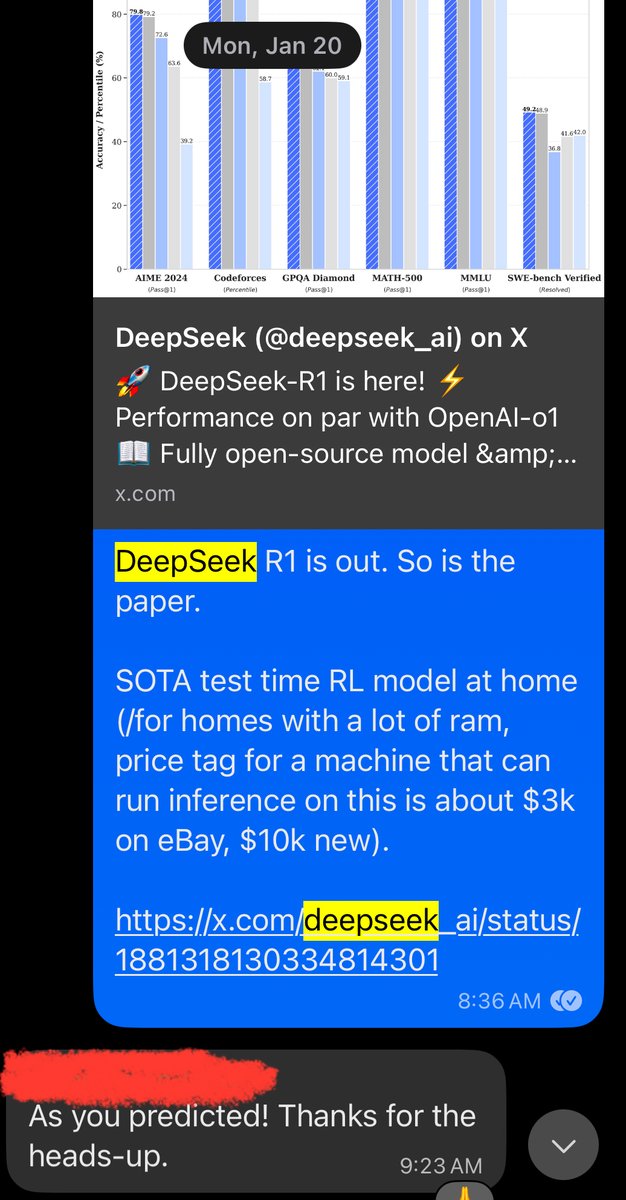

@teortaxesTex R1's success had relatively little to do with its actual capabilities and a lot to do with media hype. Not sure v2 will be able to replicate that hype even with very strong benchmarks and pricing.

RL/RLHF/LLM folks, is my reasoning correct? If we have two trajectories with sparse rewards (one with reward 0, one with reward 1), a single REINFORCE update step is equivalent to an SFT step with cross-entropy on the good trajectory, the one with reward 1. Effectively, both methods push towards a policy that assigns probability 1 to the good trajectory.
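A quick way to sanity-check this is to compare the two gradients numerically. The sketch below is only an illustration under stated assumptions: a toy policy with an independent softmax per time step (the `theta` table, the trajectory values, and all function names are hypothetical), REINFORCE in its plain form with the update direction sum_i (R_i - b) * grad log pi(traj_i), and the two trajectories treated as fixed samples. With no baseline (b = 0) the reward-0 trajectory drops out and the direction matches the negative cross-entropy gradient on the good trajectory. With a baseline such as the mean reward 0.5, the bad trajectory gets a negative weight and the match no longer holds.

```python
import numpy as np

def softmax(z):
    # Numerically stable softmax over the last axis.
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def grad_log_prob(theta, traj):
    """Gradient of log pi(traj) w.r.t. theta for a per-step softmax policy.

    theta has shape (T, V): one row of logits per time step (toy assumption).
    """
    probs = softmax(theta)
    onehot = np.zeros_like(theta)
    onehot[np.arange(len(traj)), traj] = 1.0
    return onehot - probs

def reinforce_grad(theta, trajs, rewards, baseline=0.0):
    """Plain REINFORCE ascent direction: sum_i (R_i - b) * grad log pi(traj_i)."""
    return sum((r - baseline) * grad_log_prob(theta, t)
               for t, r in zip(trajs, rewards))

def sft_grad(theta, traj):
    """Gradient of the cross-entropy loss -log pi(traj)."""
    return -grad_log_prob(theta, traj)

rng = np.random.default_rng(0)
theta = rng.normal(size=(4, 5))   # 4 time steps, 5 possible tokens
good = np.array([1, 3, 0, 2])     # hypothetical trajectory with reward 1
bad = np.array([4, 4, 1, 0])      # hypothetical trajectory with reward 0

g_sft = sft_grad(theta, good)

# No baseline: the reward-0 trajectory contributes nothing.
g_rl = reinforce_grad(theta, [bad, good], [0.0, 1.0])
same_without_baseline = bool(np.allclose(g_rl, -g_sft))

# Mean-reward baseline: the bad trajectory is now pushed down as well.
g_rl_b = reinforce_grad(theta, [bad, good], [0.0, 1.0], baseline=0.5)
same_with_baseline = bool(np.allclose(g_rl_b, -g_sft))

print(same_without_baseline, same_with_baseline)
```

So in this toy setup the claim holds up to a constant factor (for example the 1/N from averaging over samples) when no baseline or advantage normalization is used. In practice the trajectories are sampled from the current policy, so the match is per step on those fixed samples rather than across a whole training run.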

Dario Amodei says 2026-2027 is the critical window in AI: if you're ahead then, the models start getting better than humans at everything, including AI design and using AI to make better AI. So, he argues, export controls that stop DeepSeek from keeping up with US companies are worth continuing.