Se June Joo

155 posts

@joocjun

🤖🦾Research Engineer @RLWRLD_ai |M.S @kaist_ai| B.S in Math & CS @yonsei_u

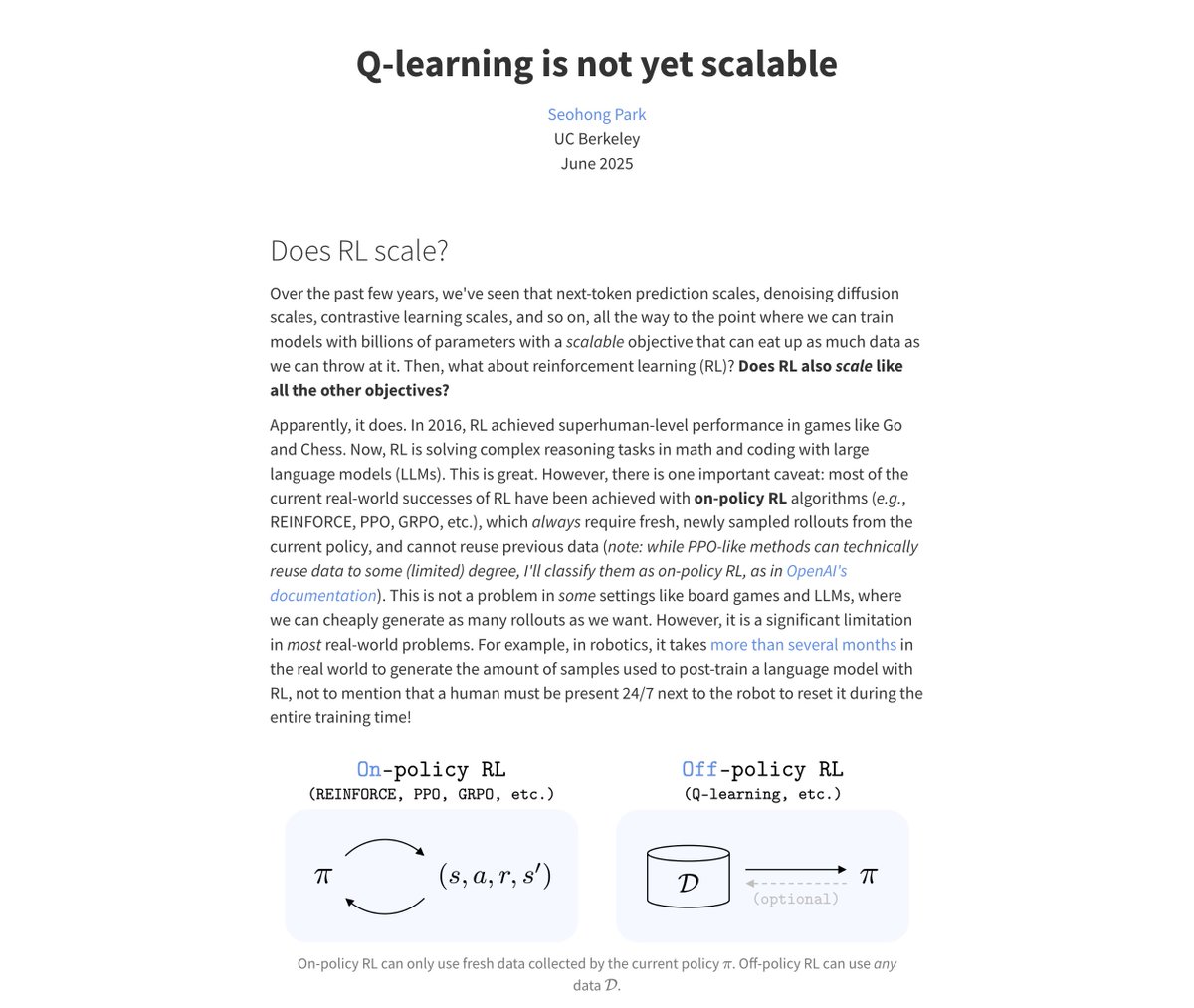

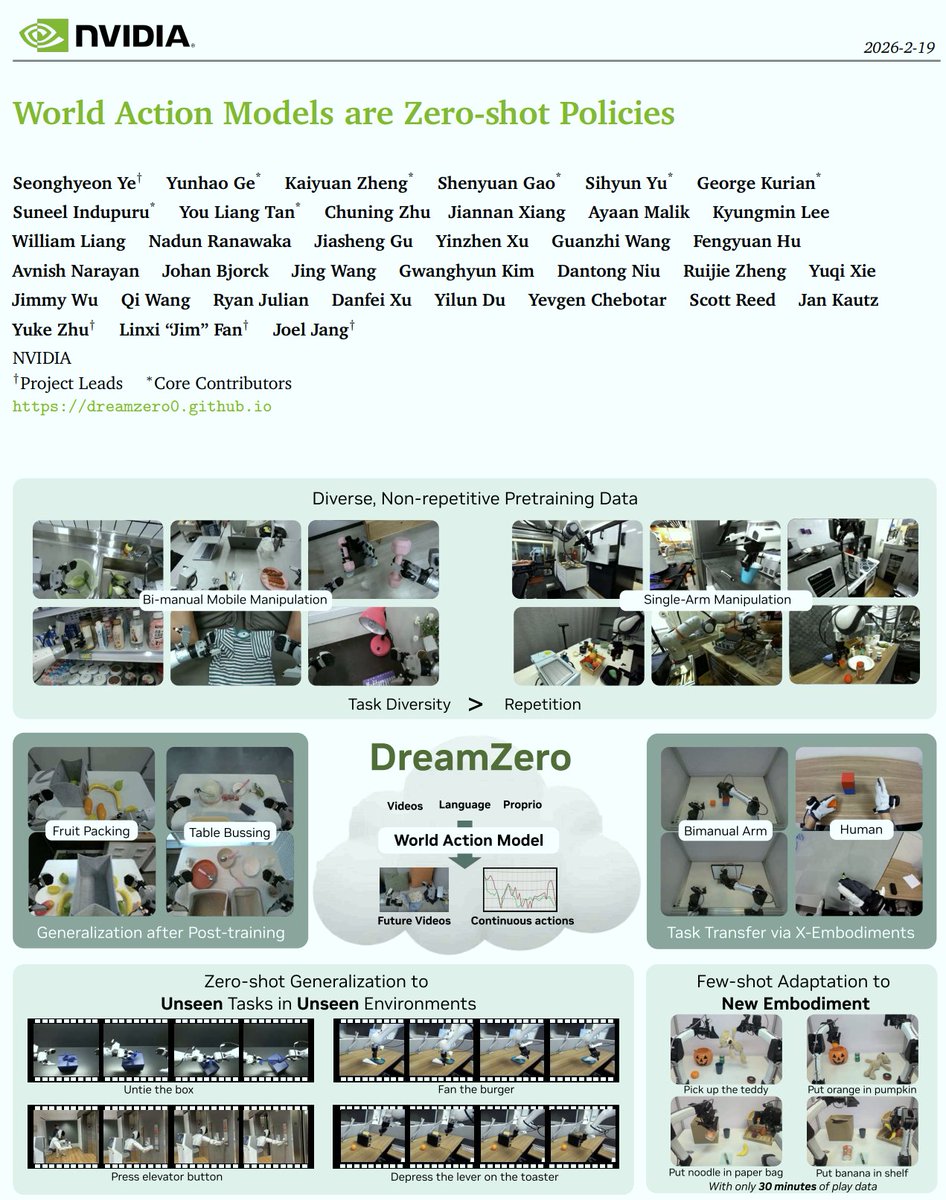

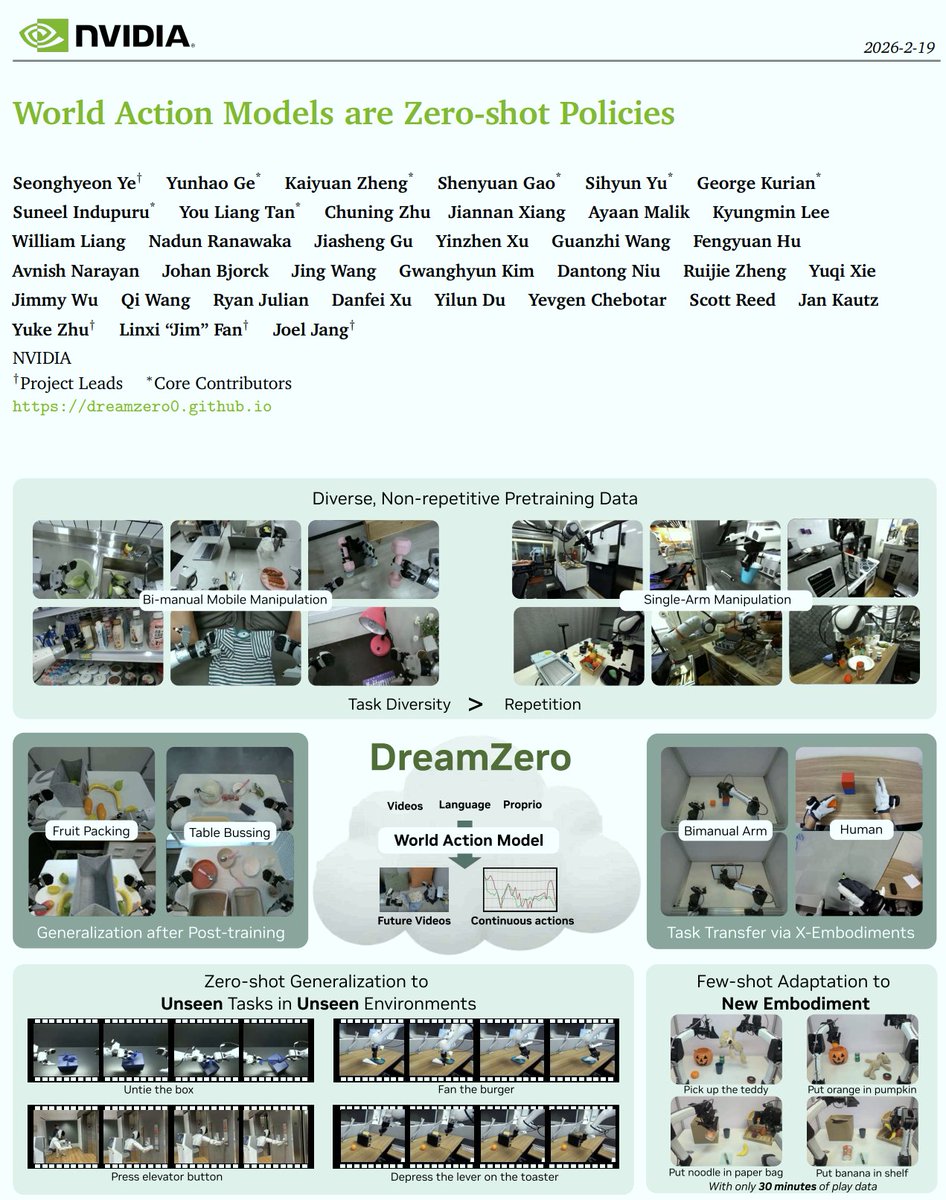

VLAs (from VLMs) ❌ => WAMs (from Video Models) ✅ Why WAMs? 1️⃣ World Physics: VLMs know the internet, but Video Models implicitly model the physical laws essential for manipulation. 2️⃣ The "GPT Direction": VLAs are like BERT (rely heavily on task-specific post-training). WAMs are like GPT (pre-train & prompt), unlocking incredible zero-shot transfer! What I want to see in 2026: 📈 Scaling Laws: We will see much clearer scaling laws for robotics compared to VLAs. 🤝 Human-to-Robot Transfer: Unlocking massive transfer capabilities using video as a shared representation space. 🤖 Zero-Shot Mastery: Moving from short-horizon tasks to long-horizon, dexterous manipulation without task-specific demonstrations. We recently open-sourced the checkpoints, training and inference code. Dive into the research! 👇 📄 Paper: arxiv.org/abs/2602.15922 💻 Code: github.com/dreamzero0/dre… 🤗 HF: huggingface.co/GEAR-Dreams/Dr…

In Seoul tonight for the @OpenAI Korea launch event. Sora installations, robot high fives, imagegen photobooths, and the amazing Korean founders and artists behind them all.