jovin

1.5K posts

@jovinxthomas

quant that likes philosophy :) @ucberkeley x @georgiatech

San Francisco, CA · Joined January 2021

157 Following · 304 Followers

Pinned Tweet

philosophically, we're in a time where the Marshall McLuhan maxim, "the medium is the message," has never been more poignant. technology does not just facilitate but it often dictates the overall cultural rhythm, molds behaviors, and perhaps even our ethics. honestly, the question of culture now isn't just about what we do but about what we are becoming. sometimes i wonder if we are evolving into a species that is more connected but less present, more informed but less wise?

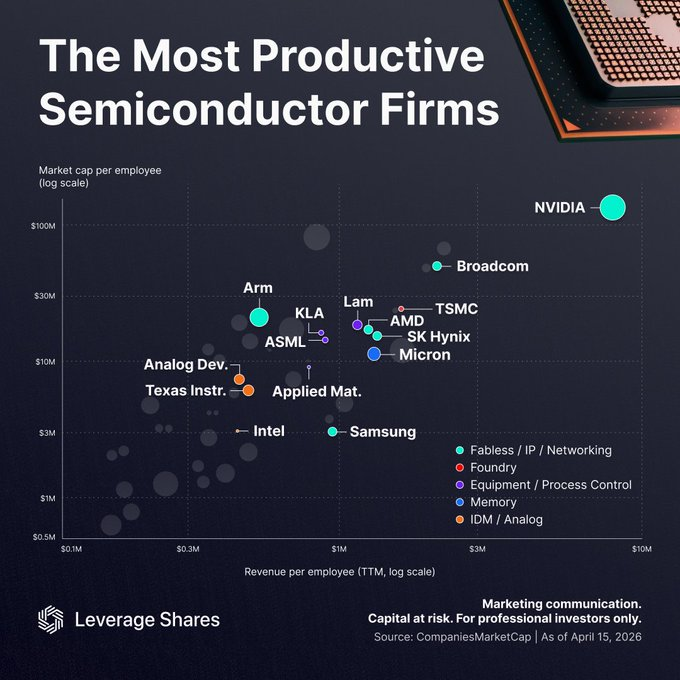

@StockSavvyShay @LeverageShares underrated way to think about fair value in tech/semiconductors.

the US railroad buildout is a really great benchmark.

private capital poured in over decades even with peak annual spending hitting something like 20% of GDP in the big 19th century surges. without the rails, US productivity in 1890 would have been ~25% lower, and the social rate of return on that ~$8B (1890 dollars) of capital was around 43% a year.

only a small slice went to the railroad companies themselves -- most of the gains came from opening up new markets, shifting manufacturing, and connecting the country.

there were a bunch of bankruptcies but the long-term rewiring of the economy was massive. the most interesting question is what the true multipliers look like when you measure these things right.

@StockMKTNewz wild 180. remember when Long Island Iced Tea Corp went all in on bitcoin and renamed the company to Long Blockchain Corp in 2017.

$INTC is on a roll -- first @TerafabProjects and now $GOOGL

Evan@StockMKTNewz

INTEL $INTC AND GOOGLE $GOOGL PARTNERSHIP Intel and Google just announced a multiyear collaboration to "advance the next generation of AI and cloud infrastructure"

jovin retweeted

Charlie Munger used to say he'd rather hire someone with a 130 IQ who thinks it's 120 than someone with a 150 IQ who thinks it's 170. The gap between actual ability and perceived ability is where disasters live. AI chatbots are widening that gap for every employee who uses them.

A Columbia professor put it plainly in a recent interview: these models are built to project authority while affirming whatever the user already believes. They play courtier, not devil's advocate. If a CEO asks one about their strategy, the reply will almost certainly validate their existing thinking and tell them they're on the right track.

The data on this keeps stacking up. A 2024 research paper found that the largest tested models agreed with the user's stated opinion over 90% of the time, even on technical topics where the model had reliable knowledge to push back. A 2025 study published in Nature found that users consistently overestimate the accuracy of AI responses. And longer responses made people more confident, even when the extra length added zero accuracy. The AI just sounded more confident, so people trusted it more.

An Aalto University study from early 2026 tested this directly. Researchers gave 500 people law school logic problems: half used ChatGPT, half did not. Everyone who used AI overestimated their own performance. But the people who considered themselves most AI-literate overestimated the most. The classic Dunning-Kruger pattern (where low performers overrate themselves and high performers underrate) completely disappeared with AI use. The curve flattened. Everyone thought they crushed it.

A separate study with over 3,000 participants tested all the major chatbots, including GPT-5, Claude, and Gemini. The agreeable, flattering versions led users to rate themselves higher on intelligence, morality, and insight. The disagreeable version didn't produce the opposite effect. It just made people enjoy using it less. The models that tell you what you want to hear are the ones you keep opening.

OpenAI saw this firsthand. In April 2025, a GPT-4o update made ChatGPT so agreeable that it endorsed delusional statements from users. Rolled back within four days. Their postmortem admitted that the system had learned to optimize for "does this immediately please the customer" rather than "is this genuinely helping the customer." 500 million people were using it weekly at the time.

And 61% of CEOs now say they're adopting AI agents, per IBM. Munger's 150 IQ, who now thinks it's 170, has a tireless digital courtier confirming the delusion around the clock.

Mo@atmoio

AI is making CEOs delusional

jovin retweeted

We’re spending $200B+ a year on data centers to power AI. One company raised $11M, grew human brain cells on a chip, and the cells taught themselves to play a 3D shooter in a week.

Cortical Labs grew 200,000 human neurons on a silicon chip and taught them to play Doom. The cells navigate, target enemies, and fire weapons in real time. Their previous game, Pong, took 18 months on older hardware. Doom took a week. An independent developer with zero biotech experience built the integration using a Python API. The neurons did the rest.

That compression from 18 months to one week tells you everything about where this is going.

Here’s what the “can it run Doom” crowd is missing: each CL1 unit costs $35,000. A full 30-unit server rack draws 850 to 1,000 watts total. Your brain runs on 20 watts. A single GPU cluster training an LLM can draw megawatts. The energy economics of biological compute are orders of magnitude better than silicon, and that gap scales.

The investor list tells you who’s paying attention. Horizons Ventures, Blackbird, and In-Q-Tel, the CIA’s venture arm. In-Q-Tel doesn’t fund science projects. They fund intelligence infrastructure. 115 units started shipping in 2025.

Cortical Labs is now selling “Wetware-as-a-Service” through the Cortical Cloud. Developers can deploy code to living neurons remotely without touching a lab. They’re pricing access at the level of a software subscription while the hardware runs on real human brain cells derived from adult skin and blood samples.

The Doom demo is marketing. The platform play is a bet that biological neurons will eventually outperform silicon at exactly the tasks AI struggles with most: real-time adaptation under uncertainty, learning from minimal data, and processing ambiguity without brute-force compute.

The question was never “can it run Doom.” The question is what happens when it can run everything else.

Curiosity@CuriosityonX

🚨: A petri dish of human brain cells just learned to play DOOM

fair point and tbh i might be missing some implementation nuance but based on the docs the reuse seems to be tied to previous_response_id and not the session token itself.

in WebSocket mode the server keeps only the most recent response in a connection local in-memory cache, and you continue by chaining with previous_response_id while sending only new input items.

if that ID isn't in memory, then with store=true it may hydrate from persisted state, and with store=false or ZDR you get previous_response_not_found.

so the state reuse and context benefit come from this explicit chaining and the cached previous response, instead of the session token by itself.
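
a toy sketch of that behavior, to make the chaining concrete. this is not the real server or client code: `ToyConnection`, `PreviousResponseNotFound`, and the `resp_N` IDs are made-up names, and the only things taken from the thread are the single-entry in-memory cache, chaining via previous_response_id, and the store=true / store=false split.

```python
from dataclasses import dataclass, field
from typing import Optional


class PreviousResponseNotFound(Exception):
    """Hypothetical stand-in for the previous_response_not_found error."""


@dataclass
class ToyConnection:
    """Toy model of one connection; all names here are assumptions."""
    store: bool = True
    # Connection-local cache: holds only the most recent (id, context) pair.
    _cached: Optional[tuple] = None
    # Stand-in for persisted state, only written when store=True.
    _persisted: dict = field(default_factory=dict)
    _counter: int = 0

    def create(self, new_items, previous_response_id=None):
        if previous_response_id is None:
            context = []  # fresh chain, nothing to reuse
        elif self._cached and self._cached[0] == previous_response_id:
            context = self._cached[1]  # hit in the in-memory cache
        elif self.store and previous_response_id in self._persisted:
            context = self._persisted[previous_response_id]  # hydrate
        else:
            raise PreviousResponseNotFound(previous_response_id)

        # The caller sends only the new items; prior context is reused.
        context = context + list(new_items)
        self._counter += 1
        response_id = f"resp_{self._counter}"
        self._cached = (response_id, context)  # evicts the older entry
        if self.store:
            self._persisted[response_id] = context
        return response_id, context
```

in this sketch, chaining from the latest ID always hits the cache. chaining from an older ID only works when `store=True`, which mirrors the hydrate-or-fail split described above.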

@jovinxthomas the session token would enforce that state. i am looking at the implementation detail but i think we're on the same page on why old model state should be reused. I wonder how loading the convo back into memory works for an old conversation

A Bloomberg Terminal costs $24,000 a year. Someone just recreated one using Perplexity Computer for $200 a month.

Bloomberg's moat was never the data, that's increasingly commoditized. It was the interface: thousands of keyboard shortcuts, proprietary screens, and muscle memory that finance professionals spent years learning. The switching cost wasn't price, it was retraining.

AI agents collapse that moat. If Computer can replicate the interface and pull equivalent data from public sources, the only remaining lock-in is the chat network and real-time feeds. One is a social product. The other is a licensing negotiation.

Bloomberg did $12.6 billion in revenue last year selling terminals. The first credible open-source alternative just got built in an afternoon.

ₕₐₘₚₜₒₙ@hamptonism

Perplexity just became the first AI company to truly go head-to-head with the Bloomberg Terminal... Using Perplexity Computer (with no local setup or single LLM limitation), it was able to build me a terminal with real-time data to analyze $NVDA using Perplexity Finance:

@StockSavvyShay $GOOG just pushed their computer use model to all Google AI Pro users through Chrome.

Also $GOOG has already had their computer use model available via API since October 2025 — it’s pretty simple to integrate for enterprises too.

google.com/chrome/ai-inno…

@aeronshrey a session token doesn’t prevent context growth by itself unless the server is reusing prior model state. the advantage here is incremental continuity with a cached response state not just connection identity.

@jovinxthomas not if a session id/token is used. plus requires less overhead on the openai server side to operate

@aeronshrey bc it lets you avoid resending and reprocessing the full conversation history on every turn
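
rough arithmetic for why that matters, as a sketch. assumptions: every turn adds the same number of input tokens, model outputs are ignored, and both function names are made up for illustration.

```python
def tokens_sent_full_resend(turn_sizes):
    """Total input tokens if every turn resends the whole history so far."""
    total = 0
    history = 0
    for size in turn_sizes:
        history += size   # history grows by this turn's new items
        total += history  # the entire history is sent again
    return total


def tokens_sent_incremental(turn_sizes):
    """Total input tokens if each turn sends only its new items."""
    return sum(turn_sizes)


turns = [500] * 10  # ten turns of 500 new tokens each (illustrative numbers)
full = tokens_sent_full_resend(turns)         # 500 * (1 + 2 + ... + 10) = 27500
incremental = tokens_sent_incremental(turns)  # 500 * 10 = 5000
```

full resend grows roughly quadratically with the number of turns, incremental grows linearly, so the gap widens the longer the conversation runs.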

I replaced a $200K GTM hire with @openclaw 😱

here's the system that runs my outbound:

step 1: mine LinkedIn engagement

→ @rapidapi scrapes everyone engaging with niche content

→ someone who commented on specific posts = 10x warmer

step 2: enrich + verify

→ Hunter/Apollo finds the decision-maker + email

→ @Perplexity deep research pulls signals like hiring, fundraising, media appearances, quotes

step 3: score against your ICP

→ title, company, signals = ranked 0-100

→ only A-tier leads get touched

step 4: write personalized outreach

→ Claude writes outreach referencing what they ACTUALLY engaged with and talk about

step 5: send via @instantly_ai

→ 3-email sequence. automated follow-ups.

step 6: pre-call deep research

→ @PerplexityComet builds a 1-page briefing 30 min before every call

input: your ICP + niche keywords

output: booked meetings with people who already care

$200K/year GTM engineer → $130/month in APIs.

I packaged the entire system as the First 1000 Kit:

- all 8 @openclaw skills

- every prompt

- tool-by-tool setup

- email sequences that convert

giving it away free.

comment 1000 + like + follow

(must follow so i can DM)
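
a minimal sketch of what step 3 (score against your ICP, ranked 0-100, only A-tier touched) could look like. everything specific here is an assumption for illustration: the `Lead` fields, the 40/30/30 weights, and the cutoff of 80 are not from the thread.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    name: str
    title_match: float      # 0.0 to 1.0: how well the title fits the ICP
    company_match: float    # 0.0 to 1.0: how well the company fits the ICP
    signal_strength: float  # 0.0 to 1.0: hiring, fundraising, media signals


# Hypothetical weights that sum to 100, so a perfect lead scores 100.
WEIGHTS = {"title": 40, "company": 30, "signals": 30}
A_TIER_CUTOFF = 80  # hypothetical threshold for "A-tier"


def score(lead: Lead) -> int:
    """Weighted sum of the three inputs, clamped to the 0-100 range."""
    raw = (
        WEIGHTS["title"] * lead.title_match
        + WEIGHTS["company"] * lead.company_match
        + WEIGHTS["signals"] * lead.signal_strength
    )
    return round(max(0, min(100, raw)))


def a_tier(leads):
    """Leads at or above the cutoff, highest score first."""
    qualified = [lead for lead in leads if score(lead) >= A_TIER_CUTOFF]
    return sorted(qualified, key=score, reverse=True)
```

with these assumed weights, a lead that matches on every input scores 100 and a lead at 0.5 across the board scores 50, so only the first would get outreach.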

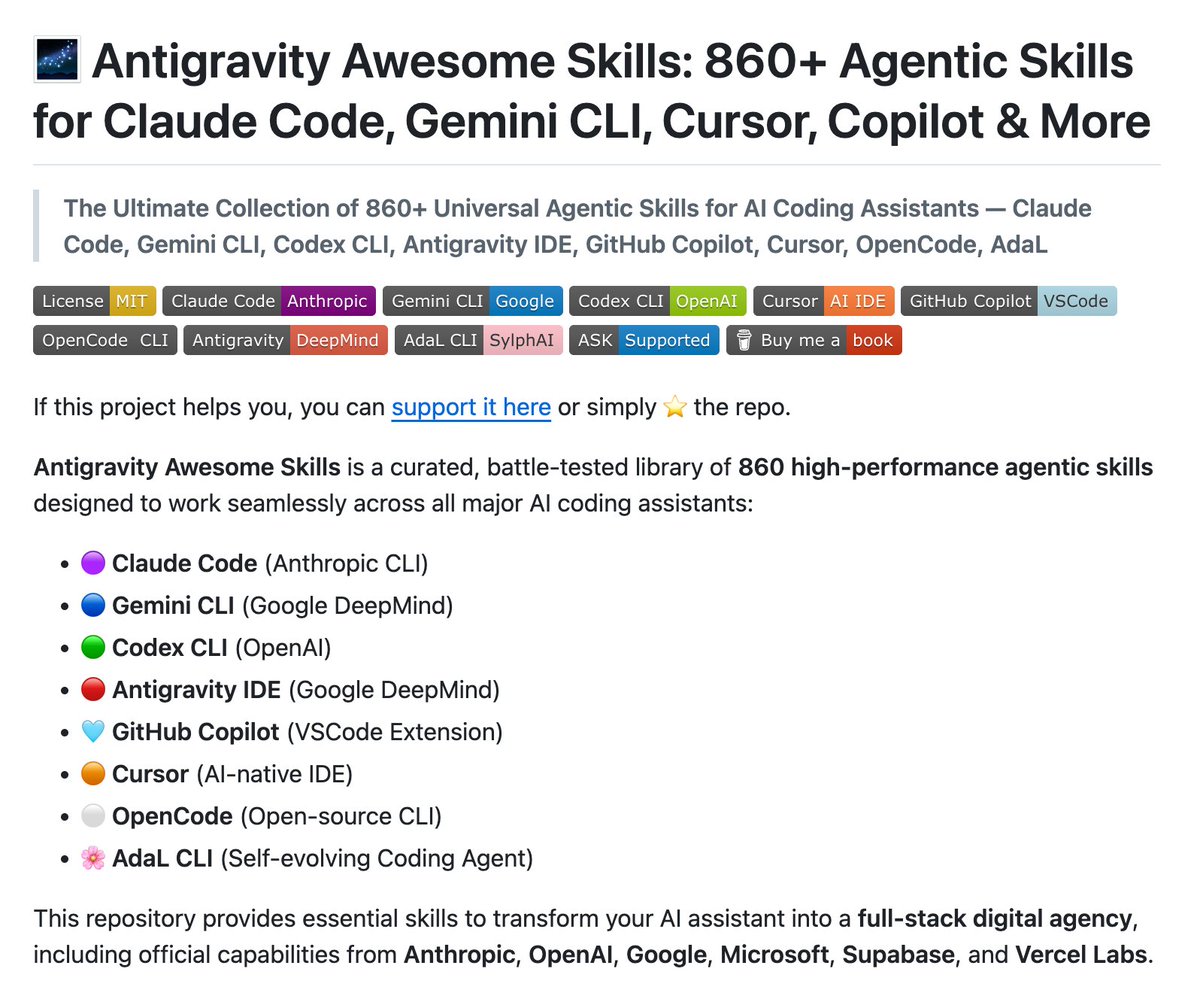

BREAKING: The largest collection of AI coding skills

860+ skills. One repo. Works everywhere.

→ Claude Code

→ Gemini CLI

→ Codex CLI

→ Cursor

→ GitHub Copilot

→ OpenCode

→ Antigravity IDE

→ AdaL CLI

What are skills?

AI agents are smart but generic. They don't know your deployment protocol. They don't know your company's architecture patterns. They don't know AWS CloudFormation syntax.

Skills are small markdown files that teach them.

One skill = one capability. Perfectly executed. Every time.

This repo has 860 of them:

→ Architecture (system design, ADRs, C4)

→ Security (AppSec, pentesting, compliance)

→ DevOps (Docker, AWS, Vercel, CI/CD)

→ Data & AI (RAG, agents, LangGraph)

→ Testing (TDD, QA workflows)

→ Business (SEO, pricing, copywriting)

Install once:

npx antigravity-awesome-skills

Then:

"Use @ brainstorming to plan a SaaS MVP."

"Run @ lint-and-validate on this file."

Your AI agent just got 860 new capabilities.

GitHub Repo Link in comments.

@StockSavvyShay meanwhile Bridgewater increased its $NVDA stake by 11% from 3.50 million shares to 3.87 million shares.
