MoonMath.ai

33 posts

MoonMath.ai retweeted

Making models run fast at inference requires optimizing the entire AI stack.

It was great partnering with MoonMath to take @bria_ai_'s Fibo to the next level of speed. Unlike standard models, Fibo consists of a Reasoner (VLM) and a Renderer (Flow Matching), and both need to be optimized at the algorithm, deployment, and kernel levels.

And most importantly, it was great to work with @moonmathai

Read more in the new blog post

MoonMath.ai@moonmathai

MoonMath.ai retweeted

We accelerated Fibo on Hopper, Instinct & DGX Spark (Blackwell)!

Awesome model and awesome team.

MoonMath.ai@moonmathai

@OPatashnik @DecartAI @ingeration @Lightricks Explore talks from the last event: youtube.com/playlist?list=…

📢 Announcing the 2nd Generative Media TLV meetup, April 15th, Tel Aviv.

Speakers:

@OPatashnik, Tel Aviv University

Essam Qeys, @DecartAI

@ingeration, @Lightricks

No fluff!

Sign up: luma.com/pnrjjkyf

MoonMath.ai retweeted

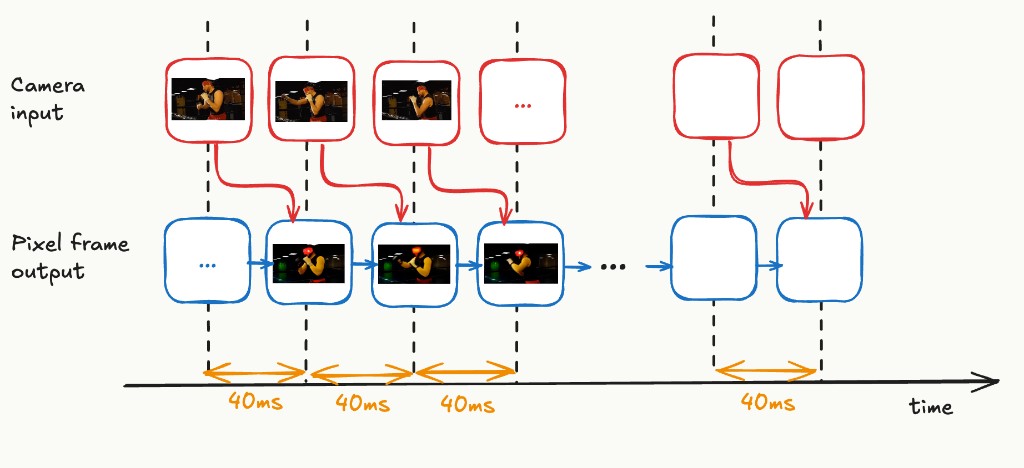

“24 FPS” ≠ real-time

Example (seaweed-apt.com/2):

- 24 FPS (this is throughput!)

- ~160ms latency → ~4 frames delay

That’s not interactive!
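
The "~4 frames" figure follows from dividing the latency by the frame interval. A minimal sketch of that arithmetic (the function name `latency_in_frames` is a hypothetical helper, not something from the linked post):

```python
def latency_in_frames(latency_ms: float, fps: float) -> float:
    """Express an input-to-output latency as a number of frame intervals."""
    frame_interval_ms = 1000.0 / fps  # time between consecutive frames
    return latency_ms / frame_interval_ms


fps = 24
# Throughput only fixes how often frames come out: one every ~41.7 ms.
print(f"frame interval at {fps} FPS: {1000.0 / fps:.1f} ms")
# Latency is how long a single input takes to show up on screen.
print(f"160 ms latency = {latency_in_frames(160, fps):.2f} frame intervals")
```

At 24 FPS a frame interval is about 41.7 ms, so 160 ms is roughly 3.84 intervals, which rounds to the ~4 frames of delay quoted above. A pipeline can hit 24 FPS and still show each frame several frames late.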

FrameCommit moves latent video models in that direction: true frame-by-frame real-time, à la @DecartAI

MoonMath.ai@moonmathai

1/ New post: FrameCommit: Journey From Wan to Decart LSD, Part 1. The target for live-stream video is not just high FPS but ~40 ms input-to-output latency per visible pixel frame. moonmath.ai/posts/framecom…

1/

New post: FrameCommit: Journey From Wan to Decart LSD, Part 1

The target for live-stream video is not just high FPS but ~40 ms input-to-output latency per visible pixel frame.

moonmath.ai/posts/framecom…

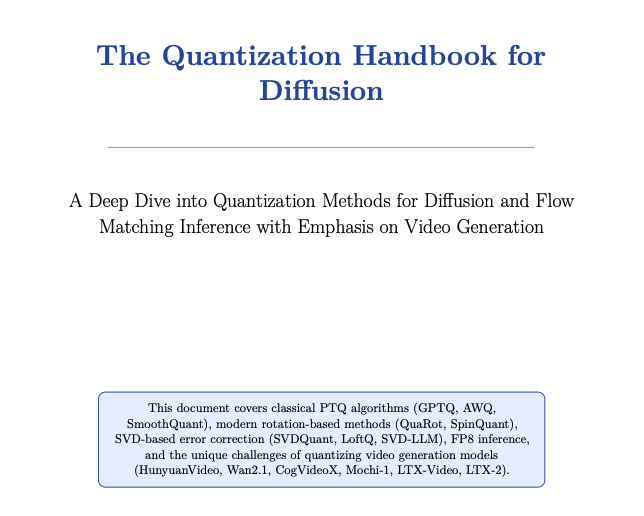

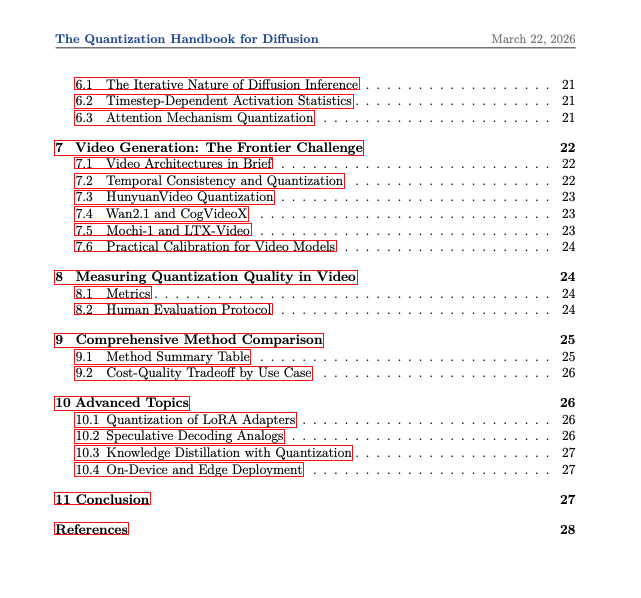

The Quantization Handbook 🪄

The first SoK for diffusion quantization

📘Read: moonmath.ai/assets/posts/q…

MoonMath.ai retweeted

This week we lost Chuck Norris, or so reality claims. In his honor, here are 10 facts about Chuck Norris and AI. Feel free to add your own.

1. Chuck Norris won all reward models

2. AI trains on Nvidia because it cannot keep up training with Chuck Norris

3. When a model looks at Chuck Norris, it backpropagates

4. Chuck Norris defeated AlphaGo in Go

5. RLHF was invented by Chuck Norris; the feedback was originally his kick

6. Your AI agent will go to sleep before Chuck Norris

7. Chuck Norris models don't need training, they have zero loss

8. Chuck Norris can get as many H100s as he wants

9. AI never hallucinates when speaking with Chuck Norris, it’s just sometimes afraid to tell the truth

10. Chuck Norris can compile flashattention so quickly, nvcc asks him for advice

h/t my team. Thank you, Chuck, we'll always remember ♥️

MoonMath.ai retweeted

had to integrate Recraft the moment v4 dropped.

more coming soon 👀

Recraft@recraftai

Your story. Your exact color palette. 🎨✨ Design-grade visuals now powering the next generation of storytelling over at @storynote_ai. Curious to see the results!

MoonMath.ai retweeted

🧑‍🏭 LiteRunner 🧑‍🏭

MLOps-Style Tracking Without Touching the Code (New Tool)

TL;DR: LiteRunner adds lightweight tracking to any CLI command without changing the model, saving params, outputs, and metrics locally and in W&B so every run stays reproducible and organized.

Code (open source!): github.com/moonmath-ai/Li…

Blog: moonmath.ai/posts/literunn…

Contributions are welcome 🙌

More background:

When running video generation experiments with diffusion models, the workflow quickly turns into bookkeeping. Every run starts with hand-editing a long CLI command: quoting paths and swapping flags manually. Each run then produces a different combination of config, output videos, metrics, and debug data. Output files end up scattered across multiple folders and machines with no central record, sometimes even overwriting each other. Moving those files and recording runs becomes tedious, and inevitably the one run that wasn’t properly recorded turns out to be the one that matters. Revisiting an old experiment often means digging through notes just to figure out whether it used seed 10 or 42.

When you own the code, you can wire in an MLOps tool to solve this. But often you’re just a user of someone else’s model, and modifying their source just to get proper tracking isn’t practical. That’s when the idea comes up: instead of changing the model code, bring MLOps-style logging to arbitrary CLI commands, so experiments can be tracked without touching the original implementation.
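
To make the idea concrete, here is a minimal sketch of tracking an arbitrary CLI command from the outside. It is not LiteRunner's implementation or API: the names `parse_flags` and `tracked_run`, the `runs/` directory layout, and the `run.json` record format are all assumptions made for illustration, and W&B logging is left out.

```python
import json
import shlex
import subprocess
import sys
import time
import uuid
from pathlib import Path


def parse_flags(argv):
    """Best-effort extraction of `--key value` and `--key=value` pairs."""
    params = {}
    i = 0
    while i < len(argv):
        tok = argv[i]
        if tok.startswith("--"):
            key = tok[2:]
            if "=" in key:
                key, value = key.split("=", 1)
            elif i + 1 < len(argv) and not argv[i + 1].startswith("--"):
                value = argv[i + 1]
                i += 1  # the next token was consumed as this flag's value
            else:
                value = True  # bare flag with no value
            params[key] = value
        i += 1
    return params


def tracked_run(argv, runs_dir="runs"):
    """Run argv unchanged and write a JSON record plus logs (hypothetical layout)."""
    run_id = time.strftime("%Y%m%d-%H%M%S") + "-" + uuid.uuid4().hex[:6]
    run_dir = Path(runs_dir) / run_id
    run_dir.mkdir(parents=True)  # unique per run, so nothing gets overwritten

    start = time.time()
    proc = subprocess.run(argv, capture_output=True, text=True)

    record = {
        "run_id": run_id,
        "command": shlex.join(argv),  # exact command, safe to copy and re-run
        "params": parse_flags(argv),  # e.g. {"seed": "42"}
        "exit_code": proc.returncode,
        "duration_s": round(time.time() - start, 3),
    }
    (run_dir / "stdout.log").write_text(proc.stdout)
    (run_dir / "stderr.log").write_text(proc.stderr)
    (run_dir / "run.json").write_text(json.dumps(record, indent=2))
    return record


if __name__ == "__main__":
    # Stand-in for a real model CLI: a tiny Python command with a --seed flag.
    demo = [sys.executable, "-c", "print('ok')", "--seed", "42"]
    print(json.dumps(tracked_run(demo), indent=2))
```

Because the wrapper only sees the command line and the process output, the model's source stays untouched, and the question of whether a run used seed 10 or 42 is answered by its `run.json`.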
