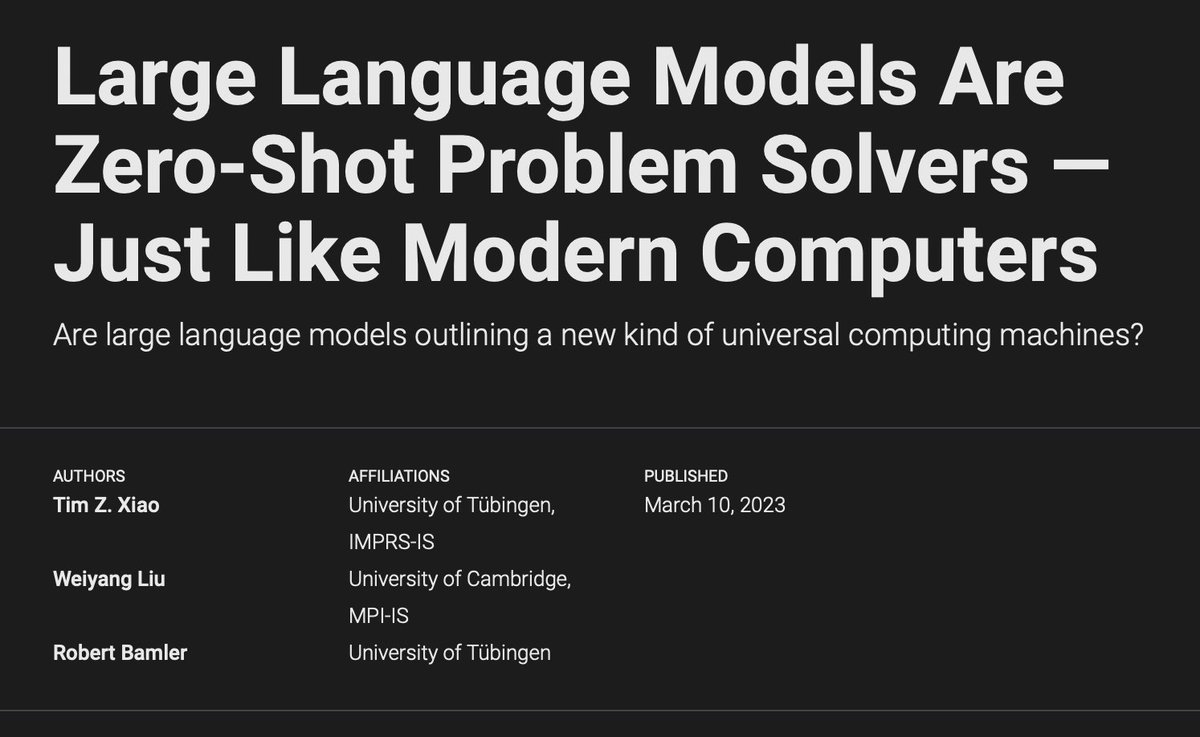

✨ New paper: "Flipping Against All Odds." We found that large language models (LLMs) can describe probabilities but fail to sample from them faithfully. Yes, even flipping a fair coin is hard. 🪙 🧵 Here’s what we learned, and how we fixed it. 🔗 arxiv.org/abs/2506.09998 1/