Pinned Tweet

Saeed Anwar

14.5K posts

@saen_dev

Automating the boring stuff and sharing the insights

UAE, Dubai · Joined October 2023

230 Following · 949 Followers

@VedhasBidwai @delveroin First honest users come from solving a problem so specific that only 50 people have it. Find those 50 in a Reddit thread or Discord server, DM them, and build exactly what they ask for. Scale comes later.

@delveroin I think building the product is not much of an issue nowadays. AI.

But the initial honest users are the tough part. As a first-time startup founder, how can it be done? 🤔

crazly.pro

@SkillWagerIO @devops_chat Biggest gap is always error handling. Prototypes handle the happy path. Production handles the user who submits an emoji-only input, times out mid-request, and then retries 47 times. That's the 80% of work nobody demos.
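A minimal sketch of the two unhappy paths named above: input with no readable text, and a client that keeps retrying. The names `has_text`, `RetryLimiter`, `handle`, and the cap of 3 attempts are hypothetical, chosen only for illustration.

```python
import unicodedata

MAX_ATTEMPTS = 3  # hypothetical cap; tune per endpoint


def has_text(value: str) -> bool:
    """True if the input contains at least one letter or digit."""
    # Unicode categories starting with "L" are letters, "N" are numbers.
    # Plain emoji fall under "So" (symbol, other), so they do not count.
    return any(unicodedata.category(ch)[0] in ("L", "N") for ch in value)


class RetryLimiter:
    """Counts attempts per request key and rejects once the cap is exceeded."""

    def __init__(self, max_attempts: int = MAX_ATTEMPTS) -> None:
        self.max_attempts = max_attempts
        self._attempts: dict[str, int] = {}

    def allow(self, key: str) -> bool:
        count = self._attempts.get(key, 0) + 1
        self._attempts[key] = count
        return count <= self.max_attempts


def handle(request_key: str, text: str, limiter: RetryLimiter) -> str:
    if not limiter.allow(request_key):
        return "rejected: too many retries"
    if not has_text(text):
        return "rejected: no readable text"
    return "accepted"
```

In a real service the attempt counts would need an expiry and shared storage; the in-memory dict here only shows the shape of the check.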

@devops_chat AI agents in production are a different beast from demos. Vibe coding needs guardrails at company scale.

What's the biggest gap you have experienced between building an agent prototype and shipping it live?

OutSystems CEO on how enterprises can successfully adopt vibe coding devopschat.co/articles/outsy… - Everybody is building AI agents. But the enterprises actually shipping them to production are learning...

From now on, hype-centric splashy launches will likely be strongly uncorrelated with success.

If by the time you launch you don’t have escape velocity, you will likely get Sybil attacked¹. Agents will spin up 10 competing products with your same interface.

Start with an audience of 1 and get confirmation that it works. Then expand to a circle of friends or design partners. By the time you go public, your moat needs to be deeper than it’s ever been.

¹ en.wikipedia.org/wiki/Sybil_att…

@opendraftco Software supply is exploding but demand for quality stays constant. When everyone can build, the differentiator becomes taste, reliability, and support. Best non-tech founders pair AI speed with real user research.

2M+ are now building production apps with AI. Non-tech founders are shipping, AI agents are shopping. Supply is exploding. Dive into the future of software at opendraft.co/blog/vibe-codi…. #vibecoding #AI

@opendraftco Shipping in hours is real but "production-ready" is doing a lot of heavy lifting in that sentence. The apps ship fast. The error handling, auth, rate limiting, and edge cases still take the same amount of time they always did.

"Vibe coding" is dead. AI agents are shipping production-ready apps like LoopPad and LinguaGPT in hours. Stop building, start installing. See what's new: opendraft.co #AIShipFast #AgentDev

@SEOmkSEO Agent memory schema migration is the problem nobody is talking about and everyone is hitting. We do it manually right now and it's painful. Whoever builds the "Alembic for agent state" wins a massive market.
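One way to picture an "Alembic for agent state" is a chain of versioned upgrade functions applied to stored state on load. This is a sketch under assumptions, not Alembic's API: the `schema_version` field, the `history` to `messages` rename, and both migration functions are hypothetical.

```python
from typing import Callable, Dict

State = dict
Migration = Callable[[State], State]


def _v1_to_v2(state: State) -> State:
    # Hypothetical change: v2 renames "history" to "messages".
    new = dict(state)
    new["messages"] = new.pop("history", [])
    new["schema_version"] = 2
    return new


def _v2_to_v3(state: State) -> State:
    # Hypothetical change: v3 stores each message as a dict with a role.
    new = dict(state)
    new["messages"] = [
        m if isinstance(m, dict) else {"role": "user", "content": m}
        for m in new["messages"]
    ]
    new["schema_version"] = 3
    return new


# Migrations keyed by the version they upgrade FROM.
MIGRATIONS: Dict[int, Migration] = {1: _v1_to_v2, 2: _v2_to_v3}
LATEST_VERSION = 3


def migrate(state: State) -> State:
    """Apply migrations in order until the state reaches LATEST_VERSION."""
    version = state.get("schema_version", 1)  # unversioned state is treated as v1
    while version < LATEST_VERSION:
        state = MIGRATIONS[version](state)
        version = state["schema_version"]
    return state
```

Each migration copies the top-level dict rather than mutating it, so the stored state stays available if an upgrade has to be rolled back.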

@dotnet The "not a demo" framing matters. Most open source AI projects are glorified notebooks. End to end with real error handling and edge cases is where the actual learning happens for anyone reading the code.

@Dabasinskas Prompts buried in code with no versioning is the new hardcoded credentials. We spent two weeks just extracting prompts into a config layer so we could A/B test without redeploying. Should have done it on day one.
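A rough sketch of what a prompt config layer with A/B assignment could look like. The `PROMPTS` registry, the version labels, and the hash-based bucketing are all assumptions for illustration; the tweet does not say how the extraction was actually done. In practice the registry would likely be loaded from a file or config service so it can change without a redeploy.

```python
import hashlib

# Hypothetical registry: prompt name -> variant -> versioned template.
PROMPTS = {
    "summarize": {
        "A": {"version": "v1", "template": "Summarize this text: {text}"},
        "B": {"version": "v2", "template": "Summarize in three bullet points: {text}"},
    }
}


def pick_variant(prompt_name: str, user_id: str) -> str:
    """Deterministically bucket a user so they always see the same variant."""
    variants = sorted(PROMPTS[prompt_name])
    digest = hashlib.sha256(f"{prompt_name}:{user_id}".encode()).digest()
    return variants[digest[0] % len(variants)]


def render(prompt_name: str, user_id: str, **fields: str) -> tuple:
    """Return (variant, version, prompt text) so every call can be logged."""
    variant = pick_variant(prompt_name, user_id)
    entry = PROMPTS[prompt_name][variant]
    return variant, entry["version"], entry["template"].format(**fields)
```

Returning the variant and version alongside the rendered text makes it possible to attribute each model response to the exact prompt that produced it.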

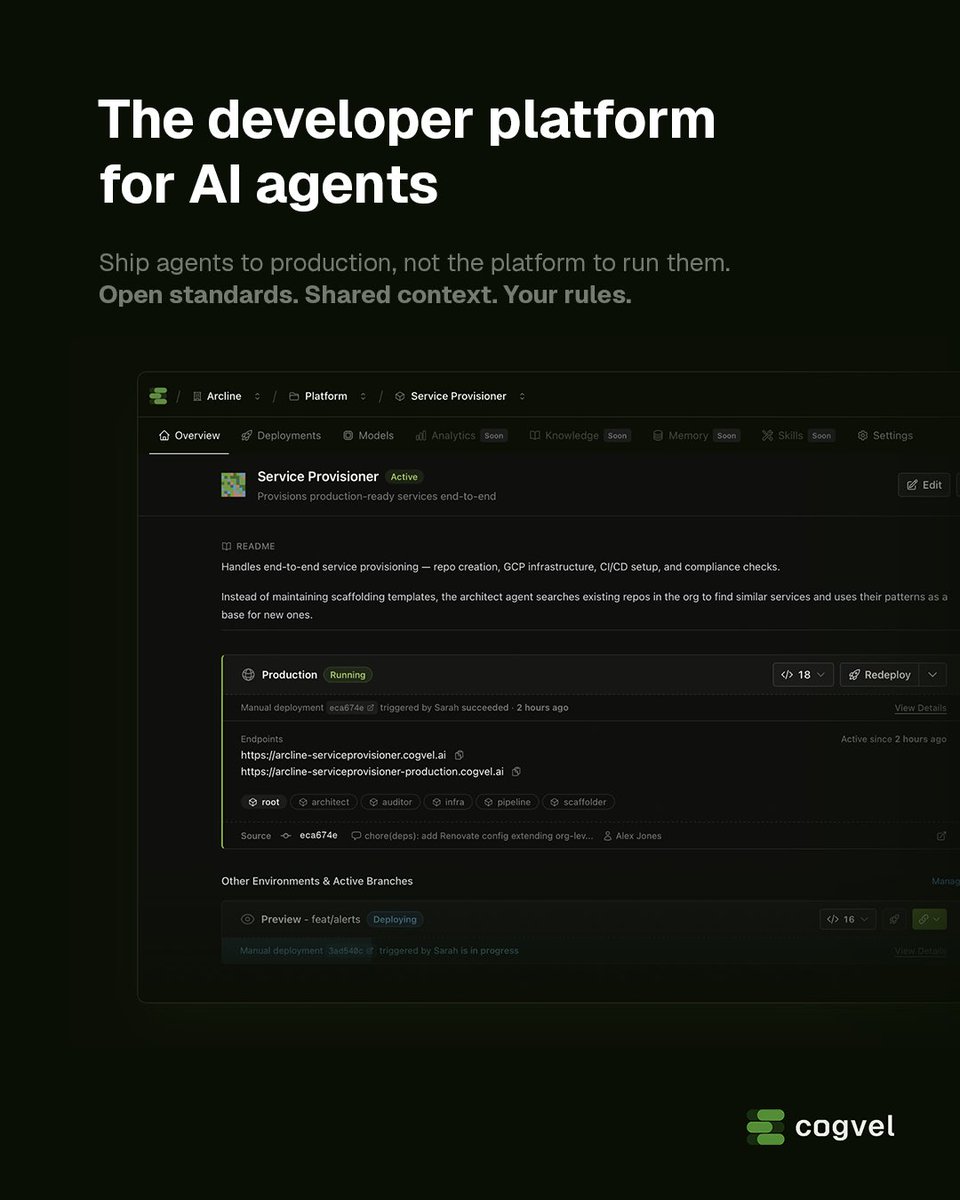

Teams are building AI agents that can reason, act, and connect to real systems. Shipping them to production? It's all duct tape. Agents hardcoded to one model. Prompts buried in code. No organization context, no environments, no rollback, no way to know which version is running where or what it has access to.

After 16 years in tech, I keep coming back to the same problem: how do teams ship and run software reliably? Deployment platforms, observability platforms, self-service platforms, private cloud - if it helped engineers ship faster with fewer headaches, I was building it.

That's why I'm building Cogvel - the developer platform for AI agents. Define in YAML. Deploy from GitHub. Connect via open protocols.

If you're working on AI agents and feeling the production gap, I'd love to hear from you.

Join the waitlist now - I'll reach out personally when spots open up.

@Mmartain 11% in production and 25% stuck in pilot is the most honest AI stat out there. The gap is always observability, error handling, and all the boring work nobody budgets for upfront.

Deloitte's Tech Trends 2026 report just dropped a number that should humble every "AI agent startup" out there:

Only 11% of companies have AI agents fully in production.

25% are still stuck in pilot mode.

Everyone's building agents. Almost nobody is shipping them.

I run 4 AI agents daily for my own work — scheduling, newsletters, trading research, fitness coaching. The reason they actually work isn't the model. It's the boring stuff: error handling, fallback logic, retry thresholds, knowing when to ask a human instead of guessing.

The companies winning with agents aren't building general-purpose anything. They pick one workflow, harden it, and expand from there.

Most "agentic AI" pitch decks I see are just demos with extra slides.
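The "boring stuff" listed in this thread (retry thresholds, fallback logic, knowing when to ask a human) can be sketched in a few lines. Everything here is hypothetical: the `Step` signature, the confidence score, and the default thresholds are assumptions, not a description of the author's agents.

```python
from typing import Callable, Tuple

# A step returns (answer, confidence between 0 and 1). Hypothetical contract.
Step = Callable[[], Tuple[str, float]]


class NeedsHuman(Exception):
    """Raised when no automated step produced a trustworthy answer."""


def run_with_fallback(
    primary: Step,
    fallback: Step,
    max_attempts: int = 2,
    min_confidence: float = 0.7,
) -> str:
    for step in (primary, fallback):
        for _ in range(max_attempts):
            try:
                answer, confidence = step()
            except Exception:
                continue  # transient failure: retry up to the threshold
            if confidence >= min_confidence:
                return answer
            break  # low confidence: stop retrying this step and move on
    raise NeedsHuman("no step met the confidence threshold")
```

The caller decides what `NeedsHuman` means: open a ticket, send a message, or park the task in a review queue.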

@booleanbeyondIN Coimbatore quietly shipping while nobody watches is the most underrated part of this thread. The best products come from builders who care more about users than clout. Bengaluru gets the spotlight but the real work is everywhere.

The Indian startup ecosystem in 2026 is giving main character energy and I'm here for it:

Coimbatore is quietly shipping AI products while nobody's watching

Bengaluru founders are building agents that actually work in production

CRED just became an RBI authorized payment aggregator

Ola is literally paying people to ditch petrol

Meanwhile Silicon Valley is on its 47th "AI wrapper" startup that does exactly what ChatGPT does but with a different font.

The real AI revolution isn't happening where you think it is. It's happening over filter coffee and dosa in Tamil Nadu, and the rest of the world hasn't caught on yet.

#IndianStartups #AI #Bengaluru #Coimbatore #CircularEconomy

@Agent911f Every major cloud shipping agents for production is the clearest signal that this isn't hype anymore. The question now isn't whether to use agents but who controls the orchestration layer your business runs on.

Alibaba building Qwen-based AI agents for enterprise ops — managing computers, browsers, cloud infra autonomously.

Everyone said "agents are a toy." Now every major cloud provider is shipping them for production.

The question isn't whether agents will run your business. It's whether you'll own the agent or rent it.

@LangChain Non-deterministic debugging is what kills most agent projects in production. Traditional logging fails when the same input gives different outputs. Trace comparison across runs is the missing piece most teams discover too late.
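Trace comparison can start very small: record each run as an ordered list of steps and find where two runs of the same input first part ways. The trace shape below (a list of `(tool, argument)` tuples) is an assumption for illustration and is not LangSmith's format.

```python
from itertools import zip_longest
from typing import List, Optional, Tuple

TraceStep = Tuple[str, str]  # hypothetical shape: (tool name, argument)


def first_divergence(
    run_a: List[TraceStep], run_b: List[TraceStep]
) -> Optional[Tuple[int, Optional[TraceStep], Optional[TraceStep]]]:
    """Return (index, step_a, step_b) at the first differing step, or None."""
    # zip_longest pads the shorter run with None, so a run that simply
    # stops early is also reported as a divergence.
    for index, (a, b) in enumerate(zip_longest(run_a, run_b)):
        if a != b:
            return index, a, b
    return None
```

Knowing the first step where runs differ narrows the search from "the output changed" to "the agent chose a different tool at step N".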

💫 New LangChain Academy Course: Building Reliable Agents 💫

Shipping agents to production is hard. Traditional software is deterministic – when something breaks, you check the logs and fix the code. But agents rely on non-deterministic models.

Add multi-step reasoning, tool use, and real user traffic, and building reliable agents becomes far more complex than traditional system design.

The goal of this course is to teach you how to take an agent from first run to production-ready system through iterative cycles of improvement.

You’ll learn how to do this with LangSmith, our agent engineering platform for observing, evaluating, and deploying agents.

@GauravGoyalAI This layered approach is exactly how we structure our stack. Teams that jump to fine-tuning without solid RAG first always regret it. You end up teaching the model bad habits on top of bad retrieval. Foundation layers matter.

LLM in prod ≠ just a model.

Stack:

1️⃣ Foundations (LLM + prompts)

2️⃣ RAG (your data > hallucination)

3️⃣ Fine-tuning (behavior control)

4️⃣ Inference optimization (latency + cost)

Each layer builds on the previous.

Skip one → fragile AI.

#LLM #RAG #GenAI #AIArchitecture

@addison @PromptHunt @PicStudioAI 500K users and 90%+ margins as a solo founder is the dream run. The part nobody talks about is the mental load of being the only person who knows how everything works. AI makes building solo possible but the decision fatigue is still very real.

After years as a solo founder, I’m ready to help your startup grow.

AI simplifies solo building, but it’s lonely.

I’ve been a one-person growth and product team, designing and building @PromptHunt and @PicStudioAI:

- 500K+ users

- Six-figure revenue

- 90%+ margins

I built optimized funnels, ran paid ads with real ROI, and turned traffic into revenue.

Doing it all taught me a lot; doing it alone showed me what I miss... a team chasing the same mission.

If you’re a solo founder or small team needing help, I’m taking on select product and growth contracts.

👉 addisonk.com or DMs open

@iwhizkid__ @JaaiVipra Deployment infrastructure, trust, and ecosystem is exactly right. Open source catches up on weights within months. Five years of uptime, compliance certifications, and enterprise support contracts? That's the actual moat nobody can fork.

@iwhizkid__ @JaaiVipra The DeepSeek moment proved: the moat was never model weights. It was deployment infrastructure, trust, and ecosystem. Open source catches up on weights fast. 5 years of reliability at scale? That's where the real advantage lives.

@NeuralCoreTech The "verify CI and tests before merge" step does all the heavy lifting here. Without that gate, autonomous coding agents are just fast ways to introduce bugs. Smart sandbox approach but I want to see how it handles flaky tests.
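On the flaky-test question, one common approach is to rerun failures and treat "failed, then passed" as its own verdict instead of a pass. The sketch below is a generic illustration with hypothetical names (`classify`, `merge_allowed`); it says nothing about how the framework in this thread behaves.

```python
from typing import Callable, Dict, Tuple

Test = Callable[[], bool]  # returns True when the test passes


def classify(test: Test, reruns: int = 2) -> str:
    """Return "pass", "flaky" (failed, then passed on rerun), or "fail"."""
    if test():
        return "pass"
    for _ in range(reruns):
        if test():
            return "flaky"
    return "fail"


def merge_allowed(tests: Dict[str, Test], reruns: int = 2) -> Tuple[bool, Dict[str, str]]:
    """Gate the merge: only a clean "pass" on every test lets it through."""
    verdicts = {name: classify(fn, reruns) for name, fn in tests.items()}
    return all(v == "pass" for v in verdicts.values()), verdicts
```

A test that happens to pass on its first run is still reported as "pass" here, so this catches only flakiness that shows up as an initial failure. Whether "flaky" should block the merge or just be quarantined is a policy choice; this sketch blocks.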

OpenAI Symphony (released this week)

A framework for autonomous software development.

Workflow:

Detect issue

Launch coding agent

Create sandbox

Generate code

Verify CI & tests before merge

Full Article: neuralcoretech.com/2026/03/06/age…

@swarnitghule @KrishXCodes The "4096 performing worse than 768" insight is gold and not enough people know this. Bigger embeddings aren't always better. We tested 5 embedding models on our domain data and the smallest one won by 15% on relevance. Always benchmark on YOUR data.
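"Benchmark on YOUR data" can be as simple as a labeled set of queries and a hit-rate metric computed per embedding model. The sketch below assumes you already have vectors from each candidate model; the function names and input shapes are hypothetical, and it uses plain cosine similarity with brute-force ranking.

```python
import math
from typing import Dict, List, Set


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def hit_rate_at_k(
    query_vecs: Dict[str, List[float]],
    doc_vecs: Dict[str, List[float]],
    relevant: Dict[str, Set[str]],
    k: int,
) -> float:
    """Fraction of queries with at least one relevant doc in the top k."""
    hits = 0
    for qid, qvec in query_vecs.items():
        ranked = sorted(doc_vecs, key=lambda d: cosine(qvec, doc_vecs[d]), reverse=True)
        if relevant[qid] & set(ranked[:k]):
            hits += 1
    return hits / len(query_vecs)
```

Run it once per model with the same queries and relevance labels, then compare the scores alongside latency and storage cost.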

@KrishXCodes > Quality of the data

> Embedding model

> Retrieval Algo

> Database Arch

These things are most crucial. 4096 may perform worse than 768. I learned this the hard way: built a vector search from scratch in a low-level language, then RAG with 120k+ documents on it.

I cut my embeddings from 1536 -> 768.

And honestly…

Nothing broke.

Instead I got:

> Faster search

> Half the storage

> Lower cost

Retrieval quality?

Almost identical.

Bigger embeddings aren't always better.

Benchmark before choosing the biggest model to figure out what's best for your use case 🎯
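The "half the storage" part is plain arithmetic, shown here for raw vectors only. The corpus size of 100,000 and the 4-byte float32 values are assumptions for illustration; index overhead and metadata are ignored.

```python
def index_bytes(n_vectors: int, dims: int, bytes_per_value: int = 4) -> int:
    """Raw vector storage: one value per dimension per vector."""
    return n_vectors * dims * bytes_per_value


N = 100_000  # hypothetical corpus size

full = index_bytes(N, 1536)  # 614,400,000 bytes
half = index_bytes(N, 768)   # 307,200,000 bytes
print(f"1536 dims: {full / 1e6:.1f} MB, 768 dims: {half / 1e6:.1f} MB")
```

Storage scales linearly with dimension, and so does the cost of each brute-force similarity computation, which is consistent with the faster search reported above.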

@RaturiDevyush Switched from fixed-size chunking to semantic boundary chunking and our hallucination rate dropped from 22% to under 5%. The retrieval was fine all along. We were just feeding it garbage chunks that split sentences mid-thought.
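To make the difference concrete, here is a minimal sketch contrasting fixed-size chunking with a boundary-aware variant. Sentence boundaries stand in for "semantic boundaries" here, which is a simplification; the regex splitter and the function names are assumptions, not the method used in this thread.

```python
import re
from typing import List


def fixed_chunks(text: str, size: int) -> List[str]:
    """Cut every `size` characters, regardless of where sentences end."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def sentence_chunks(text: str, max_chars: int) -> List[str]:
    """Pack whole sentences into chunks of at most max_chars where possible."""
    # Naive splitter: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: List[str] = []
    current = ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks
```

A single sentence longer than `max_chars` is kept whole rather than split, so the limit is a target and not a hard cap. The naive regex will also break on abbreviations such as "e.g."; a proper sentence tokenizer would handle those better.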

Stop treating your Vector Database like a traditional search engine.

In 2026, the bottleneck isn't the retrieval; it's the Embedding Quality. If your chunking strategy is "fixed size," your RAG is hallucinating 20% of the time.

Semantic boundaries > Character counts. If you don't respect the logic of the text, the vector is just noise with extra steps. 📉🧠

@VedhasBidwai @delveroin First 50 users are always the hardest. What worked for me: solve a specific problem for 5 people you know personally. If they keep using it without you reminding them, you have something. If they don't, iterate before scaling.
