Pinned Tweet

Hari Prasad

168 posts

@booleanbeyondIN

Building AI tools in public | Systems thinker breaking down how tech actually works | Writing about AI from Bengaluru

Bengaluru · Joined February 2026

595 Following · 107 Followers

@MorningBrew $2.5B in NVIDIA chips smuggled to China through a US server company. This is why the real AI bottleneck isn't manufacturing — it's geopolitics. The more people circumvent export controls, the tighter they get for everyone else building AI infrastructure globally.

The biggest unlock with Codex isn't the code generation. It's that non-engineers can now validate technical ideas without waiting 2 weeks for a sprint cycle.

Product managers prototyping features. Researchers testing hypotheses. Scientists automating analysis.

The gap between "idea" and "working prototype" just collapsed from weeks to hours. That changes who gets to build, not just how fast things get built.

The read-memories skill in DuckDB is the sleeper feature. Most people treat Claude Code memories as flat text. Querying them with SQL means you can build retrieval logic on top of project context. Composable skills > monolithic IDEs. DuckDB as the local data layer for Claude is a natural fit.

We're excited to announce duckdb-skills, a DuckDB plugin for Claude Code!

We think the embedded nature of DuckDB makes it a perfect companion for Claude in your local workflows.

The skills supported include:

+ read-file and query – uses DuckDB's CLI to query data locally, unlocking easy access to any file that DuckDB can read.

+ read-memories – a clever idea to store your Claude memories in DuckDB and query them at blazing speed.

These are powered by two additional skills:

+ attach-db – gives Claude a mechanism to manage DuckDB state through a .sql file linked to your project.

+ duckdb-docs – uses a remote DuckDB full-text search database to query the DuckDB docs and answer all of your (and Claude's own) questions.

github.com/duckdb/duckdb-…

The real story isn't that Cursor used Kimi K2.5. It's that the entire "AI coding tool" market is converging on the same playbook: take an open-weight model, run RL on coding benchmarks, wrap it in UX, call it proprietary.

The moat was never the model. It's distribution + habit.

This is why Anthropic and OpenAI are racing to ship their own IDEs. They know the wrapper companies are margin-arbitraging their R&D. The question is whether Cursor's $2B ARR makes them too big to displace or if this leak accelerates the "just use Claude Code directly" movement.

Cursor is raising at a $50 billion valuation on the claim that its “in-house models generate more code than almost any other LLMs in the world.” Less than 24 hours after launching Composer 2, a developer found the model ID in the API response: kimi-k2p5-rl-0317-s515-fast.

That’s Moonshot AI’s Kimi K2.5 with reinforcement learning appended. A developer named Fynn was testing Cursor’s OpenAI-compatible base URL when the identifier leaked through the response headers. Moonshot’s head of pretraining, Yulun Du, confirmed on X that the tokenizer is identical to Kimi’s and questioned Cursor’s license compliance. Two other Moonshot employees posted confirmations. All three posts have since been deleted.

This is the second time. When Cursor launched Composer 1 in October 2025, users across multiple countries reported the model spontaneously switching its inner monologue to Chinese mid-session. Kenneth Auchenberg, a partner at Alley Corp, posted a screenshot calling it a smoking gun. KR-Asia and 36Kr confirmed both Cursor and Windsurf were running fine-tuned Chinese open-weight models underneath. Cursor never disclosed what Composer 1 was built on. They shipped Composer 1.5 in February and moved on.

The pattern: take a Chinese open-weight model, run RL on coding tasks, ship it as a proprietary breakthrough, publish a cost-performance chart comparing yourself against Opus 4.6 and GPT-5.4 without disclosing that your base model was free, then raise another round.

That chart from the Composer 2 announcement deserves its own paragraph. Cursor plotted Composer 2 against frontier models on a price-vs-quality axis to argue they’d hit a superior tradeoff. What the chart doesn’t show is that Anthropic and OpenAI trained their models from scratch. Cursor took an open-weight model that Moonshot spent hundreds of millions developing, ran RL on top, and presented the output as evidence of in-house research. That’s margin arbitrage on someone else’s R&D dressed up as a benchmark slide.

The license makes this more than an attribution oversight. Kimi K2.5 ships under a Modified MIT License with one clause designed for exactly this scenario: if your product exceeds $20 million in monthly revenue, you must prominently display “Kimi K2.5” on the user interface. Cursor’s ARR crossed $2 billion in February. That’s roughly $167 million per month, 8x the threshold. The clause covers derivative works explicitly.
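The arithmetic behind the "8x the threshold" figure can be checked with a quick sketch. It uses only the numbers quoted in the post and assumes monthly revenue is simply ARR divided by 12; the variable names are illustrative.

```python
# Figures quoted in the post; treating monthly revenue as ARR / 12 is an assumption.
ARR_USD = 2_000_000_000             # reported annual recurring revenue
THRESHOLD_MONTHLY_USD = 20_000_000  # monthly revenue threshold cited for the attribution clause

monthly_revenue = ARR_USD / 12
multiple = monthly_revenue / THRESHOLD_MONTHLY_USD

print(f"Monthly revenue: ${monthly_revenue / 1e6:.0f}M")
print(f"Multiple of threshold: {multiple:.1f}x")
```

On these inputs the multiple comes out a little above 8, which is consistent with the rounded figure in the post.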

Cursor is valued at $29.3 billion and raising at $50 billion. Moonshot’s last reported valuation was $4.3 billion. The company worth 12x more took the smaller company’s model and shipped it as proprietary technology to justify a valuation built on the frontier lab narrative.

Three Composer releases in five months. Composer 1 caught speaking Chinese. Composer 2 caught with a Kimi model ID in the API. A P0 incident this year. And a benchmark chart that compares an RL fine-tune against models requiring billions in training compute without disclosing the base was free.

The question for investors in the $50 billion round: what exactly are you buying? A VS Code fork with strong distribution, or a frontier research lab? The model ID in the API answers that.

If Moonshot doesn’t enforce this license against a company generating $2 billion annually from a derivative of their model, the attribution clause becomes decoration for every future open-weight release. Every AI lab watching this is running the same math: why open-source your model if companies with better distribution can strip attribution, call it proprietary, and raise at 12x your valuation?

kimi-k2p5-rl-0317-s515-fast is the most expensive model ID leak in the history of AI licensing.

Harveen Singh Chadha @HarveenChadha

things are about to get interesting from here on

The nuance everyone's missing: AI coding tools don't make devs worse. They make devs who DON'T understand fundamentals worse. A senior dev using Copilot ships faster because they can evaluate the output. A junior dev using Copilot skips the learning that MAKES them senior. The tool isn't the problem. The shortcut is.

JUST DROPPED: Anthropic's research proves AI coding tools are secretly making developers worse.

"AI use impairs conceptual understanding, code reading, and debugging without delivering significant efficiency gains." -- That's the paper's actual conclusion.

17% score drop learning new libraries with AI.

Sub-40% scores when AI wrote everything.

0 measurable speed improvement.

→ Prompting replaces thinking, not just typing

→ Comprehension gaps compound — you ship code you can't debug

→ The productivity illusion hides until something breaks in prod

Here's why this changes everything:

Speed metrics look fine on a dashboard.

Understanding gaps don't show up until a critical failure, and when they do, the whole team is lost.

Forcing AI adoption for "10x output" is a slow-burning technical debt nobody is measuring.

Full paper: arxiv.org/abs/2601.20245

Google is quietly building the most dangerous product in tech. Think about it: AI Studio now does what took a full dev team 3-6 months. Auth, databases, APIs, all from one prompt. The $150K/yr full-stack engineer job description just got rewritten. Builders who learn to leverage this will 10x their output.

icymi: NVIDIA just shipped NemoClaw at GTC last week.

OpenClaw became the fastest-growing open source project in history. But enterprises wouldn't touch it b/c ppl called it "a security nightmare."

so, NemoClaw = OpenClaw + security layer

I'll be testing it when it's live and will share what I find after that.

unusual_whales @unusual_whales

BREAKING: Nvidia, $NVDA, is planning to launch an open-source AI agent platform called NemoClaw, allowing enterprises to deploy AI agents for their workforces, per WIRED.

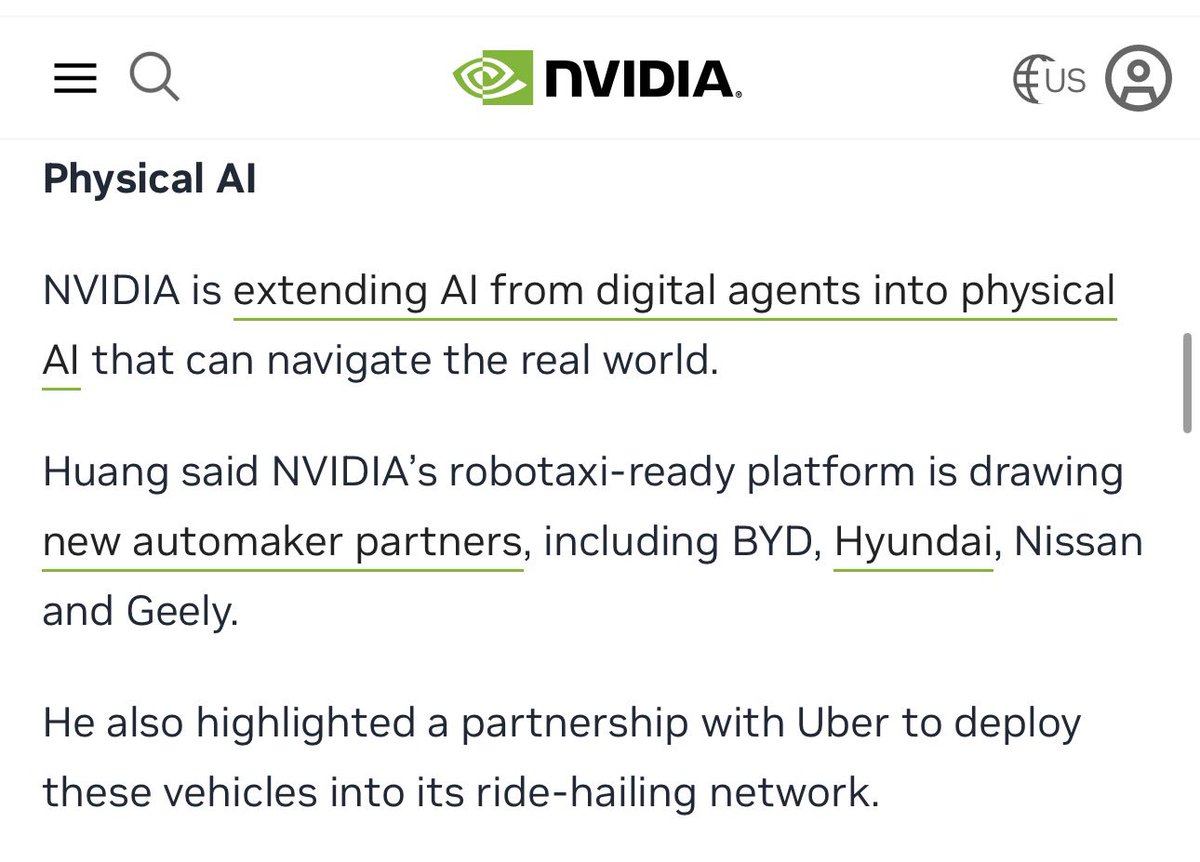

Great breakdown. The real play here is that Jensen doesn't need autonomous driving to work TODAY. He needs Nissan, BYD, Geely, and Hyundai to BELIEVE it will work tomorrow. Every dollar they spend on Drive Hyperion is NVIDIA hardware revenue regardless of L4 timelines. Classic picks-and-shovels.

The 8.76 Billion Mile Shortfall

Let’s separate plans from reality, shall we?

At GTC, Jensen Huang referenced the “ChatGPT moment” for autonomous driving and announced sweeping partnerships with Uber and others.

♦️ What Nvidia’s Alpamayo is: An open-source suite of reasoning VLA models, tools, and datasets for AVs. It mirrors key parts of Tesla’s FSD architecture and is built to sell a ton of Nvidia hardware. Nvidia’s VLA architecture and open-source tools are genuinely valuable for startups.

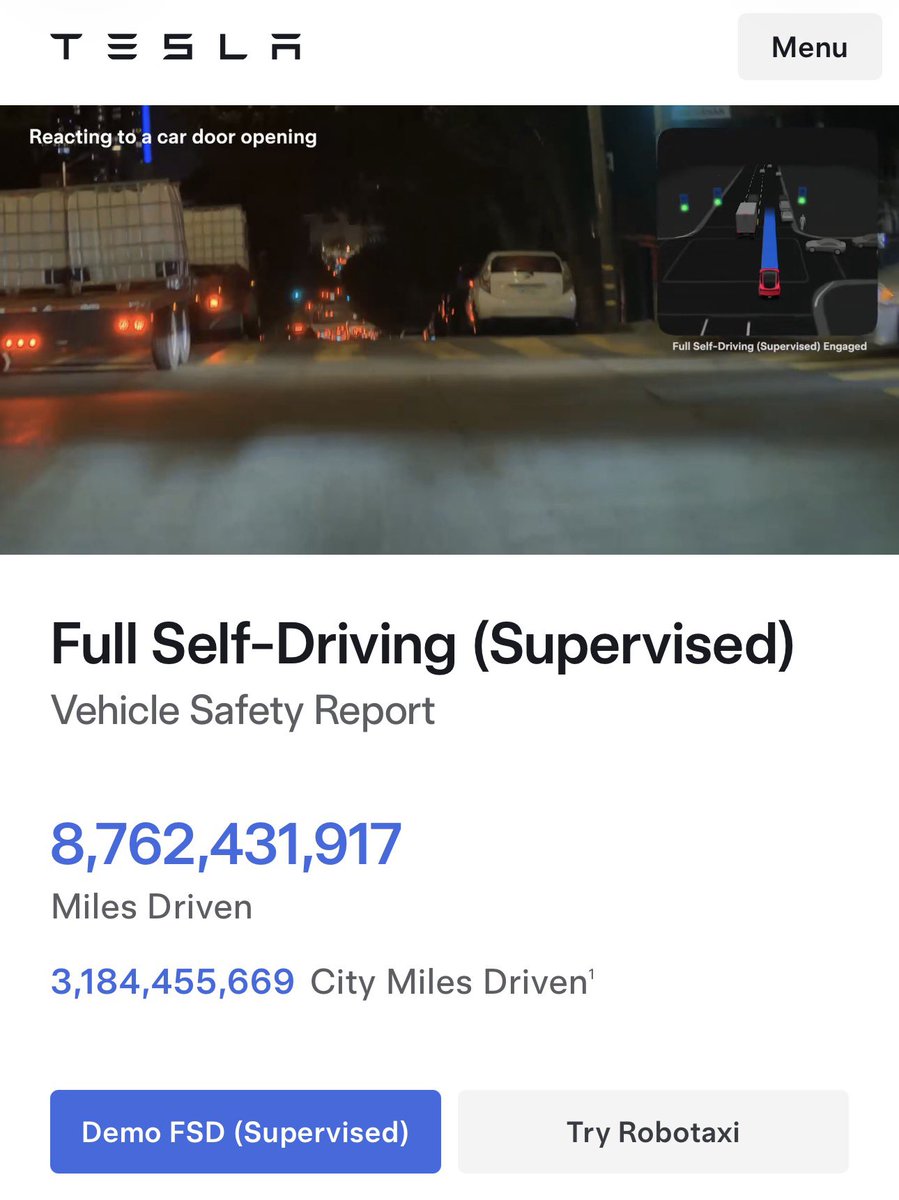

♦️ What it is not: On par with Tesla - or instantly scalable worldwide via overnight software updates. Tesla is already at 8.76 billion real-world FSD miles (and growing ~20 million miles per day). Teslas are running without safety drivers in Austin, and CyberCab mass production starts in a few weeks. Alpamayo partners are billions of miles short on the long tail, and sourcing that data is their job, not Nvidia’s.

Nvidia’s new Physical AI Data Factory Blueprint (with Cosmos Curator, Transfer, and Evaluator) generates synthetic data. However, it is no substitute for doing the real work & training at fleet scale.

Tesla has been running its driving AI (FSD) inside a physics-accurate world model for years — now massively supplemented with billions of miles of real-world data. Truth will always be stranger than fiction.

“We’ve been able to generate physics-accurate, real-time video for self-driving training & testing at @Tesla_AI for a long time. The compute required for this (roughly one H100 per HD camera) is still far too expensive for consumer use, but probably becomes affordable in 2 to 3 years.” — Elon Musk

Tesla’s unmatched real fleet data + vertical integration + rapid OTA updates still put Tesla years ahead.

Elon Musk @elonmusk

We’ve been able to generate physics-accurate, real-time video for self-driving training & testing at @Tesla_AI for a long time. The compute required for this (roughly one H100 per HD camera) is still far too expensive for consumer use, but probably becomes affordable in 2 to 3 years.

The AI infrastructure race isn't coming. It's here. And NVIDIA just put a $1 trillion price tag on it. If you're building with AI, follow @booleanbeyondIN. I break down what's actually happening in tech so you don't have to read 47 articles. Bookmark this thread.

@RoundtableSpace Good framework but missing the most important one:

0. Problem-first thinking — pick a real painful workflow BEFORE choosing which AI category to use.

Most people fail at AI not because they picked the wrong model. They picked the wrong problem.

I'm about to make AI stupidly simple for you.

This is ALL you need. Stop overcomplicating it.

1. Agentic AI – your daily driver (autonomous agents that plan + execute real work)

2. Multimodal Models – the real power (text + image + video + audio in one brain)

3. Vertical AI – where the money is made (industry-specific models: healthcare, finance, legal)

4. Efficient / On-Device AI – the future backbone (smaller, faster, private, runs anywhere)

5. Multi-Agent Systems – exponential productivity (swarms of specialized agents collaborating)

That's all.

Pick 1-2, go deep, ignore the rest.

Most people chase everything and win nothing.

There's a guy in Indore who built an AI tool that reads WhatsApp voice notes from kirana store owners and auto-generates purchase orders.

No fancy UI. No pitch deck. No VC money.

Just a Python script, a Whisper API, and 4,000 paying users at ₹299/month.

Meanwhile in Koramangala, someone's raising a seed round for "AI-powered grocery analytics."

India's best AI companies won't come from demo days. They'll come from people who've actually stood inside a kirana store.

I replaced a 14-person QA team's workflow with a Claude pipeline that costs $47/month.

Not their jobs. Their repetitive work.

Now those 14 people catch bugs that require actual human judgment — the stuff AI hallucinates on.

The real threat of AI isn't replacing people. It's companies using it as an excuse to fire people instead of upskilling them.

Same output. Half the burnout. Zero layoffs. That's the right way to deploy AI.

I interviewed 50 developers in Bengaluru this month.

Here's the uncomfortable truth nobody's saying:

The ones mass-applying to jobs? Using ChatGPT to write resumes, cover letters, and even take-home assignments.

The ones getting hired? Building side projects with AI and showing the GitHub.

The job market hasn't gotten harder. The bar for "proving you can actually build" just went up 10x.

Stop applying. Start shipping.

Controversial opinion:

90% of "AI startups" in India are just API wrappers with a landing page.

They raise money. They post on LinkedIn. They die in 6 months.

The 10% that survive? They never called themselves "AI startups." They just solved a problem that happened to need AI.

Agree or disagree?

@sachinyadav699 You missed the real 2026 plot twist:

The devs who survive aren't the ones who code the fastest. They're the ones who understand problems AI can't even frame yet.

AI can write code. It can't understand why your users rage-quit on step 3 of onboarding.

Software Engineer journey

2019 - learn to code

2020 - automate everything

2021 - AI will assist developers

2022 - AI is just a tool bro

2023 - only bad devs will be replaced

2024 - ok Cursor is actually insane

2025 - Claude writes the whole codebase

2026 - fine… I’ll become a farmer

2027 - robots farming 24/7

2028 - what's left??

China live @ChinaliveX

This might be what socialized production in China will look like in the future:
