Kevin

@typedarray

Engineering @monad @ponder_sh. EVM, TypeScript, open-source enjoyer

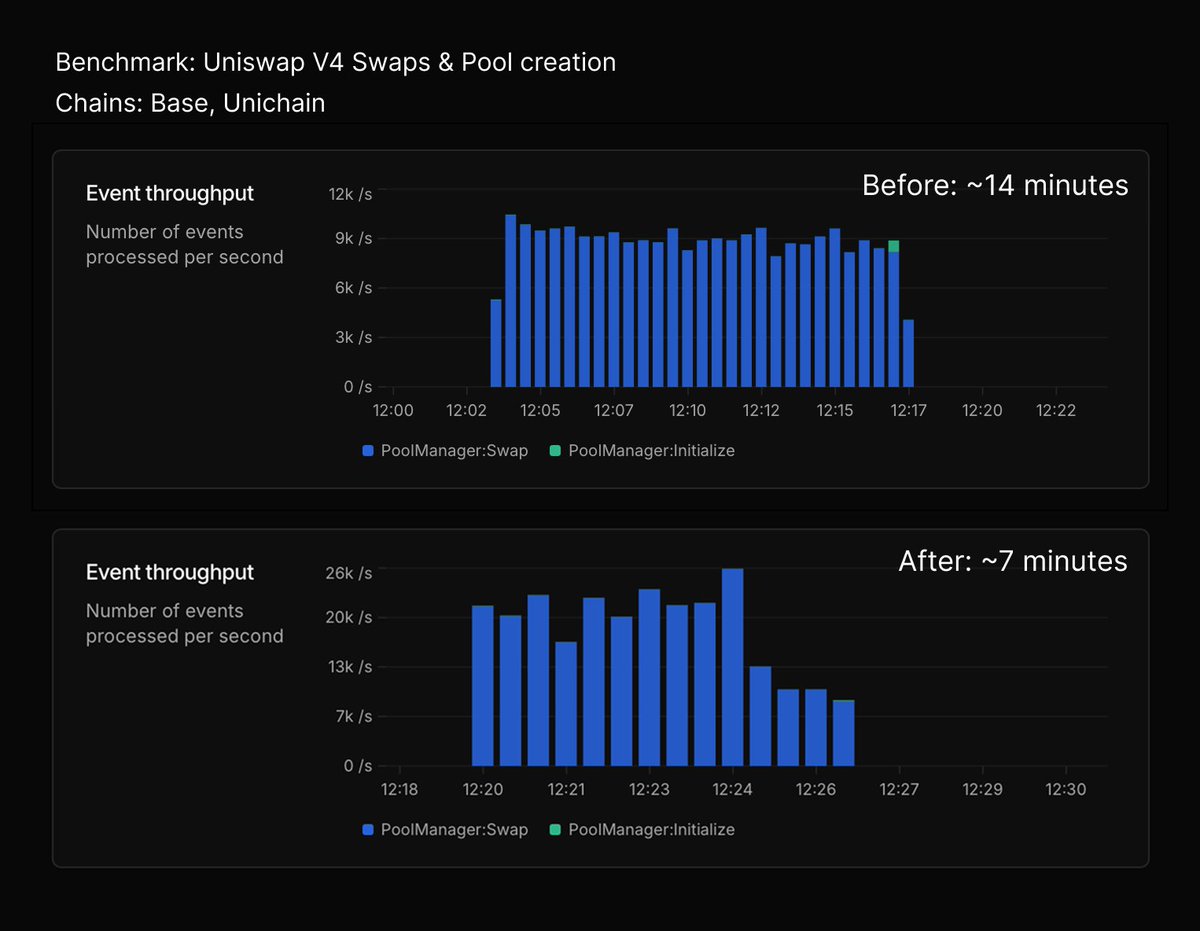

Your app (and indexer) is only as reliable as your RPC. The scary part is you usually don't know it's failing until your users do. Introducing Goldsky Edge: fault-tolerant RPC built for billions of requests, backed by a global CDN 1/

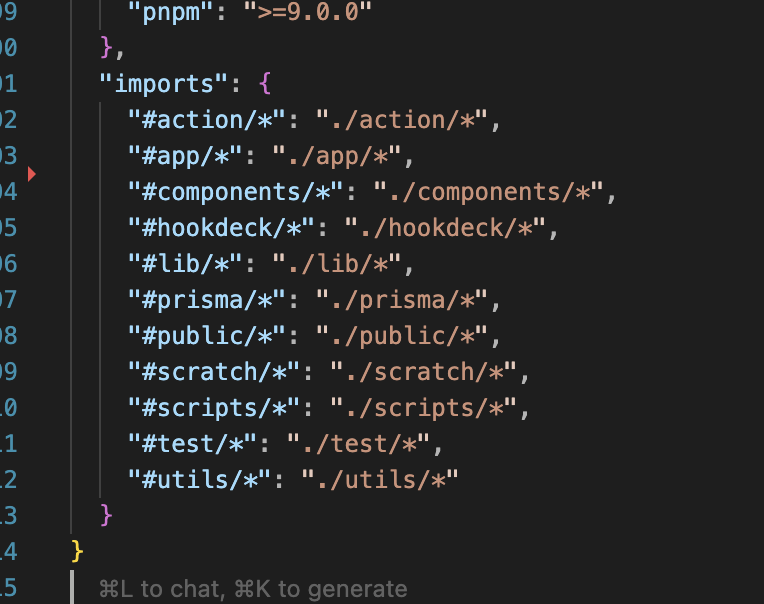

Node.js now supports path rewrites for the #/ wildcard. This means you don't need TypeScript voodoo to use project-relative imports. Thanks to @hybristdev github.com/nodejs/node/pu…
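For anyone who hasn't used the feature, here is a rough sketch of what such a mapping might look like, using the `imports` field of package.json. The package name, the `./src` target directory, and the exact `#/*` pattern are assumptions for illustration and are not taken from the linked PR. It would only resolve on a Node.js release that includes the change.

```json
{
  "name": "my-app",
  "type": "module",
  "imports": {
    "#/*": "./src/*"
  }
}
```

With a mapping like this, a file anywhere in the project could write `import { db } from "#/db/client.js"` (a hypothetical module) instead of climbing directories with `../../`. Whether TypeScript resolves the same specifier depends on its version and `moduleResolution` setting, so that is worth checking separately.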

13 days later, on the same $160/month bare metal, we've gone from 90k to 111k peak TPS. Extremely stable without a single block lag. Ceiling still nowhere to be found. Modern hardware is amazingly fast; we just need to write much better software!