Yuning Mao

25 posts

@yuning_pro

TBD @AIatMeta, 🦙Post-training since Llama 2 https://t.co/OUjOWWA8kL

👀

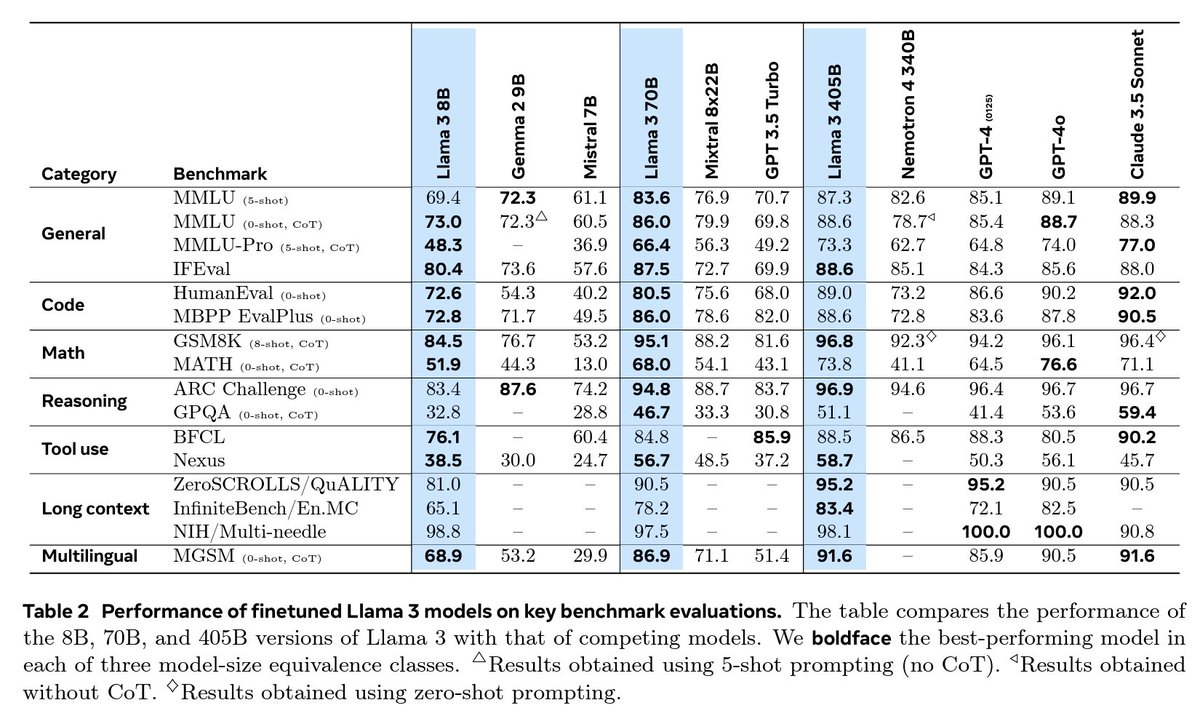

Starting today, open source is leading the way. Introducing Llama 3.1: our most capable models yet. Today we’re releasing a collection of new Llama 3.1 models, including our long-awaited 405B. These models deliver improved reasoning capabilities, a larger 128K-token context window, and improved support for 8 languages, among other improvements. Llama 3.1 405B rivals leading closed-source models on state-of-the-art capabilities across a range of tasks in general knowledge, steerability, math, tool use, and multilingual translation. The models are available to download now directly from Meta or @huggingface. With today’s release, the ecosystem is also ready to go, with 25+ partners rolling out our latest models, including @awscloud, @nvidia, @databricks, @groqinc, @dell, @azure and @googlecloud, ready on day one. More details in the full announcement ➡️ go.fb.me/tpuhb6 Download Llama 3.1 models ➡️ go.fb.me/vq04tr With these releases we’re setting the stage for unprecedented new opportunities, and we can’t wait to see the innovation our newest models will unlock across all levels of the AI community.

New AI research paper from Meta: MART, or Multi-round Automatic Red-Teaming, is a framework for improving LLM safety that trains an adversarial LLM and a target LLM through automatic, iterative adversarial red-teaming. Details in the paper ➡️ bit.ly/40H1l2z

Based on community feedback and internal research & analysis, we've pushed two new updates to the Llama repo that reduce the false refusal rates seen with Llama 2-Chat models and improve token sanitization. Full details ➡️ bit.ly/3QuRNER

We believe an open approach is the right one for the development of today's AI models. Today, we’re releasing Llama 2, the next generation of Meta’s open source Large Language Model, available for free for research & commercial use. Details ➡️ bit.ly/3Dh9hNp

Progressive Prompts: Continual Learning for Language Models. Abstract: arxiv.org/abs/2301.12314