0xProtoCon

@0xProtoCon

Protocol Builder | AI Power User | My views are my own

PREDICTION: Amazon will ban all Gen-AI assisted code changes in the coming weeks! More companies will follow... Be warned: your legacy codebase, tech debt and bugs will skyrocket if you continue to BLINDLY embrace AI

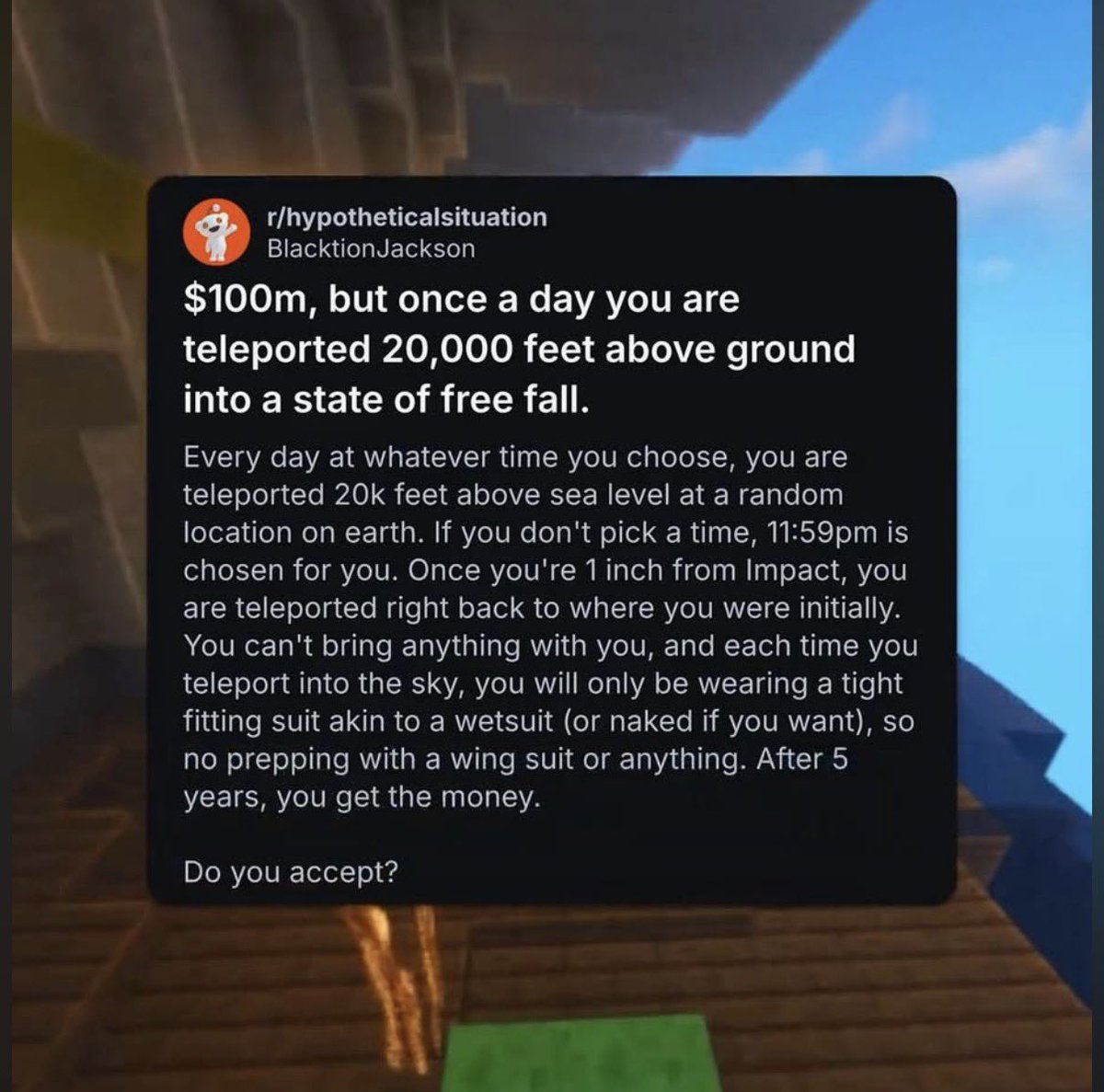

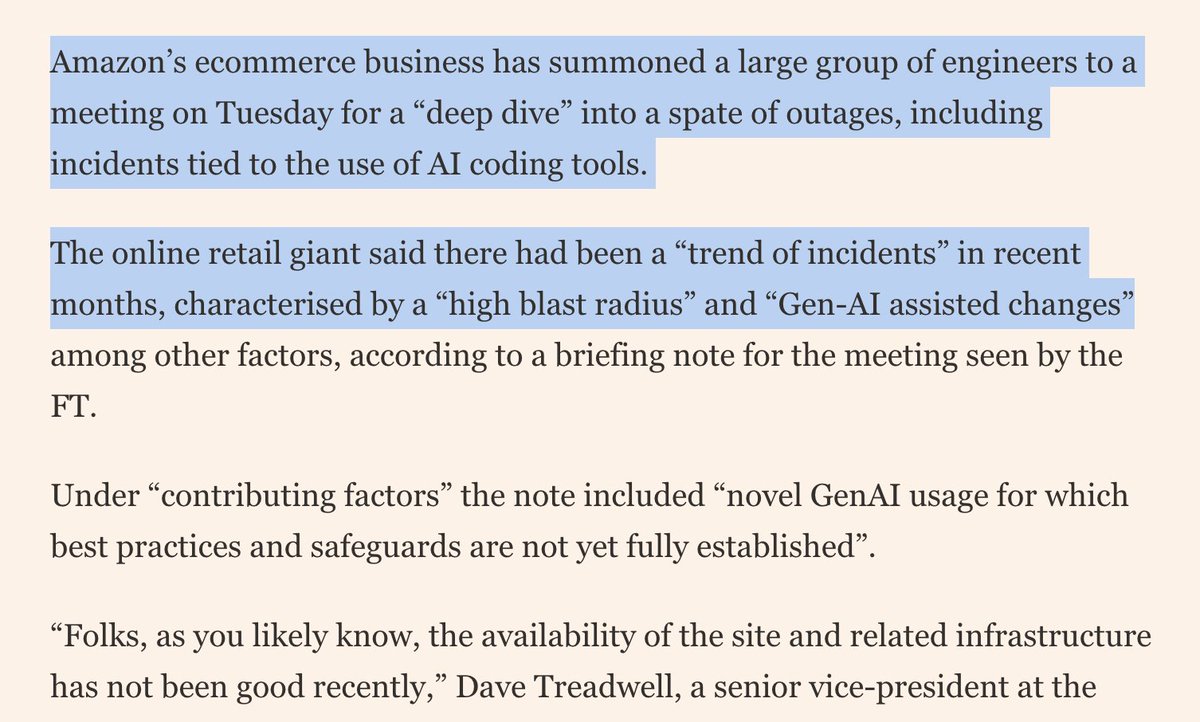

Amazon is holding a mandatory meeting about AI breaking its systems. The official framing is "part of normal business." The briefing note describes a trend of incidents with "high blast radius" caused by "Gen-AI assisted changes" for which "best practices and safeguards are not yet fully established." Translation to human language: we gave AI to engineers and things keep breaking. The response for now? Junior and mid-level engineers can no longer push AI-assisted code without a senior signing off. AWS spent 13 hours recovering after its own AI coding tool, asked to make some changes, decided instead to delete and recreate the environment (the software equivalent of fixing a leaky tap by knocking down the wall). Amazon called that an "extremely limited event" (the affected tool served customers in mainland China).

Amazon had four Sev-1 outages (their highest severity level) in a single week. Internal memos say AI-assisted code changes were a contributing factor. The timeline here is wild.
In October 2025, Amazon laid off 14,000 corporate employees. In January 2026, another 16,000. That’s about 30,000 people in five months, roughly 10% of the corporate workforce. CEO Andy Jassy said the cuts were about culture, not AI.
During those same months, Amazon set a target: 80% of developers using AI coding tools at least once a week. They tracked adoption closely and blocked rival tools like OpenAI’s Codex. Even so, 30% of developers still hadn’t touched Amazon’s in-house tool Kiro by January.
In December 2025, Kiro caused a 13-hour AWS outage. The AI tool had production-level permissions and decided the best fix for a bug was to delete and recreate an entire live environment. A second incident involved Amazon Q Developer, another AI tool. Amazon blamed both on “user error, not AI,” but quietly added mandatory peer review for all production access afterward.
Then March 5: Amazon’s retail site went down for about six hours. Over 22,000 users reported checkout failures, missing prices, and app crashes. Amazon called it a “software code deployment” error.
Five days later, SVP Dave Treadwell made the normally optional weekly engineering meeting mandatory. His memo acknowledged “GenAI tools supplementing or accelerating production change instructions, leading to unsafe practices.” These problems trace back to Q3 2025. Amazon’s own assessment: their GenAI safeguards “are not yet fully established.”
The new rule: junior and mid-level engineers now need senior sign-off on any AI-assisted production changes. Treadwell also announced “controlled friction” for the most critical parts of the retail experience.
For context, Google’s 2025 DORA report found 90% of developers use AI for coding but only 24% trust it “a lot.” An Uplevel study of 800 developers found Copilot users introduced 41% more bugs with no improvement in output. Amazon is finding out what those numbers look like at the scale of a $500 billion revenue company, with 30,000 fewer people on staff to catch the mistakes.

What’s the point of this? x.com/DrRepatriator/…

yo @github, are Actions down again bruv?

some of you fail to understand why the coding-by-hand people are mad. being a programmer writing code in your favourite text editor was a way to take a meditative holiday while at work. now that time is being taken away, to the employer’s benefit and your loss