Pinned Tweet

In case you were wondering why there hasn't been a new @BedsideRounds in a while ...

magazine.hms.harvard.edu/articles/can-a…

Adam Rodman

16.2K posts

@AdamRodmanMD

Physician, educator, historian, author, podcaster, researcher @BIDMC_IM @HarvardMed @HarvardDBMI, host of @BedsideRounds, AE @NEJM_AI, studies 🤖+🧠. 🖖🚲

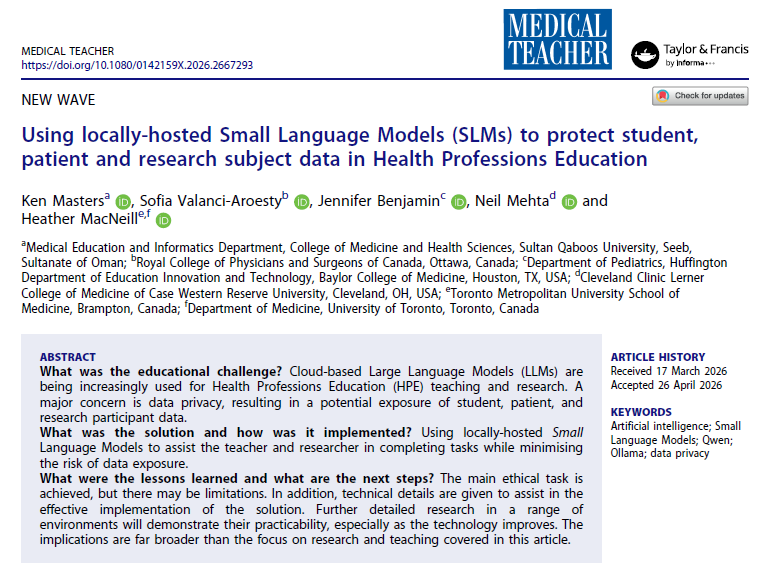

📄 Excited to share our latest preprint: the first cross-field audit of LLM-hallucinated citations in science ⚠️ Across arXiv, bioRxiv, SSRN & PMC, we estimate 147K fake citations in 2025 alone — threatening both the quality and equity of scientific work.