Aditya Kulshrestha

474 posts

@Aditya_kul02

GenAI & Solutions @ Intel | Ex https://t.co/Ted7LL3YNQ, https://t.co/oDR2AwJqny | Part time sasta Philosopher

🚨BREAKING: On Friday afternoon, an artificial intelligence coding agent powered by Anthropic's Claude Opus 4.6 deleted a company's entire production database in nine seconds. The company is called PocketOS. It is a software platform that powers car rental businesses. The database contained months of customer bookings, vehicle records, and operational data that small rental car companies relied on to run their businesses. When the database was deleted, all of the backups were deleted with it. Three months of customer reservations evaporated.

#Udemy data breach confirmed. After refusing to pay the ransom, hackers released data of 1.4M users, including personal and financial details. We @DarkEntryAms launched a lookup tool so you can check if you’re affected: darkentry.net/latest-breache… #DataBreach #Ransomware

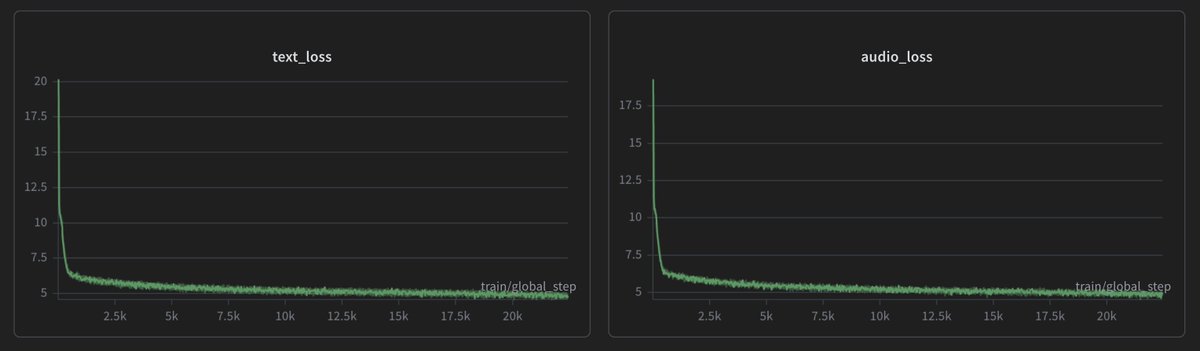

This is a reminder to myself to start building a model that can write good kernels. The challenge is the low availability of data and the limited pretraining corpus for current coding models.