Wrote another essay over a month ago. I hope it ages well. Has aged okay so far. Read it on my website: advait(dot)live/weavers. tldr: Bitter Lesson Pilled

Advait Raykar

960 posts

@AdvaitRaykar

Wrangling LLMs to help create more ethical supply chains. Cofounder and CEO at Elm AI | @Cornell CS

we also released swe bench and terminal bench this time. pls no more obama medal memes

Cursor is taking on Anthropic and OpenAI with a new AI coding model bloomberg.com/news/articles/…

so many ai products i hear about aren't based in sf
what's crazier is all of these products have less successful competitors based in sf
makes you think

Interesting stat - our enterprise customers have already done more Devin sessions (and more merged Devin PRs) in 2026 than in all of 2025. Not bad for 2-ish months into the year!

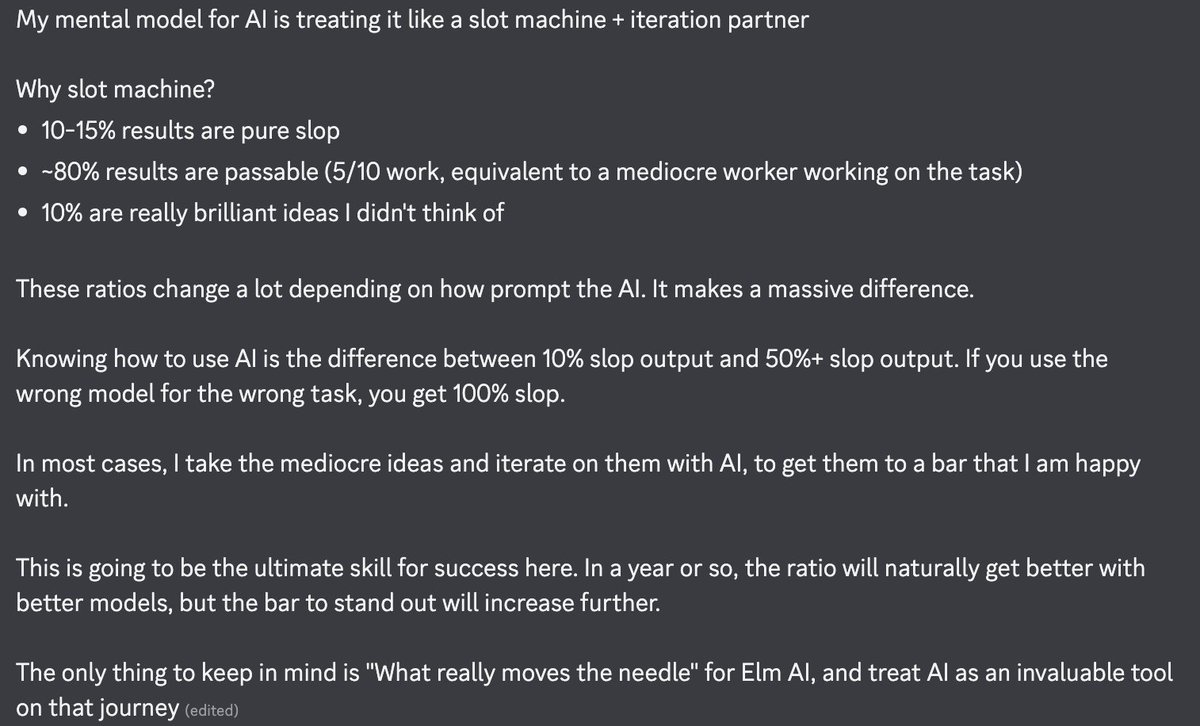

I think we have lost some sense of judgment and moderation in how we currently build products. The moment you turn something into a universally celebrated metric, whether that is token burn, prototype count, or percentage of agent-written code, you start losing sight of what actually matters. I have felt the same way for a long time about overusing data and A/B testing to build products. The moment you reduce product quality or productivity to a metric, you stop shipping value and start shipping numbers. A lot of what people are doing with AI makes directional sense. The missing piece is counterbalance:
1. AI should help engineers build better products. Leaderboards and adoption metrics can be useful as directional signals. They do not tell you what is being built, whether it is good, or whether it should exist at all.
2. Users do not care what percentage of your code was written by agents. They care about the outcome. Faster output is useful, but as usual, speed alone does not seem to add to the quality, clarity, or stability of products. The power to build should not become an excuse to lower quality bars.
3. LLM-generated prototypes can feel like late-night whiteboarding sessions. They look exciting in the moment and feel productive very quickly. Then a few days later you realize the idea was shallow, distracting, or simply wrong. The same trap shows up in jumping straight to code and solutions more broadly. You may just be building the wrong thing more efficiently. Prototyping has its place. So do clear thinking, good design, and a real understanding of the user’s problem. In terms of activity and momentum, the main quest and the side quest can both feel productive, but only one actually moves the mission forward.
4. Adding more to products is as dangerous as ever, even if the time or effort to add it has gone down. Every addition creates complexity, maintenance cost, and user confusion. New features should be pushed back on unless there is a clear case for why they should exist and how they improve the product.
5. Not everything needs to be agent-shaped. A simple scheduled task does not need a full LLM sandbox. Making something agentic because it feels current or impressive does not make it right-sized, correct, or effective.
The core ideas are:
- Even if you can, maybe you should not.
- Having more power to build should not reduce our need to think; it should increase it.

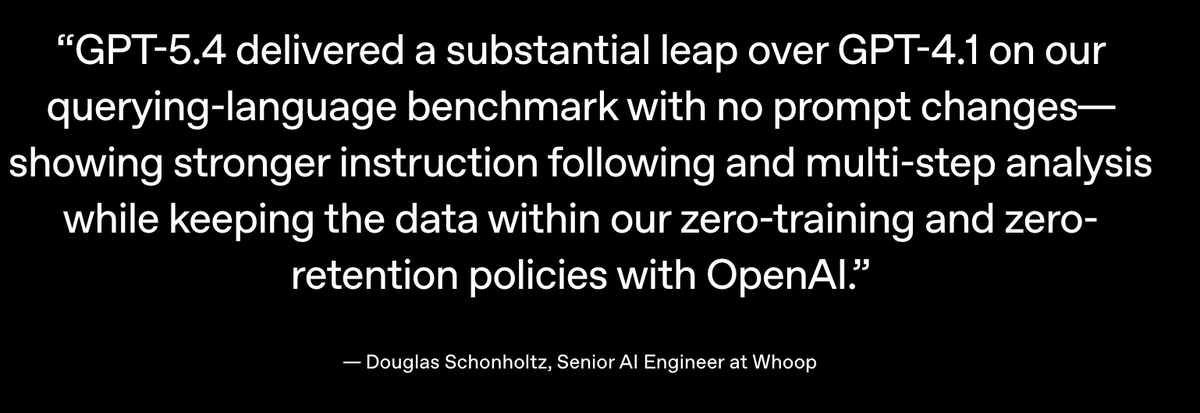

GPT-5.4, WebSockets, /fast mode, oh my Codex Update now for the latest goodies openai.com/index/introduc…

Product Idea: There should be a shared "Claude Code" instance that can be passed around between different people. It's dumb to have the software repo as an intermediate step.

We are sharing an early preview of our ongoing SWE-1.6 training run. It significantly improves upon SWE-1.5 while being post-trained on the same pre-trained model - and it runs just as fast, at 950 tok/s. On SWE-Bench Pro it exceeds top open-source models. The preview model still exhibits some undesirable behaviors, like overthinking and excessive self-verification, which we aim to improve. We are rolling out early access to a small subset of users in Windsurf.