Alberto Fuentes (e/acc)

59.1K posts

@AlberFuen

Founder of @daertml. Training LLaMAs as a hobby (and no profit yet).

Madrid, Comunidad de Madrid · Joined April 2018

2.8K Following · 759 Followers

Alberto Fuentes (e/acc) retweeted

🥩 Five tips for buying at the butcher's if you feel shy (or don't know how to order)

The counter can be intimidating, and most people end up going to the supermarket to buy meat in trays. Mistake. Your neighborhood butcher is a great ally for your cooking.

Keep these key points in mind: 👇

1️⃣ Order by portions, not by grams. Working out "300 grams of loin" is a hassle. Ask for "4 thin steaks" or "2 chicken thighs". You know how much gets eaten at home, and the butcher knows the exact thickness to make it turn out well.

2️⃣ Talk about recipes, not anatomy. You don't need to know what babilla or tapilla (cuts of beef) are. Tell the butcher: "I want to make a stew for three people" or "something for the griddle that stays juicy". Let them do their job.

3️⃣ Take advantage of the labor (it's free). The butcher's shop isn't the supermarket. Want the meat in strips for a wok? Finely minced? Without a gram of fat? Ask for it. You go home with the mise en place done.

4️⃣ The whole-chicken 'trick'. It isn't really a trick, but it's something you may not know: buying a whole chicken is much cheaper than buying the separate trays. Ask the butcher to break it down for you: filleted breasts, thighs for roasting, wings...

5️⃣ Don't leave the liquid gold behind (the bones). When they break down that chicken or trim a cut of meat for you, always ask them to save the bones and carcasses. They're the free base for the best stocks and stews you'll ever make.

💡 You just have to lose your fear of the counter.

What's your most useful trick when you shop at the butcher's? 👇

Alberto Fuentes (e/acc) retweeted

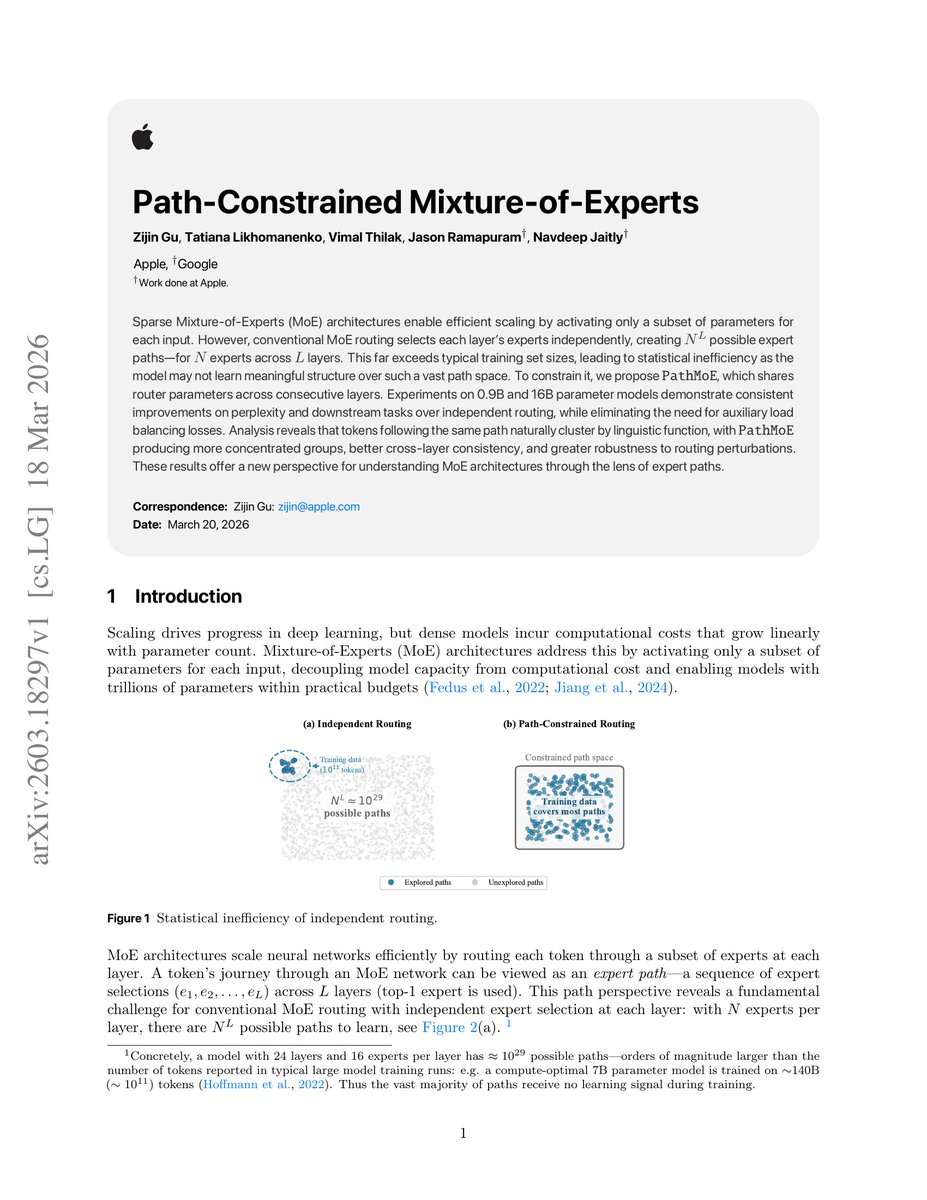

Here's a common misconception about RAG!

When we talk about RAG, the usual assumption is: index the doc → retrieve the same doc.

But indexing ≠ retrieval

So the data you index doesn't have to be the data you feed the LLM during generation.

Here are 4 smart ways to index data:

1) Chunk Indexing

- The most common approach.

- Split the doc into chunks, embed, and store them in a vector DB.

- At query time, the closest chunks are retrieved directly.

This is simple and effective, but large or noisy chunks can reduce precision.
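A minimal sketch of chunk indexing, under loud assumptions: `embed` is a toy bag-of-words stand-in for a real embedding model, a plain Python list plays the role of the vector DB, and every function name here is hypothetical rather than taken from the thread.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def split_into_chunks(doc, size):
    """Split a document into chunks of at most `size` words."""
    words = doc.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def build_index(doc, size):
    """Stand-in for a vector DB: a list of (vector, chunk_text) pairs."""
    return [(embed(c), c) for c in split_into_chunks(doc, size)]


def retrieve(index, query, k=1):
    """Return the k chunks whose vectors are closest to the query vector."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[0]), reverse=True)
    return [text for _, text in ranked[:k]]


doc = "Cats sleep most of the day. Vector databases store embeddings for search."
index = build_index(doc, size=6)
print(retrieve(index, "where are embeddings stored"))
```

Here the indexed text and the returned text are the same object, which is exactly the coupling the other three strategies loosen.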

2) Sub-chunk Indexing

- Take the original chunks and break them down further into sub-chunks.

- Index using these finer-grained pieces.

- Retrieval still gives you the larger chunk for context.

This helps when documents contain multiple concepts in one section, increasing the chances of matching queries accurately.
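One way to picture sub-chunk indexing, as a sketch only: sentences are used as the sub-chunks (an assumption; the thread does not say how to split), `embed` is a toy bag-of-words stand-in for an embedding model, and the helper names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_subchunk_index(chunks):
    """Index every sentence, but store only the id of its parent chunk."""
    index = []
    for parent_id, chunk_text in enumerate(chunks):
        for sentence in re.split(r"(?<=[.!?])\s+", chunk_text.strip()):
            if sentence:
                index.append((embed(sentence), parent_id))
    return index


def retrieve_parent(index, chunks, query):
    """Match the query against sub-chunks, then return the whole parent chunk."""
    q = embed(query)
    _, parent_id = max(index, key=lambda item: cosine(q, item[0]))
    return chunks[parent_id]


chunks = [
    "Refunds take five days. Shipping is free over fifty euros.",
    "Our office is in Madrid. Support is open on weekdays.",
]
index = build_subchunk_index(chunks)
print(retrieve_parent(index, chunks, "is shipping free"))
```

The query matches the short shipping sentence, yet the caller receives the full two-sentence chunk, so the LLM still sees the surrounding context.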

3) Query Indexing

- Instead of indexing the raw text, generate hypothetical questions that an LLM thinks the chunk can answer.

- Embed those questions and store them.

- During retrieval, real user queries naturally align better with these generated questions.

- A similar idea is also used in HyDE, but there, we match a hypothetical answer to the actual chunks.

This is great for QA-style systems, since it narrows the semantic gap between user queries and stored data.
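A rough sketch of query indexing. The hypothetical questions are hard-coded where a real system would ask an LLM to generate them, `embed` is a toy bag-of-words stand-in for an embedding model, and all names are illustrative assumptions.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_question_index(questions_per_chunk):
    """Index the generated questions; each entry points back to its chunk id."""
    index = []
    for chunk_id, questions in enumerate(questions_per_chunk):
        for question in questions:
            index.append((embed(question), chunk_id))
    return index


def retrieve(index, chunks, query):
    """Match the user query against stored questions, return the source chunk."""
    q = embed(query)
    _, chunk_id = max(index, key=lambda item: cosine(q, item[0]))
    return chunks[chunk_id]


chunks = [
    "Password resets are handled from the account settings page.",
    "Invoices are emailed on the first of each month.",
]
# Assumption: in practice an LLM would write these from each chunk.
questions_per_chunk = [
    ["How do I reset my password?"],
    ["When do I get my invoice?"],
]
index = build_question_index(questions_per_chunk)
print(retrieve(index, chunks, "how can I reset my password"))
```

The user query is compared question-to-question, which is where the narrower semantic gap comes from; the chunk text itself is never embedded.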

4) Summary Indexing

- Use an LLM to summarize each chunk into a concise semantic representation.

- Index the summary instead of the raw text.

- Retrieval still returns the full chunk for context.

This is particularly effective for dense or structured data (like CSVs/tables) where embeddings of raw text aren’t meaningful.
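A small sketch of summary indexing on CSV-like chunks. The summaries are hard-coded where a real system would have an LLM write them, the data is made up for illustration, `embed` is a toy bag-of-words stand-in for an embedding model, and the function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_summary_index(summaries):
    """Index one summary per chunk; position in the list is the chunk id."""
    return [(embed(summary), chunk_id) for chunk_id, summary in enumerate(summaries)]


def retrieve(index, chunks, query):
    """Match the query against summaries, return the full raw chunk."""
    q = embed(query)
    _, chunk_id = max(index, key=lambda item: cosine(q, item[0]))
    return chunks[chunk_id]


# Illustrative raw chunks: tiny CSV fragments with opaque headers.
chunks = ["sid,q1,q2\n17,3400,3900", "sid,q1,q2\n22,5100,4800"]
# Assumption: in practice an LLM would produce these summaries.
summaries = [
    "Revenue for the Madrid store grew between Q1 and Q2.",
    "Revenue for the Lisbon store fell between Q1 and Q2.",
]
index = build_summary_index(summaries)
query = "which store grew revenue"
print(cosine(embed(query), embed(chunks[0])))  # raw CSV shares no terms with the query
print(retrieve(index, chunks, query))
```

The raw rows give the query nothing to match against, while the summary does; the LLM still receives the original rows at generation time.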

👉 Over to you: What are some strategies that you commonly use for RAG indexing?

Alberto Fuentes (e/acc) retweeted

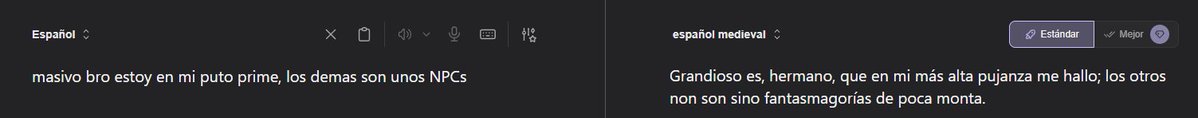

this translator is the shit, I'm telling you

maite figueroa (la cuqui) @AsiGarSan2

a translator has never described me this well until now

Alberto Fuentes (e/acc) retweeted

Seems to be a systematic scaling study for diffusion language models 👍 Not surprised that the exponents are still similar to Chinchilla's. But what is the origin of the 21.8x speedup? So far, my guess is that diffusion models enable better hyperparameter choices.

Chen-Hao (Lance) Chao @chenhao_chao

(1/7) We introduce MDM-Prime-v2 which scales 21.8× better than autoregressive models (ARMs) in compute-optimal comparisons. 📎 Paper: arxiv.org/abs/2603.16077 🌟 Blog: chen-hao-chao.github.io/mdm-prime-v2 ⌨️ Github: github.com/chen-hao-chao/… Here’s how we did it👇:

RT @victormustar: Forgot about this qwen3.5-0.8B experiment, the results:

- 0% -> 26.5% DOOM action prediction, 16 autonomous experiments…

Alberto Fuentes (e/acc) retweeted

3DreamBooth: high-fidelity 3D subject-driven video generation.

AI just made running an e-commerce brand ridiculously easy.

- view-consistent videos from multi-view references

- snap a few photos of your product

- turns them into cinematic 3D videos

- perfect 360-degree rotation in any scene

- zero warped logos or lost textures

it's HunyuanVideo-1.5 + LoRA.

ko-lani.github.io/3DreamBooth/

Alberto Fuentes (e/acc) retweeted

🤌 It didn't even touch the green

😍 This angle of @brysondech's slam dunk...

#LIVGolfSouthAfrica | @Crushers_GC

Alberto Fuentes (e/acc) retweeted

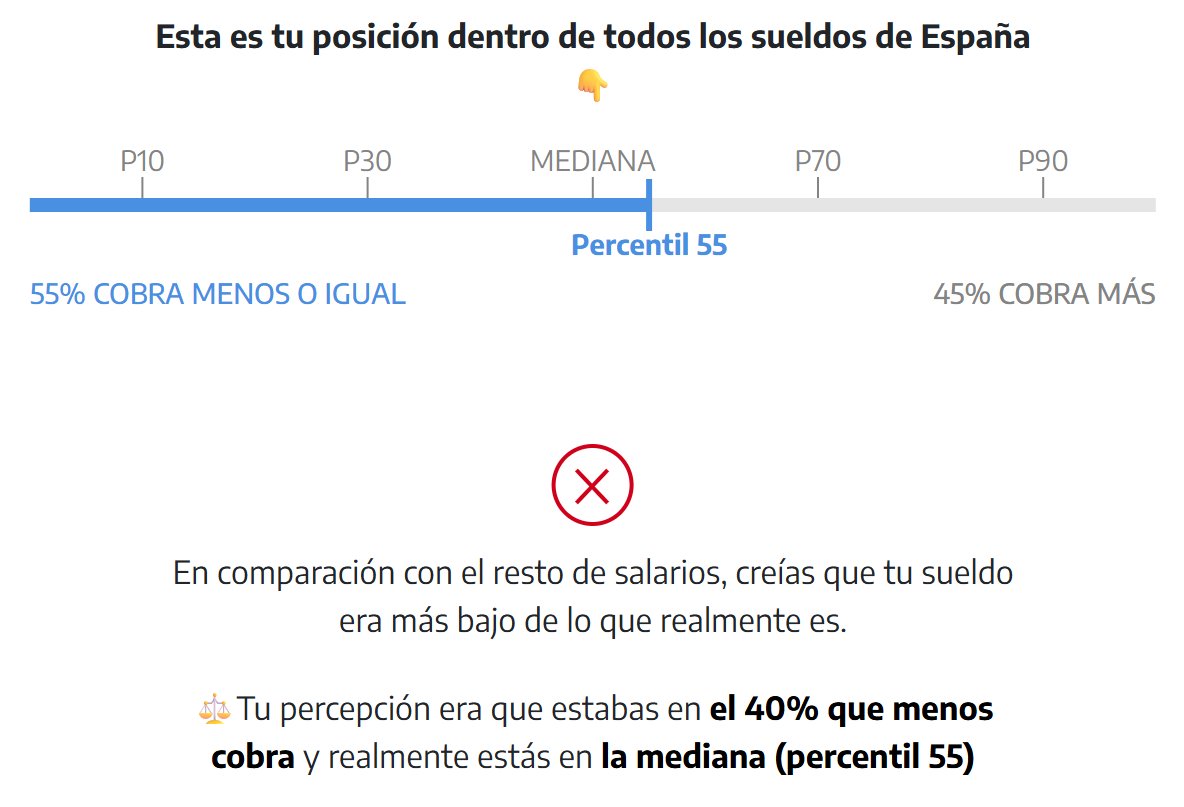

🆕 Today at @elDiarioes we are updating our salary calculator again with the data from the EES

💵⚖️ Think you're underpaid? We offer you a reality check so you can see where you stand on Spain's salary scale
