Alex Bodner

3.7K posts

@AlexBodner_

AI engineering at @UdeSA🇦🇷 | open source @roboflow Posting on AI progress and my own projects

HOW THE HELL DID GASLY END UP IN THAT POSITION 😭😭

Introducing ml-intern, the agent that just automated the post-training team @huggingface

It's an open-source implementation of the real research loop that our ML researchers do every day. You give it a prompt, it researches papers, goes through citations, implements ideas in GPU sandboxes, iterates and builds deeply research-backed models for any use case. All built on the Hugging Face ecosystem.

It can pull off crazy things:

We made it train the best model for scientific reasoning. It went through citations from the official benchmark paper. Found OpenScience and NemoTron-CrossThink, added 7 difficulty-filtered dataset variants from ARC/SciQ/MMLU, and ran 12 SFT runs on Qwen3-1.7B. This pushed the score 10% → 32% on GPQA in under 10h. Claude Code's best: 22.99%.

In healthcare settings it inspected available datasets, concluded they were too low quality, and wrote a script to generate 1100 synthetic data points from scratch for emergencies, hedging, multilingual etc. Then upsampled 50x for training. Beat Codex on HealthBench by 60%.

For competitive mathematics, it wrote a full GRPO script, launched training with A100 GPUs on hf.co/spaces, watched rewards climb and then collapse, and ran ablations until it succeeded.

All fully backed by papers, autonomously.

How does it work? ml-intern makes full use of the HF ecosystem:

- finds papers on arxiv and hf.co/papers, reads them fully, walks citation graphs, pulls datasets referenced in methodology sections and on hf.co/datasets
- browses the Hub, reads recent docs, inspects datasets and reformats them before training so it doesn't waste GPU hours on bad data
- launches training jobs on HF Jobs if no local GPUs are available, monitors runs, reads its own eval outputs, diagnoses failures, retrains

ml-intern deeply embodies how researchers work and think. It knows what data should look like and what good models feel like.

Releasing it today as a CLI and a web app you can use from your phone/desktop.

CLI: github.com/huggingface/ml…
Web + mobile: huggingface.co/spaces/smolage…

And the best part? We also provisioned $1k of GPU resources and Anthropic credits for the quickest among you to use.
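
For readers curious what "upsampled 50x" could look like in practice, here is a minimal sketch. It is not taken from ml-intern: the `upsample` helper, the record layout, and the fixed seed are all assumptions made for illustration. It simply repeats each synthetic record a fixed number of times and shuffles the result.

```python
import random


def upsample(records, factor, seed=0):
    """Repeat every record `factor` times, then shuffle deterministically.

    Hypothetical helper for illustration; not part of ml-intern.
    """
    rng = random.Random(seed)
    repeated = [record for record in records for _ in range(factor)]
    rng.shuffle(repeated)
    return repeated


# Placeholder records standing in for the 1100 synthetic data points.
synthetic = [{"id": i, "text": f"synthetic example {i}"} for i in range(1100)]

# 50x upsampling, as described in the post.
train_set = upsample(synthetic, factor=50)
```

Plain repetition like this only raises the weight of the synthetic data in a training mix; it adds no new information, so the quality of the original 1100 points still matters.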

SpaceXAI and @cursor_ai are now working closely together to create the world’s best coding and knowledge work AI. The combination of Cursor’s leading product and distribution to expert software engineers with SpaceX’s million H100 equivalent Colossus training supercomputer will allow us to build the world’s most useful models. Cursor has also given SpaceX the right to acquire Cursor later this year for $60 billion or pay $10 billion for our work together.

At the @AnthropicAI hackathon we've been testing a way of adding muscle memory to LLMs on top of the @aisdk. You follow a recipe the first 10 times, then you don't need it. Same with repetitive workflows: tool calls get automated and skip the LLM entirely. Less cost & latency
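
The idea can be sketched in a few lines. This is not the hackathon project's code and does not use the AI SDK; the `MuscleMemory` class, the `planner` callback standing in for the LLM, and the rule "replay once the last 10 plans were identical" are assumptions chosen to mirror the "first 10 times" wording.

```python
from typing import Callable, Dict, List, Tuple

# A tool call is modelled as (tool name, arguments). Hypothetical shape.
ToolCall = Tuple[str, dict]


class MuscleMemory:
    """Replay a cached tool-call sequence once a workflow has stabilised."""

    def __init__(self, planner: Callable[[str], List[ToolCall]], threshold: int = 10):
        self.planner = planner          # stands in for the LLM call
        self.threshold = threshold      # identical runs needed before replay
        self.history: Dict[str, List[List[ToolCall]]] = {}
        self.planner_calls = 0          # how often the "LLM" was actually used

    def plan(self, task: str) -> List[ToolCall]:
        runs = self.history.setdefault(task, [])
        recent = runs[-self.threshold:]
        # Skip the planner if the last `threshold` runs all used the same calls.
        if len(recent) == self.threshold and all(r == recent[0] for r in recent):
            return recent[-1]
        self.planner_calls += 1
        calls = self.planner(task)
        runs.append(calls)
        return calls
```

With a planner that always returns the same sequence, the first 10 requests for a task go through the planner and every later one is served from the cache. A real system would also need a way to invalidate the cache when a replayed call fails.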

a moving man will meet his luck 🥀

Remember this? Well.. I did it

🔍🇦🇷 These are the main details of the Argentina national team's NEW AWAY JERSEY for the 2026 World Cup: ◉ Predominantly black with white logos and sky-blue details. ◉ Inspired by the fileteado porteño that decorates signs and city buses. ◉ Uses the Adidas trefoil logo. ◉ World champion patch. ◉ Sol de Mayo on the nape alongside the word ‘Argentina’.

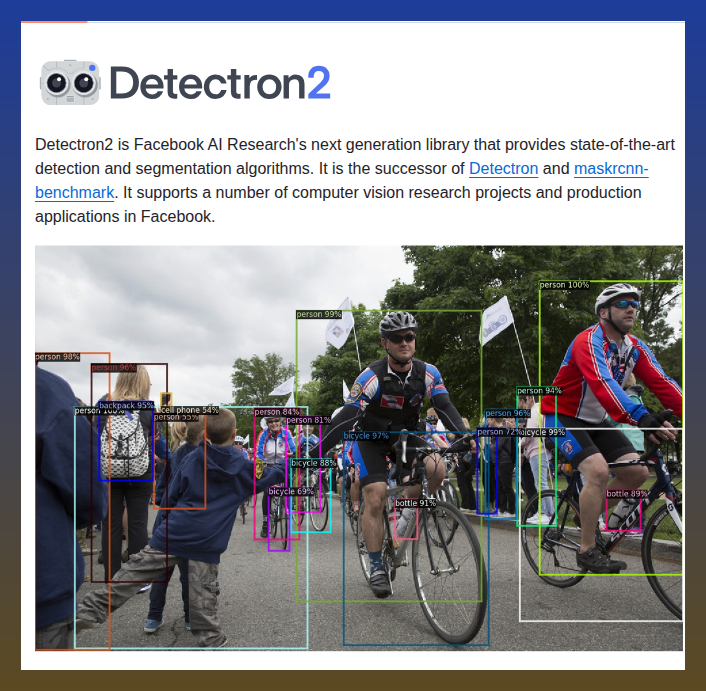

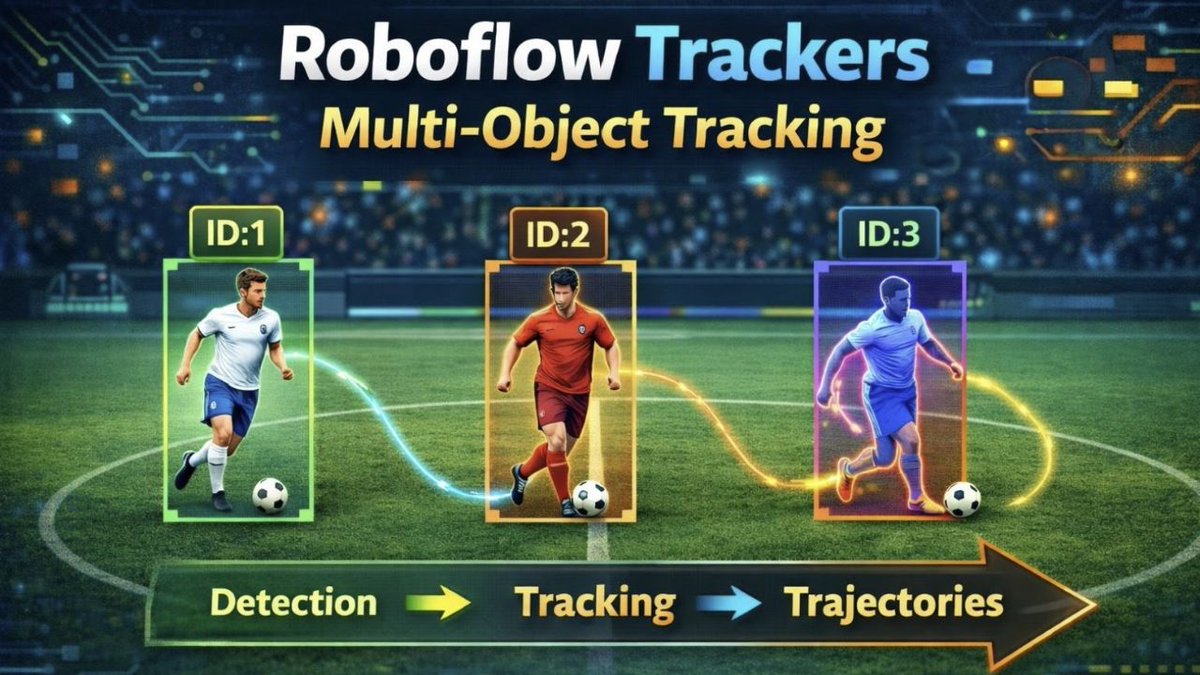

🔥𝗡𝗲𝘄 𝗧𝗿𝗮𝗰𝗸𝗲𝗿 in @roboflow 𝗧𝗿𝗮𝗰𝗸𝗲𝗿𝘀 library 🚀 After ByteTrack, we are adding 𝗢𝗖-𝗦𝗢𝗥𝗧: a tracker built for what most trackers fail at: non-linear motion + occlusion. Think: dancers, athletes, animals, chaos. SORT loses track when occluded, OC-SORT doesn’t.👇
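
To see why non-linear motion plus occlusion is hard, here is a toy sketch. It is neither OC-SORT nor the Trackers library API; all function names and the circular trajectory are assumptions for illustration. It extrapolates a point at constant velocity while it is "occluded", a simplified stand-in for the linear motion assumption behind SORT-style trackers, and measures how far the prediction drifts from the true position.

```python
import math


def circular_position(t, radius=10.0, omega=0.2):
    """True position of an object moving on a circle (non-linear motion)."""
    return (radius * math.cos(omega * t), radius * math.sin(omega * t))


def constant_velocity_prediction(p_prev, p_last, steps):
    """Extrapolate linearly from the last two observations."""
    vx = p_last[0] - p_prev[0]
    vy = p_last[1] - p_prev[1]
    return (p_last[0] + vx * steps, p_last[1] + vy * steps)


def drift_after_occlusion(occluded_frames):
    """Distance between the linear prediction and the true position
    after the object has been unobserved for `occluded_frames` frames."""
    p_prev = circular_position(0)
    p_last = circular_position(1)   # last frame before the occlusion
    predicted = constant_velocity_prediction(p_prev, p_last, occluded_frames)
    actual = circular_position(1 + occluded_frames)
    return math.dist(predicted, actual)
```

Comparing `drift_after_occlusion(2)` with `drift_after_occlusion(10)` shows the error growing as the occlusion gets longer, which is the failure mode the post says OC-SORT is built to handle.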