Alex

234 posts

Alex

@AlexFromAtomic

head of marketing at @atomic_chat_hq

California, USA Katılım Ocak 2026

180 Takip Edilen100 Takipçiler

Alex retweetledi

Anthropic has opened Claude Security in public beta for Claude Enterprise customers, turning Claude[.]ai into a codebase scanner that finds vulnerabilities, checks them in context, and drafts patches for review.

Traditional security scanners mostly match patterns, but many serious bugs depend on how data, permissions, and control flow move across files, which is why teams often get both missed issues and piles of noisy alerts.

Claude Security is trying to handle that gap by scanning a repo, validating whether a suspected issue actually holds up, and then returning the severity, affected file and line, explanation, and a suggested fix.

The product is packaged as a built-in workflow rather than a custom security stack, so teams do not need a separate API integration or agent build if they already run Claude Code on the Web inside Claude Enterprise.

The setup is tightly bounded to enterprise controls, including the Anthropic GitHub App, GitHub[.]com repositories, premium user seats, and consumption billing with configurable spend limits.

Teams can scope scans to a branch or directory, run parallel projects, choose Regular or Extended effort, and schedule recurring scans, with Anthropic explicitly recommending narrower scope for large repos and monorepos to improve reliability.

Each finding can be exported to CSV or Markdown, pushed through webhooks or email, opened in a remediation session that generates a candidate patch, or dismissed with a reason that carries forward across future scans.

Claude@claudeai

Claude Security is now in public beta for Claude Enterprise customers. Claude scans your codebase for vulnerabilities, validates each finding to cut false positives, and suggests patches you can review and approve.

English

@therealbifkn @kimmonismus @atomic_chat_hq I like Qwen version ;) Let's host it and make our own B2B AI SaaS with 10k MRR))

English

Alex retweetledi

/1 Gemma 4 31B just crushed Qwen 3.6 27B in a local LLM gamedev contest inside @atomic_chat_hq (prompt is below)

Device: MacBook Pro M5 Max, 64GB RAM

Results:

Qwen 3.6 27B: 32 tokens/sec · 18m 04s · 33,946 tokens

Gemma 4 31B: 27 tokens/sec · 3m 51s · 6,209 tokens

So what is more important: tokens per second, or the quality of the final answer?

Qwen made a very long response and showed more creativity and visual style. But Gemma gave a shorter, clearer, and more logical answer in much less time. In this one-shot Pac-Man gamedev contest, Gemma 4 31B was the clear winner. Its game logic was stronger: click reactions were smoother, and it handled interactions with elements like walls, ghosts, and particle effects better.

But this was only one test. Maybe Qwen 3.6 27B can show better results with better settings. Open the comments, try our prompt, and share your result below.

English

Alex retweetledi

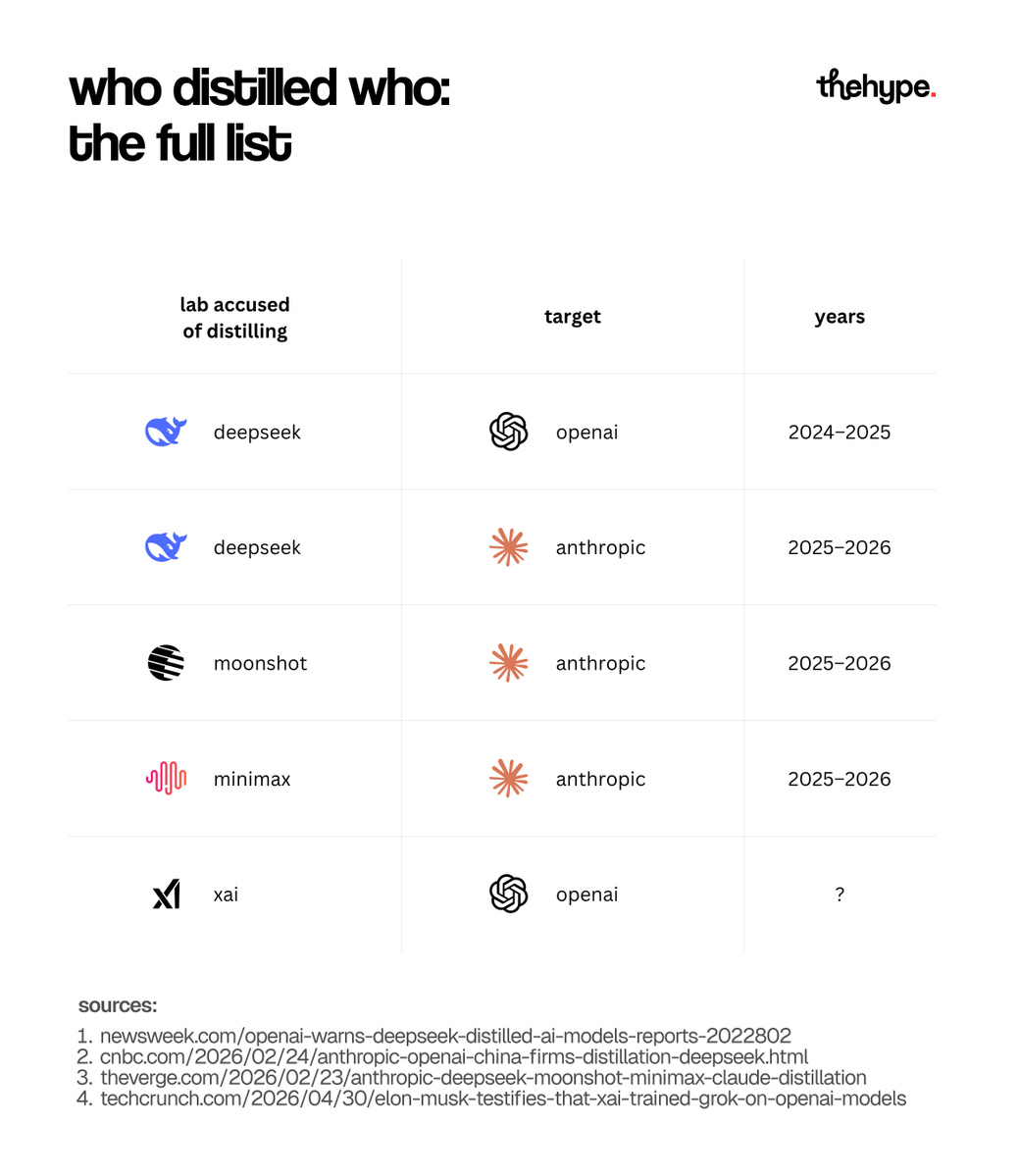

elon musk just admitted in federal court that xai trained grok using openai's models. his defense? "all ai companies do it."

he's not wrong. the practice is called model distillation – and it's quietly become the most controversial technique in the ai industry

here's how it works, who got caught, and why nobody is clean

what is it? a smaller "student" model learns from a larger "teacher" model's outputs – ending up nearly as capable, at a fraction of the cost. instead of training on raw human-labeled data, the student simply learns to mimic how the teacher responds. done with permission, it's a standard technique. done covertly against a competitor, it's where things get ugly

how is it done? you query the teacher at scale with thousands or millions of prompts, collect the outputs, and train the student on those pairs. the most powerful version captures full chain-of-thought reasoning – not just answers, but the step-by-step thinking behind them. the student doesn't copy answers. it absorbs a way of thinking

how many examples do you need? for narrow tasks – tone, formatting, domain-specific responses – 50k is fine. stanford's alpaca proved this in 2023 with 52k gpt-3 outputs: decent instruction-following, but noticeably weaker reasoning than the teacher.

for genuine capability transfer, you need millions. and quality beats quantity: 920 carefully chosen reasoning traces can outperform brute-force datasets many times larger. deepseek's alleged campaign ran 16 million+ queries. that's the real scale.

what does it cost? 50k examples from gpt-5.5 runs $75–150 via the batch api (openai's async mode, 50% off in exchange for 24hr turnaround).

gpt-5 nano brings that to $3.63. the real bill is training the student afterward – thousands to tens of thousands depending on size.

open-source teachers like deepseek v4, kimi k2.6, and qwen 3.6 plus are free to download – you only pay for gpu compute: $15-45 on rented hardware, or just electricity if you run it on your own machine

is it legal? nobody knows yet. every major lab bans it in their terms of service. no court has ruled. in april 2026, the white house issued memorandum nstm-4 formally designating large-scale distillation attacks as a national security threat

who did it? microsoft detected large-scale data extraction from openai accounts linked to deepseek in late 2024. openai says it has evidence. deepseek has never confirmed or denied it.

moonshot ai and minimax were accused by anthropic in early 2026 of running 16M+ queries across 24,000 fake accounts using vpn rotation and jailbreak prompts.

and now musk has confirmed xai did it too – at least partly. this is industry-wide

the counterpoint – mistral's magistral hit competitive benchmarks in 2025 through pure reinforcement learning, zero distillation. the honest path exists – just harder

the irony: the companies crying foul trained their own models on scraped internet data without consent 😎 the debate over whose copying is legitimate is very much still ongoing

follow @thehypedotnews for daily analysis and breakdowns in ai

The Verge@verge

Elon Musk confirms xAI used OpenAI’s models to train Grok theverge.com/ai-artificial-…

English

@JakeKAllDay @kimmonismus @atomic_chat_hq I ve tried GGUF. How much TpS do you have? Perhaps you have opened windows like safari etc...? My test was clear and opened was only Atomic Chat

English

@AlexFromAtomic @kimmonismus @atomic_chat_hq MLX or GGUF? That shouldn’t make the difference but just trying to match as much as I can

English

@thehypedotnews I've simply bought MacBook Pro M5Max without any research, so Mac is hype ;)

English

should you spend $5K on a Mac Studio or an RTX 5090 for local AI?

in this short guide you'll learn:

1. more memory vs more speed – what to choose

2. why faster isn't always better

3. which option to pick based on how you build

follow @thehypedotnews for daily analysis and breakdowns in ai

contributor: @digitalnoah

English

@ZerieMythicElf @kimmonismus @atomic_chat_hq great idea. have you tried the same feature? I guess Qwen’s output time is longer mainly because of the thinking step. But what would the result be without the same level of thinking?

English

@kimmonismus @atomic_chat_hq well, they could have turn off the thinking of both

English

@kimmonismus @atomic_chat_hq qwen game is much more realistic and correct!

English

@bygregorr @kimmonismus @atomic_chat_hq I think the correct way to measure output is to summarize all factors. In that sense, Gemma 4 definitely wins. It used fewer tokens, produced output faster, and the game quality is the same as Qwen’s. You get the same result at a lower cost.

English

@kimmonismus @atomic_chat_hq The real story isn't which model won, it's that Gemma did more with 5x fewer tokens. Speed is a red herring if you're measuring the wrong thing. What were the actual output quality scores?

English

@RanjYousif @kimmonismus @atomic_chat_hq Agreed. Gemma 4 is better for logical tasks and coding. It has a more rational way of solving problems.

English

@kimmonismus @atomic_chat_hq 6K tokens vs 34K for a better result. Gemma understood the assignment while Qwen wrote an essay about understanding the assignment. efficiency is the real benchmark for local

English

@kimmonismus @atomic_chat_hq What configs/quants are you using? I am getting at most 10 tok/s for Gemma 31b on m4 max, wouldn’t expect that large of a jump to m5

English

@davidpwalter @kimmonismus @atomic_chat_hq I like the Gemma version for its animation when you kill ghosts. screen shakes, and it’s stunning ;)

English

@kimmonismus @atomic_chat_hq The gemma4 results doesn't look nearly as good as the qwen3.6 result. ghosts overlapping with walls, dot (food) placement is weird. wall placement is less coherent.

English

@RamonVi25791296 @kimmonismus @atomic_chat_hq i guess yes - it is a single test. one shot one prompt :)

English

@kimmonismus @atomic_chat_hq Baseado em um único teste? No geral o Qwen3.6-27B é superior.

Português

@kimmonismus @atomic_chat_hq I need to include 31B back into my tests. Time to have it do octopus invaders again :)

English

@0z1_x @NousResearch well done ozi! thanks for making the best agent ever better) also thanks to @Teknium for supporting his contributors)

English

lately i have been spending my free time contributing to large open source projects like @NousResearch

reading complex code, finding and fixing bugs and seeing my PRs get merged... honestly i really enjoy this.

i am learning while contributing to the hermes agent community

shoutout to @Teknium for great reviews and guidance

English