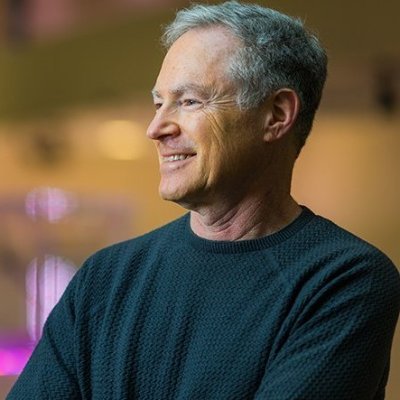

Alex Dimakis

4.5K posts

@AlexGDimakis

Professor, UC Berkeley | Founder @bespokelabsai |

We are excited to welcome Rohan Rao as Head of Data Operations at Bespoke Labs! Rohan joins us after spending 7 years at Grammarly as General Manager and Chief of Staff, where he supported transformations and contributed to 7x growth in team size and revenue.

How does prompt optimization compare to RL algos like GRPO? GRPO needs 1000s of rollouts, but humans can learn from a few trials—by reflecting on what worked & what didn't. Meet GEPA: a reflective prompt optimizer that can outperform GRPO by up to 20% with 35x fewer rollouts!🧵

A really excellent book. A few people independently told me this was one of their favorite books over a decade ago. I bought it, and it became one of the textbooks on my shelf I revisit from time to time to spark the joy of holding ideas to a different light. It brings to life the elegance of information theory. A good day to recognize 10 years since the passing of David MacKay.

Very excited that Microsoft is using our dataset OpenThoughts and summarizing it in this super-clever way to make reasoning much more efficient. Most people do not understand how verbose reasoning models can get: they often produce 30k tokens to answer one math question (that is 1/3 of a Harry Potter novel). In earlier research, we tried summarizing the reasoning traces and SFTing on that, but it killed reasoning performance. Microsoft did many clever tricks to break the reasoning traces into pieces (with dynamic programming!) and summarized them separately in self-contained nuggets they called mementos. They release these compactified reasoning traces in a new dataset called OpenMementos that is 6 times more compact on average. Very cool work on efficient reasoning.

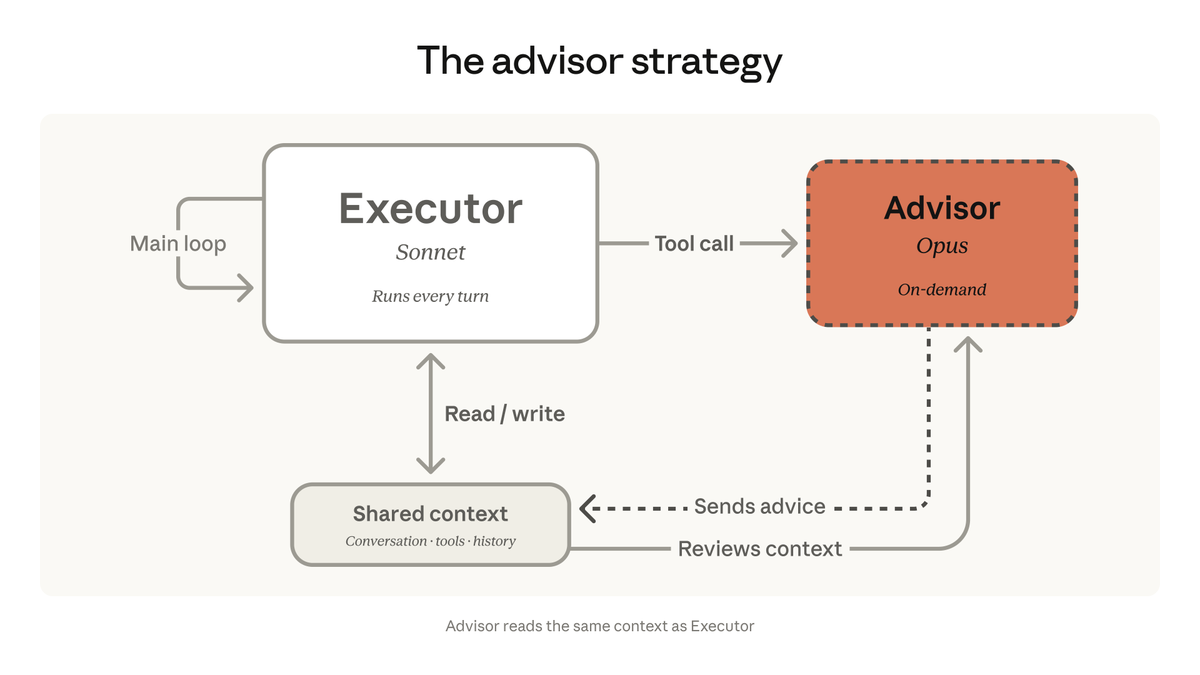

We're bringing the advisor strategy to the Claude Platform. Pair Opus as an advisor with Sonnet or Haiku as an executor, and get near Opus-level intelligence in your agents at a fraction of the cost.

A core dimension of intelligence is learning how to optimize learning and thinking under constraints of architecture, compute, and data resources. There are numerous challenges to solve in the pursuit of such “bounded optimality.” One question and opportunity is: “What should be remembered and recalled?” We’ve just published our paper on one piece of the memory challenge—on the effective compression of test-time reflection to reduce the size of context while keeping an eye on the coherence of the string of contextual memory. Read more here about our Memento project. Enjoyed the collaboration! @MSFTResearch @vkontonis @DimitrisPapail
