Alexander Lavin

@AlexLavin_C137

AI ∩ physics → *Simulation Intelligence* founder @simai4science + Digital Twin Earth @nasa @fdl_ai + 🦾 🛰 🧠 @vicariousai @numenta @cmu_robotics @cornelleng

For the last decade, it has been hard to stray off the beaten path of accepted wisdom that scaling training parameters drives innovation. However, the relationship between training compute + performance is uncertain + rapidly changing.

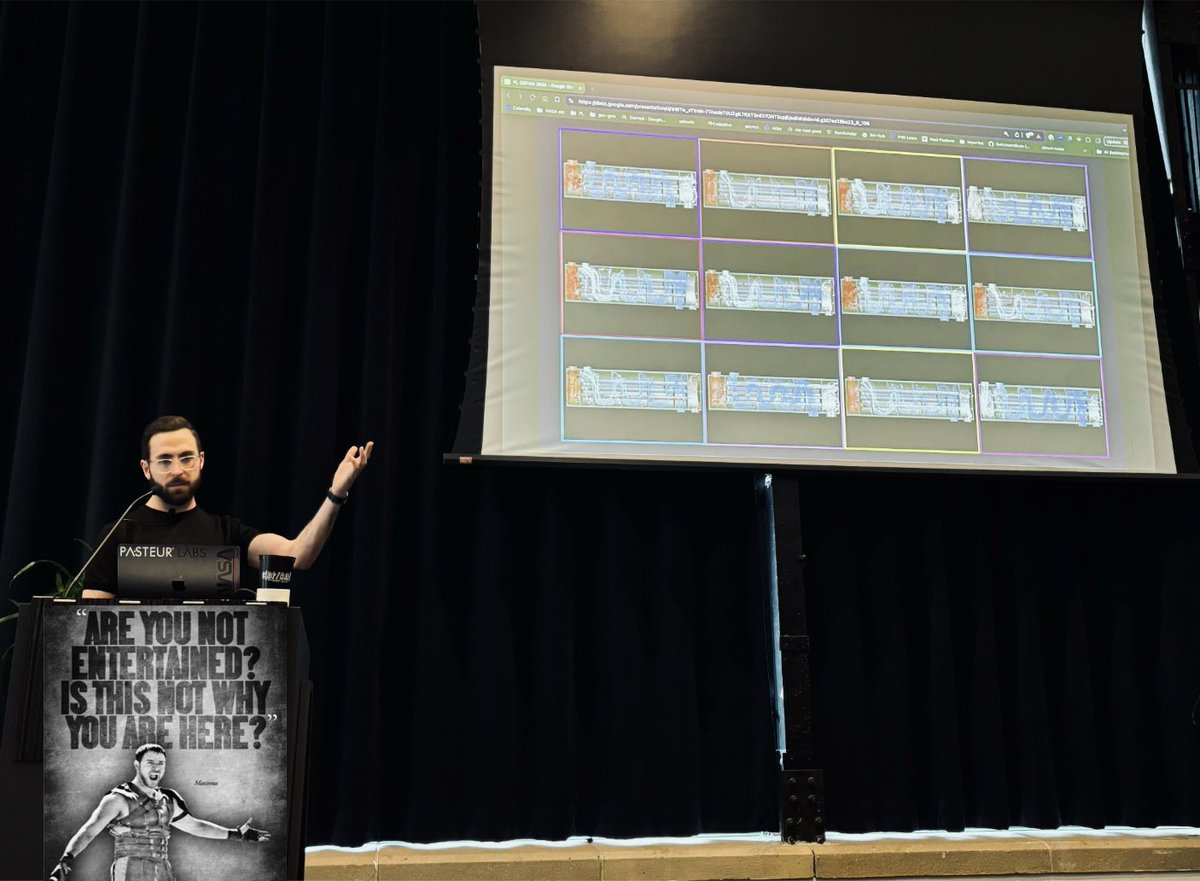

New in-depth blog post time: "Inside NVIDIA GPUs: Anatomy of high performance matmul kernels". If you want to deeply understand how one writes state of the art matmul kernels in CUDA read along.

(Remember matmul is the single most important operation that transformers execute both during training and inference. Most of NVIDIA compute is spent on it. Gaining 1% in efficiency translates to massive savings on the order of many nuclear reactors :P)

I, yet again, realized i underestimated the effort. 😅 Here is one more booklet (lol). 47 figures!

I covered:

* The fundamentals of the GPU architecture with an emphasis on the memory hierarchy, building mental models for GMEM, SMEM, and L1/L2, and then connecting them to the CUDA programming model. Along the way we also looked at the "speed of light," how it's bounded by power, with hardware reality leaking into our model.
* PTX/SASS, and how to steer the compiler into generating what we actually want (is that loop being unrolled, are we using vectorized loads like LDG.128, etc.). I've annotated one PTX/SASS example for a simple matmul kernel in excruciating detail. Even if you're new to compilers you should find this useful. (i actually found various inefficiencies in both compilers - fun!)
* Many core concepts such as tile/wave quantization, occupancy, ILP (instruction-level parallelism), roofline model, etc. Also building intuition around fundamental equivalences: dot product as a sum of partial outer products, why square tiles are the right shape for high arithmetic intensity, etc.
* The warp tiling method - which is near SOTA assuming you can't use tensor cores, TMA, async mem instructions, and bf16. Just maximizing GPU's performance using nothing but CUDA cores, registers and shared memory.
* Finally, we step into Hopper (H100): TMA, swizzling, tensor cores and the wgmma instruction, async load/store pipelines, scheduling policies like Hilbert curves, clusters with TMA multicast, faster PTX barriers, and more.

As always lots of examples, lots of visuals. This is the first time i could see the warp tiling kernel and be like "oh i get it completely". I just needed my mental image transformed into an actual image.

A few years ago I was really inspired by @Si_Boehm's excellent blog post on how matmul works, but I also found it had several errors, some unclear explanations, and it was quite outdated. Building on @pranjalssh's amazing work (who did a great job building sota kernels for H100) and my own research, this is the final result.

---

Again a huge thank you to @Hyperstackcloud (GPU cloud) for giving me an H100 (PCIe) node to run some of the experiments and analysis that i needed to write this up. Also a big thank you to my friends Aroun (who did a very thorough review of the post; Aroun's doing cool GPU/AI stuff at Magic and was previously GPU architect at Apple and Imagine, he's one of the best GPU people i know and we worked together on llm.c w/ @karpathy) and the amazing @marksaroufim! (PyTorch) for taking the time during the weekend when they didn't have to. :)
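Two of the ideas named in the post above (a matrix product written as a sum of partial outer products, and square tiles giving the highest arithmetic intensity) can be illustrated with a small pure-Python sketch. This is not code from the blog post: the function names `matmul_outer`, `matmul_dot`, and `tile_intensity` are hypothetical, and the intensity model is a deliberate simplification that only counts elements loaded per k-step for one output tile, ignoring caches, vectorized loads, and everything else a real kernel deals with.

```python
def matmul_dot(A, B):
    """Reference matmul: each C[i][j] is a dot product of a row of A and a column of B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]


def matmul_outer(A, B):
    """Same product, accumulated as a sum over k of outer(A[:, k], B[k, :])."""
    m, k_dim, n = len(A), len(B), len(B[0])
    C = [[0] * n for _ in range(m)]
    for k in range(k_dim):            # one rank-1 (outer product) update per k
        for i in range(m):
            a = A[i][k]               # element of column k of A, reused n times
            for j in range(n):
                C[i][j] += a * B[k][j]
    return C


def tile_intensity(tm, tn):
    """FLOPs per element loaded for one k-step of a tm x tn output tile.

    Simplified model (an assumption, not a measurement): each k-step loads
    tm elements of A and tn elements of B, and performs one multiply and
    one add per output element of the tile.
    """
    flops = 2 * tm * tn
    loads = tm + tn
    return flops / loads


if __name__ == "__main__":
    A = [[1, 2, 3], [4, 5, 6]]
    B = [[7, 8], [9, 10], [11, 12]]
    print(matmul_outer(A, B) == matmul_dot(A, B))
    # Three tile shapes with the same area (64 output elements):
    for shape in [(8, 8), (4, 16), (1, 64)]:
        print(shape, tile_intensity(*shape))
```

Under this toy model, all three tile shapes do the same 128 FLOPs per k-step, but the square 8 x 8 tile loads the fewest elements (16, versus 20 and 65), which is the intuition behind preferring square tiles.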

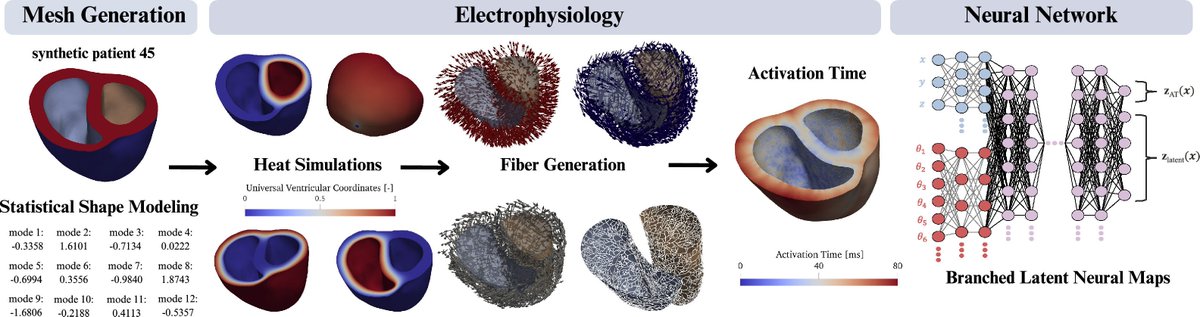

Journal of Open Source Software just published our "Tesseract Core: Universal, autodiff-native software components for Simulation Intelligence", @dionhaefner @AlexLavin_C137 🎉 doi.org/10.21105/joss.…

@AlexLavin_C137 @SimAI4Science Dope! 🔥

Today, I had the chance to present my #NeurIPS paper "APEBench" @SimAI4Science . You can find the recording on YouTube: youtu.be/wie-SzD6AJE