Alex Veshev


Some of his Instagram stories:

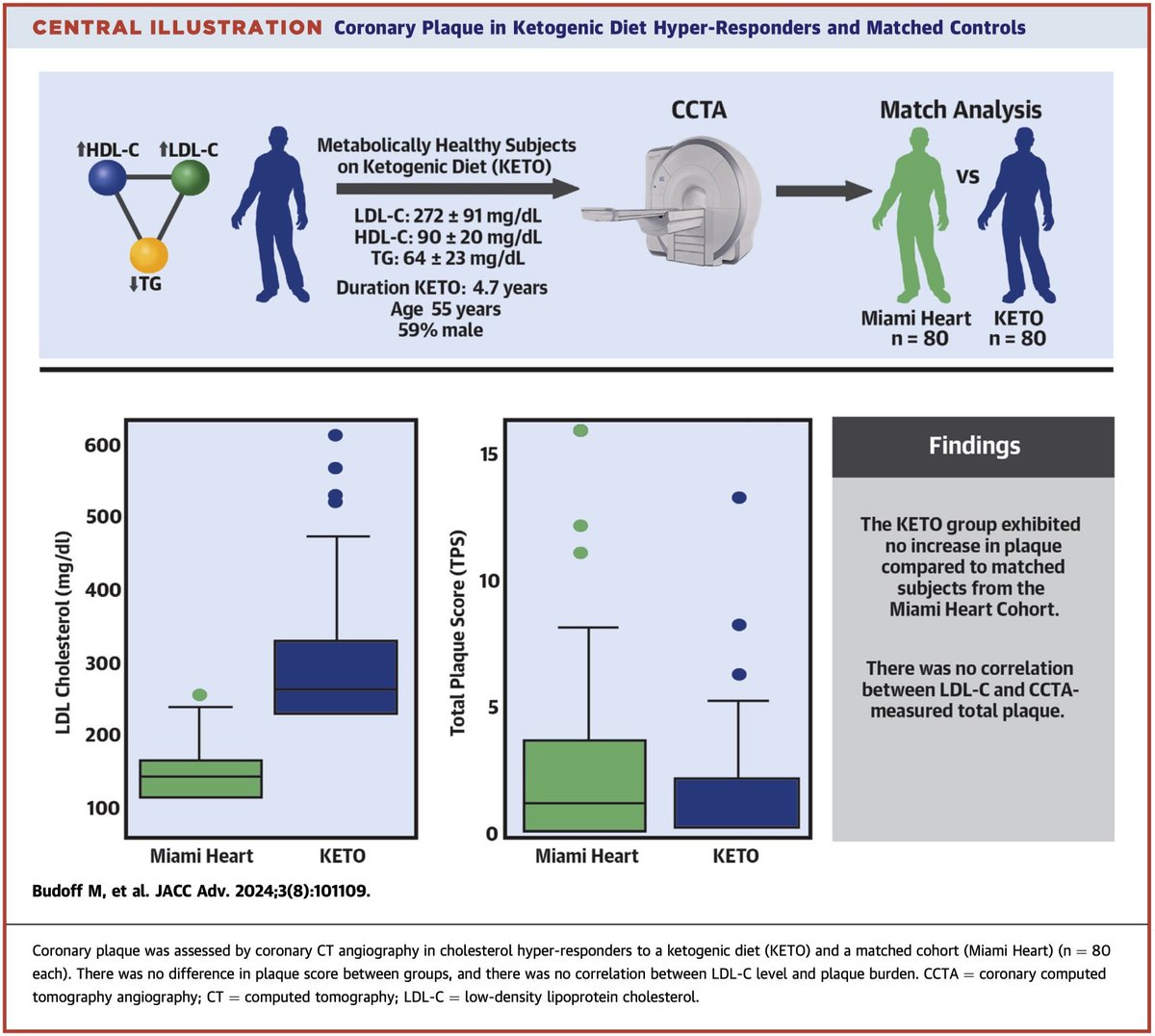

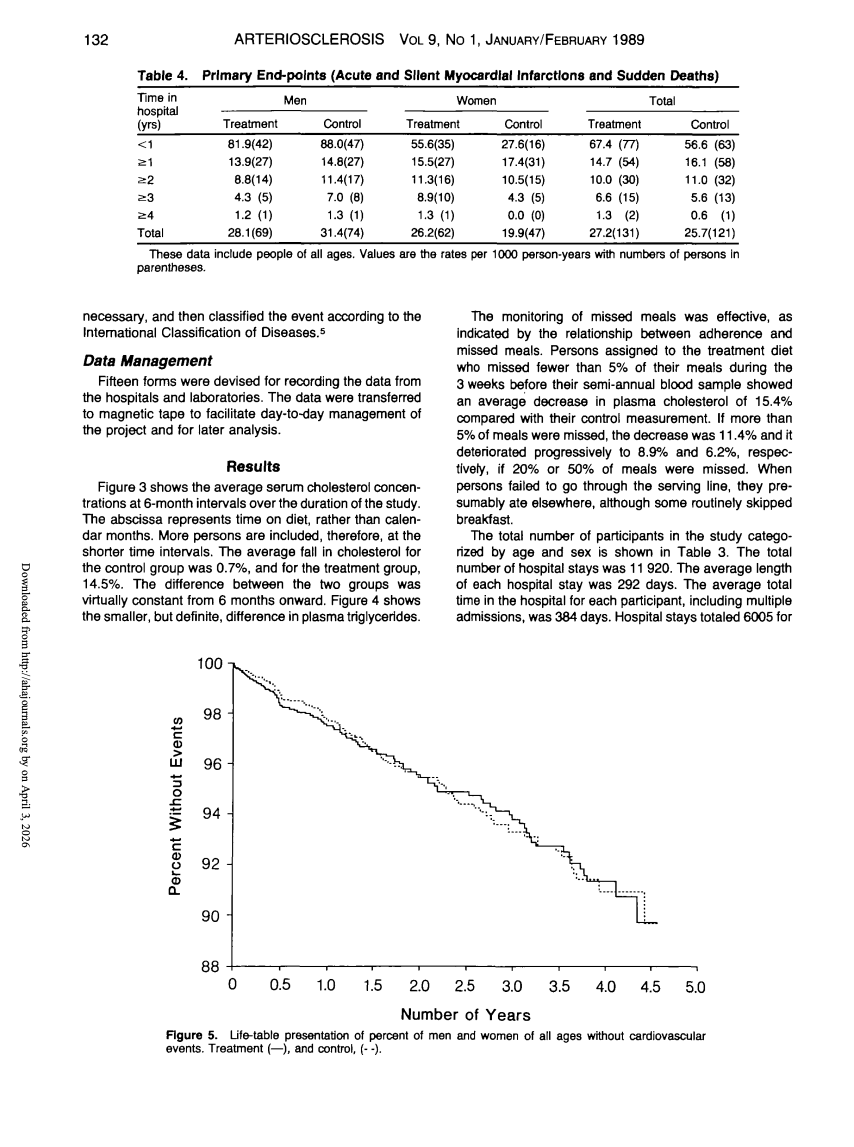

Your veins and arteries carry the same blood. Same LDL. Same ApoB. Same everything. Yet veins almost never get plaque. Arteries constantly do. Maybe you've seen the recent discussions about this. It's an interesting question that offers clues for cardiovascular science and could challenge how we think about LDL and ApoB. 🧵

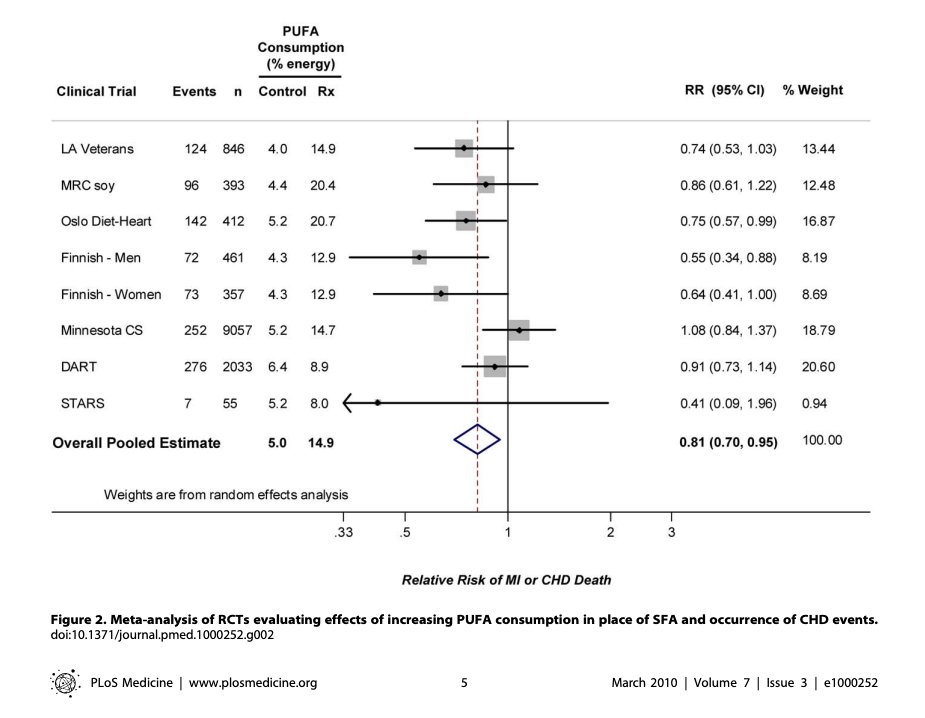

@trikomes 69 college students for 4 weeks measuring oxLDL. Show me the RCT where avoiding seed oils beat PUFA substitution for cardiovascular outcomes.

New Anthropic research: Emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude’s behavior, sometimes in surprising ways.

My read on "normal policymaker & corp. leader on AI": mostly now they don't need to be convinced it is very important (unlike a year ago). But they still see its capabilities as today + epsilon. So just briefly, here is what even "AI is normal tech" folks in the labs believe: 1/8

Just saw the AI doc and came away pissed at the optimists. I sort of expected them to have at least some argument that actually addressed the x-risk side, but they were basically like 'historically tech is good, people have been worried before but it was fine!' They didn't address at ALL the extremely entry-level concerns like 'building something smarter than us is a categorically new type of threat'. They just repeated that tech would help humanity.

It's especially infuriating because the most lifelong techno-optimists I know ARE the doomers. The x-risk community are the ones who grew up on epic sci-fi and have thought long and hard about what the singularity might bring. One of my friends (who was in the doc) once spent all night carrying ice into a hospital room to preserve the corpse of his friend in a desperate attempt to get him into a cryonics lab. It's real for them!

But "AI has promise" is not even close to an adequate response to the extinction threat on the table. Even the AI CEOs in the movie - the ones who are *actually* doing the most acceleration - seemed to at least understand the gravity of the arguments they were engaging with.

The optimists in the doc seemed to have domain expertise in their technical fields, but were amateurs on the question at hand. They are both insufficiently visionary and fail to engage with the actual risk in a practical way. I think they pattern-match the "AI might kill us" people onto the general woke anti-tech movement and shout against them from a place of ego. That's the only good explanation I can think of for why they keep beating an activist drum that's so damn empty.

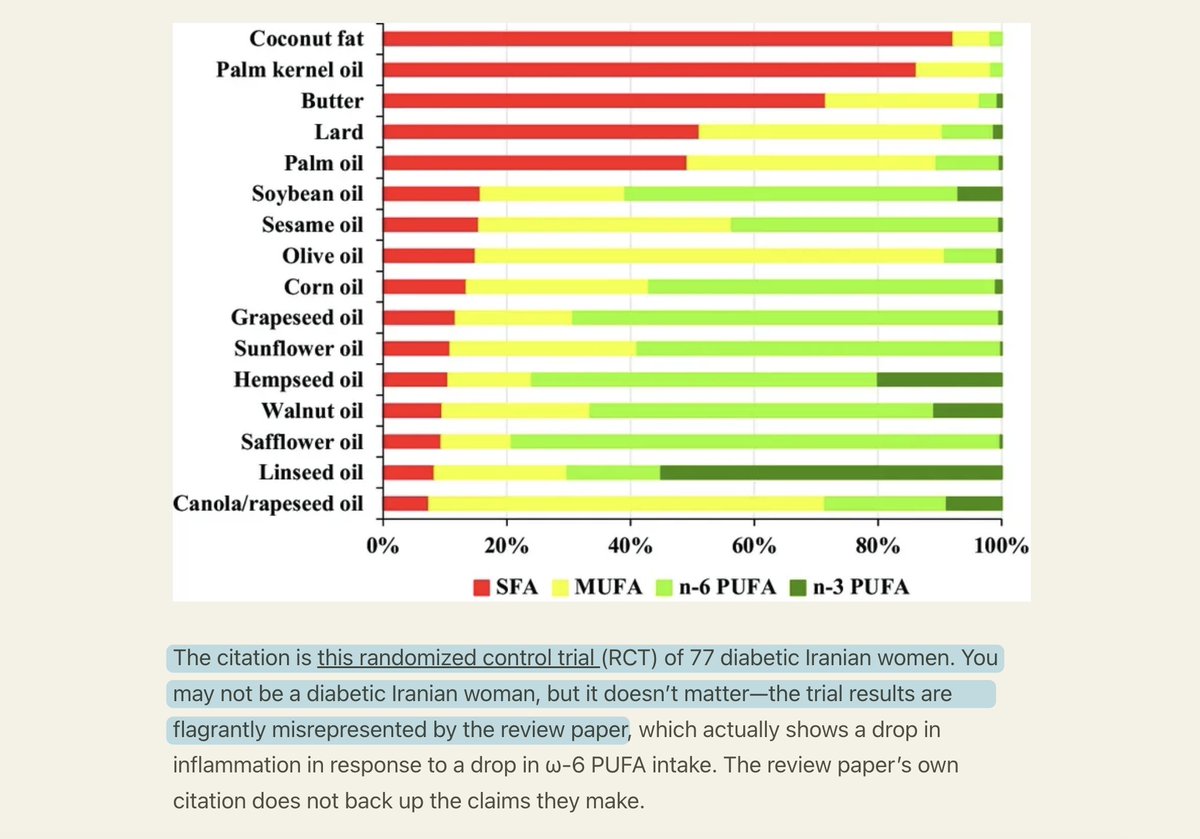

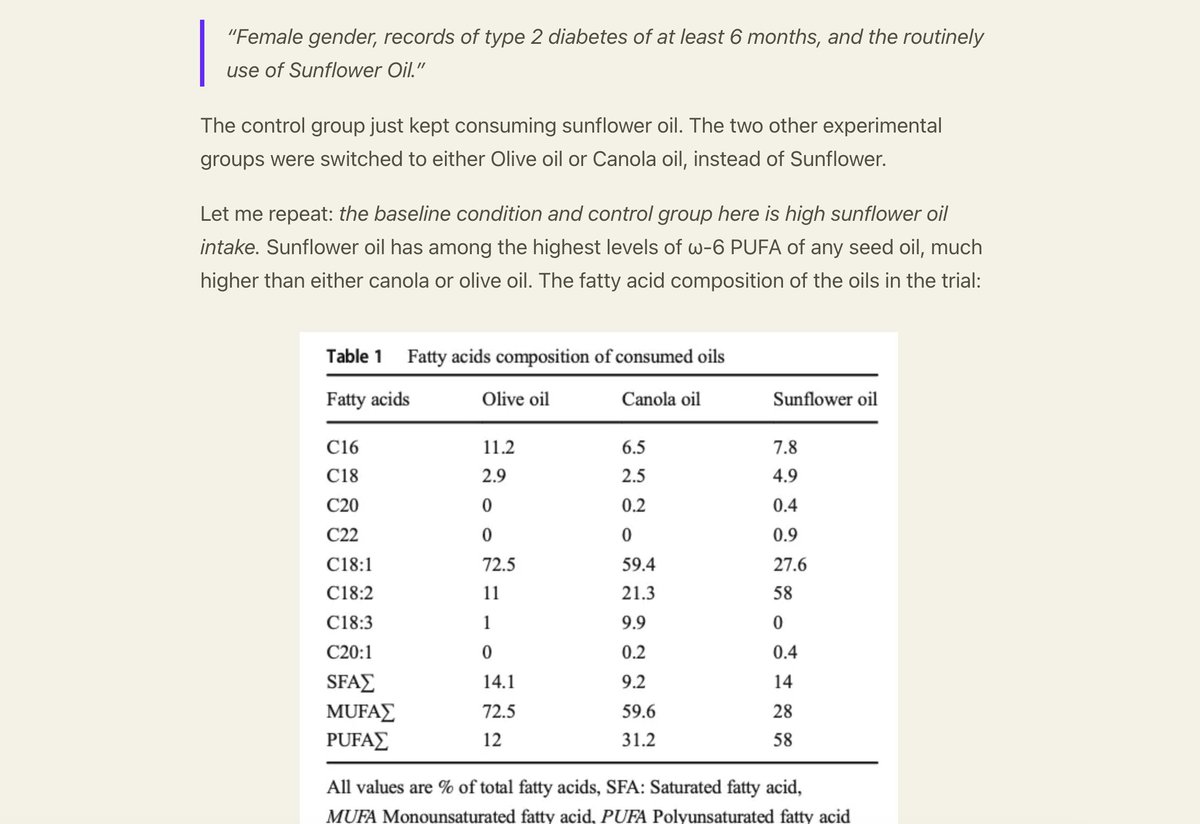

The anti-seed-oil argument is based exclusively on weak, circumstantial evidence. Looking through all available evidence, the case for harm receives virtually no support; in fact, seed oils appear beneficial!