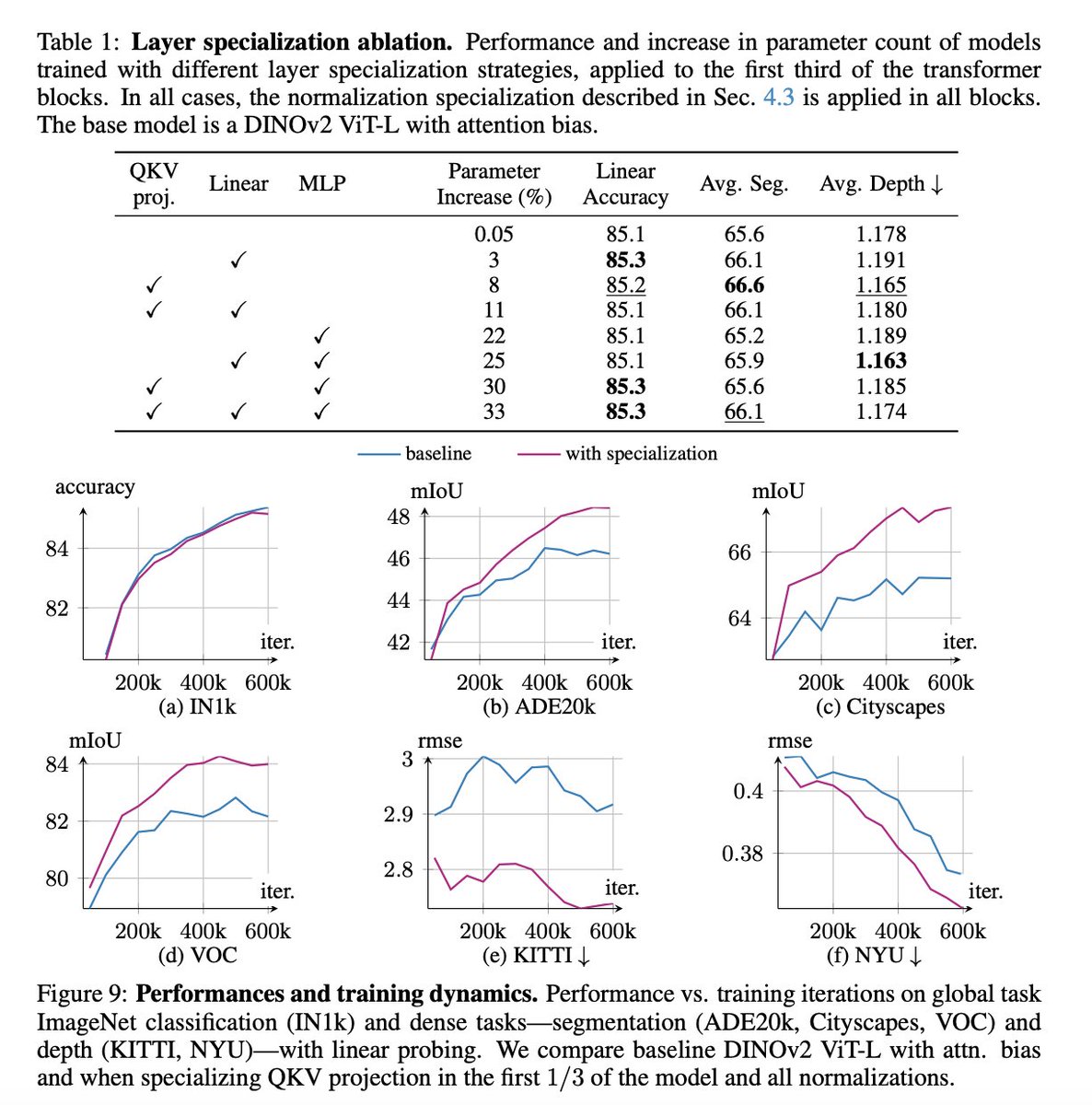

7/ Summary: Stop treating your tokens like they're identical. A little specialization goes a long way in making Vision Transformers more efficient and powerful.

Read the full paper here: arxiv.org/abs/2602.08626

#MachineLearning #ComputerVision #AI #ViT #ICLR2026
