How we prompt AI is very different in 2026 than it was in 2022, when ChatGPT came out.

I'm teaching a new course, AI Prompting for Everyone, to help you become an AI power user — whatever your current skill level.

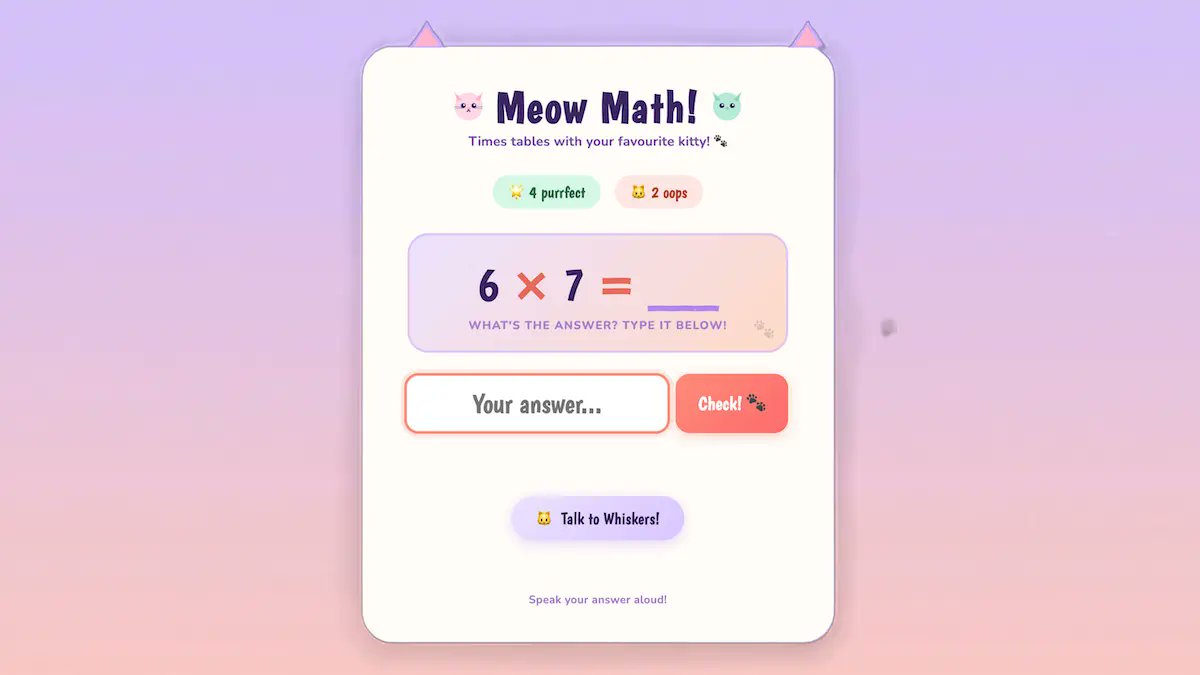

It covers skills that apply across ChatGPT, Gemini, Claude, and other AI tools. How to use deep research mode for well-researched reports on complex questions. How to give AI the right context, including more documents and images than most people realize you can provide. When to ask AI to think hard for several minutes on important decisions like what car to buy, what to study, or what job to take. And how to use AI to generate images, analyze data, and build simple games and websites.

I also cover intuitions about how these models work under the hood, so you know when to trust an answer and when not to.

Along the way, you'll see flying squirrels, a creativity test, some of my old family photos, and fireworks.

Join me at deeplearning.ai/courses/ai-pro…
