Pinned Tweet

Anton

62.5K posts

@AntonAlexander

Senior Generative AI Specialist at @awscloud | Helping startups & enterprises train large-scale models & optimize inference. Founders, DM to connect!

Washington, DC · Joined March 2009

1.7K Following · 43.9K Followers

Anton retweeted

BREAKING: NVIDIA just dropped an open 30B model that beats GPT-OSS and Qwen3-30B and runs 2.2–3.3× faster

Nemotron 3 Nano:

• Up to 1M-token context

• MoE: 31.6B total params, 3.6B active

• Best-in-class performance on SWE-Bench

• Open weights + training recipe + redistributable datasets

You can run the model locally with 24 GB of RAM.
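In an MoE model, only the active parameters (3.6B here) are used per token, which drives compute cost. All 31.6B parameters still have to be resident in memory. The arithmetic below is a rough sketch of weight storage at a few precisions. It covers weights only and ignores the KV cache, activations, and runtime overhead. The tweet does not say which quantization the 24 GB figure assumes, so the 16/8/4-bit choices are illustrative assumptions.

```python
# Rough weight-memory estimate for a mixture-of-experts (MoE) model.
# Parameter counts come from the tweet; the precisions are illustrative assumptions.

TOTAL_PARAMS = 31.6e9   # all experts plus shared layers (must be loaded)
ACTIVE_PARAMS = 3.6e9   # parameters actually used for each token


def weight_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def active_fraction(total: float, active: float) -> float:
    """Share of parameters that participate in a single token's forward pass."""
    return active / total


if __name__ == "__main__":
    print(f"active fraction: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.1%}")
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_gb(TOTAL_PARAMS, bits):.1f} GB")
```

Under these assumptions, 16-bit weights need about 63.2 GB and 8-bit about 31.6 GB. Only at roughly 4 bits per parameter (about 15.8 GB) do the weights fit under 24 GB with headroom left for the KV cache.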

🚀 New blog: Building custom LLMs for public sector on AWS

Learn how governments can develop national & domain-specific language models that meet sovereignty, compliance & cultural requirements.

Full 6-stage development guide 👇

aws.amazon.com/blogs/publicse…

#AWS #AI #PublicSector #LLM #MachineLearning

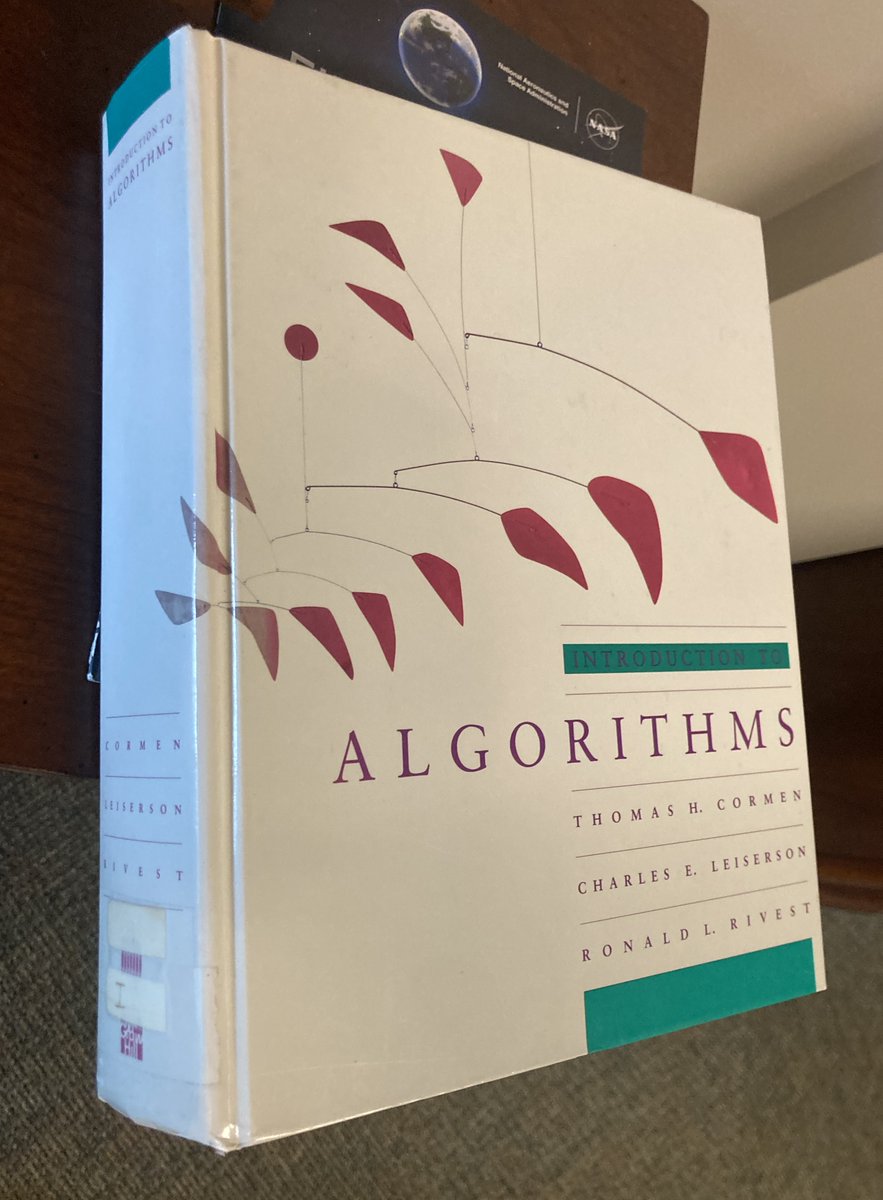

"Introduction to Algorithms" by Thomas H. Cormen, Charles E. Leiserson, and Ronald L. Rivest. One of the books at NIA inherited from ICASE, NASA Langley (1972–2002). I used this book around 2008, when I needed to speed up an agglomeration algorithm for FUN3D; I ended up implementing heapsort.
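For reference, below is a minimal, generic sketch of heapsort as it is usually presented in algorithms textbooks. It is not the FUN3D agglomeration code, which the post does not show. The function name `heapsort` is chosen for illustration. The function builds a binary max-heap in place, then repeatedly swaps the maximum to the end of the array. It runs in O(n log n) time in the worst case and needs O(1) extra space.

```python
def heapsort(a: list) -> list:
    """Sort list `a` in place in ascending order using a binary max-heap; returns `a`."""
    n = len(a)

    def sift_down(root: int, end: int) -> None:
        # Push a[root] down until the subtree rooted at `root` (within a[:end]) is a max-heap.
        while True:
            child = 2 * root + 1          # left child index
            if child >= end:
                return
            if child + 1 < end and a[child + 1] > a[child]:
                child += 1                # pick the larger child
            if a[root] >= a[child]:
                return
            a[root], a[child] = a[child], a[root]
            root = child

    # Build the max-heap bottom-up, starting from the last internal node.
    for start in range(n // 2 - 1, -1, -1):
        sift_down(start, n)

    # Move the current maximum to the end, then restore the heap on the shrinking prefix.
    for end in range(n - 1, 0, -1):
        a[0], a[end] = a[end], a[0]
        sift_down(0, end)
    return a


if __name__ == "__main__":
    print(heapsort([5, 2, 9, 1, 5, 6]))  # [1, 2, 5, 5, 6, 9]
```

Unlike quicksort, heapsort's O(n log n) bound holds in the worst case. That predictability makes it a common choice when a sort sits inside a performance-critical loop.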

@AntonAlexander @awscloud A kernel crash leaves the node unreachable over SSM, so I can't run sudo reboot…

Anton retweeted

Learn how @nvidia Dynamo can be quickly set up & seamlessly deployed using Amazon EKS for automated scaling & simplified Kubernetes management ⚡💡🔧

NVIDIA Dynamo supports #AWS services such as Amazon S3, Amazon EFA & Amazon EKS.

👉 go.aws/3IK98YH

@yangzhouy @awscloud Go into the HyperPod console, scale the node down, and then scale it back up. Send me your email and I can show you.

Grateful to be featured on the AWS for AI Podcast (Ep. 8)! 🎙️ I shared insights on training foundation models at scale + my journey from 🇹🇹 to AWS.

I'd love it if you could watch, like & comment to show your support! 🙌 youtu.be/i95xUdpy0qQ?si…

Anton retweeted

Today, we are releasing 4 hybrid reasoning models (70B, 109B MoE, 405B, and 671B MoE) under an open license.

These are some of the strongest LLMs in the world, and they serve as a proof of concept for a novel AI paradigm: iterative self-improvement (AI systems improving themselves).

The largest model, the 671B MoE, is among the strongest open models in the world. It matches or exceeds the performance of both the latest DeepSeek V3 and DeepSeek R1 models, and approaches closed frontier models such as o3 and Claude 4 Opus.
