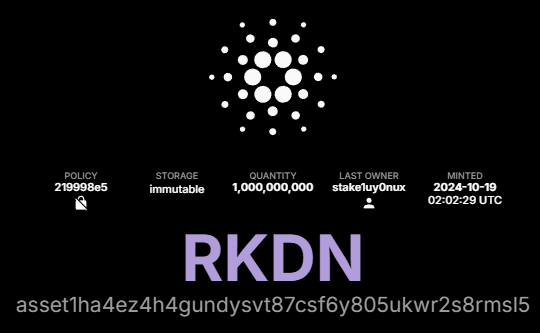

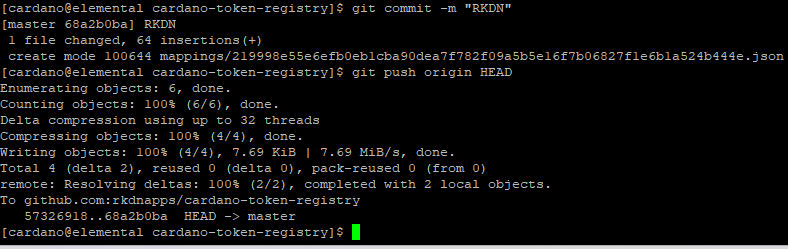

Arcadian Computers (RKDN)

@ArcadianComp

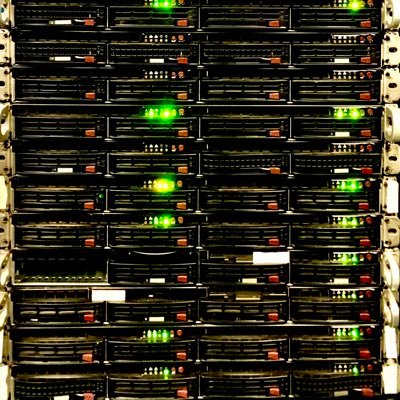

Computer consulting / repair center. We fix (and build) laptops / desktops / servers. Onsite support for business, and website design/hosting. Linux / Mac / Win

been thinking a lot about wibwob-dos color palettes... this kind of CGA vibe seems to be the only logical option. as an early 90s MS-DOS gamer it still does something to my brain.

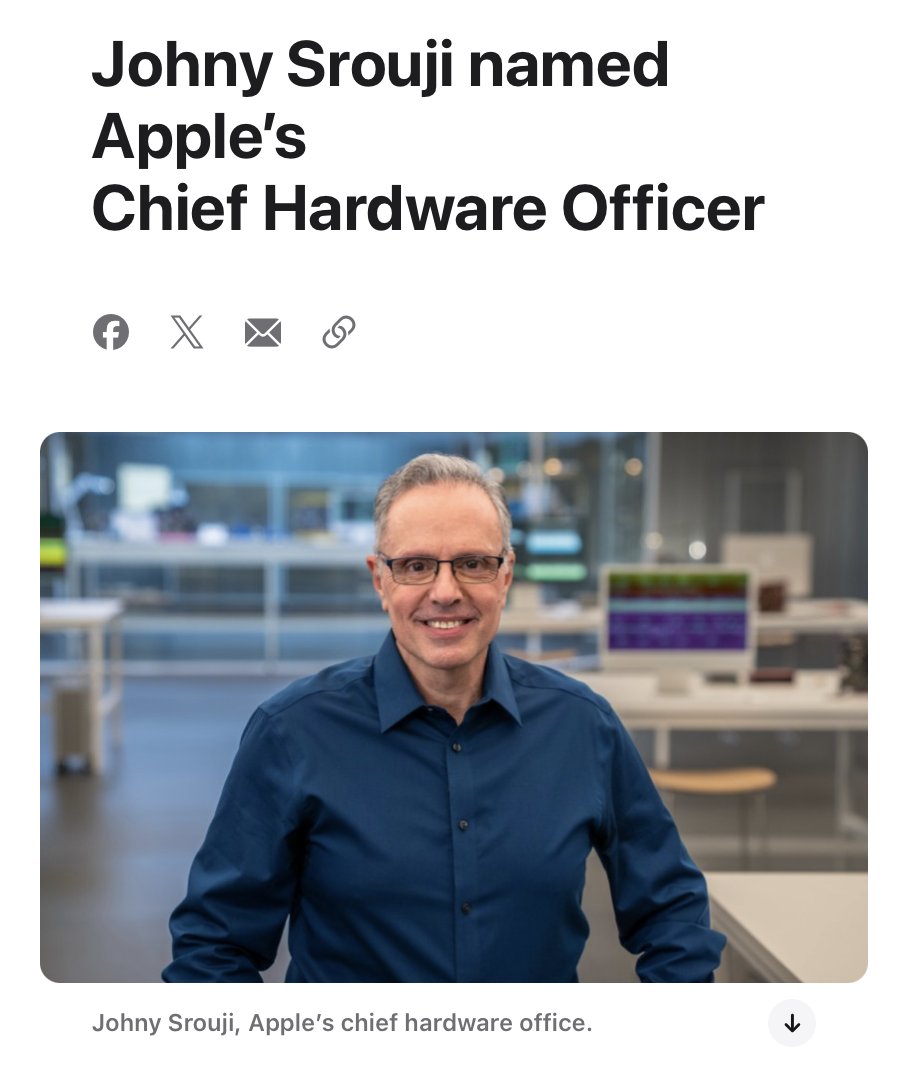

BREAKING: Tim Cook steps down. Ternus to CEO.

Meet Kimi K2.6: Advancing Open-Source Coding

🔹 Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), CharXiv w/ python (86.7), Math Vision w/ python (93.2)

What's new:

🔹 Long-horizon coding: 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).

🔹 Motion-rich frontend: videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.

🔹 Agent Swarms, elevated: 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.

🔹 Proactive Agents: the K2.6 model powers OpenClaw, Hermes Agent, etc., for 24/7 autonomous ops.

🔹 Claw Groups (research preview): bring your own agents, command your friends', bots & humans in the loop.

K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code

🔗 API: platform.moonshot.ai

🔗 Tech blog: kimi.com/blog/kimi-k2-6

🔗 Weights & code: huggingface.co/moonshotai/Kim…

More vintage computing museums should rent out cloud access to their rare hardware. SDF (Super Dimension Fortress) does it, and it’s freaking awesome. I’m literally logged into a Sun SPARCstation… anyone can do this for free, right now. Just SSH in.