Pinned Tweet

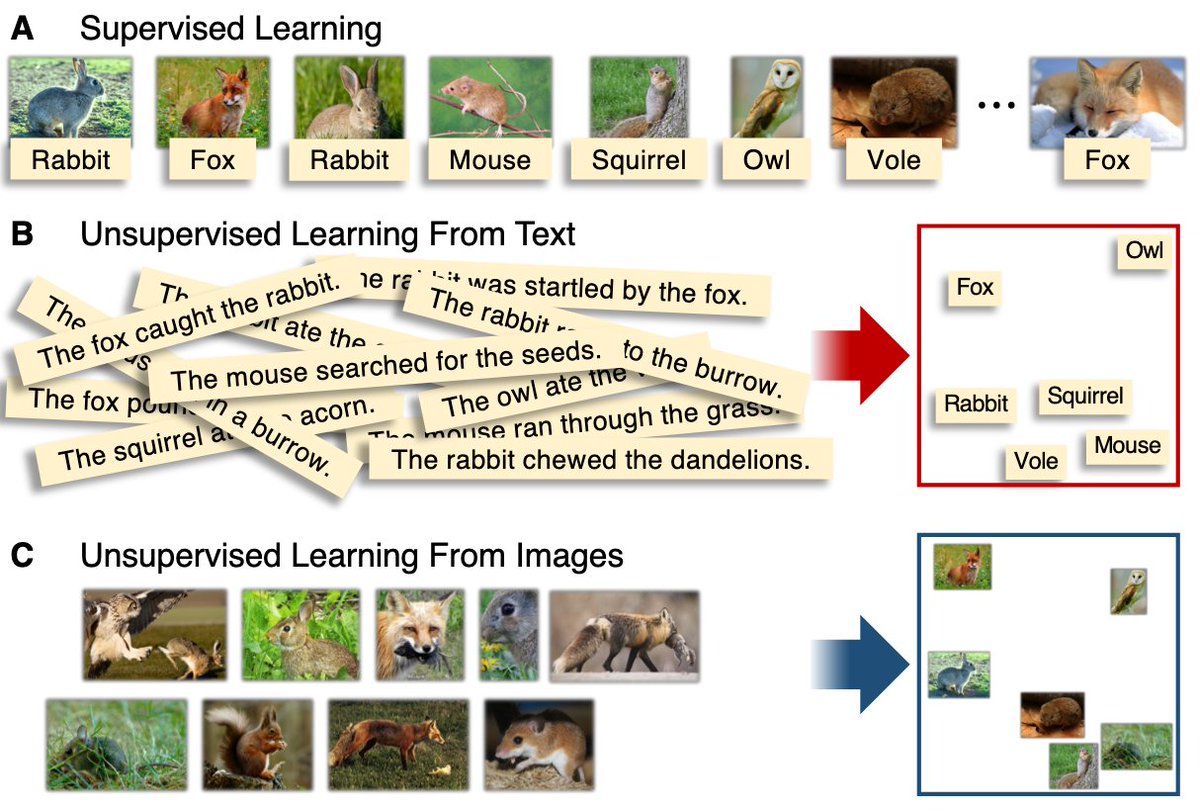

New paper w @ProfData, "Learning as the Unsupervised Alignment of Conceptual Systems" is now publicly available as a view-only version via the following SharedIt link: rdcu.be/b0w0k.

Brett David Roads

13 posts

@BDRoads

he/him, Terran, Postdoctoral Research Associate

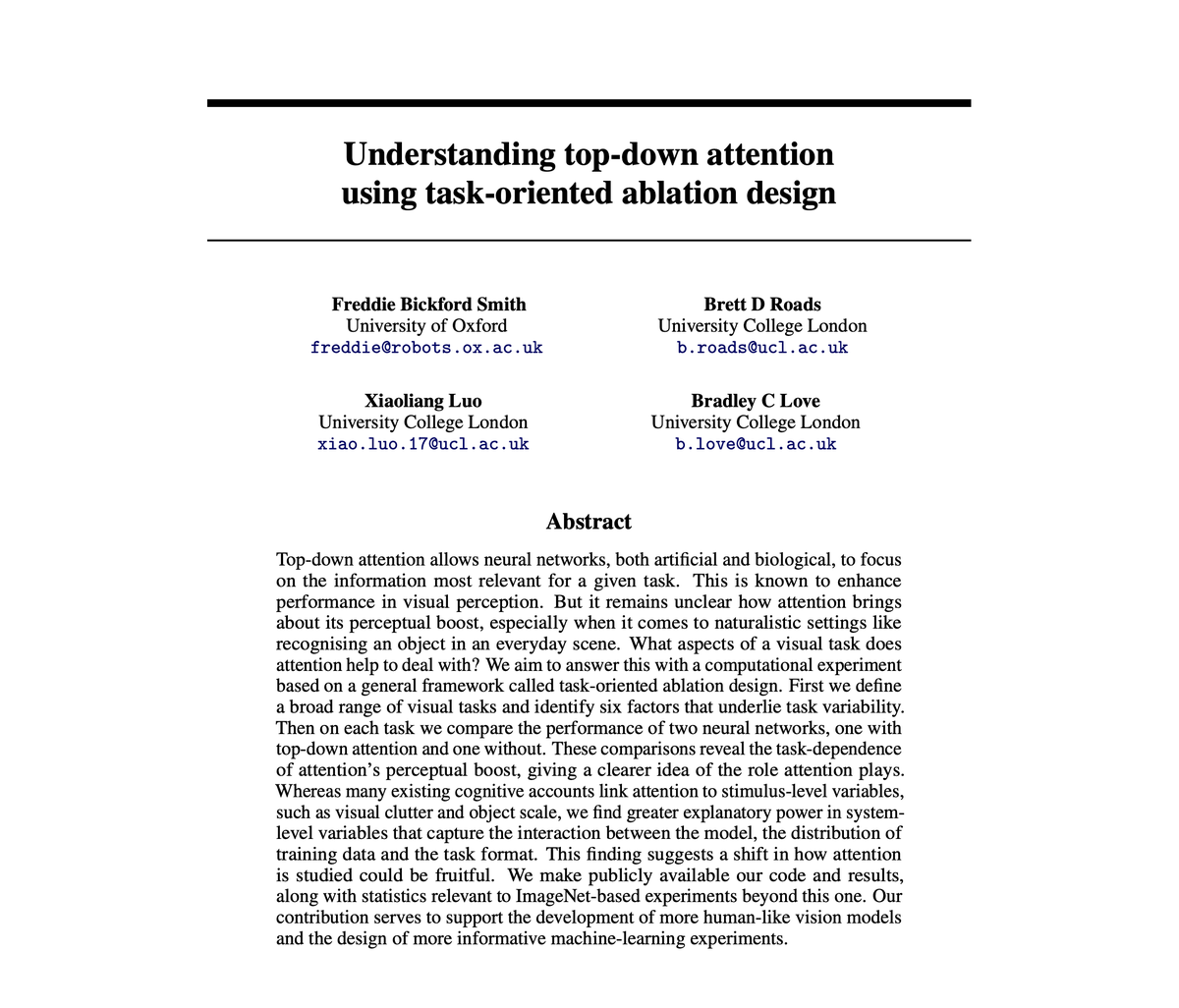

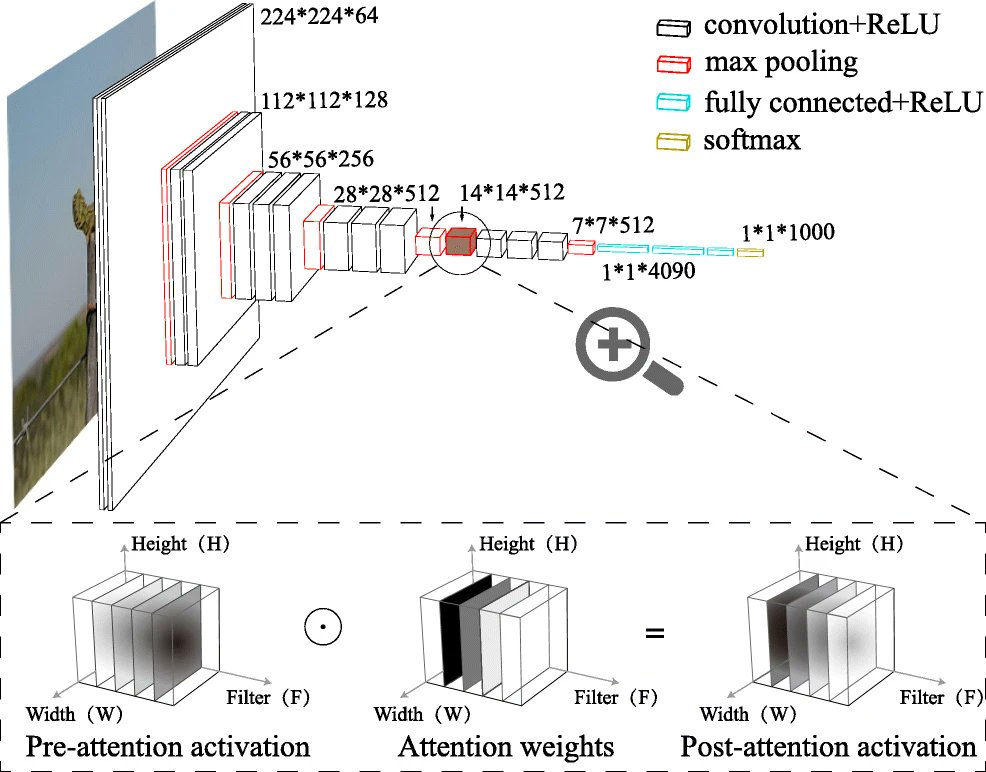

People use top-down attention, such as when searching for one's keys. One idea is that prefrontal cortex helps reconfigure our visual system to be more sensitive to features relevant to our goals. This has costs and benefits. 2/n

updated link: youtube.com/watch?v=R4L7aL…