Ben Zweibelson

@BZweibelson

Author, Director for Strategic Innovation at US Space Command.

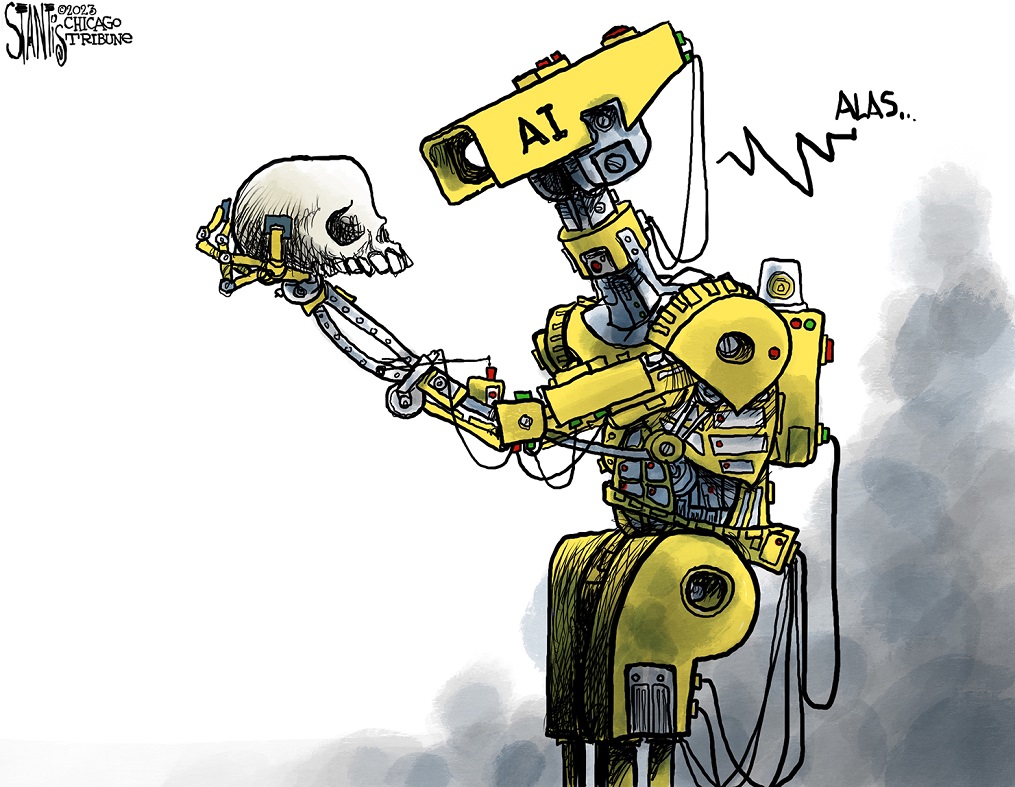

Why I’m asking you to invest 90–120 focused minutes in a 50-page article on Strong AI and the future of war...

Most of the conversation right now is exactly where the Department of War’s new AI Acceleration Strategy (Jan 2026) wants it: how do we move faster with today’s narrow AI? Swarms. Agents. Better targeting. Tighter OODA loops. Beat China in the race for incremental advantage.

That race matters. But it’s the wrong race if Strong AI (AGI) arrives in the next 5–10 years. Or we might win it, only to lose in ways that seem paradoxical yet will be precisely how the AGI genie escapes the lamp.

In this new piece for Marine Corps University Press, I argue that AGI is not “narrow AI at higher speed and scale.” It is a phase change: the strategic equivalent of moving from atomic to thermonuclear weapons, except the explosion happens in epistemology and societal cohesion, not just physics. I call the new form phantasmal war: conflict where armies may never form because shared reality itself fractures first. I call the new philosophy techno-eschatology.

You’ll meet the three tribes already fighting over this future (the Boomers who say “it’s just better tools,” the Doomers who see extinction, and the Groomers who see utopia). You’ll get a crisp distinction between today’s useful narrow AI and tomorrow’s incomprehensible, self-improving systems. And then we finish with a Fermi-paradox-style question that quietly reframes everything we think we know about deterrence, victory, and the profession of arms.

Yes, it’s 50 pages. Yes, it will take real concentration. But the first 8–10 pages are written so that if you don’t feel the hook, you can walk away guilt-free. Most people who finish tell me it permanently shifts how they see the next decade, at least in the week it has been online. :)

Free, open-access link below. No paywall, no gatekeeping. If you read it, I’d genuinely value your honest take in the comments, especially if you disagree.

The only bad outcome is if we keep sprinting down the narrow-AI track while the ground under the entire board disappears.

usmcu.edu/outreach/Marin…

What do you think: worth the time, or am I over-hyping the shift?

