✨🔴_🔴✨ Ben Jones

@ben_chain

(not a pseudonym) weird ETH yankovic cofounder, eternal optimist @optimism 🔴✨

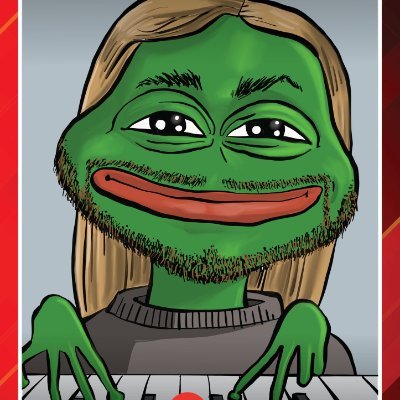

LISTEN UP EVERYBODY! Today we're launching OP Enterprise.

We all know that crypto is on the cusp of major mainstream adoption. Nearly every major enterprise has a crypto strategy. We're the only team that has successfully launched chains for multiple companies. We've packaged up all of our learnings into a product offering that will onboard the next wave of enterprises.

OP Enterprise is production-grade blockchain infrastructure for companies that want to build businesses, not become blockchain experts.

𝗬𝗼𝘂𝗿 𝗯𝗹𝗼𝗰𝗸𝗰𝗵𝗮𝗶𝗻. 𝗬𝗼𝘂𝗿 𝗿𝗲𝘃𝗲𝗻𝘂𝗲. 𝗘𝗻𝘁𝗲𝗿𝗽𝗿𝗶𝘀𝗲 𝗴𝘂𝗮𝗿𝗮𝗻𝘁𝗲𝗲𝘀.

𝗧𝗵𝗲 𝗽𝗿𝗼𝗯𝗹𝗲𝗺

Here's what we keep hearing from enterprises: they're building on infrastructure whose incentives don't align with their own. They're competing for mindshare, fighting to keep users, and working against platform economics that extract value from everything they build.

Most blockchain platforms don't care if you're successful. Their focus is on their own TVL and metrics, not yours. You launch your stablecoin into an environment where it competes with everyone else's stablecoin, and you hemorrhage capital onboarding your users onto a blockchain you have zero control over.

And even if you decide to own your chain, you hit the real bottleneck: onboarding the ecosystem partners you need to go live. Stablecoins, oracles, bridges, wallets, indexers. Each negotiation takes months and costs hundreds of thousands to millions of dollars. Vendors pick off blockchain teams one by one.

We've seen this movie before. Many times.

𝗢𝗣 𝗘𝗻𝘁𝗲𝗿𝗽𝗿𝗶𝘀𝗲 𝗳𝗶𝘅𝗲𝘀 𝗯𝗼𝘁𝗵

𝗥𝗲𝘃𝗲𝗻𝘂𝗲 𝗰𝗼𝗻𝘁𝗿𝗼𝗹: When you own your chain, your infrastructure becomes a revenue-generating asset, not a cost center. DeFi protocols deploy on your rails. The economic activity you enable accrues to you. This isn't about saving on fees. It's about owning the infrastructure layer where financial value is created.

𝗩𝗲𝗻𝗱𝗼𝗿 𝗺𝗮𝗻𝗮𝗴𝗲𝗺𝗲𝗻𝘁 𝗮𝘁 𝘀𝗰𝗮𝗹𝗲: We've onboarded tier-one partners across 50+ production chains. They're already integrated, contracted, and ready to deploy. We negotiate standard terms, drive costs down, and fast-track partnerships that would otherwise delay your launch by 6-12 months. We've done this work already. You don't have to.

𝗪𝗵𝗮𝘁 𝘆𝗼𝘂 𝗴𝗲𝘁

𝗙𝘂𝗹𝗹𝘆 𝗠𝗮𝗻𝗮𝗴𝗲𝗱: We run your chain end-to-end: 24/7 monitoring, incident response, security ops, and upgrade orchestration. You focus on product.

𝗦𝗲𝗹𝗳 𝗠𝗮𝗻𝗮𝗴𝗲𝗱: You operate, we support: architecture guidance, security assessments, priority patches, and direct access to core engineers.

𝗢𝗣 𝗠𝗮𝗶𝗻𝗻𝗲𝘁: Start on our flagship public network with enterprise support. Graduate to your own chain when ready. Same codebase, seamless migration.

First conversation to production: 8-12 weeks.

𝗧𝗵𝗲 𝘀𝗽𝗲𝗰𝘀

99.99% uptime SLO
15-minute P1 incident response
Up to 5B RPC requests/month with multi-provider redundancy
10 Mgas/sec baseline, 100+ Mgas/sec for high-volume applications
Sub-200ms block times
20k requests-per-second burst capacity
Stage 1 security with permissionless fault proofs
Optional ZK fault proofs for faster finality

𝗪𝗵𝘆 𝗻𝗼𝘄

The window for enterprise blockchain has shifted from "if" to "how fast." MiCA is live in Europe. US policy is stabilizing. The enterprises that spent 2023-2024 in exploratory mode are now greenlighting production builds.

Enterprise deals are now a competitive space. When we talk to enterprises, we see everyone trying to help them come onchain. But the OP Stack is the only stack that has successfully brought and scaled multiple enterprises onchain. We've seen what works and what doesn't. We've earned this knowledge by building alongside the fastest-growing enterprises in web3. We've encountered every failure mode because we've been doing this longer than anyone else.

At the end of the day, enterprises want to control their own economics. They don't want to rent infrastructure from platforms that compete with them. The OP Stack vision will win: shared standards balanced with chain autonomy.

𝗪𝗵𝗮𝘁 𝗺𝗮𝗸𝗲𝘀 𝘂𝘀 𝗱𝗶𝗳𝗳𝗲𝗿𝗲𝗻𝘁

𝗘𝗻𝘁𝗲𝗿𝗽𝗿𝗶𝘀𝗲 𝘁𝗿𝗮𝗰𝗸 𝗿𝗲𝗰𝗼𝗿𝗱: 50+ chains launched. Not pilots. Production systems serving millions.

𝗜𝗻𝗻𝗼𝘃𝗮𝘁𝗶𝗼𝗻 𝘃𝗲𝗹𝗼𝗰𝗶𝘁𝘆: We control the entire stack. When we discover vulnerabilities, customers get patches within hours, not weeks.

𝗗𝗶𝗿𝗲𝗰𝘁 𝗮𝗰𝗰𝗲𝘀𝘀 𝘁𝗼 𝘁𝗵𝗲 𝘀𝗼𝘂𝗿𝗰𝗲: Questions go to the engineers who wrote the code. Feature requests go to the people who can actually implement them. No translation layer.

𝗘𝘁𝗵𝗲𝗿𝗲𝘂𝗺 𝗰𝗿𝗲𝗱𝗶𝗯𝗶𝗹𝗶𝘁𝘆: We've worked on Ethereum's core protocol, defined its scaling roadmap, and invented the L2 architecture that powers the industry.

𝗡𝗼 𝘃𝗲𝗻𝗱𝗼𝗿 𝗹𝗼𝗰𝗸-𝗶𝗻: Open source. Fork if you want. Most teams discover they'd rather work with us, but the choice is always yours.

Our first customers:

Unichain: Uniswap needed their own chain. They chose us. Uniswap Labs operates Unichain with Mission-Critical Support: priority response for high-stakes moments where downtime isn't an option.

Celo: Scaling mobile payments across Latin America and Africa. Millions of users. Celo operates its network with Mission-Critical Support, ensuring enterprise-grade backing in emerging markets.

Different use cases. Same infrastructure. Same commitment to their success.

We are here today because partners like these rolled up their sleeves and built together with us.

𝗪𝗵𝗼 𝘁𝗵𝗶𝘀 𝗶𝘀 𝗳𝗼𝗿

Fintechs building next-generation financial services
Centralized exchanges launching tokenized products
Payments companies building cross-border rails
Financial institutions exploring tokenization and digital assets

If you need infrastructure that performs without the operational burden, and you want to own your economics instead of renting them, OP Enterprise is for you.

OP Enterprise is a major focus for us in 2026. We have active engagements across fintech, exchanges, payments, and financial services. The direction is clear: the OP Stack is becoming the standard for the next generation of financial systems.

This is the first of many announcements to come. If you're serious about building onchain, we should talk.

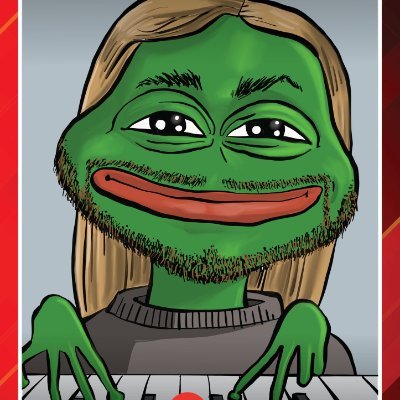

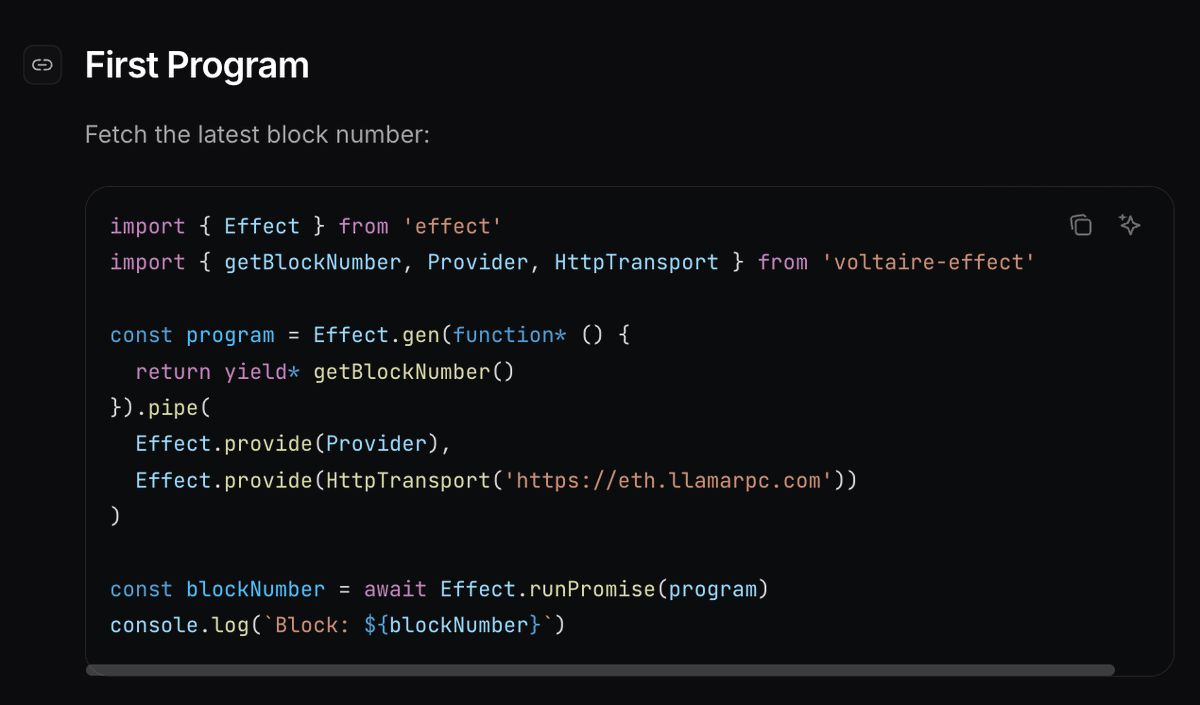

Our 2026 Roadmap

Last year, we made transactions 10x faster and 10x cheaper. But that's not enough. This year, we also want to make the OP Stack hands down the best toolset for scaling to your first million dollars in onchain revenue.

To us, scaling means identifying and removing bottlenecks. And the bottleneck isn't always throughput. Sometimes the bottleneck is liquidity. Sometimes it's security, UX, or integrations. We build tools to solve every flavor of bottleneck.

That's why OP Stack chains earn up to 3x more in profit (2x more revenue) per dollar onchain than the most successful chains on other L2 stacks. That's why we process 14% of all transactions in crypto. And we do all this while maintaining the lowest fees.

Our goal is to build infrastructure that enables companies to expand their product lines faster and build superior user experiences. So in 2026, the majority of our product development will focus on three pillars:

Performance

The most at-scale chains use the OP Stack. @base is the fastest, highest-throughput L2 on the market. They will continue to push the limits of scaling in 2026, and the rest of the Superchain will benefit too. We'll be allowing more compute supply at lower infrastructure cost while handling more compute demand with the same supply, through features like BALs to scale node operation, lightweight derivation, and fee market modularization. op-reth will also be available to any chain that wants it.

Customizability

The OP Stack is widely regarded as the most modular and customizable chain stack on the market. Notable examples include @world_chain_'s Proof of Humanity, @Celo's native fee abstraction, and @unichain's provable blockbuilding. In 2026, we'll add more customization levers like multiple gas tokens, priority lanes, and dynamic opcode repricing for improved execution. And at last: ZK.

Revenue

Your money already works harder for you on the OP Stack. We want to push that even further with native token actions such as buy & burn for any chain, MEV auctions (in partnership with Flashbots), sequencer revenue modules that enable different transaction ordering schemes, and features that let more liquidity move securely and quickly between chains to help reduce LTV ratios in DeFi apps on the Superchain.

We're excited to see our partners succeed in 2026 and to deliver the roadmap that enables that. If you'd like to talk to us, come find us at our Scaling Summit at ETHDenver:

Since I extended my own research using AI, I've been thinking about how it's going to reshape research and universities. We can now build new institutions where research is continuously updated, automatically verified, and carried out at immensely greater scale.

Picture a research institute where senior scholars direct dozens or even hundreds of AI agents on coordinated programs: small teams provide questions and judgment while agents handle collection, analysis, and verification.

What would it take to build? The requirements are almost comically simple: (1) compute funding for researchers, and (2) a commitment to hire ambitious people and get out of their way.

This new institute can unlock a totally new way to do research:

--Living research that automatically updates any time new data arrives, so our knowledge stays up to date
--Automatically verified research that we know replicates from the moment it's posted publicly
--Hyperscaled descriptive work that ingests enormous bodies of political data, like the entire history of changes to the US tax code or every bill introduced in every state legislature
--Prototypes for new governance tools that are built for communities and then tested alongside them

I think we're stepping into a crazy new era of how social science is done. I offer more thoughts on what's changing and how we might design an AI-first university of the future in my post, linked below.

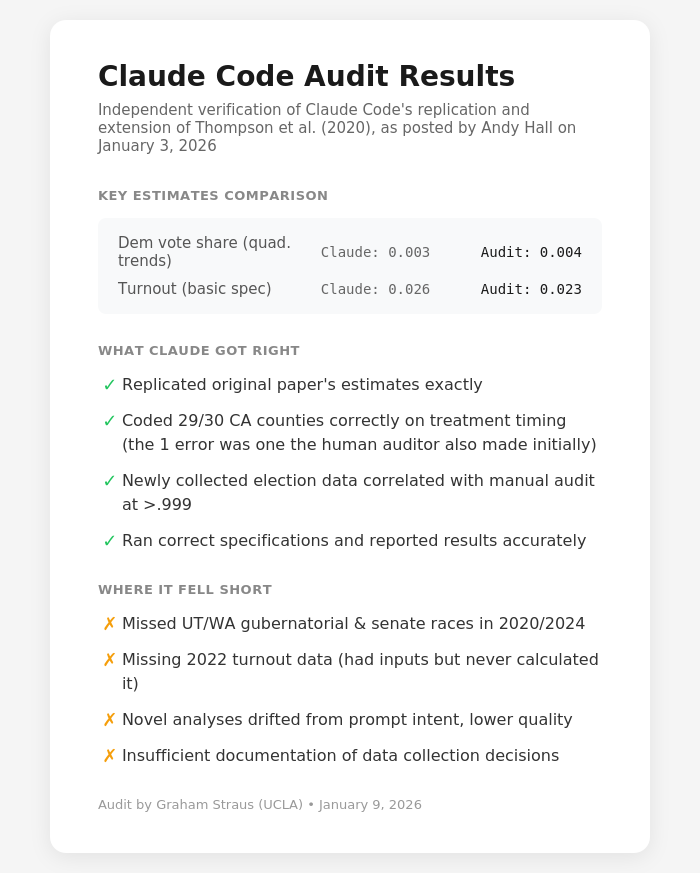

what if main loops are the real mistake

Here's proof that Claude Code can write an entire empirical polisci paper.

To validate my claim that AI agents are coming for polisci "like a freight train", today I had Claude Code fully replicate and extend an old paper of mine estimating the effect of universal vote-by-mail on turnout and election outcomes, essentially in one shot.

After careful prompting, Claude Code:
(1) Downloaded the old paper's repo and replicated the past results, translating our old Stata code into Python
(2) Crawled the web to get updated official election data and census data
(3) Ran new analyses extending the results through 2024
(4) Created new tables and figures
(5) Performed a lit review
(6) Wrote a wholly new paper
(7) Pushed the whole thing to a new GitHub repo

The whole thing took about an hour. This is an insane paradigm shift in how empirical work is done. It also validates the point that several people, including @BrendanNyhan, made yesterday: it's going to be especially easy to scale observational research with AI.

Thanks to @alexolegimas, @arthur_spirling, and many others who gave me feedback.