Universal Abundance

1.5K posts

@Beyond_Scarcity

Engineer. Lover of science. Ad Astra 💫

Joined January 2026

725 Following · 1.2K Followers

Pinned Tweet

Canada Coast 2 Coast Update End Day 1:

We made it from Vancouver, BC to Medicine Hat, Alberta which was 814 miles (1,310km)

Trying to shoot for 1,000 mile day Monday

Follow @DevinOlsenn & @scotsrule08 for most up to date quickest updates

& of course our wonderful new live tracker thanks to @wholemars FSD Database

English

@IterIntellectus I worry for the safety of the athletes. It needs to be carefully introduced. Not, do whatever makes you the most athletic now, without regard to their quality of life after competition. I want humanity to advance. Safely.

English

@eliebakouch We will open source the 0.5T model towards the end of this year. It should still be quite useful.

English

Grok foundation model V9-Medium (1.5T) has finished training. Evals look good. A lot of Cursor data was added in supplementary training and there is more to come.

Fine-tuning is underway and reinforcement learning begins in a few days. 2 to 3 weeks to public release.

This will be a major improvement over the 0.5T v8-small that currently serves all Grok production traffic, especially for difficult coding tasks.

English

Taxes screw over both the companies and the states they’re in.

Taxes didn’t build those big companies. They show up after the success. They sure as hell didn’t create Silicon Valley.

The sky-high taxes came later, once it was already killing it.

California basically turned its successful companies into hosts and started parasitically draining them for more and more cash, jacking up the rates year after year.

They forget the first rule of parasitism: don’t kill the host.

Companies only have so much tolerance before they say screw it and bail for another state with better deals and resources.

And that breaking point is a lot lower these days.

English

@elonmusk I would like to see Grok integrate the power of X with Grokipedia. When someone uses the Grok button on a post, the data should be used to improve Grokipedia. Just an idea.

English

The team is gathering speed

X Freeze @XFreeze

Grok Build just got another update: v0.1.220-alpha.1

xAI is now shipping multiple updates in a single day, with nonstop fixes, improvements, optimizations, and new features landing continuously.

The development velocity on Grok Build right now is absolutely insane

English

@MrBeast @Muah_Mindless How do you not like this guy? I legitimately don't understand it.

English

@Muah_Mindless I SAW THIS IN THE STANDS WHEN I WAS FILMING LOL

Okay I’ll give you $10k, check dm

English

@TheCinesthetic My favorite movie. Truly a masterpiece. Essentially 15 minutes of no dialogue to begin the movie. You are completely locked in because of that.

English

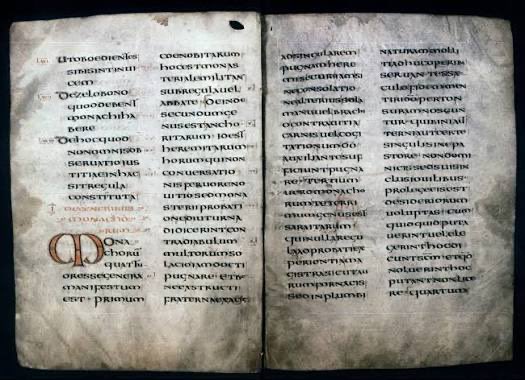

@elonmusk Thank goodness for the Rule of St. Benedict and the monks who preserved that knowledge. It continued western civilization.

English

@elonmusk Strong products are all that should matter to a brand. Tesla's products are what make the brand so successful.

English

@XFreeze It's really great. It would be great if it was also available in Grok imagine. As of now, I'm not seeing it in the app.

English

V3 is ~1Tbps per sat. Assuming the lessons from increased power density on AI sats carry over to Starlink, which some certainly will, you have to wonder if V4 or V5 is capable of ~10Tbps.

Our current estimates are 100k Tbps of bandwidth from orbit with V3. If V5 is 10Tbps then we are talking about 1M Tbps or 1 Exabit per second.

Elon Musk @elonmusk

There will also ultimately be >100k V3/V4/V5 satellites for Starlink broadband and direct to cellphone connectivity. If growth continues, Starlink will one day carry the majority of Internet traffic. At that point, it is the Internet and everything else just connects to Starlink.
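
The bandwidth totals in the post above can be checked with a quick back-of-envelope sketch. It assumes a constellation of exactly 100k satellites (the quoted post says ">100k") and that every satellite delivers its full per-sat figure at once, which is an idealization. The function name `aggregate_bps` is made up for illustration.

```python
# Back-of-envelope aggregate capacity using the post's rough figures.
# Assumption: 100,000 satellites, each delivering its full rated throughput.
TBPS = 10**12    # bits per second in one terabit per second
EXABIT = 10**18  # bits in one exabit

def aggregate_bps(satellites: int, tbps_per_sat: int) -> int:
    """Total constellation throughput in bits per second."""
    return satellites * tbps_per_sat * TBPS

v3_total = aggregate_bps(100_000, 1)   # V3 at ~1 Tbps per satellite
v5_total = aggregate_bps(100_000, 10)  # hypothetical V5 at ~10 Tbps per satellite

print(v3_total // TBPS)    # 100000 Tbps, i.e. the "100k Tbps" estimate
print(v5_total // TBPS)    # 1000000 Tbps, i.e. "1M Tbps"
print(v5_total // EXABIT)  # 1 exabit per second
```

Under these assumptions the numbers line up: 1M Tbps is 10^6 × 10^12 = 10^18 bits per second, which is 1 exabit per second.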

English

Starlink V3 is a massive capacity leap

From SpaceX’s S-1:

Starlink V2

• Launched February 2023

• 96 Gbps downlink capacity

Starlink V3

• Launch targeted for 2026

• 1,024 Gbps downlink capacity

That is roughly a 10.7× jump

This is the next phase of Starlink:

• More bandwidth

• More capacity

• More users served

• More global connectivity

V2 helped scale Starlink to 9,600+ satellites and ~10.3M subscribers.

V3 looks like the layer that pushes Starlink from “satellite internet” closer to true global broadband infrastructure
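
The "roughly 10.7×" figure in the post above follows directly from the two downlink capacities it quotes. A minimal check, using only those two numbers (the variable names are illustrative):

```python
# Per-satellite downlink capacities as quoted in the post, in Gbps.
v2_gbps = 96
v3_gbps = 1024

# Ratio of V3 to V2 downlink capacity.
ratio = v3_gbps / v2_gbps
print(f"{ratio:.1f}x")  # 10.7x
```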

English