Bonan Zhao / 赵博囡

141 posts

@BonanZhao

PI of the Computational Cognitive Science Lab at the School of Informatics, University of Edinburgh.

How do we know what machines know? How can we understand the new, emerging behavior of machines? In this conversation between @_beenkim from @GoogleDeepMind and the @buZZrobot community, we dug into the challenges of interpretability and explored how humans and machines can better understand each other. Watch the full discussion on our YouTube channel. Thank you, Been, for taking the time to talk to us!

Timestamps:
0:00 Intro
0:18 AI teaches grandmasters chess
05:30 AI neologisms
10:17 Extracting knowledge from machines
13:43 Interpretability research
17:19 Are we keeping up with AI progress
18:34 New AI-related terms
20:26 The right direction for interpretability
23:35 AI lying
27:49 Conceptual maps
30:30 AI researchers' bias
33:33 Generalizing AI teaching humans
35:11 Is AI sentient
36:04 Does AI have concepts
41:53 Progress in machine understanding

My lab at the University of Edinburgh🇬🇧 has funded PhD positions for this cycle! We study the computational principles of how people learn, reason, and communicate. It's a new lab, and you will play a big role in shaping its culture and foundations. Spread the word!

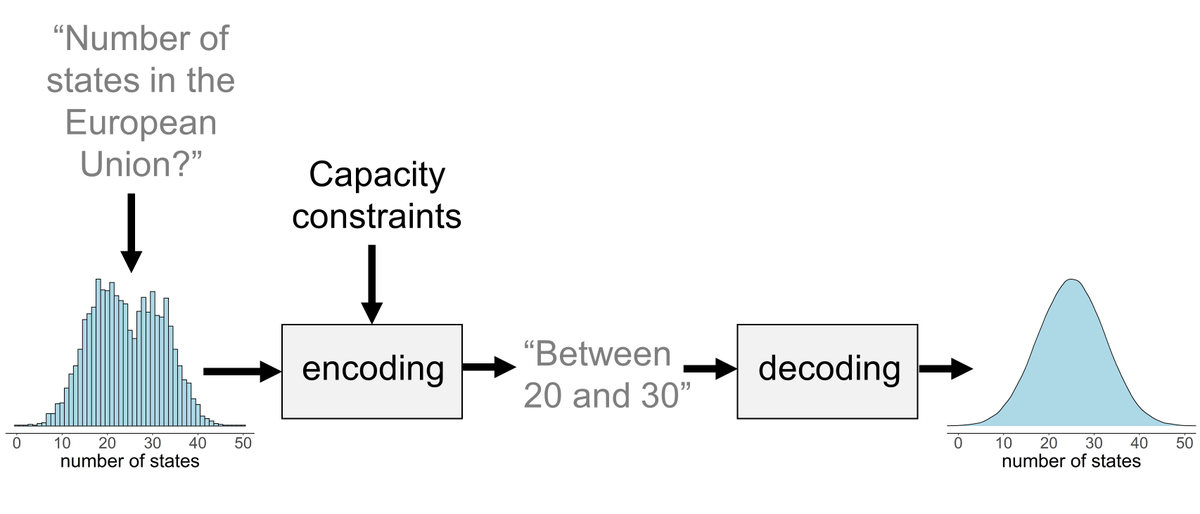

🚨New paper! We know models learn distinct in-context learning strategies, but *why*? Why generalize instead of memorize to lower loss? And why is generalization transient? Our work explains this & *predicts Transformer behavior throughout training* without its weights! 🧵 1/