
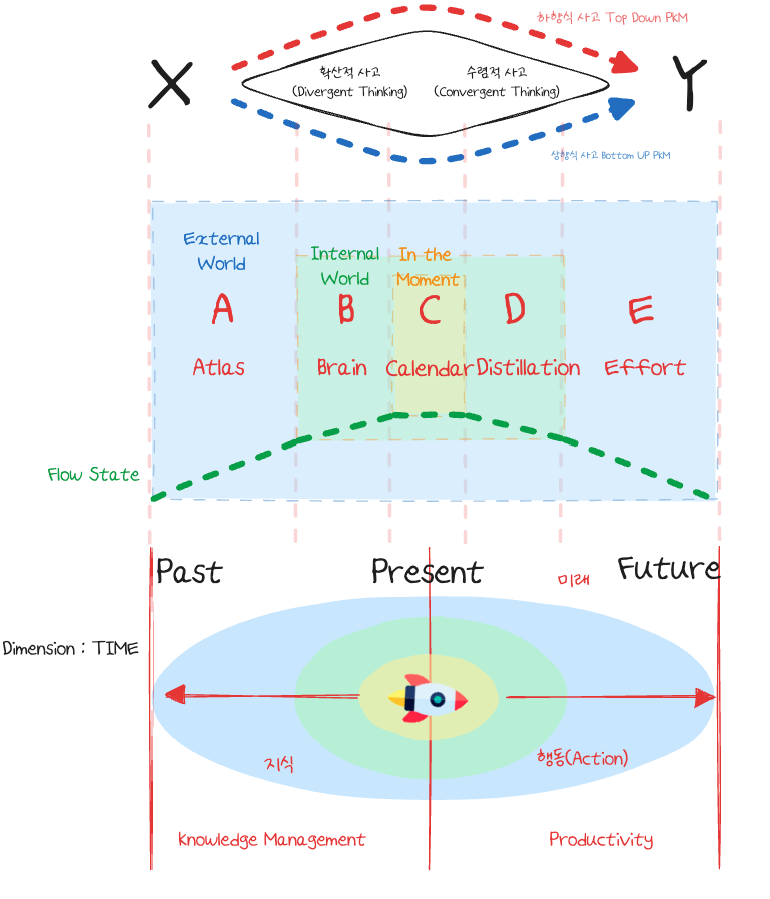

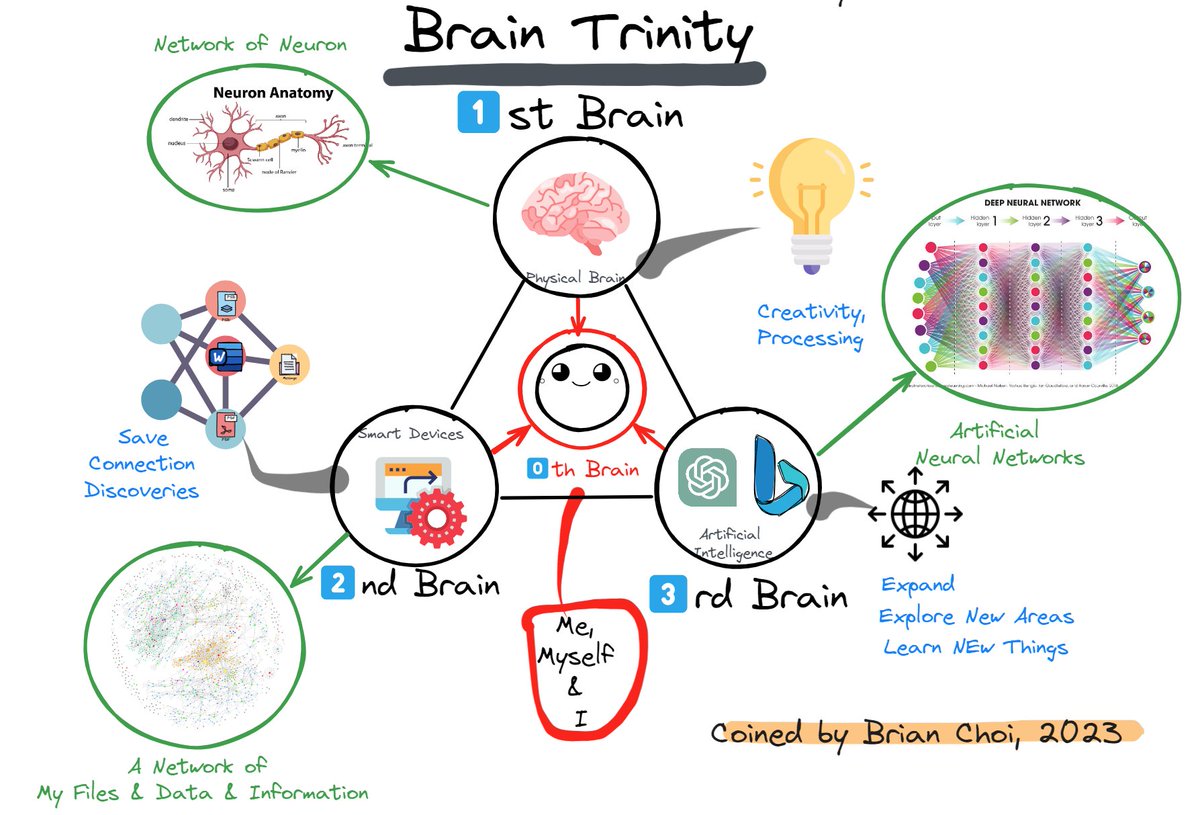

The Brain Trinity (BT) Framework offers a transformative approach to personal and professional development.

Here's what BT can do for you:

- Illuminate your path and decipher the complexities of the world, making the intricate seem accessible.

- Arm you with the tools and strategies to tackle new and challenging problems with confidence.

- Guide you in discovering your authentic self, ensuring you maintain your unique identity amidst life's turbulence.

- Equip you to maintain and sharpen your competitive advantage in the rapidly evolving landscape of artificial intelligence.
